Abstract

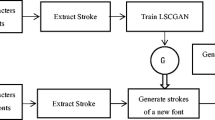

Although numerous fonts have already been designed and are easily available online, the demand for new fonts seems endless. Previous methods focused on extracting style, shape, and stroke information from a large set of fonts, or on transforming and interpolating existing fonts to create new ones. The drawback of these methods is that the generated fonts look like the fonts in the training data. When fonts are created from human handwriting documents, they incorporate uncertainty and randomness, which gives them a more authentic feel than standard fonts. Handwriting, like a fingerprint, is unique to each individual. In this paper, we propose GAN-based models that automate the entire font generation process, removing the labor involved in manually creating a new font. We extracted data from single-author handwritten documents, then developed and trained class-conditioned DCGAN models to generate fonts that mimic the author’s handwriting style.
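The abstract does not say how the class label is fed to the DCGAN. As a minimal sketch of one common conditioning scheme, the generator input can be formed by concatenating a noise vector with a one-hot encoding of the character class. The class set (26 lowercase letters), the noise dimension (100), and the helper names `one_hot` and `generator_input` are assumptions made for illustration and are not taken from the paper.

```python
import random
import string

# Assumed setup for illustration only: 26 character classes, 100-dim noise.
CLASSES = list(string.ascii_lowercase)
NOISE_DIM = 100


def one_hot(label, classes=CLASSES):
    """Return a one-hot list encoding `label` within `classes`."""
    vec = [0.0] * len(classes)
    vec[classes.index(label)] = 1.0
    return vec


def generator_input(label, noise_dim=NOISE_DIM, rng=None):
    """Concatenate Gaussian noise with the one-hot class label.

    A conditional generator would map this vector to a glyph image;
    the network itself is omitted here.
    """
    if rng is None:
        rng = random.Random()
    noise = [rng.gauss(0.0, 1.0) for _ in range(noise_dim)]
    return noise + one_hot(label)


# Same noise, different class labels: only the label part differs.
z_a = generator_input("a", rng=random.Random(0))
z_b = generator_input("b", rng=random.Random(0))
print(len(z_a))
```

Holding the noise fixed while changing the label, as in the last lines, is the usual way to check that a conditional generator changes the character while keeping the style.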

Supported by I-Hub foundation for Cobotics.



Acknowledgements

The present research is partially funded by the I-Hub foundation for Cobotics (Technology Innovation Hub of IIT-Delhi set up by the Department of Science and Technology, Govt. of India).


Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Kalingeri, R., Kushwaha, V., Kala, R., Nandi, G.C. (2023). Synthesis of Human-Inspired Intelligent Fonts Using Conditional-DCGAN. In: Tistarelli, M., Dubey, S.R., Singh, S.K., Jiang, X. (eds) Computer Vision and Machine Intelligence. Lecture Notes in Networks and Systems, vol 586. Springer, Singapore. https://doi.org/10.1007/978-981-19-7867-8_55


DOI: https://doi.org/10.1007/978-981-19-7867-8_55

Published:

Publisher Name: Springer, Singapore

Print ISBN: 978-981-19-7866-1

Online ISBN: 978-981-19-7867-8

eBook Packages: Intelligent Technologies and Robotics, Intelligent Technologies and Robotics (R0)