Abstract

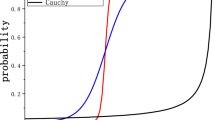

Existing data-dependent hashing methods use large backbone networks with millions of parameters and are computationally expensive. Existing knowledge distillation methods use logits and other features of the deep (teacher) model as knowledge for the compact (student) model, which requires the teacher network to be fine-tuned on the target context in parallel with the student model. Training the teacher on the target context requires additional time and computational resources. In this paper, we propose context unaware knowledge distillation, which uses the knowledge of the teacher model without fine-tuning it on the target context. We also propose a new efficient student model architecture for knowledge distillation. The proposed approach follows a two-step process. The first step pre-trains the student model with the help of context unaware knowledge distillation from the teacher model. The second step fine-tunes the student model on the context of image retrieval. To show the efficacy of the proposed approach, we compare the retrieval results, number of parameters, and number of operations of the student models with those of the teacher models under different retrieval frameworks, including deep Cauchy hashing (DCH) and central similarity quantization (CSQ). The experimental results confirm that the proposed approach provides a promising trade-off between retrieval results and efficiency. The code used in this paper is released publicly at https://github.com/satoru2001/CUKDFIR.
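The first step of the two-step process can be illustrated with a toy sketch. The abstract does not state the distillation loss, so the code below assumes a mean squared error between student and teacher features. The names `teacher_features`, `student_features`, `distillation_loss`, and `pretrain_student` are hypothetical, and the tiny linear models only stand in for the real backbone and the compact student. The teacher stays frozen throughout and no retrieval labels are used; the second step (fine-tuning the student with a hashing loss such as DCH or CSQ) is not shown.

```python
import random


def teacher_features(x):
    # Frozen "teacher": a fixed map standing in for a large pre-trained
    # backbone. It is never updated (assumption for illustration only).
    return [2.0 * x[0] - 1.0 * x[1], 0.5 * x[0] + 1.5 * x[1]]


def student_features(weights, x):
    # Toy "student": a learnable 2x2 linear map.
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def distillation_loss(weights, batch):
    # Assumed loss: mean squared error between student and teacher features.
    total = 0.0
    for x in batch:
        t = teacher_features(x)
        s = student_features(weights, x)
        total += sum((si - ti) ** 2 for si, ti in zip(s, t))
    return total / len(batch)


def pretrain_student(batch, steps=500, lr=0.1):
    # Step 1: plain gradient descent on the distillation loss.
    # Only the student's weights change; no target-context labels are needed.
    weights = [[0.0, 0.0], [0.0, 0.0]]
    for _ in range(steps):
        grads = [[0.0, 0.0], [0.0, 0.0]]
        for x in batch:
            t = teacher_features(x)
            s = student_features(weights, x)
            for i in range(2):
                err = s[i] - t[i]
                for j in range(2):
                    grads[i][j] += 2.0 * err * x[j] / len(batch)
        for i in range(2):
            for j in range(2):
                weights[i][j] -= lr * grads[i][j]
    return weights


rng = random.Random(0)
# Unlabeled inputs standing in for images.
images = [[rng.uniform(-1, 1), rng.uniform(-1, 1)] for _ in range(64)]
student = pretrain_student(images)
final_loss = distillation_loss(student, images)
print(final_loss)
```

In this toy setting the student has the same capacity as the teacher, so it can match the teacher almost exactly. A genuinely compact student would only approximate the teacher's features, which is where the trade-off between retrieval quality and efficiency arises.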

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Reddy, B.Y., Dubey, S.R., Sanodiya, R.K., Karn, R.R.P. (2023). Context Unaware Knowledge Distillation for Image Retrieval. In: Tistarelli, M., Dubey, S.R., Singh, S.K., Jiang, X. (eds) Computer Vision and Machine Intelligence. Lecture Notes in Networks and Systems, vol 586. Springer, Singapore. https://doi.org/10.1007/978-981-19-7867-8_6

DOI: https://doi.org/10.1007/978-981-19-7867-8_6

Publisher Name: Springer, Singapore

Print ISBN: 978-981-19-7866-1

Online ISBN: 978-981-19-7867-8

eBook Packages: Intelligent Technologies and Robotics, Intelligent Technologies and Robotics (R0)