Abstract

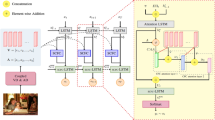

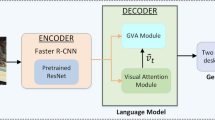

When human observers describe a picture, they tend to prioritize eye-catching objects, link them to corresponding labels, and then integrate the results with background information (i.e., nearby objects or locations) to provide context. Most caption generation schemes consider the visual information of objects while ignoring the corresponding labels, the setting, and/or the spatial relationship between the object and the setting. Such schemes fail to exploit most of the useful information that the image might otherwise provide. In the current study, we develop a model that adds object tags to supplement the insufficient information in visual object features and establishes relationships between object and background features based on relative and absolute coordinate information. We also propose an attention architecture that accounts for all of these features when generating an image description. The effectiveness of the proposed Geometrically-Aware Dual Transformer Encoding Visual and Textual Features (GDVT) is demonstrated in experimental settings with and without pre-training.
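The abstract does not give the exact formulas used for the coordinate information, so the following is only a minimal sketch of one common way such features are computed in geometry-aware attention models, not the authors' implementation. The function names `absolute_features` and `relative_features`, the `(x_min, y_min, x_max, y_max)` box layout, and the `eps` clamp are all assumptions made for illustration.

```python
import math
from typing import List, Tuple

# Assumed box layout: (x_min, y_min, x_max, y_max) in pixels.
Box = Tuple[float, float, float, float]


def absolute_features(box: Box, img_w: float, img_h: float) -> List[float]:
    """Normalized center, width, and height of a box relative to the image size."""
    x0, y0, x1, y1 = box
    return [
        (x0 + x1) / 2 / img_w,  # center x in [0, 1]
        (y0 + y1) / 2 / img_h,  # center y in [0, 1]
        (x1 - x0) / img_w,      # width as a fraction of image width
        (y1 - y0) / img_h,      # height as a fraction of image height
    ]


def relative_features(a: Box, b: Box, eps: float = 1e-3) -> List[float]:
    """Log-scaled center offset and size ratio of box b as seen from box a."""
    ax, ay = (a[0] + a[2]) / 2, (a[1] + a[3]) / 2
    aw, ah = a[2] - a[0], a[3] - a[1]
    bx, by = (b[0] + b[2]) / 2, (b[1] + b[3]) / 2
    bw, bh = b[2] - b[0], b[3] - b[1]
    return [
        # Offsets are clamped to eps (hypothetical choice) so the log stays finite.
        math.log(max(abs(bx - ax) / aw, eps)),
        math.log(max(abs(by - ay) / ah, eps)),
        math.log(bw / aw),
        math.log(bh / ah),
    ]
```

In a sketch like this, the absolute features locate each object or background region in the image, while the relative features describe a pair of regions and could, for example, be projected into a bias term on attention weights.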

Copyright information

© 2024 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Chang, YL., Ma, HS., Li, SC., Huang, JW. (2024). Geometrically-Aware Dual Transformer Encoding Visual and Textual Features for Image Captioning. In: Yang, DN., Xie, X., Tseng, V.S., Pei, J., Huang, JW., Lin, J.CW. (eds) Advances in Knowledge Discovery and Data Mining. PAKDD 2024. Lecture Notes in Computer Science, vol 14649. Springer, Singapore. https://doi.org/10.1007/978-981-97-2262-4_2

DOI: https://doi.org/10.1007/978-981-97-2262-4_2

Publisher Name: Springer, Singapore

Print ISBN: 978-981-97-2264-8

Online ISBN: 978-981-97-2262-4

eBook Packages: Computer Science, Computer Science (R0)