Abstract

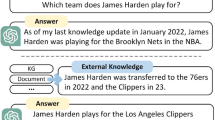

The integration of Large Language Models (LLMs) and Knowledge Graphs (KGs) has emerged as a vibrant research area in Natural Language Processing (NLP). However, existing approaches struggle to harness the complementary strengths of LLMs and KGs effectively. In this paper, we propose a novel system that addresses this gap by enabling bidirectional conversion between LLMs and KGs. We leverage external knowledge to enhance LLMs for domain-specific responses, and we fine-tune LLMs for information extraction to construct the Knowledge Graph. Moreover, users can interact with the KG to initiate new rounds of questioning in the LLM. The evaluation results highlight the effectiveness of our approach. Our system showcases the potential of combining LLMs and KGs, paving the way for advanced natural language understanding and generation in various domains.
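
The bidirectional flow described above can be pictured with a minimal Python sketch. It is an illustration under stated assumptions, not the system's implementation: the `KnowledgeGraph` class, the `build_prompt`, `parse_triples`, and `follow_up_question` helpers, the keyword-based retrieval, and the `head | relation | tail` output format are all hypothetical, and the call to an actual (fine-tuned) LLM is replaced by a hard-coded string.

```python
from dataclasses import dataclass, field

Triple = tuple[str, str, str]  # (head, relation, tail)


@dataclass
class KnowledgeGraph:
    """Hypothetical in-memory KG stored as a set of triples."""
    triples: set[Triple] = field(default_factory=set)

    def add(self, triples):
        self.triples.update(triples)

    def facts_about(self, text):
        """Return triples whose head or tail is mentioned in the text."""
        lowered = text.lower()
        return sorted(
            t for t in self.triples
            if t[0].lower() in lowered or t[2].lower() in lowered
        )


def build_prompt(question, kg):
    """KG -> LLM direction: prepend retrieved facts to the question."""
    facts = "\n".join(f"{h} {r} {t}" for h, r, t in kg.facts_about(question))
    return f"Known facts:\n{facts}\n\nQuestion: {question}"


def parse_triples(model_output):
    """LLM -> KG direction: parse 'head | relation | tail' lines.

    The line format is an assumption made for this sketch; lines that
    do not have exactly three non-empty fields are skipped.
    """
    triples = []
    for line in model_output.splitlines():
        parts = [p.strip() for p in line.split("|")]
        if len(parts) == 3 and all(parts):
            triples.append(tuple(parts))
    return triples


def follow_up_question(node):
    """User selects a KG node, which starts a new round of questioning."""
    return f"What else is known about {node}?"


# Stand-in for the output of an extraction model (assumed, not real output).
extracted = "BERT | developed by | Google\nnot a triple"

kg = KnowledgeGraph()
kg.add(parse_triples(extracted))
prompt = build_prompt("Who developed BERT?", kg)
next_question = follow_up_question("Google")
```

In this sketch, `prompt` would be sent to the LLM for a knowledge-grounded answer, and `next_question` would feed back into `build_prompt`, closing the loop between the two components.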

Acknowledgement

This work is supported by the CAAI-Huawei MindSpore Open Fund (2022037A).

Copyright information

© 2024 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Han, L., Wang, X., Li, Z., Zhang, H., Chen, Z. (2024). A Bidirectional Question-Answering System using Large Language Models and Knowledge Graphs. In: Song, X., Feng, R., Chen, Y., Li, J., Min, G. (eds) Web and Big Data. APWeb-WAIM 2023 International Workshops. APWeb-WAIM 2023. Communications in Computer and Information Science, vol 2094. Springer, Singapore. https://doi.org/10.1007/978-981-97-2991-3_1

DOI: https://doi.org/10.1007/978-981-97-2991-3_1

Publisher Name: Springer, Singapore

Print ISBN: 978-981-97-2990-6

Online ISBN: 978-981-97-2991-3

eBook Packages: Computer Science, Computer Science (R0)