Abstract

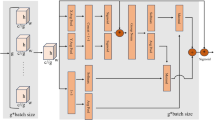

In mining operations, accidents caused by drivers' dangerous behavior occur frequently, so detecting driver behavior can provide early warning and reduce the incidence of accidents. Driver behavior detection suffers from a high false detection rate because several of its targets, such as the eyes, the mouth, and cigarettes, are small. We therefore propose a new model, YOLO-BS, built on two new structures, EVITS and ASPPMP. The EVITS module captures both local and global information from the image feature map, then shuffles and aggregates that information with weights through grouping and random shift. The aim is to let information spread and interact between groups and so capture more global context. The ASPPMP structure has two main functions. The first is to use a serialized dilated convolution pyramid to obtain information from different receptive fields and extract multi-scale feature representations. The second is to use a serialized max pooling structure to aggregate local image information. By considering the pixels, regions, and features around a target, the model captures the relationship between the target and its surroundings, which helps it locate and classify the target more accurately. Experiments on a self-built driver behavior dataset show that the mAP of YOLO-BS is 1.8% higher than that of YOLOv8s, while the two models run at similar speed. Our model has been deployed on hundreds of work vehicles at a local mining site to help warn of dangerous driving and reduce the likelihood of accidents.
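To make the idea of "information of different receptive fields" concrete, the following minimal sketch computes how the receptive field grows when dilated convolutions or max pooling layers are stacked in series. It is an illustration of the general arithmetic only, not the authors' ASPPMP implementation: the function name `receptive_field`, the dilation rates (1, 2, 3), and the 5 × 5 stride-1 pooling windows are assumptions chosen for the example and do not come from the paper.

```python
def receptive_field(layers):
    """Return the receptive field (input pixels along one axis) after each layer.

    Each layer is a (kernel_size, dilation, stride) tuple, applied in series.
    """
    rf, jump = 1, 1  # current receptive field and cumulative stride
    sizes = []
    for kernel, dilation, stride in layers:
        # A dilated kernel spans dilation * (kernel - 1) + 1 input positions.
        effective_kernel = dilation * (kernel - 1) + 1
        rf += (effective_kernel - 1) * jump
        jump *= stride
        sizes.append(rf)
    return sizes


# Hypothetical settings for illustration; the paper's actual values are not given here.
dilated_convs = [(3, 1, 1), (3, 2, 1), (3, 3, 1)]  # 3x3 convs, dilation 1, 2, 3
max_pools = [(5, 1, 1)] * 3                        # three 5x5 max pools, stride 1

print(receptive_field(dilated_convs))  # [3, 7, 13]
print(receptive_field(max_pools))      # [5, 9, 13]
```

Under these assumed settings, each stage of a serial stack sees a progressively wider context, so tapping the intermediate outputs yields features at several scales from a single chain of cheap operations.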

Acknowledgments

This work was supported in part by the Inner Mongolia Natural Science Foundation of China under Grant No. 2021MS06016; the Research Project on Strengthening the Construction of Important Ecological Security Barrier in Northern China by Higher Education Institutions in Inner Mongolia Autonomous Region under Grant No. STAQZX202321; and the Self-project of the Engineering Research Center of Ecological Big Data, Ministry of Education, China.

Copyright information

© 2024 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Xi, Y., Guo, J., Ma, M. (2024). YOLO-BS: A Better Object Detection Model for Real-Time Driver Behavior Detection. In: Huang, DS., Pan, Y., Guo, J. (eds) Advanced Intelligent Computing Technology and Applications. ICIC 2024. Lecture Notes in Computer Science, vol 14873. Springer, Singapore. https://doi.org/10.1007/978-981-97-5615-5_7

DOI: https://doi.org/10.1007/978-981-97-5615-5_7

Publisher Name: Springer, Singapore

Print ISBN: 978-981-97-5614-8

Online ISBN: 978-981-97-5615-5

eBook Packages: Computer Science, Computer Science (R0)