Abstract

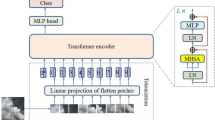

Smoke detection plays a crucial role in safe production at petrochemical enterprises and in fire prevention. Image-based machine learning and deep learning methods for this task have been widely studied. Recently, many works have applied the transformer to computer vision tasks such as classification and object detection. To our knowledge, however, few studies have used the transformer structure to detect smoke. To explore the application potential of the transformer in the smoke detection field and to improve its performance, we propose a model consisting of two transformer encoders and a convolutional neural network (CNN) module. The first transformer encoder establishes the global relationships within an image, and the CNN structure provides additional local information to the transformer. Fusing the global and local information helps the second transformer encoder make better decisions. Experimental results on a large-scale dataset for industrial smoke detection demonstrate the effectiveness of the proposed model.
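To make the data flow described in the abstract concrete, here is a minimal, dependency-free Python sketch. It is not the authors' implementation. The identity attention projections, the fixed 3×3 averaging kernel standing in for the CNN module, running the CNN branch on the input patch tokens in parallel with the first encoder, fusion by element-wise addition, and the logistic classification head are all assumptions made for illustration, and every function name is hypothetical.

```python
import math
import random
from typing import List

Vector = List[float]


def softmax(xs: Vector) -> Vector:
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens: List[Vector]) -> List[Vector]:
    """Single-head scaled dot-product attention with identity Q/K/V projections
    (a simplification: a real encoder learns these projections). Every output
    token is a weighted mix of ALL input tokens, i.e. the 'global' role."""
    d = len(tokens[0])
    scale = 1.0 / math.sqrt(d)
    out = []
    for q in tokens:
        scores = [scale * sum(qi * ki for qi, ki in zip(q, k)) for k in tokens]
        weights = softmax(scores)
        out.append([sum(w * v[j] for w, v in zip(weights, tokens)) for j in range(d)])
    return out


def encoder_block(tokens: List[Vector]) -> List[Vector]:
    """Attention plus a residual connection; LayerNorm and the MLP are omitted."""
    attn = self_attention(tokens)
    return [[t + a for t, a in zip(tv, av)] for tv, av in zip(tokens, attn)]


def local_conv(tokens: List[Vector], h: int, w: int) -> List[Vector]:
    """Fixed 3x3 depthwise averaging over the h x w token grid with zero padding.
    Each output token only sees its spatial neighbours: a stand-in for the CNN."""
    d = len(tokens[0])
    out = []
    for r in range(h):
        for c in range(w):
            acc = [0.0] * d
            for dr in (-1, 0, 1):
                for dc in (-1, 0, 1):
                    rr, cc = r + dr, c + dc
                    if 0 <= rr < h and 0 <= cc < w:
                        neighbour = tokens[rr * w + cc]
                        for j in range(d):
                            acc[j] += neighbour[j] / 9.0
            out.append(acc)
    return out


def smoke_score(tokens: List[Vector], h: int, w: int, head: Vector) -> float:
    """Hypothetical forward pass: encoder 1 (global) and CNN branch (local),
    fused by element-wise sum, then encoder 2, mean pooling and a logistic head."""
    global_feats = encoder_block(tokens)
    local_feats = local_conv(tokens, h, w)  # assumption: CNN runs on the input patches
    fused = [[g + l for g, l in zip(gv, lv)] for gv, lv in zip(global_feats, local_feats)]
    refined = encoder_block(fused)
    d = len(refined[0])
    pooled = [sum(t[j] for t in refined) / len(refined) for j in range(d)]
    logit = sum(wj * pj for wj, pj in zip(head, pooled))
    return 1.0 / (1.0 + math.exp(-logit))  # score in (0, 1), read as P(smoke)


if __name__ == "__main__":
    random.seed(0)
    h, w, d = 4, 4, 8  # a 4x4 grid of 8-dimensional patch embeddings (toy sizes)
    patches = [[random.gauss(0.0, 1.0) for _ in range(d)] for _ in range(h * w)]
    head = [random.gauss(0.0, 1.0) for _ in range(d)]  # untrained, illustrative weights
    print(f"smoke score: {smoke_score(patches, h, w, head):.3f}")
```

The sketch deliberately omits learned weights, multi-head attention, LayerNorm and MLP sublayers. What it shows is the division of labour the abstract describes: attention lets every patch token see every other token, the convolution restricts each token to its spatial neighbours, and the second encoder receives the sum of both views before the final decision.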

This work was supported in part by the National Science Foundation of China under Grant 62273011 and Grant 62076013; in part by the Beijing Natural Science Foundation under Grant JQ21014; in part by the Industry-University-Research Innovation Fund for Chinese University - Blue Point Distributed Intelligent Computing Project under Grant 2021LDA03003; in part by the Ministry of Education of China under Grant 202102535002 and Grant 202102535012; and in part by the Key Laboratory of Intelligent Control and Optimization for Industrial Equipment of Ministry of Education, Dalian University of Technology, under Grant LICO2022TB03.

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Gong, Y., Lian, X., Ma, X., Xia, Z., Zhou, C. (2023). Combining Transformer and Convolutional Neural Network for Smoke Detection. In: Zhai, G., Zhou, J., Yang, H., Yang, X., An, P., Wang, J. (eds) Digital Multimedia Communications. IFTC 2022. Communications in Computer and Information Science, vol 1766. Springer, Singapore. https://doi.org/10.1007/978-981-99-0856-1_9

DOI: https://doi.org/10.1007/978-981-99-0856-1_9

Published:

Publisher Name: Springer, Singapore

Print ISBN: 978-981-99-0855-4

Online ISBN: 978-981-99-0856-1

eBook Packages: Computer Science, Computer Science (R0)