Abstract

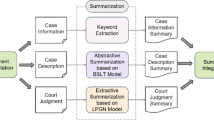

The growing number of public legal documents has increased the demand for automatic summarization. Because legal documents are well structured, extractive methods are an efficient approach to summarizing them. Generic text summarization models extract sentences based on textual semantic information and ignore the important role that topic information and articles of law play in legal text summarization. In addition, the LSTM model fails to capture global topic information and suffers from long-distance information loss when processing legal texts, which are typically long. In this paper, we propose an extractive summarization method for the legal domain based on an enhanced LSTM and aggregated legal article information. The enhanced LSTM improves on the LSTM model by fusing text topic vectors and introducing slot memory units. Topic information interacts with sentences, and the slot memory units model the long-range relationships between sentences; together they improve feature extraction from legal texts. The encoded articles of law are applied to sentence classification to improve the model's summary extraction performance. We conduct experiments on a Chinese legal text summarization dataset, and the results demonstrate that our proposed method outperforms the baseline methods.
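To make the idea of fusing a topic vector and a slot memory into an LSTM more concrete, the following is a minimal sketch in plain Python. It is not the formulation used in the paper, which the abstract does not specify. Everything here is an assumption chosen for illustration: the gate inputs are a simple concatenation of the sentence vector, the previous hidden state, the topic vector, and a memory read; the slot memory is read with dot-product attention and written with an attention-weighted blend; and the names `init_params`, `step`, and `encode` are hypothetical. The article-of-law classifier is omitted.

```python
import math
import random


def matvec(W, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, v)) for row in W]


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def init_params(input_dim, topic_dim, hidden_dim, num_slots=4, seed=0):
    """Random gate weights and initial slots (illustrative, untrained)."""
    rng = random.Random(seed)
    # Gate input = [sentence, previous hidden, topic, slot read] (assumed layout).
    fused_dim = input_dim + hidden_dim + topic_dim + hidden_dim
    params = {
        gate: [[rng.uniform(-0.1, 0.1) for _ in range(fused_dim)]
               for _ in range(hidden_dim)]
        for gate in ("i", "f", "o", "g")
    }
    # Small random slots so that attention over them is not uniform forever.
    params["slots"] = [[rng.uniform(-0.1, 0.1) for _ in range(hidden_dim)]
                       for _ in range(num_slots)]
    return params


def step(params, x, h, c, topic, slots):
    """One recurrent step with topic fusion and a slot-memory read/write."""
    # Read: attend over slots using the previous hidden state.
    attn = softmax([sum(a * b for a, b in zip(slot, h)) for slot in slots])
    read = [sum(a * slot[j] for a, slot in zip(attn, slots))
            for j in range(len(h))]
    fused = x + h + topic + read  # list concatenation
    i = [sigmoid(v) for v in matvec(params["i"], fused)]
    f = [sigmoid(v) for v in matvec(params["f"], fused)]
    o = [sigmoid(v) for v in matvec(params["o"], fused)]
    g = [math.tanh(v) for v in matvec(params["g"], fused)]
    c_new = [fj * cj + ij * gj for fj, cj, ij, gj in zip(f, c, i, g)]
    h_new = [oj * math.tanh(cj) for oj, cj in zip(o, c_new)]
    # Write: blend the new hidden state into each slot by its attention weight.
    new_slots = [[(1 - a) * s + a * hn for s, hn in zip(slot, h_new)]
                 for a, slot in zip(attn, slots)]
    return h_new, c_new, new_slots


def encode(params, sentences, topic):
    """Return one hidden state per sentence vector."""
    hidden_dim = len(params["slots"][0])
    h = [0.0] * hidden_dim
    c = [0.0] * hidden_dim
    slots = params["slots"]
    states = []
    for x in sentences:
        h, c, slots = step(params, x, h, c, topic, slots)
        states.append(h)
    return states


# Toy usage: three 3-dimensional sentence vectors and a 2-dimensional topic vector.
params = init_params(input_dim=3, topic_dim=2, hidden_dim=4)
sentences = [[0.1, 0.2, 0.3], [0.0, -0.1, 0.4], [0.5, 0.5, -0.2]]
states = encode(params, sentences, topic=[1.0, 0.0])
```

In this sketch the topic vector influences every gate at every step, which is one simple way to give each sentence access to document-level topic information, while the slots carry information across many steps independently of the cell state. A real implementation would learn the weights and would likely use a tensor library rather than nested lists.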

Notes

1. http://cail.cipsc.org.cn

2. https://thunlp.oss-cn-qingdao.aliyuncs.com/bert/ms.zip

Acknowledgements

This work is supported by the National Natural Science Foundation of China (NSFC) under Grant 61872111.

Copyright information

© 2024 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Chen, Z., Ye, L., Zhang, H. (2024). Enhancing LSTM and Fusing Articles of Law for Legal Text Summarization. In: Luo, B., Cheng, L., Wu, ZG., Li, H., Li, C. (eds) Neural Information Processing. ICONIP 2023. Communications in Computer and Information Science, vol 1968. Springer, Singapore. https://doi.org/10.1007/978-981-99-8181-6_9

DOI: https://doi.org/10.1007/978-981-99-8181-6_9

Publisher Name: Springer, Singapore

Print ISBN: 978-981-99-8180-9

Online ISBN: 978-981-99-8181-6

eBook Packages: Computer Science, Computer Science (R0)