Abstract

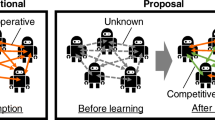

This paper presents a novel competitive collaboration-based reinforcement learning strategy to improve the performance of goal-oriented autonomous agent systems. Competitive characteristics are introduced while agents are still required to collaboratively share and update a single knowledge pool. Experimental evaluations are conducted on a reward collection task in which the goal is to collect as many reward points as possible across a set space by navigating through multiple channels while avoiding the costs associated with channel switching. Evaluations across 50 challenge levels of the task, in comparison to state-of-the-art reinforcement learning models, reveal that introducing a combination of competition and collaboration attributes facilitates statistically significant performance improvements. The compared incremental models carry forward knowledge from previous challenge levels to learn more complex challenges; however, they resort to sub-optimal solutions in which cost minimisation is given priority over higher reward collection. Traditional reinforcement learning is generally incapable of identifying a balance between reward collection and cost minimisation, leading to poor performance. In contrast, the proposed competitive collaboration-based model is capable of strategically planning the best route while taking appropriate risks, accepting higher costs for better rewards in the long run. Further, investigations with multiple reward schemes illustrate that frequent rewards that guide the agents’ strategies, as opposed to delayed rewards awarded at less frequent intervals, are imperative for agent performance. The presented evaluations lend insights into future research on optimising agent behaviours in complex real-world applications with competitive collaboration.
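The trade-off described above, between collecting higher rewards and paying channel-switching costs, can be illustrated with a small sketch. This is not the model evaluated in the paper: it is plain tabular Q-learning in which several agents with different exploration rates write to one shared table, and competition appears only as a per-agent score tally. The reward layout `REWARDS`, the value of `SWITCH_COST`, the exploration rates, and the helper names `step`, `run_episode`, and `train` are all assumptions made for illustration.

```python
import random
from collections import defaultdict

# Hypothetical layout: REWARDS[channel][position]. The far channel pays more,
# but reaching it from channel 0 requires two costly switches.
REWARDS = [
    [1, 1, 1, 1, 1, 1],
    [0, 0, 0, 0, 0, 0],
    [0, 0, 5, 5, 5, 5],
]
N_CHANNELS, LENGTH = len(REWARDS), len(REWARDS[0])
SWITCH_COST = 1.0      # assumed cost per channel switch
ACTIONS = (-1, 0, 1)   # lower channel, stay, higher channel


def step(channel, pos, action):
    """Move one position forward; changing channel costs SWITCH_COST."""
    new_channel = min(max(channel + action, 0), N_CHANNELS - 1)
    cost = SWITCH_COST if new_channel != channel else 0.0
    new_pos = pos + 1
    return new_channel, new_pos, REWARDS[new_channel][new_pos] - cost


def run_episode(q, epsilon, rng, alpha=0.5, gamma=1.0):
    """One epsilon-greedy Q-learning episode that updates the shared table q."""
    channel, pos, total = 0, 0, 0.0
    while pos < LENGTH - 1:
        if rng.random() < epsilon:
            action = rng.choice(ACTIONS)
        else:
            action = max(ACTIONS, key=lambda a: q[(channel, pos, a)])
        nxt_channel, nxt_pos, reward = step(channel, pos, action)
        if nxt_pos == LENGTH - 1:
            best_next = 0.0  # terminal position: no future value
        else:
            best_next = max(q[(nxt_channel, nxt_pos, a)] for a in ACTIONS)
        key = (channel, pos, action)
        q[key] += alpha * (reward + gamma * best_next - q[key])
        channel, pos, total = nxt_channel, nxt_pos, total + reward
    return total


def train(epsilons=(0.1, 0.3, 0.5), rounds=500, seed=0):
    """Agents take turns; all of them read and write one shared Q-table."""
    rng = random.Random(seed)
    shared_q = defaultdict(float)
    scores = [0.0] * len(epsilons)  # per-agent tally (assumed competition proxy)
    for _ in range(rounds):
        for i, eps in enumerate(epsilons):
            scores[i] += run_episode(shared_q, eps, rng)
    return shared_q, scores


shared_q, scores = train()
greedy_return = run_episode(shared_q, epsilon=0.0, rng=random.Random(1))
print("per-agent training scores:", scores)
print("greedy return after training:", greedy_return)
```

In this toy layout, staying in the starting channel avoids all switching costs but collects only small rewards, whereas paying for two switches early reaches the higher-paying channel. The sketch only makes that tension concrete; how the paper's agents actually compete and share knowledge is not specified in the abstract.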

Copyright information

© 2024 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Samarasinghe, D., Barlow, M., Lakshika, E. (2024). Competitive Collaboration for Complex Task Learning in Agent Systems. In: Liu, T., Webb, G., Yue, L., Wang, D. (eds) AI 2023: Advances in Artificial Intelligence. AI 2023. Lecture Notes in Computer Science, vol 14472. Springer, Singapore. https://doi.org/10.1007/978-981-99-8391-9_26

DOI: https://doi.org/10.1007/978-981-99-8391-9_26

Publisher Name: Springer, Singapore

Print ISBN: 978-981-99-8390-2

Online ISBN: 978-981-99-8391-9

eBook Packages: Computer Science, Computer Science (R0)