Abstract

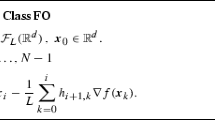

In this paper we propose a new line search algorithm that ensures global convergence of the Polak-Ribière conjugate gradient method for the unconstrained minimization of nonconvex differentiable functions. In particular, we show that with this line search every limit point produced by the Polak-Ribière iteration is a stationary point of the objective function. Moreover, we define adaptive rules for the choice of the parameters so that the first stationary point along a search direction is eventually accepted when the algorithm is converging to a minimum point with a positive definite Hessian matrix. Under strong convexity assumptions, the known global convergence results are recovered as a special case. From a computational point of view, we may expect that an algorithm incorporating the step-size acceptance rules proposed here will retain the good features of the Polak-Ribière method while avoiding pathological situations.
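
For orientation, the basic Polak-Ribière iteration that the abstract refers to can be sketched as follows. This is only an illustration under stated assumptions: it pairs the standard Polak-Ribière update with a plain Armijo backtracking search and a steepest-descent restart safeguard, and it does not implement the line search proposed in the paper, whose acceptance rules are not given in this abstract. The names `dot` and `polak_ribiere_cg`, the test function, and all tolerances are hypothetical choices made for the example.

```python
import math


def dot(u, v):
    """Euclidean inner product of two equal-length sequences."""
    return sum(a * b for a, b in zip(u, v))


def polak_ribiere_cg(f, grad, x0, tol=1e-6, max_iter=5000):
    """Illustrative Polak-Ribiere CG with Armijo backtracking.

    NOT the line search of the paper: the step-size rule here is a
    generic Armijo backtracking chosen only to make the sketch runnable.
    """
    x = list(x0)
    g = grad(x)
    d = [-gi for gi in g]  # first direction: steepest descent
    for _ in range(max_iter):
        if math.sqrt(dot(g, g)) <= tol:
            break
        slope = dot(g, d)
        if slope >= 0.0:
            # Assumed safeguard: restart when d is not a descent direction.
            d = [-gi for gi in g]
            slope = -dot(g, g)
        # Armijo backtracking: halve alpha until sufficient decrease holds.
        alpha, fx = 1.0, f(x)
        while (f([xi + alpha * di for xi, di in zip(x, d)])
               > fx + 1e-4 * alpha * slope) and alpha > 1e-16:
            alpha *= 0.5
        x_new = [xi + alpha * di for xi, di in zip(x, d)]
        g_new = grad(x_new)
        # Polak-Ribiere coefficient: g_new^T (g_new - g) / ||g||^2.
        y = [a - b for a, b in zip(g_new, g)]
        beta = dot(g_new, y) / dot(g, g)
        d = [-gn + beta * di for gn, di in zip(g_new, d)]
        x, g = x_new, g_new
    return x


# Usage on a hypothetical strictly convex quadratic with minimizer (1, -2).
def quad(x):
    return (x[0] - 1.0) ** 2 + 10.0 * (x[1] + 2.0) ** 2


def quad_grad(x):
    return [2.0 * (x[0] - 1.0), 20.0 * (x[1] + 2.0)]


x_min = polak_ribiere_cg(quad, quad_grad, [0.0, 0.0])
```

The restart safeguard and the Armijo rule are stand-ins; on nonconvex problems they do not give the convergence guarantees that the abstract claims for the proposed line search.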

Additional information

This research was supported by Agenzia Spaziale Italiana, Rome, Italy.

About this article

Cite this article

Grippo, L., Lucidi, S. A globally convergent version of the Polak-Ribière conjugate gradient method. Mathematical Programming 78, 375–391 (1997). https://doi.org/10.1007/BF02614362

DOI: https://doi.org/10.1007/BF02614362