Abstract

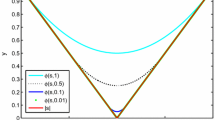

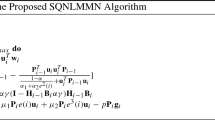

In this paper, a smoothing inertial neural network (SINN) is proposed for the \(L_{p}\)-\(L_{1}\) \((1\ge p>0)\) minimization problem, whose objective function is non-smooth, non-convex, and non-Lipschitz. First, using a smooth approximation technique, the objective function is replaced by a smooth surrogate, which turns the \(L_{p}\)-\(L_{1}\) \((1\ge p>0)\) minimization model with non-smooth terms into a smooth optimization problem. Second, the Lipschitz property of the gradient of the smoothed objective function is discussed. Then, through theoretical analysis, the existence and uniqueness of the solution are discussed under the restricted isometry property (RIP), and it is proved that the proposed SINN converges to the optimal solution of the minimization problem. Finally, the effectiveness and superiority of the proposed SINN are verified by its successful recovery performance under different pulse noise levels.
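The smooth approximation step can be illustrated with a minimal sketch. The abstract does not state the smoothing function, so the code below assumes the piecewise-quadratic smoothing of \(|s|\) that is common in the smoothing-method literature; the names `smooth_abs`, `smooth_pow`, and the parameter `mu` are hypothetical and not taken from the paper. It shows that replacing \(|s|\) by a smooth surrogate keeps \(|s|^{p}\) differentiable at \(s=0\) for \(0<p\le 1\), with an approximation error of at most `mu / 2`.

```python
import math


def smooth_abs(s: float, mu: float) -> float:
    """Assumed smoothing of |s|: exact for |s| > mu, quadratic near zero."""
    if abs(s) > mu:
        return abs(s)
    return s * s / (2.0 * mu) + mu / 2.0


def smooth_abs_grad(s: float, mu: float) -> float:
    """Derivative of smooth_abs with respect to s (continuous at |s| = mu)."""
    if abs(s) > mu:
        return math.copysign(1.0, s)
    return s / mu


def smooth_pow(s: float, p: float, mu: float) -> float:
    """Smooth surrogate for |s|**p, 0 < p <= 1 (hypothetical helper)."""
    return smooth_abs(s, mu) ** p


def smooth_pow_grad(s: float, p: float, mu: float) -> float:
    """Chain rule; finite everywhere because smooth_abs >= mu / 2 > 0."""
    return p * smooth_abs(s, mu) ** (p - 1.0) * smooth_abs_grad(s, mu)


if __name__ == "__main__":
    p = 0.5
    for mu in (1e-1, 1e-2, 1e-3):
        # Largest gap between the surrogate and |s| on a grid over [-1, 1].
        grid = [k / 1000.0 for k in range(-1000, 1001)]
        gap = max(smooth_abs(s, mu) - abs(s) for s in grid)
        print(f"mu={mu:g}  max gap={gap:.6f}  grad at 0={smooth_pow_grad(0.0, p, mu)}")
```

As `mu` shrinks, the surrogate approaches \(|s|\), while for each fixed `mu` the gradient of the surrogate for \(|s|^{p}\) stays bounded, which is the kind of property a Lipschitz-gradient analysis relies on.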

Acknowledgements

This work was supported by the Natural Science Foundation of China (Grant no. 61773320), the Fundamental Research Funds for the Central Universities (Grant no. XDJK2020TY003), and the Natural Science Foundation Project of Chongqing CSTC (Grant no. cstc2018jcyjAX0583).

Appendix A

Proof of Theorem 2

Take \(x^*\in \Omega \), where a stationary point is defined as in (19), and consider the Lyapunov function \(L(t)=\frac{1}{2}\Vert x(t)-x^*\Vert ^2\). Then, the following two equations hold:
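A direct computation from the definition of \(L(t)\), assuming only that \(x(t)\) is twice differentiable, gives its first and second time derivatives. This is a sketch of the identities such a step typically relies on, not a transcription of the paper's numbered equations:

\[
\dot{L}(t)=\langle x(t)-x^*,\ \dot{x}(t)\rangle ,\qquad
\ddot{L}(t)=\langle x(t)-x^*,\ \ddot{x}(t)\rangle +\Vert \dot{x}(t)\Vert ^2 .
\]

The second identity follows from the product rule applied to \(\langle x(t)-x^*,\dot{x}(t)\rangle \), since \(x^*\) does not depend on \(t\).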

and so we can obtain

Combining this with (15), it is not hard to get

Then, (46) becomes

Since \(A\mathbb {R}(x^*,\varepsilon (t))=0\), we have

From condition (ii), it follows that

Combining (48) and (49), we obtain

Therefore, (50) can be converted to

From (51), we obtain

From (51), we know that \(V(t)\) is a monotone nonincreasing function, so we obtain

Then, from condition (c), we obtain

Next, multiplying both sides by \(\mathrm{exp}(\lambda t)\) and integrating from 0 to \(t\), we get

where \(h_{0}=0\). Hence the trajectory of the algorithm and \(L(t)\) are bounded.
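The integrating-factor step used here can be sketched generically. The symbols \(u\) and \(g\) below are placeholders introduced for illustration and are not the paper's notation; only \(\lambda >0\) is taken from the text. Assuming a differential inequality of the form \(\dot{u}(t)+\lambda u(t)\le g(t)\), multiplying by \(\mathrm{exp}(\lambda t)\) and integrating gives:

\[
\frac{d}{dt}\bigl(e^{\lambda t}u(t)\bigr)\le e^{\lambda t}g(t)
\quad \Longrightarrow \quad
u(t)\le e^{-\lambda t}u(0)+\int _{0}^{t}e^{-\lambda (t-s)}g(s)\,ds .
\]

In particular, if \(u(0)=0\) and \(g(s)\le 0\) on \([0,t]\), then \(u(t)\le 0\), which is how a bound of this type propagates from a zero initial value.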

Then, from inequality (53), we get

That is, \(\Vert \dot{x}(t)\Vert \) is also bounded. From (49) and (53), we then obtain \(\int _{0}^{+\infty }\Vert \dot{x}(r)\Vert ^2dr=A<+\infty \) and \(\Vert \ddot{x}(t)\Vert \le B\) for \(t\in [0,+\infty )\), where \(A,\ B>0\) are constants. Therefore

Thus, the limit of \(\Vert \dot{x}(t)\Vert ^3\) exists, and hence \(\lim \limits _{t\rightarrow \infty }\Vert \dot{x}(t)\Vert \) also exists. Since \(\int _{0}^{+\infty }\Vert \dot{x}(r)\Vert ^2dr=A<+\infty \) and \(L(t)\) is bounded, we obtain \(\lim \limits _{t\rightarrow \infty }\Vert \dot{x}(t)\Vert =0\), and therefore \(\lim \limits _{t\rightarrow \infty }\dot{x}(t)=0\).
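The reasoning behind this step can be sketched as follows, under the assumption that the second condition is read as the pointwise bound \(\Vert \ddot{x}(t)\Vert \le B\); this is an illustration of the standard argument, not the paper's displayed equation. Differentiating \(\Vert \dot{x}(t)\Vert ^3\) and applying the Cauchy-Schwarz inequality gives:

\[
\frac{d}{dt}\Vert \dot{x}(t)\Vert ^3=3\Vert \dot{x}(t)\Vert \langle \dot{x}(t),\ddot{x}(t)\rangle ,
\qquad
\int _{0}^{+\infty }\Bigl|\frac{d}{dr}\Vert \dot{x}(r)\Vert ^3\Bigr|dr
\le 3\int _{0}^{+\infty }\Vert \dot{x}(r)\Vert ^2\Vert \ddot{x}(r)\Vert dr\le 3AB<+\infty .
\]

An absolutely integrable derivative means \(\Vert \dot{x}(t)\Vert ^3\) converges as \(t\rightarrow \infty \). If the limit were positive, \(\Vert \dot{x}(t)\Vert ^2\) would be bounded away from zero for large \(t\), contradicting \(\int _{0}^{+\infty }\Vert \dot{x}(r)\Vert ^2dr<+\infty \); so the limit must be zero.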

Next, we prove that \(\lim \limits _{t\rightarrow \infty }\Vert \ddot{x}(t)\Vert =0\).

Define \(h_{\upsilon }(t)=(\frac{1}{\upsilon })(\dot{x}(t+\upsilon )-\dot{x}(t))\). A simple calculation gives:

Then, by condition (a), we get

Integrating (58), we easily get \(\lim \limits _{t\rightarrow +\infty } \sup \Vert h_{\upsilon }(t)\Vert =0\). Then \(\lim \limits _{t\rightarrow \infty }\Vert \ddot{x}(t)\Vert =0\) follows from \(\Vert \ddot{x}(r)\Vert \le \sup \Vert h_{\upsilon }(t)\Vert \). Hence, we have

Therefore, we have shown that \(x^*\) is a stationary point as defined by (19).

Cite this article

Jiang, L., He, X. A Smoothing Inertial Neural Network for Sparse Signal Reconstruction with Noise Measurements via \(L_p\)-\(L_1\) minimization. Circuits Syst Signal Process 41, 6295–6313 (2022). https://doi.org/10.1007/s00034-022-02083-7

DOI: https://doi.org/10.1007/s00034-022-02083-7