Abstract

To a great extent, the immersion of a virtual environment (VE) depends on the naturalness of the interface it provides for interaction. Because people commonly use gestures when communicating, interaction based on hand postures enhances the realism of a VE. However, the choice of hand postures for interaction varies from person to person, and generalizing a specific posture to a particular interaction requires considerable computation, which in turn reduces the intuitiveness of a 3D interface. By applying machine learning in the domain of virtual reality (VR), this paper presents an open posture-based approach to 3D interaction. The technique is user-independent and depends neither on the size and color of the hand nor on the distance between the camera and the posing position. The system works in two phases: in the first, hand postures are learnt; in the second, the known postures are used to perform interactions. An image captured with an ordinary camera is partitioned into equal-size, non-overlapping tiles. Four lightweight features, based on the binary histogram and invariant moments, are calculated for each tile of a posture image. A support vector machine (SVM) classifier is trained on the posture-specific knowledge accumulated across the tiles. When the user poses a known posture, the system extracts the tile information to detect that hand posture, and upon successful recognition the corresponding interaction is activated in the designed VE. The proposed system is implemented in a case-study application, vision-based open posture interaction, using the OpenCV and OpenGL libraries. The system is assessed in three separate evaluation sessions, and the results testify to the efficacy of the approach in various VR applications.
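The tiling and per-tile feature step can be illustrated with a short sketch. The abstract does not list the exact four features, so the Python below assumes a pre-segmented hand mask of 0/1 pixels and picks, purely for illustration, the foreground ratio (the "1" bin of each tile's binary histogram) plus Hu's first three moment invariants. All function names are hypothetical, and this is not the authors' implementation.

```python
"""Sketch of tile-based posture features (hypothetical, not the authors' code).

Assumptions: the posture image is already segmented into a binary mask
(1 = hand, 0 = background), given as a list of equal-length rows; each tile
yields four features chosen here for illustration: the foreground ratio from
the tile's binary histogram and Hu's first three moment invariants.
"""
from typing import List

Mask = List[List[int]]


def split_into_tiles(mask: Mask, grid: int) -> List[Mask]:
    """Partition the mask into grid x grid equal, non-overlapping tiles.

    Trailing rows/columns that do not fill a whole tile are dropped.
    """
    th = len(mask) // grid
    tw = len(mask[0]) // grid
    tiles = []
    for r in range(grid):
        for c in range(grid):
            tiles.append([row[c * tw:(c + 1) * tw]
                          for row in mask[r * th:(r + 1) * th]])
    return tiles


def hu_invariants(tile: Mask) -> List[float]:
    """First three Hu moment invariants of a binary tile (zeros if empty)."""
    pts = [(x, y) for y, row in enumerate(tile) for x, v in enumerate(row) if v]
    m00 = len(pts)  # zeroth moment = foreground area
    if m00 == 0:
        return [0.0, 0.0, 0.0]
    xc = sum(x for x, _ in pts) / m00
    yc = sum(y for _, y in pts) / m00

    def eta(p: int, q: int) -> float:
        # Central moment mu_pq, normalised for scale: mu_pq / m00^(1 + (p+q)/2).
        mu = sum((x - xc) ** p * (y - yc) ** q for x, y in pts)
        return mu / m00 ** (1 + (p + q) / 2)

    n20, n02, n11 = eta(2, 0), eta(0, 2), eta(1, 1)
    n30, n03, n21, n12 = eta(3, 0), eta(0, 3), eta(2, 1), eta(1, 2)
    phi1 = n20 + n02
    phi2 = (n20 - n02) ** 2 + 4 * n11 ** 2
    phi3 = (n30 - 3 * n12) ** 2 + (3 * n21 - n03) ** 2
    return [phi1, phi2, phi3]


def tile_features(tile: Mask) -> List[float]:
    """Four features per tile: foreground ratio + three Hu invariants."""
    total = sum(len(row) for row in tile)
    ones = sum(1 for row in tile for v in row if v)  # binary histogram, bin "1"
    return [ones / total] + hu_invariants(tile)


def posture_vector(mask: Mask, grid: int = 4) -> List[float]:
    """Concatenate per-tile features into one vector for a classifier."""
    vec: List[float] = []
    for tile in split_into_tiles(mask, grid):
        vec.extend(tile_features(tile))
    return vec


if __name__ == "__main__":
    # Toy 8x8 mask with a filled 4x4 square in the top-left quadrant.
    demo = [[1 if r < 4 and c < 4 else 0 for c in range(8)] for r in range(8)]
    print(len(posture_vector(demo, grid=2)))  # 16 = 4 tiles x 4 features
```

In a full pipeline, the mask would come from camera frames processed with OpenCV, whose `cv2.moments` and `cv2.HuMoments` compute the same invariants. The vectors collected during the learning phase would then be used to fit an SVM, for example scikit-learn's `sklearn.svm.SVC`, and at run time the predicted label would select the interaction. The normalized central moments make the Hu invariants insensitive to translation and, approximately for small discrete tiles, to scale.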

References

Ackad, C., Kay, J., Tomitsch, M.: Towards learnable gestures for exploring hierarchical information spaces at a large public display. In: CHI’14 Workshop on Gesture-based Interaction Design, vol. 49, p. 57 (2014). https://doi.org/10.1002/rwm3.20180

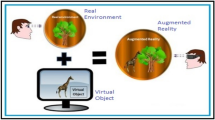

Akman, O., Poelman, R., Caarls, W., Jonker, P.: Multi-cue hand detection and tracking for a head-mounted augmented reality system. Mach. Vis. Appl. 24(5), 931–946 (2013). https://doi.org/10.1007/s00138-013-0500-6

Alam, M.M., Islam, M.T., Rahman, S.: A unified learning approach for hand gesture recognition and fingertip detection. arXiv preprint arXiv:2101.02047 (2021)

Alqahtani, A.S., Daghestani, L.F., Ibrahim, L.F.: Environments and system types of virtual reality technology in stem: a survey. Int. J. Adv. Comput. Sci. Appl. (IJACSA) (2017). https://doi.org/10.14569/IJACSA.2017.080610

Althoff, F., Lindl, R., Walchshausl, L., Hoch, S.: Robust multimodal hand-and head gesture recognition for controlling automotive infotainment systems. VDI Berichte 1919, 187 (2005)

Arif, A.S., Stuerzlinger, W., de Mendonca Filho, E.J., Gordynski, A.: How do users interact with an error-prone in-air gesture recognizer? (2008). https://doi.org/10.1145/2559206.2581188

Baudel, T., Beaudouin-Lafon, M.: Charade: remote control of objects using free-hand gestures. Commun. ACM 36(7), 28–35 (1993). https://doi.org/10.1145/159544.159562

Belgioioso, G., Cenedese, A., Cirillo, G.I., Fraccaroli, F., Susto, G.A.: A machine learning based approach for gesture recognition from inertial measurements. In: 53rd IEEE Conference on Decision and Control, pp. 4899–4904. IEEE (2014). https://doi.org/10.1109/CDC.2014.7040154

Beurden van, M.H., Ijsselsteijn, W.A., de Kort, Y.A.: User experience of gesture based interfaces: a comparison with traditional interaction methods on pragmatic and hedonic qualities. In: International Gesture Workshop, pp. 36–47. Springer (2011). https://doi.org/10.1007/978-3-642-34182-3_4

Caceres, C.A.: Machine learning techniques for gesture recognition. Ph.D. thesis, Virginia Tech (2014). https://doi.org/10.1109/CDC.2014.7040154

Caicedo, J.C., Cruz, A., Gonzalez, F.A.: Histopathology image classification using bag of features and kernel functions. In: Conference on Artificial Intelligence in Medicine in Europe, pp. 126–135. Springer (2009). https://doi.org/10.1007/978-3-642-02976-9_17

Camplani, M., Salgado, L.: Efficient spatio-temporal hole filling strategy for kinect depth maps. In: Three-Dimensional Image Processing (3DIP) and Applications II, vol. 8290, p. 82900E. International Society for Optics and Photonics (2012). https://doi.org/10.1117/12.911909

Clapés, A., Pardo, À., Vila, O.P., Escalera, S.: Action detection fusing multiple kinects and a wimu: an application to in-home assistive technology for the elderly. Mach. Vis. Appl. 29(5), 765–788 (2018). https://doi.org/10.1007/s00138-018-0931-1

Clark, A., Moodley, D.: A system for a hand gesture-manipulated virtual reality environment. In: Proceedings of the Annual Conference of the South African Institute of Computer Scientists and Information Technologists, pp. 1–10 (2016). https://doi.org/10.12968/sece.2016.25.10a

Clark, G.D., Lindqvist, J.: Engineering gesture-based authentication systems. IEEE Pervasive Comput. 14(1), 18–25 (2015). http://dx.doi.org/10.1145/2858036.2858270

Dardas, N.H., Alhaj, M.: Hand gesture interaction with a 3d virtual environment. Res. Bull. Jordan ACM 2(3), 86–94 (2011). https://doi.org/10.3917/afcul.086.0094

Desai, P.R., Desai, P.N., Ajmera, K.D., Mehta, K.: A review paper on oculus rift-a virtual reality headset. arXiv preprint arXiv:1408.1173 (2014). https://doi.org/10.14445/22315381/IJETT-V13P237

Elons, A., Ahmed, M., Shedid, H., Tolba, M.: Arabic sign language recognition using leap motion sensor. In: 9th International Conference on Computer Engineering & Systems (ICCES), pp. 368–373. IEEE (2014). https://doi.org/10.1109/ISIE.2014.6864742

Fiorentino, M., Uva, A.E., Monno, G.: Vr interaction for cad basic tasks using rumble feedback input: experimental study. In: Product Engineering, pp. 337–352. Springer (2008). https://doi.org/10.1007/978-1-4020-8200-9_16

Francese, R., Passero, I., Tortora, G.: Wiimote and kinect: gestural user interfaces add a natural third dimension to HCI. In: Proceedings of the International Working Conference on Advanced Visual Interfaces, pp. 116–123. ACM (2012). https://doi.org/10.1145/2254556.2254580

Froehlich, B., Hochstrate, J., Skuk, V., Huckauf, A.: The globefish and the globemouse: two new six degree of freedom input devices for graphics applications. In: Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, pp. 191–199. ACM (2006). https://doi.org/10.1145/1124772.1124802

Georgi, M., Amma, C., Schultz, T.: Recognizing hand and finger gestures with IMU based motion and EMG based muscle activity sensing. In: Biosignals, pp. 99–108 (2015). https://doi.org/10.5220/0005276900990108

Gribnau, M.W., Hennessey, J.M.: Comparing single-and two-handed 3d input for a 3d object assembly task. In: Chi 98 Conference Summary on Human Factors in Computing Systems, pp. 233–234 (1998). https://doi.org/10.1145/286498.286720

Grossman, T., Fitzmaurice, G., Attar, R.: A survey of software learnability: metrics, methodologies and guidelines. In: Proceedings of the Sigchi Conference on Human Factors in Computing Systems, pp. 649–658 (2009). https://doi.org/10.1145/1518701.1518803

Gupta, P., da Vitoria Lobo, N., Laviola, J.J.: Markerless tracking and gesture recognition using polar correlation of camera optical flow. Mach. Vis. Appl. 24(3), 651–666 (2013). https://doi.org/10.1007/s00138-012-0451-3

Hafner, J., Sawhney, H.S., Equitz, W., Flickner, M., Niblack, W.: Efficient color histogram indexing for quadratic form distance functions. IEEE Trans. Pattern Anal. Mach. Intell. 17(7), 729–736 (1995). https://doi.org/10.1109/34.391417

Hsu, S.C., Huang, J.Y., Kao, W.C., Huang, C.L.: Human body motion parameters capturing using kinect. Mach. Vis. Appl. 26(7–8), 919–932 (2015). https://doi.org/10.1007/s00138-015-0710-1

Hua, J., Qin, H.: Haptic sculpting of volumetric implicit functions. In: Proceedings Ninth Pacific Conference on Computer Graphics and Applications. Pacific Graphics 2001, pp. 254–264. IEEE (2001). https://doi.org/10.1109/PCCGA.2001.962881

Huang, C.L., Huang, W.Y.: Sign language recognition using model-based tracking and a 3d hopfield neural network. Mach. Vis. Appl. 10(5-6), 292–307 (1998). https://doi.org/10.1007/s001380050080

Itkarkar, R.R., Nandy, A.K.: A study of vision based hand gesture recognition for human machine interaction. Int. J. Innov. Res. Adv. Eng. (IJIRAE) 1(12) (2014)

Jacob, R.J., Girouard, A., Hirshfield, L.M., Horn, M.S., Shaer, O., Solovey, E.T., Zigelbaum, J.: Reality-based interaction: a framework for post-wimp interfaces. In: Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, pp. 201–210 (2008). https://doi.org/10.1145/1357054.1357089

Jáuregui, D.A.G., Horain, P.: Real-time 3d motion capture by monocular vision and virtual rendering. Mach. Vis. Appl. 28(8), 839–858 (2017). https://doi.org/10.1007/s00138-017-0861-3

Jörg, S., Hodgins, J., Safonova, A.: Data-driven finger motion synthesis for gesturing characters. ACM Trans. Graph. (TOG) 31(6), 1–7 (2012). https://doi.org/10.1145/2366145.2366214

Kang, T., Chae, M., Seo, E., Kim, M., Kim, J.: DeepHandsVR: hand interface using deep learning in immersive virtual reality. Electronics 9(11), 1863 (2020). https://doi.org/10.3390/electronics9111863

Kaushik, D.M., Jain, R.: Gesture based interaction NUI: an overview. arXiv preprint arXiv:1404.2364 (2014). https://doi.org/10.14445/22315381/IJETT-V9P319

Kerber, F., Puhl, M., Krüger, A.: User-independent real-time hand gesture recognition based on surface electromyography. In: Proceedings of the 19th International Conference on Human–Computer Interaction with Mobile Devices and Services, pp. 1–7 (2017). http://dx.doi.org/10.1007/s10462-012-9356-9

Khattak, S., Cowan, B., Chepurna, I., Hogue, A.: A real-time reconstructed 3d environment augmented with virtual objects rendered with correct occlusion. In: IEEE Games Media Entertainment, pp. 1–8. IEEE (2014). https://doi.org/0.1109/GEM.2014.7048102

Khundam, C.: First person movement control with palm normal and hand gesture interaction in virtual reality. In: 12th International Joint Conference on Computer Science and Software Engineering (JCSSE), pp. 325–330. IEEE (2015). https://doi.org/10.1109/JCSSE.2015.7219818

Kim, D., Hilliges, O., Izadi, S., Butler, A.D., Chen, J., Oikonomidis, I., Olivier, P.: Digits: freehand 3d interactions anywhere using a wrist-worn gloveless sensor. In: Proceedings of the 25th Annual ACM Symposium on User Interface Software and Technology, pp. 167–176 (2012). https://doi.org/10.1145/2380116.2380139

Kim, J.O., Kim, M., Yoo, K.H.: Real-time hand gesture-based interaction with objects in 3d virtual environments. Int. J. Multimed. Ubiquitous Eng. 8(6), 339–348 (2013)

Lajevardi, S.M., Hussain, Z.M.: Feature extraction for facial expression recognition based on hybrid face regions. Adv. Electr. Comput. Eng. 9(3), 63–67 (2009)

LaViola, J.: MSVT: a virtual reality-based multimodal scientific visualization tool. In: Proceedings of the Third IASTED International Conference on Computer Graphics and Imaging, pp. 1–7 (2000). https://doi.org/10.1.1.33.7853

Lee, C.S., Oh, K.M., Park, C.J.: Virtual environment interaction based on gesture recognition and hand cursor. Electron, Resour (2008)

Li, Y., Chen, X., Tian, J., Zhang, X., Wang, K., Yang, J.: Automatic recognition of sign language subwords based on portable accelerometer and emg sensors. In: International Conference on Multimodal Interfaces and the Workshop on Machine Learning for Multimodal Interaction, pp. 1–7 (2010). https://doi.org/10.1145/1891903.1891926

Liu, Y., Shen, Y., Chan, H., Lu, Y., Wang, D.: Touching the future: the effects of gesture-based interaction on virtual product experience (2015)

Lumsden, J., Brewster, S.: A paradigm shift: alternative interaction techniques for use with mobile and wearable devices. In: Proceedings of the 2003 Conference of the Centre for Advanced Studies on Collaborative Research, pp. 197–210. IBM Press (2003). https://doi.org/10.1145/961322.961355

Lupinetti, K., Ranieri, A., Giannini, F., Monti, M.: 3d dynamic hand gestures recognition using the leap motion sensor and convolutional neural networks. In: International Conference on Augmented Reality, Virtual Reality and Computer Graphics, pp. 420–439. Springer (2020). https://doi.org/10.1007/978-3-030-58465-8_31

Ma, R., Zhang, Z., Chen, E.: Human motion gesture recognition based on computer vision. Complexity 2021,(2021). https://doi.org/10.1155/2021/6679746

Mahzoon, M., Maher, M.L., Grace, K., Locurto, L., Outcault, B.: The willful marionette: modeling social cognition using gesture–gesture interaction dialogue. In: International Conference on Augmented Cognition, pp. 402–413. Springer (2016). https://doi.org/10.1007/978-3-319-39952-2_39

Mikolajczyk, K., Leibe, B., Schiele, B.: Local features for object class recognition. In: Tenth IEEE International Conference on Computer Vision (ICCV’05) Volume 1, vol. 2, pp. 1792–1799. IEEE (2005). https://doi.org/10.1109/ICCV.2005.146

Molina, J., Pajuelo, J.A., Escudero-Viñolo, M., Bescós, J., Martínez, J.M.: A natural and synthetic corpus for benchmarking of hand gesture recognition systems. Mach. Vis. Appl. 25(4), 943–954 (2014). https://doi.org/10.1007/s00138-013-0576-z

Nacenta, M.A., Kamber, Y., Qiang, Y., Kristensson, P.O.: Memorability of pre-designed and user-defined gesture sets. In: Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, pp. 1099–1108 (2013). https://doi.org/10.1145/2470654.2466142

Oprisescu, S., Barth, E.: 3d hand gesture recognition using the hough transform. Adv. Electr. Comput. Eng. 13(3), 71–77 (2013). https://doi.org/10.1016/j.coastaleng.2013.02.005

Oron, S., Bar-Hillel, A., Avidan, S.: Real-time tracking-with-detection for coping with viewpoint change. Mach. Vis. Appl. 26(4), 507–518 (2015). https://doi.org/10.1007/s00138-015-0676-z

Pal, M., Mather, P.M.: Assessment of the effectiveness of support vector machines for hyperspectral data. Future Gen. Comput. Syst. 20(7), 1215–1225 (2004). https://doi.org/10.1016/j.future.2003.11.011

Park, S., Trivedi, M.M.: Multi-person interaction and activity analysis: a synergistic track-and body-level analysis framework. Mach. Vis. Appl. 18(3–4), 151–166 (2007). https://doi.org/10.1007/s00138-006-0055-x

Pavlovic, V.I., Sharma, R., Huang, T.S.: Visual interpretation of hand gestures for human-computer interaction: a review. IEEE Trans. Pattern Anal. Mach. Intell. 19(7), 677–695 (1997). https://doi.org/10.1109/34.598226

Phung, S.L., Bouzerdoum, A., Chai, D.: A novel skin color model in ycbcr color space and its application to human face detection. In: Proceedings of International Conference on Image Processing, vol. 1, pp. I–I. IEEE (2002). https://doi.org/10.1109/ICIP.2002.1038016

Rabbi, I., Ullah, S.: Extending the tracking distance of fiducial markers for large indoor augmented reality applications. Adv. Electr. Comput. Eng. 15(2), 59–65 (2015). https://doi.org/10.1007/s35127-015-0706-1

Raees, M., Ullah, S., Rahman, S.U., Rabbi, I.: Image based recognition of pakistan sign language. J. Eng. Res. 1(4), 1–21 (2016). https://doi.org/10.7603/s40632-016-0002-6

Rautaray, S.S., Agrawal, A.: Real time hand gesture recognition system for dynamic applications. Int. J. UbiComp 3(1), 21 (2012). https://doi.org/10.5121/iju.2012.3103

Rocha, L., Velho, L., Carvalho, P.C.P.: Image moments-based structuring and tracking of objects. In: Proceedings of XV Brazilian Symposium on Computer Graphics and Image Processing, pp. 99–105. IEEE (2002). https://doi.org/10.1109/SIBGRA.2002.1167130

Saffer, D.: Designing Gestural Interfaces: Touchscreens and Interactive Devices. O’Reilly Media Inc, Newton (2008)

Sahane, M., Salve, H., Dhawade, N., Bajpai, S.: Visual interpretation of hand gestures for human computer interaction. Environments (VEs) 2, 53 (2014). https://doi.org/10.1109/34.598226

Schröder, M., Elbrechter, C., Maycock, J., Haschke, R., Botsch, M., Ritter, H.: Real-time hand tracking with a color glove for the actuation of anthropomorphic robot hands. In: 2012 12th IEEE-RAS International Conference on Humanoid Robots (Humanoids 2012), pp. 262–269. IEEE (2012). https://doi.org/10.1109/HUMANOIDS.2012.6651530

Sharma, R.P., Verma, G.K.: Human computer interaction using hand gesture. Procedia Comput. Sci. 54, 721–727 (2015). https://doi.org/10.1016/j.procs.2015.06.085

Smus, B., Riederer, C.: Magnetic input for mobile virtual reality. In: Proceedings of the 2015 ACM International Symposium on Wearable Computers, pp. 43–44. ACM (2015). http://dx.doi.org/10.1145/2802083.2808395

Starner, T., Leibe, B., Minnen, D., Westyn, T., Hurst, A., Weeks, J.: The perceptive workbench: computer-vision-based gesture tracking, object tracking, and 3d reconstruction for augmented desks. Mach. Vis. Appl. 14(1), 59–71 (2003). https://doi.org/10.1016/S1246-7391(03)00007-1

Tapu, R., Mocanu, B., Tapu, E.: A survey on wearable devices used to assist the visual impaired user navigation in outdoor environments. In: 11th International Symposium on Electronics and Telecommunications (ISETC), pp. 1–4. IEEE (2014). https://doi.org/10.1109/ISETC.2014.7010793

Tootaghaj, D.Z., Sampson, A., Mytkowicz, T., McKinley, K.S.: High five: improving gesture recognition by embracing uncertainty. arXiv preprint arXiv:1710.09441 (2017)

Torres-Huitzil, C.: A review of image interest point detectors: from algorithms to fpga hardware implementations. In: Image Feature Detectors and Descriptors, pp. 47–74. Springer (2016). https://doi.org/10.1007/978-3-319-28854-3_3

Tran, T.T., Pham, V.T., Shyu, K.K.: Moment-based alignment for shape prior with variational b-spline level set. Mach. Vis. Appl. 24(5), 1075–1091 (2013). https://doi.org/10.1007/s00138-013-0504-2

Vanacken, D., Beznosyk, A., Coninx, K.: Help systems for gestural interfaces and their effect on collaboration and communication. In: Workshop on Gesture-Based Interaction Design: Communication and Cognition. Citeseer (2014)

Wachs, J.P., Kölsch, M., Stern, H., Edan, Y.: Vision-based hand-gesture applications. Commun. ACM 54(2), 60–71 (2011). https://doi.org/10.1145/1897816.1897838

Walter, R., Bailly, G., Müller, J.: Strikeapose: revealing mid-air gestures on public displays. In: Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, pp. 841–850 (2013). https://doi.org/10.1145/2470654.2470774

Wang, C., Cannon, D.: A virtual end-effector pointing system in point-and-direct robotics for inspection of surface flaws using a neural network based skeleton transform. In: Proceedings IEEE International Conference on Robotics and Automation, pp. 784–789. IEEE (1993). https://doi.org/10.1109/ROBOT.1993.292240

Weissmann, J., Salomon, R.: Gesture recognition for virtual reality applications using data gloves and neural networks. In: Proceedings of International Joint Conference on Neural Networks, IJCNN’99 (Cat. No. 99CH36339), vol. 3, pp. 2043–2046. IEEE (1999). http://dx.doi.org/10.1109/IJCNN.1999.832699

Wilson, V.: Applicability: What is it? how do you find it? Evid. Based Libr. Inf. Pract. 5(2), 111–113 (2010)

Wolf, M.T., Assad, C., Stoica, A., You, K., Jethani, H., Vernacchia, M.T., Fromm, J., Iwashita, Y.: Decoding static and dynamic arm and hand gestures from the jpl biosleeve. In: IEEE Aerospace Conference, pp. 1–9. IEEE (2013). https://doi.org/10.1109/AERO.2013.6497171

Wu, M.Y., Ting, P.W., Tang, Y.H., Chou, E.T., Fu, L.C.: Hand pose estimation in object-interaction based on deep learning for virtual reality applications. J. Vis. Commun. Image Represent. 70, 102802 (2020). https://doi.org/10.1016/j.jvcir.2020.102802

Xiao, M., Feng, Z., Yang, X., Xu, T., Guo, Q.: Multimodal interaction design and application in augmented reality for chemical experiment. Virtual Real. Intell. Hardw. 2(4), 291–304 (2020). https://doi.org/10.1016/j.vrih.2020.07.005

Ye, G., Corso, J.J., Burschka, D., Hager, G.D.: VICS: a modular HCI framework using spatiotemporal dynamics. Mach. Vis. Appl. 16(1), 13–20 (2004). https://doi.org/10.1007/s00138-004-0159-0

Zhang, D., Adipat, B.: Challenges, methodologies, and issues in the usability testing of mobile applications. Int. J. Hum. Comput. Interact. 18(3), 293–308 (2005). https://doi.org/10.1207/s15327590ijhc1803_3

Zhang, M., Zhang, Z., Chang, Y., Aziz, E.S., Esche, S., Chassapis, C.: Recent developments in game-based virtual reality educational laboratories using the microsoft kinect. Int. J. Emerg. Technol. Learn. (iJET) 13(1), 138–159 (2018). https://doi.org/10.3991/ijet.v13i08.9048

Zhao, J., Allison, R.S.: Comparing head gesture, hand gesture and gamepad interfaces for answering yes/no questions in virtual environments. Virtual Real. 24(3), 515–524 (2020). https://doi.org/10.1007/s10055-019-00416-7

Zielasko, D., Horn, S., Freitag, S., Weyers, B., Kuhlen, T.W.: Evaluation of hands-free HMD-based navigation techniques for immersive data analysis. In: IEEE Symposium on 3D User Interfaces (3DUI), pp. 113–119. IEEE (2016). https://doi.org/10.1109/VR.2016.7504781

About this article

Cite this article

Raees, M., Ullah, S. LPI: learn postures for interactions. Machine Vision and Applications 32, 113 (2021). https://doi.org/10.1007/s00138-021-01235-0

DOI: https://doi.org/10.1007/s00138-021-01235-0