Abstract

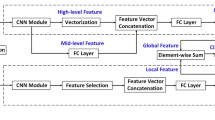

Facial expression recognition (FER) has attracted considerable attention because of its broad range of applications. Occlusions and head-pose variations are two major obstacles for automatic FER. In this paper, we propose a convolution-transformer dual branch network (CT-DBN) that exploits both local and global facial information to make FER robust to real-world occlusions and head-pose variations. The CT-DBN contains two branches. Given the local modeling ability of CNNs, the first branch uses a CNN to capture local edge information. Inspired by the success of transformers in natural language processing, the second branch employs a transformer to obtain a better global representation. A local–global feature fusion module is then proposed to adaptively integrate the two sets of features into hybrid features and to model the relationship between them. With the help of this fusion module, our network not only integrates local and global features in an adaptively weighted manner but also learns the corresponding distinguishable features autonomously. Experimental results from inner-database and cross-database evaluations on four leading facial expression databases show that the proposed CT-DBN outperforms other state-of-the-art methods and achieves robust performance under in-the-wild conditions.
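The abstract does not specify how the fusion module computes its weights, so the following is only a minimal sketch of one common form of adaptive weighting: a sigmoid gate that blends a local (CNN-branch) feature vector with a global (transformer-branch) feature vector. The function name `fuse_local_global`, the gate parameters, and the single scalar gate are all assumptions made for illustration, not the CT-DBN module itself.

```python
import math


def sigmoid(x):
    """Logistic function mapping a real score to the interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def fuse_local_global(local_feat, global_feat, gate_weights, gate_bias=0.0):
    """Blend two equal-length feature vectors with a data-dependent gate.

    Hypothetical illustration: the gate score is a linear function of the
    concatenated features, and the fused vector is
    alpha * local + (1 - alpha) * global.
    """
    if len(local_feat) != len(global_feat):
        raise ValueError("local and global features must have the same length")
    concat = list(local_feat) + list(global_feat)
    if len(gate_weights) != len(concat):
        raise ValueError("gate_weights must match the concatenated length")

    # Scalar gate computed from both branches (assumed form).
    score = sum(w * x for w, x in zip(gate_weights, concat)) + gate_bias
    alpha = sigmoid(score)

    # Convex combination of the two branches.
    fused = [alpha * l + (1.0 - alpha) * g
             for l, g in zip(local_feat, global_feat)]
    return fused, alpha


if __name__ == "__main__":
    local_feat = [1.0, 3.0]    # stand-in for CNN-branch features
    global_feat = [3.0, 1.0]   # stand-in for transformer-branch features
    fused, alpha = fuse_local_global(local_feat, global_feat, [0.0] * 4)
    print(alpha, fused)
```

In a trained network the gate parameters would be learned together with both branches, so the balance between local and global information could differ from one input to the next, for example when part of the face is occluded.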

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Additional information

This work was partially supported by the National Key R&D Program of China (Grant No. 2017YFB1303200), the Jiangsu Special Project for Frontier Leading Base Technology (Grant No. BK20192004), the Key Support Project of Dean Fund of Hefei Institutes of Physical Science, CAS (Grant No. YZJJZX202017), and the Strategic High-tech Innovation Fund of Chinese Academy of Sciences (Grant No. GQRC-19-15).

Cite this article

Liang, X., Xu, L., Zhang, W. et al. A convolution-transformer dual branch network for head-pose and occlusion facial expression recognition. Vis Comput 39, 2277–2290 (2023). https://doi.org/10.1007/s00371-022-02413-5

DOI: https://doi.org/10.1007/s00371-022-02413-5