Abstract

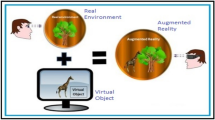

In this paper, we present a real-time, on-line augmented/diminished reality system that runs entirely on a moving hand-held mobile device. Specifically, we introduce an improved inpainting algorithm designed for on-line use (i.e., live streaming video) with a moving viewpoint. Unlike previous approaches, the proposed algorithm produces reasonably high-quality inpainted imagery by using the 3D camera tracking and scene information from SLAM to select the proper source frame from which to extract the content that replaces the masked region, and to robustly apply a homography that fills the region in from that source. The algorithm is evaluated against state-of-the-art video inpainting methods in terms of execution time and of objective and subjective inpainted image quality. Although the algorithm is applicable to any dynamic object, the current implementation is limited to removing and filling in human figures. The evaluation showed that the quality of the inpainted images was on par with that of off-line state-of-the-art systems, while the algorithm ran at an interactive rate on a mobile device. A user study was also conducted to assess user perception and experience in an outdoor interactive AR application that uses the proposed algorithm to remove interfering pedestrians. Compared with the other nominal test conditions, the algorithm significantly reduced the level of distraction and improved the AR user experience by lowering visual inconsistency and artifacts.
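The abstract names two ingredients: choosing a source frame with the help of SLAM camera tracking, and filling the masked region from that frame through a homography. The following is a minimal, standard-library-only Python sketch of both ideas under stated assumptions; it is not the paper's implementation. The `KeyFrame` record, its `hole_overlap` field, the cost function, and the thresholds `max_angle_deg` and `max_overlap` are hypothetical illustrations. The homography part is the textbook four-point linear solve with the bottom-right entry fixed to 1; a robust estimator such as RANSAC or MAGSAC++ would run a minimal solver like this on repeatedly sampled feature matches and keep the model with the most inliers, and in practice one would typically call OpenCV's `cv2.findHomography` instead.

```python
import math
from dataclasses import dataclass


# Hypothetical per-frame record built from SLAM output; field names are illustrative.
@dataclass
class KeyFrame:
    frame_id: int
    position: tuple      # camera centre (x, y, z) in world coordinates
    forward: tuple       # viewing direction (normalized inside angle_deg)
    hole_overlap: float  # assumed: fraction of the current hole also masked in this frame


def angle_deg(a, b):
    """Angle in degrees between two 3D direction vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return math.degrees(math.acos(max(-1.0, min(1.0, dot / norms))))


def select_source_frame(current, keyframes, max_angle_deg=30.0, max_overlap=0.2):
    """Pick the stored frame whose pose is closest to the current one and whose
    view of the hole region is mostly unoccluded (heuristic cost, assumed)."""
    best, best_cost = None, math.inf
    for kf in keyframes:
        if kf.frame_id == current.frame_id or kf.hole_overlap > max_overlap:
            continue  # same frame, or background still hidden by the person there
        ang = angle_deg(current.forward, kf.forward)
        if ang > max_angle_deg:
            continue  # viewpoint too different for a single planar warp
        cost = math.dist(current.position, kf.position) + ang / max_angle_deg
        if cost < best_cost:
            best, best_cost = kf, cost
    return best


def solve_linear(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate correspondences (e.g. collinear points)")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def homography_from_4(src, dst):
    """3x3 homography H (H[2][2] = 1) mapping four src points onto four dst points."""
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        a.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        a.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    h = solve_linear(a, b)
    return [h[0:3], h[3:6], h[6:8] + [1.0]]


def apply_homography(H, pt):
    """Map a 2D point through H, including the perspective divide."""
    x, y = pt
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    return ((H[0][0] * x + H[0][1] * y + H[0][2]) / w,
            (H[1][0] * x + H[1][1] * y + H[1][2]) / w)
```

For example, mapping the unit square onto the same square shifted by (2, 3) yields a pure translation, so `apply_homography(H, (0.5, 0.5))` returns approximately `(2.5, 3.5)`. In a diminished-reality pipeline, pixels inside the hole of the current frame would be mapped through the inverse of such a homography to sample the selected source frame.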

Notes

The dataset is available on-line at this site.

Acknowledgements

This research was supported in part by the IITP/MSIT of Korea under the ITRC support program (IITP-2021-2016-0-00312), by the KEA/KIAT/MOTIE Competency Development Program for Industry Specialist (N000999), and by the NRF Korea through the Basic Science Research Program (2019R1A2C1086649).

Ethics declarations

Conflict of interest

Both Taehyung Kim and Gerard J. Kim declare that they have no conflict of interest.

Appendix A: Questionnaire

| Item | Statement |
|---|---|
| Distraction | |
| DS1 | I was not able to concentrate on the AR scene because of the passerby roaming around in the background |
| DS2 | The passerby’s existence bothered me when observing and interacting with the virtual avatar |
| DS3 | I became conscious of the people passing across the AR screen |
| DS4 | I did not pay attention to the passerby |
| Visual inconsistency | |
| VI1 | The visual mismatch between outside and inside the screen of the passerby was obvious to me |
| VI2 | The different visual representations of the passerby in the AR scene felt awkward |
| VI3 | I did not notice the visual inconsistency between the AR scene and the real scene |
| VI4 | The passerby’s body parts in the AR scene did not feel awkward at all |
| Object presence | |
| OP1 | I felt like the avatar was a part of the environment |
| OP2 | I didn’t feel like the avatar was actually there in the environment |
| OP3 | It seemed as though an avatar was present in the environment |
| OP4 | I felt as though an avatar was physically present in the environment |
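Each construct in the questionnaire has four items, and some items are worded against their construct: DS4, VI3, VI4 and OP2 describe the absence of distraction, inconsistency or presence. The following minimal Python sketch shows one way such a questionnaire could be scored by reverse-coding those items before averaging. The 7-point response scale and the per-subscale mean are assumptions, since the appendix states neither the response format nor the aggregation used in the study.

```python
# Scoring sketch for the Appendix A questionnaire.
# ASSUMPTION: responses lie on a 1..scale_max Likert scale (default 7);
# the appendix does not state the scale.
SUBSCALES = {
    "distraction": ["DS1", "DS2", "DS3", "DS4"],
    "visual_inconsistency": ["VI1", "VI2", "VI3", "VI4"],
    "object_presence": ["OP1", "OP2", "OP3", "OP4"],
}

# Items whose wording runs against their construct (inferred from the item text),
# so a high agreement score must be flipped before averaging.
REVERSED = {"DS4", "VI3", "VI4", "OP2"}


def score_questionnaire(responses, scale_max=7):
    """Return the mean score per subscale after reverse-coding negated items."""
    scores = {}
    for name, items in SUBSCALES.items():
        values = []
        for item in items:
            r = responses[item]
            if not 1 <= r <= scale_max:
                raise ValueError(f"{item}: response {r} outside 1..{scale_max}")
            # Reverse coding maps 1 <-> scale_max, 2 <-> scale_max - 1, and so on.
            values.append(scale_max + 1 - r if item in REVERSED else r)
        scores[name] = sum(values) / len(values)
    return scores
```

With this coding, a higher subscale mean always indicates more of the named construct, so a participant who strongly agrees with DS1 to DS3 and strongly disagrees with DS4 receives the maximum distraction score.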

Cite this article

Kim, T., Kim, G.J. Real-time and on-line removal of moving human figures in hand-held mobile augmented reality. Vis Comput 39, 2571–2582 (2023). https://doi.org/10.1007/s00371-022-02479-1

DOI: https://doi.org/10.1007/s00371-022-02479-1