Abstract.

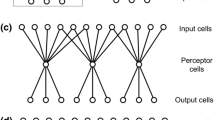

Many cognitive and sensorimotor functions in the brain involve parallel and modular memory subsystems that are adapted by activity-dependent Hebbian synaptic plasticity. This is in contrast to the multilayer perceptron model of supervised learning where sensory information is presumed to be integrated by a common pool of hidden units through backpropagation learning. Here we show that Hebbian learning in parallel and modular memories is more advantageous than backpropagation learning in lumped memories in two respects: it is computationally much more efficient and structurally much simpler to implement with biological neurons. Accordingly, we propose a more biologically relevant neural network model, called a tree-like perceptron, which is a simple modification of the multilayer perceptron model to account for the general neural architecture, neuronal specificity, and synaptic learning rule in the brain. The model features a parallel and modular architecture in which adaptation of the input-to-hidden connection follows either a Hebbian or anti-Hebbian rule depending on whether the hidden units are excitatory or inhibitory, respectively. The proposed parallel and modular architecture and implicit interplay between the types of synaptic plasticity and neuronal specificity are exhibited by some neocortical and cerebellar systems.
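The abstract does not state the update equations, so the following is only an illustrative sketch of the kind of architecture it describes, not the authors' formulation. It assumes that each hidden unit reads its own disjoint input module (the parallel, modular part), that each hidden unit has a fixed sign (+1 excitatory, -1 inhibitory), and that the input-to-hidden weight change is a plain product of presynaptic input and a postsynaptic signal, with the sign flipped for inhibitory units. The names `forward`, `local_update`, `post`, and `lr` are hypothetical.

```python
from math import tanh

def forward(modules, weights, signs):
    """Forward pass through a modular network (assumed layout).

    modules: list of input vectors, one per hidden unit (disjoint modules)
    weights: list of weight vectors, same shapes as modules
    signs:   +1 for an excitatory hidden unit, -1 for an inhibitory one
    """
    # Each hidden unit sees only its own module; no shared hidden pool.
    hidden = [tanh(sum(w * x for w, x in zip(ws, xs)))
              for ws, xs in zip(weights, modules)]
    # The output sums hidden activity with each unit's fixed sign.
    output = sum(s * h for s, h in zip(signs, hidden))
    return hidden, output

def local_update(modules, weights, signs, post, lr=0.1):
    """Hebbian (sign +1) or anti-Hebbian (sign -1) weight change.

    post is an assumed postsynaptic signal shared by all modules;
    the change for each weight is sign * lr * post * presynaptic input.
    """
    return [[w + s * lr * post * x for w, x in zip(ws, xs)]
            for ws, xs, s in zip(weights, modules, signs)]

if __name__ == "__main__":
    modules = [[1.0, 2.0], [3.0]]      # two modules of different sizes
    weights = [[0.0, 0.0], [0.0]]
    signs = [+1, -1]                   # first unit excitatory, second inhibitory
    weights = local_update(modules, weights, signs, post=0.5)
    print(weights)
    print(forward(modules, weights, signs))
```

Because each update uses only quantities available at the synapse and the sign of its hidden unit, no error signal has to be propagated back through a common hidden layer in this sketch.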

Additional information

Received: 13 October 1996 / Accepted in revised form: 16 October 1997

About this article

Cite this article

Poon, CS., Shah, J. Hebbian learning in parallel and modular memories. Biol Cybern 78, 79–86 (1998). https://doi.org/10.1007/s004220050415

DOI: https://doi.org/10.1007/s004220050415