Abstract

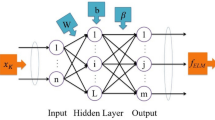

Neural network models for regression can approximate unknown datasets with small error. As an important method for global regression, the extreme learning machine (ELM) is a typical learning method for single-hidden-layer feedforward networks, owing to its good generalization performance and fast implementation. The randomness of the input weights allows the nonlinear combination of hidden nodes to approximate arbitrary functions. In this paper, we seek an alternative mechanism for generating the input connections. The idea is derived from evolutionary algorithms. After fixing the number L of hidden nodes, we generate the original ELM models. Each hidden node is regarded as a gene. The hidden nodes are ranked, and the nodes with larger weights are reassigned to the updated ELM. The L/2 trivial hidden nodes are put into a candidate reservoir. We then generate L/2 new hidden nodes, so that the candidate reservoir holds L hidden nodes. A second ranking is used to choose among these candidates: fitness-proportional selection picks L/2 hidden nodes, which are recombined into the evolutionary selection ELM. The entire algorithm can be applied to regression on large-scale datasets. Experiments show that its regression performance is better than that of the traditional ELM and the Bayesian ELM, at lower cost.
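The selection procedure summarized in the abstract can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the abstract does not specify the activation function, the weight distribution, the ranking criterion, or the fitness measure, so the sketch assumes sigmoid hidden nodes, input weights drawn uniformly from [-1, 1], ranking by the magnitude of each node's output weight, and a fitness equal to that magnitude after a joint refit. The names `es_elm_fit` and `es_elm_predict` are hypothetical, and only a single selection round is shown.

```python
import numpy as np


def hidden_output(X, W, b):
    """Sigmoid hidden-layer output matrix H, shape (n_samples, n_nodes)."""
    return 1.0 / (1.0 + np.exp(-(X @ W + b)))


def output_weights(H, y):
    """Least-squares output weights via the Moore-Penrose pseudo-inverse."""
    return np.linalg.pinv(H) @ y


def random_nodes(n_features, n_nodes, rng):
    """Random input weights and biases (uniform in [-1, 1] is an assumption)."""
    W = rng.uniform(-1.0, 1.0, size=(n_features, n_nodes))
    b = rng.uniform(-1.0, 1.0, size=n_nodes)
    return W, b


def es_elm_fit(X, y, L=20, seed=0):
    """One round of the selection scheme (hypothetical sketch; L must be even)."""
    if L % 2 != 0:
        raise ValueError("L must be even")
    rng = np.random.default_rng(seed)
    half = L // 2
    d = X.shape[1]

    # Step 1: original ELM with L random hidden nodes.
    W, b = random_nodes(d, L, rng)
    beta = output_weights(hidden_output(X, W, b), y)

    # Step 2: rank nodes by |output weight| (assumed criterion);
    # the top half is kept, the bottom half is treated as "trivial".
    order = np.argsort(-np.abs(beta))
    keep, trivial = order[:half], order[half:]

    # Step 3: candidate reservoir = L/2 trivial nodes + L/2 new random nodes.
    W_new, b_new = random_nodes(d, half, rng)
    W_res = np.hstack([W[:, trivial], W_new])
    b_res = np.concatenate([b[trivial], b_new])

    # Step 4: score reservoir nodes by refitting them jointly with the kept
    # nodes, then draw L/2 of them with probability proportional to fitness.
    W_all = np.hstack([W[:, keep], W_res])
    b_all = np.concatenate([b[keep], b_res])
    beta_all = output_weights(hidden_output(X, W_all, b_all), y)
    fitness = np.abs(beta_all[half:]) + 1e-12  # avoid zero probabilities
    chosen = rng.choice(L, size=half, replace=False, p=fitness / fitness.sum())

    # Step 5: recombine kept and selected nodes into the final L-node ELM.
    W_fin = np.hstack([W[:, keep], W_res[:, chosen]])
    b_fin = np.concatenate([b[keep], b_res[chosen]])
    beta_fin = output_weights(hidden_output(X, W_fin, b_fin), y)
    return W_fin, b_fin, beta_fin


def es_elm_predict(X, W, b, beta):
    """Predict targets with a fitted model."""
    return hidden_output(X, W, b) @ beta
```

With a feature matrix `X` of shape `(n_samples, n_features)` and a one-dimensional target `y`, a call such as `W, b, beta = es_elm_fit(X, y, L=20)` followed by `es_elm_predict(X, W, b, beta)` returns fitted values. Sampling without replacement keeps the selected nodes distinct; whether the original method does the same is not stated in the abstract.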

Acknowledgments

This work was supported by the National Natural Science Foundation of China under Grant 61103181, the Ph.D. Programs Foundation of the Ministry of Education of China (20103108120011), the Natural Science Foundation of Shanghai, China (09ZR1412400, 11ZR1413200), and the Innovation Program of the Shanghai Municipal Education Commission (10YZ11, 11YZ10).

Cite this article

Feng, G., Qian, Z. & Zhang, X. Evolutionary selection extreme learning machine optimization for regression. Soft Comput 16, 1485–1491 (2012). https://doi.org/10.1007/s00500-012-0823-7

DOI: https://doi.org/10.1007/s00500-012-0823-7