Abstract

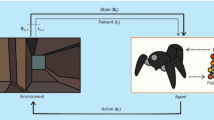

Path planning is an important problem in mobile robotics. However, traditional path planning techniques optimize the navigation path solely on the basis of models of the robot and its environment. Because the environment varies over time, the robot must continuously relaunch the replanning procedure in real time, which is slow and wastes computing resources on repeated decisions. This study adopts a new perspective: a knowledge-driven approach to path planning. The concept of a relative state tree is proposed to develop an incremental learning method based on a path planning knowledge base. The knowledge library, which stores a collection of mappings from environmental information to robot decisions, can be built through offline or online learning. During online planning, the robot's movement is guided by the optimal decision retrieved from the library for the stored information that best matches the current environment. Extensive simulations were carried out to verify the proposed method. Compared with a \(k\)-d tree, the proposed method uses less storage space and achieves higher efficiency.
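The abstract describes the knowledge library only at a high level. As a rough illustration of the store-and-retrieve loop it relies on, the sketch below keeps state-to-decision mappings that can be added incrementally and returns the decision attached to the best-matching stored state. Everything here is an assumption for illustration: the `KnowledgeLibrary` class, the tuple-of-floats state encoding, the string decision labels, the Euclidean distance, and the optional `max_distance` fallback are hypothetical. Retrieval is a plain linear nearest-neighbour scan, not the relative state tree proposed in the paper and not a k-d tree.

```python
import math
from dataclasses import dataclass, field
from typing import List, Optional, Sequence, Tuple

# Hypothetical encoding: a state is a fixed-length tuple of floats describing
# the environment relative to the robot; a decision is a string label.
State = Tuple[float, ...]


@dataclass
class KnowledgeLibrary:
    """Illustrative store of (environment state -> decision) mappings.

    Retrieval is a linear nearest-neighbour scan; it is NOT the
    relative state tree described in the paper.
    """
    entries: List[Tuple[State, str]] = field(default_factory=list)

    def learn(self, state: Sequence[float], decision: str) -> None:
        # Incremental learning: each new mapping is appended, whether it was
        # produced offline or while the robot is running.
        self.entries.append((tuple(state), decision))

    def retrieve(self, state: Sequence[float],
                 max_distance: Optional[float] = None) -> Optional[str]:
        # Return the decision whose stored state is closest (Euclidean) to the
        # query. If max_distance is given and no entry is that close, return
        # None so the caller can fall back to a conventional planner
        # (an assumed policy, not taken from the paper).
        if not self.entries:
            return None
        best_state, best_decision = min(
            self.entries, key=lambda entry: math.dist(entry[0], state))
        if max_distance is not None and math.dist(best_state, state) > max_distance:
            return None
        return best_decision


if __name__ == "__main__":
    lib = KnowledgeLibrary()
    lib.learn((1.0, 0.0), "turn_left")
    lib.learn((-1.0, 0.0), "turn_right")
    lib.learn((0.0, 5.0), "go_straight")
    print(lib.retrieve((0.8, 0.3)))                    # turn_left
    print(lib.retrieve((0.1, 4.0)))                    # go_straight
    print(lib.retrieve((9.0, 9.0), max_distance=1.0))  # None
```

Each query here costs time linear in the number of stored mappings; reducing that cost and the storage footprint is the role that index structures such as a k-d tree, or the relative state tree proposed in the paper, play.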

Acknowledgments

This work was partially supported by the Natural Science Foundation of China (Nos. 61203331 and 61035005), the Hubei Province Key Laboratory of Systems Science in Metallurgical Process (Wuhan University of Science and Technology) (No. Z201301), and the Henan Provincial Open Foundation of Control Engineering Key Lab of China (No. KG2011-01).

Additional information

Communicated by G. Acampora.

About this article

Cite this article

Chen, Y., Cheng, L., Wu, H. et al. Knowledge-driven path planning for mobile robots: relative state tree. Soft Comput 19, 763–773 (2015). https://doi.org/10.1007/s00500-014-1299-4