Abstract

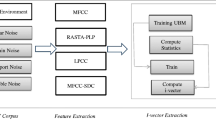

Voice-based biometric security systems that involve only neutral speech have achieved promising performance. However, speakers are very likely to fail recognition when the test data exhibit multiple emotions. This paper addresses the mismatch of emotional states between training and testing speech. We discuss different modeling strategies that incorporate the emotions (affects) of speakers into the training stage of a Mandarin-based speaker recognition system and propose an alternative approach that makes better use of the limited affective speech available. The training speech is partitioned and clustered according to the trends of its prosodic variations, and multiple models are built from the clustered speech for each speaker. The prosodic differences are characterized by a combination of features that describe the changes in the fundamental frequency and energy contours. The experiments were carried out on the Mandarin Affective Speech Corpus. The results show a relative improvement of 73.37 % in recognition rate over traditional speaker verification and a relative improvement of 63.53 % over structural training-based systems.
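The clustering idea in the abstract can be illustrated with a minimal sketch. It is not the authors' implementation. Several things here are assumptions made only for illustration: each segment is summarized by the linear slopes of its F0 and energy contours, a plain k-means groups the segments, and one diagonal Gaussian per cluster stands in for whatever speaker model the paper actually trains. The function names `prosodic_trend`, `kmeans`, and `train_cluster_models` are hypothetical.

```python
import numpy as np


def prosodic_trend(f0, energy):
    """Summarize one segment by the linear slopes of its F0 and energy contours.

    Assumption: a slope pair is a stand-in for the paper's prosodic features.
    """
    t = np.arange(len(f0), dtype=float)
    f0_slope = np.polyfit(t, f0, 1)[0]
    energy_slope = np.polyfit(t, energy, 1)[0]
    return np.array([f0_slope, energy_slope])


def assign(x, centers):
    """Return the index of the nearest center for every row of x."""
    dists = np.linalg.norm(x[:, None, :] - centers[None, :, :], axis=2)
    return dists.argmin(axis=1)


def kmeans(x, k, iters=20, seed=0):
    """Plain k-means on the rows of x; returns (labels, centers)."""
    x = np.asarray(x, dtype=float)
    rng = np.random.default_rng(seed)
    centers = x[rng.choice(len(x), size=k, replace=False)]
    for _ in range(iters):
        labels = assign(x, centers)
        for j in range(k):
            members = x[labels == j]
            if len(members) > 0:
                centers[j] = members.mean(axis=0)
    # Final assignment so labels match the returned centers.
    return assign(x, centers), centers


def train_cluster_models(frames_per_segment, labels, k):
    """Fit one diagonal Gaussian per prosodic cluster for a given speaker.

    frames_per_segment: list of (n_frames, dim) arrays of spectral features.
    Assumption: a single Gaussian replaces the paper's speaker model.
    """
    models = {}
    for j in range(k):
        pooled = [f for f, lab in zip(frames_per_segment, labels) if lab == j]
        if not pooled:
            continue  # no training speech fell into this cluster
        frames = np.vstack(pooled)
        models[j] = (frames.mean(axis=0), frames.var(axis=0) + 1e-6)
    return models
```

In this sketch a speaker ends up with up to `k` models, one per prosodic cluster, rather than a single model trained on neutral speech only. How test utterances are scored against those models is left open here.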

Acknowledgments

The author would like to offer sincere thanks to the reviewers, whose comments and suggestions were very important in improving the presentation and technical soundness of this paper. This research was supported by the Natural Science Foundation of Shanghai Municipality, China (No. 11ZR1409600), and partly supported by the Natural Science Foundation of China (No. 61272198, No. 1272198, No. 90924013, No. 91324010) and the Innovation Program of Shanghai Municipal Education Commission (No. 14ZZ054). This work was also supported by the Fundamental Research Funds for the Central Universities of China.

About this article

Cite this article

Li, D., Yuan, Y., Wu, Z. et al. Affect-insensitive speaker recognition systems via emotional speech clustering using prosodic features. Neural Comput & Applic 26, 473–484 (2015). https://doi.org/10.1007/s00521-014-1708-8

DOI: https://doi.org/10.1007/s00521-014-1708-8