Abstract

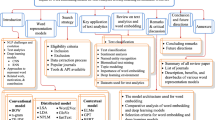

This paper makes five contributions toward establishing benchmark performance for Urdu text document classification. First, it provides a publicly available benchmark dataset manually tagged against 6 classes. Second, it investigates how 10 filter-based feature selection algorithms, widely used for other languages, affect the performance of traditional machine learning-based Urdu text document classification methodologies. Third, it assesses, for the first time, the performance of various deep learning-based methodologies for Urdu text document classification; for these experiments, we adapt 10 deep learning classification methodologies that have produced the best performance figures for English text classification. Fourth, it investigates the performance impact of transfer learning by applying the Bidirectional Encoder Representations from Transformers (BERT) approach to the Urdu language. Fifth, it evaluates the integrity of a hybrid approach that combines traditional machine learning-based feature engineering with deep learning-based automated feature engineering. Experimental results show that the normalized difference measure feature selection approach, combined with a support vector machine, outperforms the state of the art on two closed source benchmark datasets, CLE Urdu Digest 1000k and CLE Urdu Digest 1Million, by significant margins of 32% and 13%, respectively. Across all three datasets, the normalized difference measure outperforms the other filter-based feature selection algorithms, significantly improving the performance of all adopted machine learning, deep learning, and hybrid approaches. The source code and the presented dataset are available in the GitHub repository https://github.com/minixain/Urdu-Text-Classification.
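To make the filter-based selection step more concrete, the following is a minimal sketch of normalized difference measure scoring for a two-class problem. It assumes the commonly stated form of the measure, |tpr - fpr| / min(tpr, fpr), where tpr and fpr are the fractions of positive and negative documents containing a term. The function names, the `eps` floor used when the minimum is zero, and the toy English tokens are illustrative assumptions and are not taken from the paper's released code, which addresses a multi-class setting.

```python
from typing import Dict, List, Set


def ndm_scores(docs: List[Set[str]], labels: List[int],
               eps: float = 1e-6) -> Dict[str, float]:
    """Score each term by |tpr - fpr| / min(tpr, fpr) for a binary problem.

    docs   : one set of terms per document (term presence only).
    labels : 1 for the positive class, anything else for the negative class.
    eps    : assumed small floor for the denominator when min(tpr, fpr) is 0.
    """
    n_pos = sum(1 for y in labels if y == 1)
    n_neg = len(labels) - n_pos
    if n_pos == 0 or n_neg == 0:
        raise ValueError("need at least one document in each class")

    # Document frequency of every term, counted separately per class.
    pos_df: Dict[str, int] = {}
    neg_df: Dict[str, int] = {}
    for terms, y in zip(docs, labels):
        target = pos_df if y == 1 else neg_df
        for term in terms:
            target[term] = target.get(term, 0) + 1

    scores: Dict[str, float] = {}
    for term in set(pos_df) | set(neg_df):
        tpr = pos_df.get(term, 0) / n_pos  # share of positive docs with term
        fpr = neg_df.get(term, 0) / n_neg  # share of negative docs with term
        denom = max(min(tpr, fpr), eps)    # avoid division by zero
        scores[term] = abs(tpr - fpr) / denom
    return scores


def top_k_terms(scores: Dict[str, float], k: int) -> List[str]:
    """Return the k highest-scoring terms, ties broken alphabetically."""
    return sorted(scores, key=lambda t: (-scores[t], t))[:k]


# Toy corpus: two "sports" documents (label 1) and two "business" documents.
docs = [{"cricket", "match"}, {"cricket", "team"},
        {"budget", "match"}, {"budget", "tax"}]
labels = [1, 1, 0, 0]
scores = ndm_scores(docs, labels)
selected = top_k_terms(scores, 2)
```

In this toy corpus, "match" appears equally often in both classes and scores zero, while terms confined to one class rank highest. The retained terms would then form the vocabulary passed to a downstream classifier such as a support vector machine.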

Change history

27 October 2020

A Correction to this paper has been published: https://doi.org/10.1007/s00521-020-05435-z

Ethics declarations

Funding

Not applicable.

Conflict of interest

The corresponding author, on behalf of all authors, declares that there is no conflict of interest.

Availability of data and material

The DSL Urdu news dataset and other pre-processing resources will be available in the GitHub repository (https://github.com/minixain/Urdu-Text-Classification).

Code availability

The source code will be available in the GitHub repository (https://github.com/minixain/Urdu-Text-Classification).

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

The article Benchmarking performance of machine and deep learning-based methodologies for Urdu text document classification, written by Muhammad Nabeel Asim, Muhammad Usman Ghani, Muhammad Ali Ibrahim, Waqar Mahmood, Andreas Dengel, Sheraz Ahmed, was originally published electronically on the publisher’s internet portal (currently SpringerLink) on [10/2020] with open access. With the author(s)’ decision to step back from Open Choice, the copyright of the article changed on [10/2020] to © [Springer-Verlag London Ltd., part of Springer Nature] [2020] and the article is forthwith distributed under the terms of copyright.

About this article

Cite this article

Asim, M.N., Ghani, M.U., Ibrahim, M.A. et al. Benchmarking performance of machine and deep learning-based methodologies for Urdu text document classification. Neural Comput & Applic 33, 5437–5469 (2021). https://doi.org/10.1007/s00521-020-05321-8

DOI: https://doi.org/10.1007/s00521-020-05321-8