Abstract

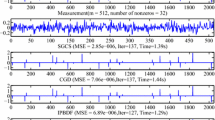

This paper considers the \(L_1\)-minimization problem for sparse signal and image reconstruction using projection neural networks (PNNs). First, a new finite-time converging projection neural network (FtPNN) is presented. Building upon the FtPNN, a new fixed-time converging PNN (FxtPNN) is designed. Under the condition that the projection matrix satisfies the Restricted Isometry Property (RIP), the Lyapunov stability and the finite-time convergence of the proposed FtPNN are proved; it is then proven that the proposed FxtPNN is stable and converges to the optimal solution within a fixed time, regardless of the initial values. Finally, simulation examples of signal and image reconstruction are carried out to show the effectiveness of the two proposed neural networks, FtPNN and FxtPNN.


Data availability

Data sharing not applicable to this article as no datasets were generated or analyzed during the current study.

References

Donoho DL (2006) Compressed sensing. IEEE Trans Inform Theory 52(4):1289–1306

Candes EJ, Wakin MB (2008) An introduction to compressive sampling. IEEE Signal Process Mag 25(2):21–30

Gan L (2007) Block compressed sensing of natural images. In: 15th International conference on digital signal processing, pp 403–406

Zhao Y, Liao X, He X, Tang R, Deng W (2021) Smoothing inertial neurodynamic approach for sparse signal reconstruction via \(l_p\)-norm minimization. Neural Netw 140:100–112

Aharon M, Elad M, Bruckstein A (2006) K-SVD: an algorithm for designing overcomplete dictionaries for sparse representation. IEEE Trans Signal Process 54(11):4311–4322

Zhao Y, Liao X, He X (2022) Novel projection neurodynamic approaches for constrained convex optimization. Neural Netw 150:336–349

Xu J, He X, Han X, Wen H (2022) A two-layer distributed algorithm using neurodynamic system for solving \(l_1\)-minimization. IEEE Trans Circuits Syst II Exp Briefs. https://doi.org/10.1109/TCSII.2022.3159814

Duarte MF, Davenport MA, Takhar D, Laska JN, Sun T, Kelly KF, Baraniuk RG (2008) Single-pixel imaging via compressive sampling. IEEE Signal Process Mag 25(2):83–91

Adcock B, Gelb A, Song G, Sui Y (2019) Joint sparse recovery based on variances. SIAM J Sci Comput 41(1):246–268

Xie J, Liao A, Lei Y (2018) A new accelerated alternating minimization method for analysis sparse recovery. Signal Process 145:167–174

Sant A, Leinonen M, Rao BD (2022) Block-sparse signal recovery via general total variation regularized sparse Bayesian learning. IEEE Trans Signal Process 70:1056–1071

Thomas TJ, Rani JS (2022) FPGA implementation of sparsity independent regularized pursuit for fast CS reconstruction. IEEE Trans Circuits Syst I Regul Pap 69(4):1617–1628

Figueiredo MAT, Nowak RD, Wright SJ (2007) Gradient projection for sparse reconstruction: application to compressed sensing and other inverse problems. IEEE J Sel Top Signal Process 1(4):586–597

Wang J, Kwon S, Shim B (2012) Generalized orthogonal matching pursuit. IEEE Trans Signal Process 60(12):6202–6216

Ji S, Xue Y, Carin L (2008) Bayesian compressive sensing. IEEE Trans Signal Process 56(6):2346–2356

Natarajan BK (1995) Sparse approximate solutions to linear systems. SIAM J Comput 24(2):227–234

Chen SS, Donoho DL, Saunders MA (1998) Atomic decomposition by basis pursuit. SIAM J Sci Comput 20(1):33–61

Liu Q, Wang J (2015) \(L_1\)-minimization algorithms for sparse signal reconstruction based on a projection neural network. IEEE Trans Neural Netw Learn Syst 27(3):698–707

Liu Q, Zhang W, Xiong J, Xu B, Cheng L (2018) A projection-based algorithm for constrained \(L_1\)-minimization optimization with application to sparse signal reconstruction. In: 2018 Eighth international conference on information science and technology (ICIST), pp 437–443

Feng R, Leung C, Constantinides AG, Zeng W (2017) Lagrange programming neural network for nondifferentiable optimization problems in sparse approximation. IEEE Trans Neural Netw Learn Syst 28(10):2395–2407

Xu B, Liu Q (2018) Iterative projection based sparse reconstruction for face recognition. Neurocomputing 284:99–106

Guo C, Yang Q (2015) A neurodynamic optimization method for recovery of compressive sensed signals with globally converged solution approximating to \(l_{0}\) minimization. IEEE Trans Neural Netw Learn Syst 26(7):1363–1374

Li W, Bian W, Xue X (2020) Projected neural network for a class of non-Lipschitz optimization problems with linear constraints. IEEE Trans Neural Netw Learn Syst 31(9):3361–3373

Ma L, Bian W (2021) A simple neural network for sparse optimization with \(l_1\) regularization. IEEE Trans Netw Sci Eng 8(4):3430–3442

Wang D, Zhang Z (2019) KKT condition-based smoothing recurrent neural network for nonsmooth nonconvex optimization in compressed sensing. Neural Comput Appl 31(7):2905–2920

Wen H, He X, Huang T (2022) Sparse signal reconstruction via recurrent neural networks with hyperbolic tangent function. Neural Netw. https://doi.org/10.1016/j.neunet.2022.05.022

Che H, Wang J, Cichocki A (2022) Sparse signal reconstruction via collaborative neurodynamic optimization. Neural Netw 154:255–269

Li J, Che H, Liu X (2022) Circuit design and analysis of smoothed \(l_0\) norm approximation for sparse signal reconstruction. Circ Syst Signal Process 42:1–25

Zhang X, Li C, Li H (2022) Finite-time stabilization of nonlinear systems via impulsive control with state-dependent delay. J Frankl Inst 359(3):1196–1214

Zhang X, Li X, Cao J, Miaadi F (2018) Design of memory controllers for finite-time stabilization of delayed neural networks with uncertainty. J Frankl Inst 355(13):5394–5413

Li H, Li C, Huang T, Zhang W (2018) Fixed-time stabilization of impulsive Cohen-Grossberg BAM neural networks. Neural Netw 98:203–211

Li H, Li C, Huang T, Ouyang D (2017) Fixed-time stability and stabilization of impulsive dynamical systems. J Frankl Inst 354(18):8626–8644

Garg K, Panagou D (2021) Fixed-time stable gradient flows: applications to continuous-time optimization. IEEE Trans Autom Control 66(5):2002–2015

Ju X, Hu D, Li C, He X, Feng G (2022) A novel fixed-time converging neurodynamic approach to mixed variational inequalities and applications. IEEE Trans Cybern 52(12):12942–12953

Ju X, Li C, Che H, He X, Feng G (2022) A proximal neurodynamic network with fixed-time convergence for equilibrium problems and its applications. IEEE Trans Neural Netw Learn Syst. https://doi.org/10.1109/TNNLS.2022.3144148

Garg K, Baranwal M, Gupta R, Benosman M (2022) Fixed-time stable proximal dynamical system for solving MVIPs. IEEE Trans Autom Control. https://doi.org/10.1109/TAC.2022.3214795

Bhat SP, Bernstein DS (2000) Finite-time stability of continuous autonomous systems. SIAM J Control Optim 38(3):751–766

Polyakov A (2012) Nonlinear feedback design for fixed-time stabilization of linear control systems. IEEE Trans Autom Control 57(8):2106–2110

Parikh N, Boyd S (2013) Proximal algorithms. Found Trends Optim 1:123–231

Candes E, Tao T (2007) The Dantzig selector: statistical estimation when p is much larger than n. Ann Stat 35(6):2313–2351

Meana H, Miyatake M, Guzm V (2004) Analysis of a wavelet-based watermarking algorithm. In: International conference on electronics, communications, and computers. IEEE computer society, Los Alamitos, CA, USA, p 283

LaSalle JP (ed) (1967) An invariance principle in the theory of stability. New York, Academic, pp 277–286

Acknowledgements

This work was funded by the National Natural Science Foundation of China under Grant Nos. 62373310 and 62176218, and in part by the Graduate Student Research Innovation Project of Chongqing under Grant CYB22152.


Ethics declarations

Conflict of interest

The authors have no relevant conflicts of interest to disclose.


Appendices

Appendix A

Proof of Theorem 1

The derivative of \(V_1\) with respect to t is given as follows:

Since \(\textit{u}^*\) is a constant, we obtain

As stated in the definition, FtPNN (11) has an equilibrium point \(\textit{u}^*\), which indicates that

where \(Fin(\textit{u}):= \{\textit{u} \in R^n: -P\textit{u} -(I - 2P)g(\textit{u}) + q = 0\}\).
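To make the equilibrium set concrete, the sketch below assumes the standard setting \(\min \Vert x\Vert _1\) subject to \(Ax=b\), with \(P = I - A^T(AA^T)^{-1}A\), \(q = A^T(AA^T)^{-1}b\), and \(g(\cdot )\) the componentwise soft-threshold with unit threshold. These definitions are not restated in this excerpt and are our assumption, chosen because they make the zeros of \(-P\textit{u} -(I-2P)g(\textit{u}) + q\) coincide with the KKT conditions of the \(L_1\) problem. The matrix sizes, seed, support and the dual certificate `r` are hypothetical: given an optimal \(x^*\) with certificate \(r \in \mathrm{range}(A^T)\), the point \(\textit{u}^* = x^* + r\) should satisfy \(g(\textit{u}^*) = x^*\) and lie in \(Fin(\textit{u})\).

```python
import numpy as np

def soft_threshold(u, lam=1.0):
    # Assumed g(.): componentwise shrinkage with unit threshold
    return np.sign(u) * np.maximum(np.abs(u) - lam, 0.0)

def pnn_rhs(u, P, q):
    # -P u - (I - 2P) g(u) + q; its zeros are the points of Fin(u)
    n = u.shape[0]
    return -P @ u - (np.eye(n) - 2.0 * P) @ soft_threshold(u) + q

rng = np.random.default_rng(0)
n, m = 12, 6
support = [2, 7]

# Hypothetical dual certificate r: +/-1 on the support, |r_i| < 1 elsewhere
r = rng.uniform(-0.5, 0.5, size=n)
r[support] = [1.0, -1.0]

# Make r lie in range(A^T) by using it as the first row of A
A = np.vstack([r, rng.standard_normal((m - 1, n))])

# Sparse x* whose signs match r on the support, so (x*, r) satisfy the KKT
# conditions of min ||x||_1 s.t. Ax = b, i.e. x* is an optimal solution
x_star = np.zeros(n)
x_star[support] = [1.5, -0.8]
b = A @ x_star

A_pinv = np.linalg.solve(A @ A.T, A).T   # A^T (A A^T)^{-1}
P = np.eye(n) - A_pinv @ A               # assumed P: projector onto null(A)
q = A_pinv @ b                           # assumed q

u_star = x_star + r                      # g(u*) = x* and u* - g(u*) = r
residual = np.linalg.norm(pnn_rhs(u_star, P, q))
print(residual)                                       # round-off level
print(np.allclose(soft_threshold(u_star), x_star))    # True
```

The residual vanishes because, under these assumptions, it reduces to \(-Pr\), and \(Pr = 0\) whenever \(r\) lies in the row space of \(A\).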

Then, substituting (24) into (23) gives

Combining Eqs. (25) and (16), we obtain

We now discuss the value of \(G(\tilde{\textit{u}})\). According to Eq. (7), the function \(g(\cdot )\) is non-decreasing. For \(\tilde{\textit{u}}_i \ge 0\), let \({z_{1i}}(s)=g(s + \textit{u}_i^*) - g(\textit{u}_i^*)\); then \({z_{1i}}(s) \ge 0\) for all \(s \ge 0\), and consequently

Further,

therefore,

For \(\tilde{\textit{u}}_i \le 0\), let \({z_{2i}}(s)=g(\textit{u}_i^*) - g(s + \textit{u}_i^*)\); then \({z_{2i}}(s) \ge 0\) for all \(s \le 0\), and consequently

Correspondingly, since \({z_{2i}}(s) \le -s\) for all \(s \le 0\), we have

As a result, the following conclusion can be drawn:

The following is a summary of the above findings:

Using Eqs. (14) and (28), we obtain

Since P is a symmetric idempotent matrix (Assumption 1), both P and \((I-P)\) are positive semi-definite. Denote the maximum eigenvalue by \(\delta _{\max }(\cdot )\) and define \(\gamma\) as

One obtains

with \(\gamma > 0\). It follows from Eq. (28) that

Based on (23) and (30), \(V_1\) is a radially unbounded, positive semi-definite Lyapunov function, and by (27) and (30) the FtPNN (11) is Lyapunov stable. According to the LaSalle invariance principle [42], \(\tilde{\textit{u}}\) converges to the largest invariant subset \(U_{inv}\) of \(M \triangleq \{\tilde{\textit{u}} \mid P\tilde{b} + (I-P)\tilde{c} = 0\}\). It is clear from (26) and (27) that \(\dot{V}_1 = 0\) implies \(\dot{\tilde{\textit{u}}} = 0\); hence every state of U is invariant, and \(U_{inv} = U\). Therefore, \(\textit{u}\) converges to U, and \(g(\textit{u})\) converges to the set of optimal solutions of (2). This completes the proof of Theorem 1.
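The sign and bound properties of \(z_{1i}\) and \(z_{2i}\) used in this proof follow only from \(g(\cdot )\) being non-decreasing and 1-Lipschitz. The sketch below checks them on a grid, assuming (since Eq. (7) is not reproduced here) that \(g\) is the unit soft-threshold. The upper bound \(z_{1i}(s) \le s\) for \(s \ge 0\) is our reading of the step that the text states explicitly only for \(z_{2i}\).

```python
import numpy as np

def soft_threshold(s, lam=1.0):
    # Assumed g(.): unit soft-threshold (non-decreasing, 1-Lipschitz)
    return np.sign(s) * np.maximum(np.abs(s) - lam, 0.0)

def z1(s, u_star):
    # z_1i(s) = g(s + u_i*) - g(u_i*), examined for s >= 0
    return soft_threshold(s + u_star) - soft_threshold(u_star)

def z2(s, u_star):
    # z_2i(s) = g(u_i*) - g(s + u_i*), examined for s <= 0
    return soft_threshold(u_star) - soft_threshold(s + u_star)

s_pos = np.linspace(0.0, 3.0, 301)
s_neg = -s_pos
ok = True
for u_star in np.linspace(-2.5, 2.5, 51):
    a = z1(s_pos, u_star)
    c = z2(s_neg, u_star)
    # 0 <= z_1i(s) <= s for s >= 0, and 0 <= z_2i(s) <= -s for s <= 0
    ok &= bool(np.all(a >= -1e-12) and np.all(a <= s_pos + 1e-12))
    ok &= bool(np.all(c >= -1e-12) and np.all(c <= -s_neg + 1e-12))
print(ok)
```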

Proof of Theorem 2

First, we establish the relation between \(\tilde{\textit{u}}\) and \(\tilde{c}\). Under condition 2 of Lemma 3, after a finite time \(t_1 < \infty\), \(\textit{u}_i(t)\) has the same sign as \(\textit{u}^*_i\). We consider the following two cases. (1) If \(\vert \textit{u}^*_i\vert < 1\), then \(\vert \tilde{c}_i \vert =\vert g(\tilde{\textit{u}}_i+\textit{u}^*_i)-g(\textit{u}^*_i)\vert \le \vert \tilde{\textit{u}}_i \vert\). (2) If \(\vert \textit{u}^*_i\vert > 1\), then \(\tilde{c}_i=g(\textit{u}_i)-g(\textit{u}^*_i) = g(\tilde{\textit{u}}_i+\textit{u}^*_i)-g(\textit{u}^*_i)\). Under condition 2 of Lemma 3, the presented FtPNN is globally convergent, so \(\vert \tilde{\textit{u}}_i \vert\) becomes arbitrarily small: for any \(\ell > 0\), there is \(t(\ell ) < \infty\) such that \(\vert \tilde{\textit{u}}_i(t)\vert < \ell\) for all \(t>t(\ell )\). Then, for a small \(\mathfrak {p} > 0\), define \(t_2 = t(1-\mathfrak {p})\); for \(t>t_2\) we have \(\vert \tilde{\textit{u}}_i(t)\vert < 1-\mathfrak {p}\), so \(\textit{u}^*_i\) and \(\tilde{\textit{u}}_i + \textit{u}^*_i\) have the same sign, and \(\tilde{c}_i = 0\) is obtained.

According to Assumption 1, P is a symmetric idempotent matrix, so \(P^2=P\) and \(P\tilde{\textit{u}} = P^T P\tilde{\textit{u}}=\tilde{\textit{u}}\); then \(P\tilde{c}=\tilde{c}\). Consequently,

In the sequel, let \(t_e=\max \{t_1,t_2\}<\infty\). For all \(t>t_e\), we have \(\vert \tilde{c}_i\vert \le \vert \tilde{\textit{u}}_i\vert\) if \(\vert \textit{u}_i^*\vert <1\), and \(\tilde{c}_i = 0\) if \(\vert \textit{u}_i^*\vert >1\). For simplicity, we write \(i \in \Gamma _c\) if \(\vert \textit{u}_i^*\vert <1\) and \(i \in \Gamma _b\) if \(\vert \textit{u}_i^* \vert >1\). Therefore, \(\Vert \tilde{c}\Vert \le \Vert \tilde{\textit{u}}\Vert = \Vert \tilde{\textit{u}}_{\Gamma _b}\Vert +\Vert \tilde{\textit{u}}_{\Gamma _c}\Vert\), and then \(\Vert \tilde{c}\Vert \le \Vert \tilde{\textit{u}}_{\Gamma _b}\Vert\).
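Assumption 1 enters this argument through P being symmetric and idempotent, which is also why both P and \(I-P\) are positive semi-definite. As a sketch, assuming \(P = I - A^T(AA^T)^{-1}A\) for a hypothetical Gaussian \(A\) of full row rank (the paper's construction of P is not reproduced in this excerpt), the check below confirms symmetry, idempotence, and that the eigenvalues of P and \(I-P\) lie in \(\{0, 1\}\). The nonsingularity of \(P_{\Gamma _b}\) and \(P_{\Gamma _c}\) is not checked here.

```python
import numpy as np

rng = np.random.default_rng(1)
m, n = 5, 12
A = rng.standard_normal((m, n))                    # hypothetical, full row rank a.s.
P = np.eye(n) - A.T @ np.linalg.solve(A @ A.T, A)  # assumed form of P

sym = np.allclose(P, P.T)                  # P = P^T
idem = np.allclose(P @ P, P)               # P^2 = P
eig_P = np.linalg.eigvalsh(P)              # ascending; m zeros, n - m ones
eig_IP = np.linalg.eigvalsh(np.eye(n) - P) # ascending; n - m zeros, m ones
print(sym, idem)
print(np.round(eig_P, 8))
print(np.round(eig_IP, 8))
```

Because all eigenvalues are 0 or 1, neither P nor \(I-P\) has a negative eigenvalue, so both are positive semi-definite.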

By Assumption 1, \(P_{\Gamma _b}\) and \(P_{\Gamma _c}\) are nonsingular, so \(\Vert P\tilde{\textit{u}} + (I-2P)\tilde{c}\Vert ^2_2 > 0\) as long as \(\Vert \tilde{\textit{u}}\Vert ^2_2>0\). Hence, there exists a small constant \(\chi\) such that \(\Vert \tilde{c}\Vert \le \chi \Vert \tilde{\textit{u}}\Vert\), and then

where \((1-\chi ) >0\).

Next, consider the FtPNN (11). According to Eqs. (27) and (31), for \(t>t_e\) we have

Hence, for \(t>t_e\), \(V_1(\tilde{\textit{u}})\) converges to zero in finite time, at some \(t_f>t_e\). Finally, \(\textit{u}=\textit{u}^*\) for all \(t>t_f\).

From (A7) and (A10), one obtains,

In view of the above,

Therefore, one observes that,

Thus, the FtPNN (11) converges within the settling time \(\frac{4}{(3-\alpha )(1-\chi )^{\frac{1+\alpha }{2}} \gamma ^{-\frac{1+\alpha }{4}}}V_1(\tilde{\textit{u}}(0))^{\frac{3-\alpha }{4}}\). This completes the proof of Theorem 2. \(\square\)
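The settling time above has the shape of the standard finite-time bound [37]: if \(\dot{V} \le -kV^{\beta }\) with \(0<\beta <1\), then \(V\) reaches zero no later than \(V(0)^{1-\beta }/(k(1-\beta ))\). Assuming the omitted final inequality has this form with \(\beta =(1+\alpha )/4\) and \(k=(1-\chi )^{\frac{1+\alpha }{2}}\gamma ^{-\frac{1+\alpha }{4}}\) (our reading, not restated in the text), the sketch checks that the two expressions agree and that a forward-Euler solve of the comparison ODE reaches zero at about that time. The values of \(\alpha\), \(\chi\), \(\gamma\) and \(V(0)\) are illustrative only.

```python
def finite_time_bound(V0, k, beta):
    # Settling-time bound for dV/dt <= -k * V**beta with 0 < beta < 1
    return V0 ** (1.0 - beta) / (k * (1.0 - beta))

def theorem2_bound(V0, alpha, chi, gamma):
    # The settling-time expression stated at the end of the proof of Theorem 2
    return (4.0 / ((3.0 - alpha) * (1.0 - chi) ** ((1.0 + alpha) / 2.0)
                   * gamma ** (-(1.0 + alpha) / 4.0))
            * V0 ** ((3.0 - alpha) / 4.0))

def euler_settling_time(V0, k, beta, dt=1e-4, t_max=100.0):
    # Forward-Euler integration of the comparison system dV/dt = -k * V**beta,
    # clipping at zero once a step would overshoot
    V, t = V0, 0.0
    while V > 0.0 and t < t_max:
        V = max(V - dt * k * V ** beta, 0.0)
        t += dt
    return t

# Illustrative parameters (hypothetical, not taken from the paper)
alpha, chi, gamma, V0 = 0.5, 0.2, 2.0, 4.0
k = (1.0 - chi) ** ((1.0 + alpha) / 2.0) * gamma ** (-(1.0 + alpha) / 4.0)
beta = (1.0 + alpha) / 4.0
print(theorem2_bound(V0, alpha, chi, gamma), finite_time_bound(V0, k, beta))
print(euler_settling_time(V0, k, beta))
```

The bound grows with \(V_1(\tilde{\textit{u}}(0))\), which is what distinguishes finite-time convergence from the fixed-time convergence proved for the FxtPNN.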

Appendix B

Proof of Lemma 4

Consider the term \(H(\tilde{\textit{u}}_i(t))\). From the definition of \(\varrho (\cdot )\), we have

as a result,

Since the function \(\varrho (\cdot )\) is non-decreasing and \(\alpha _i(s) = \varrho (s+\textit{u}_i^*)-\varrho (\textit{u}_i^*)\), it follows that \(\tilde{\textit{u}}_i(t) \cdot \alpha _i(\tilde{\textit{u}}_i(t)) \ge 0\).

If \(\tilde{\textit{u}}_i(t) \le 0\), then \(\alpha _i(\tilde{\textit{u}}_i(t)) \le 0\) and \(\tilde{\textit{u}}_i(t) \le \alpha _i(\tilde{\textit{u}}_i(t))\), and one obtains

therefore,

Similarly, if \(\tilde{\textit{u}}_i(t) \ge 0\), then \(\alpha _i(\tilde{\textit{u}}_i(t)) \ge 0\) and \(\tilde{\textit{u}}_i(t) \ge \alpha _i(\tilde{\textit{u}}_i(t))\), and we have

Consequently, we can obtain the following:

Therefore, \(0 \le H_i(\tilde{\textit{u}}_i(t)) \le \tilde{\textit{u}}_i^2(t)/2\) for any \(\tilde{\textit{u}}_i(t)\).

By the same argument, the analogous property holds for \(G(\tilde{\textit{u}}(t))\), which completes the proof.
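A numerical sanity check of the bound \(0 \le H_i \le \tilde{\textit{u}}_i^2/2\), assuming that \(H_i\) is the integral of \(\alpha _i(s)\) from 0 to \(\tilde{\textit{u}}_i\) (its defining equation is not reproduced here) and taking \(\varrho\) to be either the unit soft-threshold or the unit saturation; both are hypothetical stand-ins that are non-decreasing and 1-Lipschitz, which is all the argument above uses. The integral is evaluated with the trapezoidal rule.

```python
import numpy as np

def soft_threshold(s):
    # Hypothetical rho: unit soft-threshold
    return np.sign(s) * np.maximum(np.abs(s) - 1.0, 0.0)

def saturation(s):
    # Hypothetical rho: projection onto [-1, 1]
    return np.clip(s, -1.0, 1.0)

def H(x, u_star, rho, n=4001):
    # Trapezoidal estimate of integral_0^x [rho(s + u*) - rho(u*)] ds
    # (for x < 0 the grid runs backwards, giving the correctly signed integral)
    s = np.linspace(0.0, x, n)
    a = rho(s + u_star) - rho(u_star)
    return float(np.sum(0.5 * (a[1:] + a[:-1]) * np.diff(s)))

ok = True
for rho in (soft_threshold, saturation):
    for u_star in (-2.0, -1.0, -0.5, 0.0, 0.5, 1.0, 2.0):
        for x in np.linspace(-3.0, 3.0, 13):
            h = H(x, u_star, rho)
            # Lemma 4: 0 <= H <= x^2 / 2 (small tolerance for quadrature)
            ok &= bool(-1e-12 <= h <= 0.5 * x ** 2 + 1e-9)
print(ok)
```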

Proof of Lemma 5

Consider the bound on \(\vert H_i(\tilde{\textit{u}}_j(t))\vert\). When \(i \ne j\), one obtains

Furthermore, by condition 2 of Lemma 3, \(\tilde{\textit{u}}(t)\), \(\tilde{c}(t)\), and \(\tilde{b}(t)\) are bounded, so there exist three constants \(\tau _1\), \(\tau _2\), and \(\tau _3\) such that the following inequalities hold for each \(\rho _{ij}(s)\) before the FxtPNN (17) converges, i.e., before \(\Vert \tilde{\textit{u}}\Vert _2\) reaches zero:

where \(\tau _1 > 0\), \(\tau _2 > 0\), and \(\tau _3 > 0\).

In addition,

therefore,

According to Lemma 4, one obtains

Letting \(\tau ^{'}=\max \{\tau _1 \tau _2, 1/2\}\), we have

one obtains,

where \(\zeta\) is defined in (56).

Correspondingly, the following conclusion is reached based on the preceding discussion:

where \(\tau ^{''}=\max \{\tau _2 \tau _3, 1/2\}\). Then one obtains

Using Lemma 4, one obtains,

It follows from (38) and (40) that

As a result, there exists a positive constant \(\psi\) such that \(V_2(\tilde{\textit{u}}(t)) \le \psi \Vert \tilde{\textit{u}}(t)\Vert _2^2\), where \(\psi = (\zeta \tau ^{'}(N^2-N)+\tau ^{'}N) + \frac{N}{2} + \tau ^{''}(N^2-N)+\tau ^{''}N\). Since \(\tau ^{'} \ge 1/2\) and \(\tau ^{''} \ge 1/2\), it follows that \(\psi \ge 3N/2\).
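The lower bound \(\psi \ge 3N/2\) can be checked directly from the definitions of \(\tau ^{'}\), \(\tau ^{''}\) and \(\psi\): the terms \(\tau ^{'}N\), \(N/2\) and \(\tau ^{''}N\) alone contribute at least \(3N/2\). The sketch assumes \(\zeta \ge 0\) (its definition in (56) is not shown in this excerpt); the sample inputs are arbitrary, and `psi_constant` is a hypothetical helper name.

```python
def psi_constant(zeta, tau1, tau2, tau3, N):
    # tau' and tau'' as defined in the proof of Lemma 5
    tau_p = max(tau1 * tau2, 0.5)
    tau_pp = max(tau2 * tau3, 0.5)
    # psi = (zeta tau' (N^2 - N) + tau' N) + N/2 + tau'' (N^2 - N) + tau'' N
    return ((zeta * tau_p * (N ** 2 - N) + tau_p * N) + N / 2
            + tau_pp * (N ** 2 - N) + tau_pp * N)

print(psi_constant(0.0, 0.1, 0.1, 0.1, 1))   # 1.5, i.e. equality when N = 1
print(psi_constant(0.0, 0.1, 0.1, 0.1, 4))   # 12.0 >= 3 * 4 / 2
```

Equality \(\psi = 3N/2\) is attained only when \(N = 1\) and both maxima are at their floor of \(1/2\); for \(N > 1\) the \((N^2-N)\) terms make the bound strict.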

Proof of Lemma 6

According to the proof of Theorem 2, the FxtPNN is Lyapunov stable, and by Lemma 5, \(V_2\) is bounded. Hence, by LaSalle's invariance principle [42], the FxtPNN (17) converges to an invariant set L, where

Since the solution of the FxtPNN is unique, the invariant set L has a unique element, namely \(\tilde{\textit{u}}=0\), at which \(V_2(\tilde{\textit{u}})=0\). Accordingly, \(\Vert P\tilde{c}+(I-P)\tilde{b}\Vert ^2_2 \ne 0\) and \(\Vert \tilde{\textit{u}}\Vert ^2_2 \ne 0\) hold before the FxtPNN converges. Under this premise, there exist two constants \(0<\kappa <1\) and o such that

where

with \(o \in (0,1)\).

Proof of Lemma 7

Since \(\tilde{\textit{u}} = \textit{u}(t)-\textit{u}^*\) and \(\textit{u}^*\) is a constant, one obtains

Since \(\textit{u}^*\) is the equilibrium point of FxtPNN (17), one obtains

where \(c^*\) and \(b^*\) are the corresponding equilibrium points, such that

Then, combining (42) and (44), one obtains

By the rule for differentiating an integral with respect to its variable upper limit, we have

and

Therefore,

and

Thus, by (20), we get

Therefore, \(\dot{V}_2 \le 0\).
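The differentiation step used in this proof is the chain rule for an integral with a variable upper limit: \(\frac{d}{dt}\int _0^{\tilde{\textit{u}}_i(t)}\alpha _i(s)\,ds = \alpha _i(\tilde{\textit{u}}_i(t))\,\dot{\tilde{\textit{u}}}_i(t)\). The sketch verifies this by central differences for an integrand of the form \(g(s+\textit{u}_i^*)-g(\textit{u}_i^*)\), assuming a unit soft-threshold \(g\) (so the integral has a closed form) and a hypothetical trajectory \(\tilde{\textit{u}}_i(t) = 1.5\sin t\); neither choice comes from the paper.

```python
import numpy as np

def g(y):
    # Assumed activation: unit soft-threshold
    return np.sign(y) * np.maximum(np.abs(y) - 1.0, 0.0)

def g_antiderivative(y):
    # integral_0^y g(s) ds = (|y| - 1)^2 / 2 outside [-1, 1], and 0 inside
    return 0.5 * np.maximum(np.abs(y) - 1.0, 0.0) ** 2

def V(x, u_star):
    # Closed form of integral_0^x [g(s + u*) - g(u*)] ds
    return g_antiderivative(x + u_star) - g_antiderivative(u_star) - x * g(u_star)

def traj(t):
    # Hypothetical trajectory for u~_i(t)
    return 1.5 * np.sin(t)

def traj_dot(t):
    return 1.5 * np.cos(t)

def check(t0, u_star=0.3, h=1e-6):
    # Central difference of V(traj(t)) versus alpha(traj(t)) * traj_dot(t)
    numeric = (V(traj(t0 + h), u_star) - V(traj(t0 - h), u_star)) / (2.0 * h)
    chain_rule = (g(traj(t0) + u_star) - g(u_star)) * traj_dot(t0)
    return numeric, chain_rule

for t0 in (0.9, 2.0, 4.7):
    print(check(t0))
```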

Proof of Theorem 3

By Lemma 5, one obtains

and, from Lemma 4, we have

so that we can obtain

By Eq. (51), we get

Based on Lemmas 6 and 2, the settling time \(\mathcal {T}\) satisfies

By Lemma 3, \(V_2=0\) for all \(t \ge \mathcal {T}\) and any initial condition.
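The fixed-time argument, which splits the trajectory at the time where \(V_2 = 1\), mirrors the standard Polyakov-type lemma [38]: if \(\dot{V} \le -aV^{p} - bV^{q}\) with \(0<p<1<q\), the settling time is bounded by \(1/(a(1-p)) + 1/(b(q-1))\), independently of \(V(0)\). We assume Lemma 2 has this form; the constants \(a, b, p, q\) below are illustrative stand-ins, not the paper's. The sketch computes the exact settling time of the comparison ODE, \(\int _0^{V(0)} dV/(aV^p+bV^q)\), by the trapezoidal rule after the substitution \(V = e^s\), and shows that it stays below the bound even for very large \(V(0)\).

```python
import numpy as np

def settling_time(V0, a, b, p, q, n=200001):
    # Exact settling time of dV/dt = -a V^p - b V^q from V0 > 0:
    # T(V0) = integral_0^V0 dV / (a V^p + b V^q), computed with V = exp(s);
    # the truncated tail below exp(-60) is negligible for p < 1
    s = np.linspace(-60.0, np.log(V0), n)
    V = np.exp(s)
    f = V / (a * V ** p + b * V ** q)      # integrand after dV = V ds
    return float(np.sum(0.5 * (f[1:] + f[:-1]) * np.diff(s)))

def fixed_time_bound(a, b, p, q):
    # Bound independent of V0, assuming 0 < p < 1 < q
    return 1.0 / (a * (1.0 - p)) + 1.0 / (b * (q - 1.0))

a, b, p, q = 1.0, 1.0, 0.5, 2.0            # illustrative constants
bound = fixed_time_bound(a, b, p, q)
print(bound)                               # 3.0
for V0 in (1e-3, 1.0, 1e3, 1e9):
    print(V0, settling_time(V0, a, b, p, q))
```

For these constants the settling time saturates as \(V(0)\to \infty\) at \(4\pi /(3\sqrt{3}) \approx 2.418\), below the bound of 3, which is the behaviour that distinguishes fixed-time from finite-time convergence.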

From (B31), one obtains

To prove fixed-time convergence, define a positive constant \(t_1\) such that \(V_2(\tilde{\textit{u}}(t_1))=1\). This leads to

The convergence time can then be obtained as follows:

that is,

Meanwhile,

then,

Substituting (60) into (58) yields the convergence time

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Xu, J., Li, C., He, X. et al. Projection neural networks with finite-time and fixed-time convergence for sparse signal reconstruction. Neural Comput & Applic 36, 425–443 (2024). https://doi.org/10.1007/s00521-023-09015-9


DOI: https://doi.org/10.1007/s00521-023-09015-9