Abstract

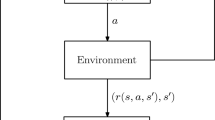

Reinforcement learning (RL) has attracted much attention as a technique for realizing computational intelligence, such as adaptive and autonomous decentralized systems. In general, however, RL is not easy to put to practical use. One difficulty is designing suitable state and action spaces for an agent. Previously, we proposed an adaptive state space construction method, called a “state space filter,” and an adaptive action space construction method, called “switching RL.” Each of these constructs one space only after the other space has been fixed. In this article, we reconstitute the two construction methods as a single combined method that mimics an infant’s perceptual development. In this method, perceptual differentiation progresses as an infant becomes older and more experienced. The infant’s motor development, in which gross motor skills develop before fine motor skills, also progresses. The proposed method is based on introducing and referring to “entropy.” We conducted a computational experiment on a so-called “path planning problem” with continuous state and action spaces, and the results confirm the validity of the proposed method.
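The abstract does not say how entropy enters the method. As a hedged illustration only, this sketch assumes one common choice: the Shannon entropy of a state's Boltzmann (softmax) action-selection distribution, normalized to [0, 1], used as a signal of how settled the agent's policy is in that state. The function names, the temperature, and the 0.5 threshold are hypothetical and are not taken from the article.

```python
import math


def boltzmann_policy(q_values, temperature=1.0):
    """Softmax (Boltzmann) action-selection probabilities for one state."""
    # Subtract the largest Q-value before exponentiating for numerical stability.
    m = max(q_values)
    exps = [math.exp((q - m) / temperature) for q in q_values]
    total = sum(exps)
    return [e / total for e in exps]


def normalized_entropy(probs):
    """Shannon entropy of a distribution, scaled to [0, 1] by dividing by log(n)."""
    n = len(probs)
    if n < 2:
        return 0.0
    h = -sum(p * math.log(p) for p in probs if p > 0.0)
    return h / math.log(n)


# Hypothetical threshold: below it, the policy in this state is treated as settled.
# This value is an assumption for illustration, not a parameter from the article.
ENTROPY_THRESHOLD = 0.5


def is_well_learned(q_values, temperature=1.0, threshold=ENTROPY_THRESHOLD):
    """True when the action distribution in a state is far from uniform."""
    probs = boltzmann_policy(q_values, temperature)
    return normalized_entropy(probs) < threshold


if __name__ == "__main__":
    # Equal Q-values give a uniform distribution, so normalized entropy is 1.
    print(is_well_learned([0.0, 0.0, 0.0, 0.0]))
    # One clearly dominant action gives entropy close to 0.
    print(is_well_learned([10.0, 0.0, 0.0, 0.0]))
```

A near-uniform distribution (normalized entropy close to 1) indicates that the agent does not yet prefer any action in that state. A coarse-to-fine scheme could read this as information about where the state or action partition needs further differentiation, but the abstract does not state how the authors apply the entropy value.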

References

Sutton RS, Barto AG (1998) Reinforcement learning. Bradford Books, MIT Press, Cambridge

Nagayoshi M, Murao H, Tamaki H (2006) A state space filter for reinforcement learning. Proceedings of AROB 11th ’06, pp 615–618 (GS1–3)

Nagayoshi M, Murao H, Tamaki H (2010) A reinforcement learning with switching controllers for continuous action space. Artif Life Robotics 15:97–100

Additional information

This work was presented in part at the 16th International Symposium on Artificial Life and Robotics, Oita, Japan, January 27–29, 2011

About this article

Cite this article

Nagayoshi, M., Murao, H. & Tamaki, H. Adaptive co-construction of state and action spaces in reinforcement learning. Artif Life Robotics 16, 48–52 (2011). https://doi.org/10.1007/s10015-011-0883-2

DOI: https://doi.org/10.1007/s10015-011-0883-2