Abstract

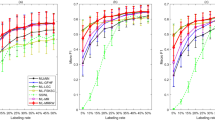

This paper studies semisupervised learning from different information sources. We first offer a theoretical explanation of why minimising the disagreement between individual models could lead to improved performance. Based on this observation, the paper proposes a semisupervised learning approach that attempts to minimise this disagreement by employing a co-updating method and making use of both labeled and unlabeled data. Three experiments testing the effectiveness of the approach are presented: (i) webpage classification from both content and hyperlinks; (ii) functional classification of genes using gene expression data and phylogenetic data; and (iii) machine self-maintenance from both sensory and image data. The results show the effectiveness and efficiency of the approach and suggest its potential for application.
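To make the idea of disagreement between view-specific models concrete, the following is a minimal sketch under stated assumptions. It is not the algorithm of the paper: the abstract does not spell out the co-updating procedure, so the nearest-centroid models, the pseudo-label exchange, the synthetic two-view data, and all names (`fit_centroids`, `disagreement`, `co_update`) are hypothetical choices made purely for illustration.

```python
import random

def fit_centroids(xs, ys):
    """Nearest-centroid model for 1-D features: mean feature value per class."""
    cents = {}
    for label in (0, 1):
        vals = [x for x, y in zip(xs, ys) if y == label]
        cents[label] = sum(vals) / len(vals)
    return cents

def predict(cents, x):
    """Return the class whose centroid is closest to x."""
    return min(cents, key=lambda c: abs(x - cents[c]))

def disagreement(model_a, model_b, view_a, view_b):
    """Fraction of examples on which the two view-specific models differ."""
    diff = sum(predict(model_a, a) != predict(model_b, b)
               for a, b in zip(view_a, view_b))
    return diff / len(view_a)

def co_update(lab_a, lab_b, lab_y, unl_a, unl_b, rounds=5):
    """Illustrative co-updating loop (an assumption, not the paper's method):
    each model is refit on the labeled data plus the unlabeled data
    pseudo-labeled by the model of the other view."""
    model_a = fit_centroids(lab_a, lab_y)
    model_b = fit_centroids(lab_b, lab_y)
    history = [disagreement(model_a, model_b, unl_a, unl_b)]
    for _ in range(rounds):
        pseudo_from_a = [predict(model_a, a) for a in unl_a]
        pseudo_from_b = [predict(model_b, b) for b in unl_b]
        model_a = fit_centroids(lab_a + unl_a, lab_y + pseudo_from_b)
        model_b = fit_centroids(lab_b + unl_b, lab_y + pseudo_from_a)
        history.append(disagreement(model_a, model_b, unl_a, unl_b))
    return model_a, model_b, history

# Synthetic two-view data: each example has one feature per view,
# drawn independently around a class-dependent mean.
rng = random.Random(0)

def sample(y):
    return rng.gauss(2.0 * y, 1.0), rng.gauss(2.0 * y, 1.0)

lab_y = [0, 1, 0, 1]                      # a few labeled examples, both classes
labeled = [sample(y) for y in lab_y]
lab_a = [a for a, _ in labeled]
lab_b = [b for _, b in labeled]

unlabeled = [sample(rng.randint(0, 1)) for _ in range(200)]
unl_a = [a for a, _ in unlabeled]
unl_b = [b for _, b in unlabeled]

model_a, model_b, history = co_update(lab_a, lab_b, lab_y, unl_a, unl_b)
print("disagreement on unlabeled data per round:", history)
```

The printed list tracks how often the two models disagree on the unlabeled examples after each round. Whether disagreement actually falls, and whether accuracy improves with it, depends on the data and on the models; the sketch only shows how such a quantity can be measured and fed back into training.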

Cite this article

Li, T., Ogihara, M. Semisupervised learning from different information sources. Knowl Inf Syst 7, 289–309 (2005). https://doi.org/10.1007/s10115-004-0155-8

DOI: https://doi.org/10.1007/s10115-004-0155-8