Abstract

This paper deals with a continuous-time Markov decision process in Borel state and action spaces and with unbounded transition rates. Under history-dependent policies, the controlled process may not be Markov. The main contribution is that for such non-Markov processes we establish the Dynkin formula, which plays important roles in establishing optimality results for continuous-time Markov decision processes. We further illustrate this by showing, for a discounted continuous-time Markov decision process, the existence of a deterministic stationary optimal policy (out of the class of history-dependent policies) and characterizing the value function through the Bellman equation.

Notes

We do not explicitly indicate the topology of a Borel space.

Below by measurable we always mean Borel-measurable.

It can be easily verified that \(\forall ~(x,a)\in K,\) \(\left( \frac{q(dy|x,a)}{1+\bar{q}_x}+I\{x\in dy\}\right) \) is a probability measure on \((S,{\fancyscript{B}}(S))\).

References

Bertsekas D, Shreve S (1978) Stochastic optimal control. Academic Press, NY

Feinberg E (2004) Continuous time discounted jump Markov decision processes: a discrete-event approach. Math Oper Res 29:492–524

Feinberg E (2012) Reduction of discounted continuous-time MDPs with unbounded jump and reward rates to discrete-time total-reward MDPs. In: Hernández-Hernández D, Minjarez-Sosa A (eds) Optimization, control, and application of stochastic systems, Birkhäuser, Basel, pp 77–97

Guo X (2007) Continuous-time Markov decision processes with discounted rewards: the case of Polish spaces. Math Oper Res 32:73–87

Guo X, Hernández-Lerma O (2009) Continuous-time Markov decision processes: theory and applications. Springer, Heidelberg

Guo X, Piunovskiy A (2011) Discounted continuous-time Markov decision processes with constraints: unbounded transition and loss rates. Math Oper Res 36:105–132

Guo X, Song X (2011) Discounted continuous-time constrained Markov decision processes in Polish spaces. Ann Appl Probab 21:2016–2049

Guo X, Zhu W (2002) Denumerable-state continuous-time Markov decision processes with unbounded transition and reward rates under the discounted criterion. J Appl Probab 39:233–250

Guo X, Hernández-Lerma O, Prieto-Rumeau T (2006) A survey of recent results on continuous-time Markov decision processes. Top 14:177–257

Guo X, Huang Y, Song X (2012) Linear programming and constrained average optimality for general continuous-time Markov decision processes in history-dependent policies. SIAM J Control Optim 50:23–47

Guo X, Vykertas M, Zhang Y (2013) Absorbing continuous-time Markov decision processes with total cost criteria. Adv Appl Probab 45 (to appear)

Hernández-Lerma O, Lasserre JB (1996) Discrete-time Markov control processes. Springer, NY

Hernández-Lerma O, Lasserre JB (1999) Further topics on discrete-time Markov control processes. Springer, NY

Jacod J (1975) Multivariate point processes: predictable projection, Radon–Nikodym derivatives, representation of martingales. Z Wahrscheinlichkeitstheorie verw Gebiete 31:235–253

Kakumanu P (1971) Continuously discounted Markov decision models with countable state and action spaces. Ann Math Statist 42:919–926

Kitaev M (1986) Semi-Markov and jump Markov controlled models: average cost criterion. Theory Probab Appl 30:272–288

Kitaev M, Rykov V (1995) Controlled queueing systems. CRC Press, Boca Raton

Piunovskiy A (1998) A controlled jump discounted model with constraints. Theory Probab Appl 42:51–71

Piunovskiy A, Zhang Y (2011a) Accuracy of fluid approximations to controlled birth-and-death processes: absorbing case. Math Meth Oper Res 73:159–187

Piunovskiy A, Zhang Y (2011b) Discounted continuous-time Markov decision processes with unbounded rates: the convex analytic approach. SIAM J Control Optim 49:2032–2061

Piunovskiy A, Zhang Y (2011c) Discounted continuous-time Markov decision processes with unbounded rates: the dynamic programming approach. http://arxiv.org/abs/1103.0134

Piunovskiy A, Zhang Y (2012) The transformation method for continuous-time Markov decision processes. J Optim Theory Appl 154:691–712

Prieto-Rumeau T, Hernández-Lerma O (2012) Selected topics in continuous-time controlled Markov chains and Markov games. Imperial College Press, London

Puterman M (1994) Markov decision processes: discrete stochastic dynamic programming. Wiley, New York

Yan H, Zhang J, Guo X (2003) Continuous-time Markov decision processes with unbounded transition and discounted-reward rates. Stoch Anal Appl 26:209–231

Appendix

In this appendix, we establish some lemmas, and prove the main statements.

Proof of Theorem 2

Step 1. We prove that Eq. (9) holds for \(r(x):= u(x)I\{x\in S_l\}\), where \(S_l\) is defined in Condition 1. We obviously have

Indeed, by Condition 3(a, b) and Theorem 1(a),

and

It then follows from the previous calculations that

and

Now in order to establish equation (9) for \(r(x)=u(x)I\{x\in S_l\}\), it only remains to integrate formally \(r(\cdot )\) over \(S\) with respect to \(P_x^\pi (\xi _t\in \cdot )\) and use (5) in Theorem 1(b).

Step 2. We prove that Eq. (9) holds for any \(u\in \mathbf{B}_{w^{\prime }}(S)\). By putting \(S_{-1}:= \emptyset \) and observing \(E_x^\pi \left[ \sum _{l=-1}^\infty |u(\xi _t)|I\{\xi _t\in S_{l+1}{\setminus } S_l\}\right] <\infty ,\) we have

where the second-to-last equality follows from formally applying the result obtained in Step 1 of this proof, i.e., (9) holds for \(r(x).\) The interchange of the order of integrations, summations and expectations involved here is legitimate, as can be verified similarly to (16) and (17).

Step 3. We prove that Eq. (10) holds for any \(u\in \mathbf{B}_{w^{\prime }}(S).\) In this proof we repeatedly apply (9) to \(E_x^\pi [u(\xi _t)]\in \mathbf{B}_{w^{\prime }}(S).\) On the one hand, we have

On the other hand, we have the following two observations. Firstly,

where the interchange of the order of integrals in the first and the last equalities is legal as Condition 3(a) and Theorem 1(c) imply that for each \(u\in \mathbf{B}_{w^{\prime }}(S),\)

and

Secondly, integration by parts results in

These two observations, together with the expression for the LHS of (10) obtained in the above, finally lead to

as required. \(\square \)

Lemma 1

Suppose Condition 1(b) and Condition 4 are satisfied. Then for each \(u\in \mathbf{B}_{w}(S),\) the function \(v\) given by

is measurable in \(x\in S\).

Proof

By Remark 3, Condition 1(b) and Condition 4, the proof of Hernández-Lerma and Lasserre (1999, Lem. 8.3.7(a)) shows that \(\forall ~u\in \mathbf{B}_w(S),x\in S,\) the function (see the third entry under Notes) \(\int _S u(y)\left( \frac{q(dy|x,a)}{1+\bar{q}_x}+I\{x\in dy\}\right) \) is lower semicontinuous in \(a\in A(x).\) Indeed, that proof shows that \(\forall ~u\in \mathbf{B}_w(S),\) the function \(\int _S (u(y)+||u||_w w(y))\left( \frac{q(dy|x,a)}{1+\bar{q}_x}+I\{x\in dy\}\right) \) is lower semicontinuous in \(a\in A(x),\) so it remains to apply two facts: the sum of two lower semicontinuous functions is lower semicontinuous, and the function \(-||u||_w \int _Sw(y)\left( \frac{q(dy|x,a)}{1+\bar{q}_x}+I\{x\in dy\}\right) \) is lower semicontinuous in \(a\in A(x).\)

It follows from the above and Condition 4(c) that \(\forall ~ x\in S, u\in \mathbf{B}_{w}(S),\) the function

is lower semicontinuous in \(a\in A(x).\) By Bertsekas and Shreve (1978, Prop. 7.29), \(\forall ~u\in \mathbf{B}_{w}(S),\) the function

is measurable on \(K\). Now it remains to apply Hernández-Lerma and Lasserre (1996, Prop. D.5), see also Bertsekas and Shreve (1978, Prop. 7.33), to obtain the statement of this lemma. \(\square \)

Remark 6

From the above proof, we incidentally obtain that for each \(u\in \mathbf{B}_{w}(S),\) \(\int _S u(y)q(dy|x,a)\) is lower semicontinuous in \(a\in A(x).\)

Proof of Theorem 3

Throughout this proof, \(x\in S\) is arbitrarily fixed. Due to Lemma 1, functions \(u^{(n)},n=0,1,2,\ldots \) are measurable. Now the proof goes in steps.

Step 1. We prove that \(\{u^{(n)},n=0,1,\ldots \}\) is a non-increasing sequence.

Straightforward calculations result in

and thus

where the last inequality follows from Condition 1(b) and Condition 2(c). Now the result of Step 1 follows from this and the monotonicity of the RHS of (12) with respect to \(u^{(n)}\).

Step 2. We prove that \(\forall ~n=0,1,\ldots ,\)

On the one hand, the result of Step 1 implies that for each \(n=0,1,\ldots ,\)

On the other hand, we have that

and thus

where the second inequality is because of Condition 2(c), \(\frac{M(\alpha w(y)+b)}{\alpha (\alpha -\rho )}+\frac{c}{\alpha }\ge 0\) and the fact of \(\frac{q(dy|x,a)}{1+\bar{q}_x}+I\{x\in dy\}\) being a probability measure. It follows now that

where the last inequality follows from Condition 1(b). This and an inductive argument show that \(\forall ~ n=0,1,\ldots , u^{(n)}(x)\ge -\left( \frac{M(\alpha w(x)+b)}{\alpha (\alpha -\rho )}+\frac{c}{\alpha }\right) .\) Thus, Step 2 is completed.

It follows from the results of Step 1 and Step 2 that \(u^*(x)=\lim _{n\rightarrow \infty }u^{(n)}(x)\) exists and \(u^{*}\in \mathbf{B}_{w}(S).\) It remains to prove that \(u^*\) solves the Bellman equation (11). For convenience we introduce the operator \(T\) from \(\mathbf{B}_w(S)\) to \(\mathbf{B}_w(S)\) via

$$\begin{aligned} T\circ u(x):= \inf _{a\in A(x)}\left\{ \frac{c_0(x,a)}{\alpha +1+\bar{q}_x}+\frac{1+\bar{q}_x}{\alpha +1+\bar{q}_x}\int \limits _S u(y)\left( \frac{q(dy|x,a)}{1+\bar{q}_x}+I\{x\in dy\}\right) \right\} , \end{aligned}$$

\(\forall ~x\in S, u\in \mathbf{B}_w(S).\) Clearly, the operator \(T\) is monotone increasing. So on the one hand, by \(u^*(x)\le u^{(n)}(x),\) we have \(T\circ u^*(x)\le T\circ u^{(n)}(x)=u^{(n+1)}(x),\forall ~x\in S, n=0,1,\ldots ,\) where passing to the limit as \(n\rightarrow \infty \) gives \(T\circ u^*(x)\le u^*(x),\forall ~x\in S.\) On the other hand, for each \(a\in A(x),\) \(u^{(n+1)}(x)=T\circ u^{(n)}(x)\le \frac{c_0(x,a)}{\alpha +1+\bar{q}_x}+\frac{1+\bar{q}_x}{\alpha +1+\bar{q}_x}\int \limits _S u^{(n)}(y)\left( \frac{q(dy|x,a)}{1+\bar{q}_x}+I\{x\in dy\}\right) \), where passing to the limit as \(n\rightarrow \infty ,\) which is permitted by the dominated convergence theorem, leads to \(u^*(x)\le \frac{c_0(x,a)}{\alpha +1+\bar{q}_x}+\frac{1+\bar{q}_x}{\alpha +1+\bar{q}_x}\int \limits _S u^*(y)\left( \frac{q(dy|x,a)}{1+\bar{q}_x}+I\{x\in dy\}\right) .\) Taking the infimum over \(a\in A(x)\) yields \(u^*(x)\le T\circ u^{*}(x),\forall ~x\in S.\) Therefore, \(u^*(x)=T\circ u^*(x),\forall ~x\in S,\) i.e.,

$$\begin{aligned} u^*(x)=\inf _{a\in A(x)}\left\{ \frac{c_0(x,a)}{\alpha +1+\bar{q}_x}+\frac{1+\bar{q}_x}{\alpha +1+\bar{q}_x}\int \limits _S u^*(y)\left( \frac{q(dy|x,a)}{1+\bar{q}_x}+I\{x\in dy\}\right) \right\} , \end{aligned}$$

which, under the imposed conditions, is equivalent to the Bellman equation, as can be seen after some rearrangements. \(\square \)
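The successive approximation used in this proof can be tried out on a toy model. The following is a minimal sketch, assuming a hypothetical two-state, two-action model with bounded rates (all numbers and the names `Q`, `C0`, `kernel`, `T`, `iterate` are illustrative and do not come from the paper). It starts from a constant upper bound, applies the uniformized operator repeatedly, and checks that the iterates are non-increasing and that the limit satisfies \(\alpha u(x)=\min _a\{c_0(x,a)+\sum _y q(y|x,a)u(y)\}\).

```python
# Hypothetical two-state, two-action model; every number below is an
# illustrative assumption, not data from the paper.
ALPHA = 0.5                      # discount rate alpha > 0
STATES = [0, 1]
# Q[x][a][y]: transition rate from x to y under action a (rows sum to zero).
Q = {
    0: {0: [-1.0, 1.0], 1: [-3.0, 3.0]},
    1: {0: [2.0, -2.0], 1: [0.5, -0.5]},
}
# C0[x][a]: cost rate c_0(x, a).
C0 = {0: {0: 1.0, 1: 2.5}, 1: {0: 3.0, 1: 4.0}}


def q_bar(x):
    """Largest total jump rate out of x over the available actions."""
    return max(-Q[x][a][x] for a in Q[x])


def kernel(x, a):
    """Row of q(dy|x,a)/(1+q_bar_x) + I{x in dy} as a list over STATES."""
    qb = q_bar(x)
    return [Q[x][a][y] / (1 + qb) + (1.0 if y == x else 0.0) for y in STATES]


def T(u):
    """Uniformized operator: minimize over actions in each state."""
    out = []
    for x in STATES:
        qb = q_bar(x)
        out.append(min(
            C0[x][a] / (ALPHA + 1 + qb)
            + (1 + qb) / (ALPHA + 1 + qb)
            * sum(p * u[y] for y, p in zip(STATES, kernel(x, a)))
            for a in Q[x]
        ))
    return out


def iterate(n_steps=500):
    """Return the list of iterates, starting from the constant max(c_0)/alpha."""
    top = max(c for x in STATES for c in C0[x].values()) / ALPHA
    history = [[top] * len(STATES)]
    for _ in range(n_steps):
        history.append(T(history[-1]))
    return history


def bellman_residual(u):
    """Largest violation of alpha*u(x) = min_a {c_0 + sum_y q u} over states."""
    return max(
        abs(ALPHA * u[x]
            - min(C0[x][a] + sum(Q[x][a][y] * u[y] for y in STATES) for a in Q[x]))
        for x in STATES
    )


history = iterate()
u_star = history[-1]
```

In this finite setting the operator is a contraction, so convergence is automatic; the point of the sketch is only to make the monotone scheme and the rearrangement into the Bellman equation concrete.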

Lemma 2

Suppose Condition 1, Condition 2(a, b, c) and Condition 3(a, b) are satisfied. Then under any policy \(\pi ,\)

where \(u\in \mathbf{B}_{w^{\prime }}(S)\) is an arbitrary function.

Proof

By applying Dynkin’s formula (10), we have

The expectations of all the summands here are finite. According to Theorem 1(c), see also its proof, we can formally add \(E_\gamma ^\pi \left[ \int _0^te^{-\alpha v}\int _A\pi (da|\omega ,v)c_0(\xi _v,a) dv\right] \) to both sides of the above equation and take the limit as \(t\rightarrow \infty \). We emphasize that \(\lim _{t\rightarrow \infty }e^{-\alpha t}E_\gamma ^\pi \left[ u(\xi _t)\right] =0\) because of Theorem 1(a) and Condition 2(b). \(\square \)

The next result is a trivial corollary of Lemma 2. A weaker version, which is restricted to a specific class of Markov policies \(\pi ,\) is also established in Guo (2007, Lem. 5.3) under stronger conditions requiring Condition 4(a, b).

Corollary 1

Suppose Condition 1, Condition 2(b, c) and Condition 3(a, b) are satisfied. Then under any fixed Markov policy \(\pi ,\) \(\forall ~x\in S\), \(u\in \mathbf{B}_{w^{\prime }}(S),\) the following assertions hold.

(a) If \(\alpha u(x)\ge \int _A \pi (da|x,t)c_0(x,a)+\int _S\int _A\pi (da|x,t)q(dy|x,a)u(y),\forall ~x\in S,t\ge 0,\) then \(u(x)\ge V_0(x,\pi ).\)

(b) If \(\alpha u(x)\le \int _A \pi (da|x,t)c_0(x,a)+\int _S\int _A\pi (da|x,t)q(dy|x,a)u(y),\forall ~x\in S,t\ge 0,\) then \(u(x)\le V_0(x,\pi ).\)
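The two comparison statements can be checked by hand in a finite setting. The sketch below assumes a hypothetical two-state model under one fixed stationary policy (a special case of a Markov policy), with generator `Q` and cost vector `C` chosen purely for illustration. It also assumes the standard finite-state fact that the discounted value \(V\) of such a policy solves \((\alpha I-Q)V=C\). Exact rational arithmetic is used so that the inequalities are not blurred by rounding.

```python
from fractions import Fraction as F

# Illustrative assumptions: discount rate, generator and cost rate under one
# fixed stationary policy on the state space {0, 1}.
ALPHA = F(1, 2)
Q = [[F(-1), F(1)], [F(2), F(-2)]]
C = [F(1), F(3)]


def policy_value():
    """Solve (alpha*I - Q) V = C for two states by Cramer's rule."""
    a11, a12 = ALPHA - Q[0][0], -Q[0][1]
    a21, a22 = -Q[1][0], ALPHA - Q[1][1]
    det = a11 * a22 - a12 * a21
    return [(C[0] * a22 - a12 * C[1]) / det, (a11 * C[1] - a21 * C[0]) / det]


def gap(u):
    """alpha*u(x) - c(x) - sum_y q(x, y) u(y) for each state x."""
    return [ALPHA * u[x] - C[x] - sum(Q[x][y] * u[y] for y in range(2))
            for x in range(2)]


V = policy_value()
upper = [F(6), F(6)]   # gap(upper) >= 0, so part (a) predicts upper >= V
lower = [F(2), F(2)]   # gap(lower) <= 0, so part (b) predicts lower <= V
```

Here `gap(upper)` is componentwise nonnegative and `gap(lower)` is componentwise nonpositive, and the computed `V` indeed lies between the two candidates, in line with parts (a) and (b).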

Proof of Theorem 4

(a) Under the conditions of the theorem, \(\mathbf{B}_{w^{\prime }}(S)\subseteq \mathbf{B}_{w}(S).\) So by Remark 6 and Hernández-Lerma and Lasserre (1996, Prop. D.5), there is a (Borel-)measurable selector \(\phi ^*:S\rightarrow A\) whose graph is contained in \(K\) such that \(\alpha u^*(x)=\inf _{a\in A(x)}\left\{ c_0(x,a)+\int _Sq(dy|x,a)u^*(y)\right\} = c_0(x,\phi ^*(x))+\int _Sq(dy|x,\phi ^*(x))u^*(y).\) By Lemma 2 and the definition of the function \(u^*\in \mathbf{B}_{w^{\prime }}(S)\), see Remark 4, for any policy \(\pi ,\)

and \(V_0(x,\phi ^*)= u^*(x),\) where \(x\in S\) is arbitrarily fixed. Thus, the deterministic stationary policy given by \(\phi ^*\) is optimal. From the above argument, the last two assertions are obvious.

Parts (b, c) are trivial consequences of part (a) of this theorem.

(d) We observe that the Bellman function \(u^*(\cdot )\) is feasible for linear program (13). Consider any function \(v(\cdot )\) that is also feasible for linear program (13). By referring to Corollary 1(b), we have that under any Markov policy \(\pi ,\) \(v(x)\le V_0(x,\pi ).\) Now suppose \(\int _S\gamma (dy)v(y)>\int _S\gamma (dy)u^*(y).\) Then there exist some \(\hat{x} \in S\) and constant \(\delta >0\) such that \(u^*(\hat{x})<v(\hat{x})-\delta .\) Hence, \(u^*(\hat{x})<V_0(\hat{x},\pi )-\delta ,\) where \(\pi \) is any Markov policy. But this contradicts part (a) of this theorem. Therefore, any feasible solution \(v\) to linear program (13) satisfies \(\int _S\gamma (dy)v(y)\le \int _S\gamma (dy)u^*(y),\) as required.

(e) From part (d) of this theorem, we know that the optimal value of linear program (13) is given by \(\int _S u^*(y)\gamma (dy).\) Therefore, if some feasible solution \(v\) to linear program (13) satisfies \(u^*(x)=v(x)\) a.s. with respect to \(\gamma \), then it solves the linear program, too. Hence we conclude the sufficiency part of the statement.

As for the necessity, let \(v\) be any optimal solution to linear program (13). Suppose the relation of \(v=u^*\) a.s. with respect to \(\gamma \) is false. Then there exist measurable subsets \(\varGamma _1,\varGamma _2\subseteq S\), such that the following conditions are satisfied: \(\varGamma _1\bigcap \varGamma _2=\emptyset ,\) \(v(x)>u^*(x)\) on \(\varGamma _1,\) \(v(x)<u^*(x)\) on \(\varGamma _2,\) \(v(x)=u^*(x)\) on \(S{\setminus }\varGamma _1\setminus \varGamma _2,\) and the case \(\gamma (\varGamma _1)=\gamma (\varGamma _2)=0\) is excluded. Now let us define a function \(\hat{v}\) by \(\hat{v}(x)=I\{x\in S{\setminus }\varGamma _2\}v(x)+I\{x\in \varGamma _2\}u^*(x),\) which is feasible for linear program (13). Indeed, firstly, it is evident that \(\hat{v}\in \mathbf{B}_{w^{\prime }}(S)\). Secondly, we have that \(\forall ~x\in S{\setminus }\varGamma _2,\)

and \(\forall ~x\in \varGamma _2,\)

However, \(\int _S\hat{v}(y)\gamma (dy)=\int _{S{\setminus }{\varGamma _2}}v(x) \gamma (dx)+\int \limits _{\varGamma _2}u^*(x)\gamma (dx)> \int \limits _Sv(x)\gamma (dx),\) which contradicts the optimality of \(v\) for linear program (13). Now the necessity part follows. \(\square \)

Proof of Proposition 1

(a) We take functions \(w\) and \(w^{\prime }\) in the form

and put \(S_0=\{0\}\), \(S_l=S_0\cup \left( \frac{1}{l+1},1\right] \), \(l=1,2,\ldots .\) Now Condition 1(a, c) is obviously satisfied.

Condition 1(b) can be verified for \(\rho := 4\lambda \) and \(b=0\) as follows:

- if \(x=0\) then

$$\begin{aligned} \int \limits _S q(dy|x,a) w(y)=5\lambda \int \limits _0^1\frac{1}{y^4} y^4 dy-\lambda =4\lambda =\rho w(0); \end{aligned}$$

- if \(x\in (0,1]\) then

$$\begin{aligned} \int \limits _S q(dy|x,a)w(y)=\frac{a}{x} w(0)-\frac{a}{x} w(x)=\frac{a}{x}\left( 1-\frac{1}{x^4}\right) \le 0<\rho w(x). \end{aligned}$$

For Condition 2, it is sufficient to notice that \(\forall ~x\in (0,1],\)

\(\inf _{a\in A(0)}c_0(0,a)=0,\) and \(\alpha >4\lambda =\rho .\)

Condition 3(b, c, d) can be verified similarly to what is presented above by taking \(\rho ^{\prime }=\frac{2\lambda }{3}\), \(b^{\prime }=0\). Since

\(\forall ~x\in (0,1],A(x)=[0,\frac{\bar{A}}{x}]\), and \(A(0)=\{0\},\) we have \(\forall ~x\in (0,1],\bar{q}_x\le \frac{\bar{A}}{x^2}\) and \(\bar{q}_{0}=\lambda .\) From this and trivial calculations, we see that Condition 3(a) is also satisfied.

Finally, Condition 4 obviously holds.
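The drift computations in part (a) can be reproduced with exact arithmetic. The sketch below reads the model off the two displayed integrals for Condition 1(b): from state \(0\) the jump measure has density \(5\lambda y^4\) on \((0,1]\) with \(q(\{0\}|0,a)=-\lambda \), from \(x\in (0,1]\) the process jumps to \(0\) at rate \(a/x\), and \(w(0)=1\), \(w(y)=y^{-4}\). The form \(w^{\prime }(y)=y^{-2}\) with \(w^{\prime }(0)=1\) is an assumption made here because it reproduces the stated \(\rho ^{\prime }=\frac{2\lambda }{3}\). The function names are hypothetical.

```python
from fractions import Fraction as F


def drift_at_zero(power, lam):
    """5*lam * integral_0^1 y^(4 - power) dy - lam * w(0), with w(0) = 1.

    The weight is w(y) = y^(-power) on (0, 1]; the monomial integral
    equals 1 / (4 - power + 1).
    """
    return 5 * lam * F(1, 4 - power + 1) - lam


def drift_at_positive(power, x, a):
    """(a / x) * (w(0) - w(x)) with w(0) = 1 and w(x) = x^(-power)."""
    return (a / x) * (1 - x ** (-power))


lam = F(1)
rho = drift_at_zero(4, lam)        # weight w,  expected 4 * lam
rho_prime = drift_at_zero(2, lam)  # weight w', expected 2 * lam / 3 (assumed form)
```

For \(x\in (0,1]\) the drift is nonpositive for either weight, since \(x^{-k}\ge 1\) there, which is the inequality used in the second bullet.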

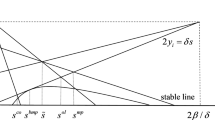

(b) If we denote \(z^{(n+1)}=f(z^{(n)})\) then, for \(z>\frac{\epsilon }{2}>0\), where \(\epsilon >0\) is any fixed constant, the function \(f\) is differentiable:

where

so that \(\forall ~z\in (\frac{\epsilon }{2},\infty ), 0<\frac{df}{dz}<\frac{\lambda }{\alpha +\lambda }<1\).

It remains to estimate \(z^{(1)}\):

for each \(x\in (0,1]\);

because \(\alpha >4\lambda \) and \(C_1<2\alpha \). The map \(z\rightarrow f(z)\) is contracting on \([\epsilon ,\infty )\), e.g., for \(\epsilon =z^{(1)}\). Since

we conclude that \(z^*< \frac{10}{7} C_2\lambda +\frac{\alpha +\lambda }{\alpha }\).

(c) Clearly, the function \(u^*(x)\) (supplemented by \(u^*(0)=1-z^*\)) is bounded; hence \(u^*\in \mathbf{B}_{w^{\prime }}(S)\). Therefore, according to Theorem 4, it is sufficient to check that \(u^*\) solves equation (11) and \(\phi ^*\) provides the infimum.

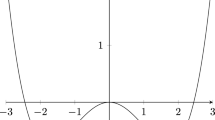

The expression in the parenthesis of (11) equals

and

Therefore,

and \(\phi ^*(x)\) given by (15) provides the infimum. (Note that \(u^*(x)+z^*\ge -2\alpha C_2 x^2+2\sqrt{\alpha ^2 C_2^2 x^4}=0.\)) Finally, at \(x>0\), the RHS of (11) equals \(C_1 x-\frac{(u^*(x)+z^*)^2}{4x^2 C_2}\), and the equation

$$\begin{aligned} \alpha u^*(x)=C_1 x-\frac{(u^*(x)+z^*)^2}{4x^2 C_2} \end{aligned}$$

holds because \(u^*(x)=-2\alpha C_2 x^2-z^*+2\sqrt{\alpha ^2 C_2^2 x^4+C_1C_2 x^3+\alpha C_2 x^2 z^*}.\) \(\square \)
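The last step is a purely algebraic identity: with \(u^*\) given by the closed form above, \(\alpha u^*(x)\) coincides with \(C_1 x-\frac{(u^*(x)+z^*)^2}{4x^2 C_2}\) at every \(x>0\). A quick numerical sanity check is sketched below. The parameter values are arbitrary placeholders chosen so that \(C_1<2\alpha \); in particular `z` is just a positive number standing in for \(z^*\), not the actual fixed point, since the identity does not depend on its value.

```python
import math


def u_star(x, alpha, c1, c2, z):
    """Closed-form candidate u*(x) for x > 0, as stated at the end of the proof."""
    disc = alpha**2 * c2**2 * x**4 + c1 * c2 * x**3 + alpha * c2 * x**2 * z
    return -2 * alpha * c2 * x**2 - z + 2 * math.sqrt(disc)


def rhs(x, alpha, c1, c2, z):
    """C_1 x - (u*(x) + z)^2 / (4 x^2 C_2), the minimized right-hand side."""
    u = u_star(x, alpha, c1, c2, z)
    return c1 * x - (u + z) ** 2 / (4 * x**2 * c2)


# Placeholder parameters (assumptions for illustration only).
PARAMS = dict(alpha=5.0, c1=1.0, c2=2.0, z=1.3)

for x in (0.1, 0.5, 1.0):
    lhs = PARAMS["alpha"] * u_star(x, **PARAMS)
    assert math.isclose(lhs, rhs(x, **PARAMS), rel_tol=1e-9, abs_tol=1e-9)
    # u*(x) + z* is nonnegative, as noted in the proof.
    assert u_star(x, **PARAMS) + PARAMS["z"] >= 0.0
```

Expanding the square by hand gives the same conclusion: the cross term \(-2\alpha \sqrt{\cdot }\) and the terms \(2\alpha ^2C_2x^2+C_1x+\alpha z^*\) combine to \(\alpha u^*(x)\) exactly.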

Cite this article

Piunovskiy, A., Zhang, Y. Discounted continuous-time Markov decision processes with unbounded rates and randomized history-dependent policies: the dynamic programming approach. 4OR-Q J Oper Res 12, 49–75 (2014). https://doi.org/10.1007/s10288-013-0236-1

DOI: https://doi.org/10.1007/s10288-013-0236-1