Abstract

Ever since naive Bayes (NB) demonstrated that excellent classification performance can be achieved with minimal computational overhead, more and more researchers have turned their attention to Bayesian network classifiers (BNCs). Among the numerous approaches to refining NB, averaged one-dependence estimators (AODE) achieves excellent classification performance, although the discriminative independence assumption underlying each of its members rarely holds in practice. With ever-increasing data quantities, a robust AODE with high expressivity and low bias is urgently needed. In this paper, the log-likelihood function \(LL({\mathscr{B}}|D)\) is introduced to measure the number of bits encoded in the network topology \({\mathscr{B}}\) for describing the training data D. An efficient heuristic search strategy is applied to maximize \(LL({\mathscr{B}}|D)\) and to relax the independence assumption of AODE by exploring higher-order conditional dependencies between attributes. The proposed approach, averaged tree-augmented one-dependence estimators (ATODE), inherits the effectiveness of AODE and gains more flexibility in modelling higher-order dependencies. Extensive experimental comparisons on 36 datasets demonstrate that, compared with state-of-the-art learners including single-model BNCs (e.g., CFWNB and SKDB) and variants of AODE (e.g., TAODE), our proposed out-of-core learner achieves competitive or better classification performance.
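As a rough illustration of the scoring idea, the sketch below computes \(LL({\mathscr{B}}|D)\) in bits (base-2 logarithm) for an arbitrary discrete network topology. It assumes maximum-likelihood parameter estimates, and it assumes the topology is given as a mapping from each variable index to its parent set. The function name `log_likelihood_bits`, the data layout and the toy structures are hypothetical. The sketch does not reproduce the paper's heuristic search or the way ATODE builds its members. It only shows how a candidate structure can be scored against training data.

```python
import math
from collections import Counter


def log_likelihood_bits(data, parents):
    """Hypothetical helper: LL(B|D) in bits for a discrete Bayesian network.

    Assumes maximum-likelihood parameters theta(x | pa) = N(x, pa) / N(pa).

    data:    list of tuples, one per training instance (all values discrete).
    parents: dict mapping each variable index to a tuple of parent indices;
             together these parent sets encode the topology B.
    """
    ll = 0.0
    for child, pa in parents.items():
        # Joint counts N(x_child, pa) and parent-configuration counts N(pa).
        joint = Counter((row[child], tuple(row[p] for p in pa)) for row in data)
        marg = Counter(tuple(row[p] for p in pa) for row in data)
        # Each instance contributes log2 theta(x | pa), so summing over
        # instances equals summing N(x, pa) * log2(N(x, pa) / N(pa)) over cells.
        for (x, pa_val), n in joint.items():
            ll += n * math.log2(n / marg[pa_val])
    return ll


if __name__ == "__main__":
    # Hypothetical toy data: column 0 is the class Y, columns 1-3 are attributes.
    toy = [(0, 0, 0, 0), (0, 1, 1, 1), (1, 0, 1, 1), (1, 1, 0, 0)]
    nb = {0: (), 1: (0,), 2: (0,), 3: (0,)}        # naive Bayes structure
    ode = {0: (), 1: (0,), 2: (0, 1), 3: (0, 1)}   # one-dependence, X1 as superparent
    print("NB :", log_likelihood_bits(toy, nb))
    print("ODE:", log_likelihood_bits(toy, ode))
```

Under maximum-likelihood estimates, adding a parent can never lower this score. A one-dependence structure therefore scores at least as high as NB on the same data. This is also why a search that maximizes LL alone tends to favour denser topologies unless the search itself limits which dependencies are added.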

References

Scanagatta M, Salmerón A, Stella F (2019) A survey on Bayesian network structure learning from data. Prog Artif Intell 8(4):425–439

Yuan C, Lim H, Lu TC (2011) Most relevant explanation in Bayesian networks. J Artif Intell Res 42:309–352

Liu D, Huang Y, Yu Q, Chen J, Jia H (2012) A search problem in complex diagnostic Bayesian networks. Knowl-based Syst 30:95–103

Chickering DM, Heckerman D, Meek C (2004) Large-sample learning of Bayesian networks is NP-hard. J Mach Learn Res 5:1287–1330

Bielza C, Larrañaga P (2014) Discrete Bayesian network classifiers: a survey. ACM Comput Surv 47(1):1–43

Jiang L, Cai Z, Wang D, Zhang H (2012) Improving tree augmented naive Bayes for class probability estimation. Knowl-based Syst 26:239–245

Martínez AM, Webb GI, Chen S, Zaidi NA (2016) Scalable learning of Bayesian network classifiers. J Mach Learn Res 17:1515–1549

Wang L, Chen J, Liu Y, Sun M (2020) Self-adaptive attribute value weighting for averaged one-dependence estimators. IEEE Access 8:27887–27900

Jiang L, Zhang L, Li C, Wu J (2019) A correlation-based feature weighting filter for naive Bayes. IEEE Trans Knowl Data Eng 31(2):201–213

Wang L, Wang G, Duan Z, Lou H, Sun M (2019) Optimizing the topology of Bayesian network classifiers by applying conditional entropy to mine causal relationships between attributes. IEEE Access 7:134271–134279

Duan Z, Wang L, Chen S, Sun M (2020) Instance-based weighting filter for superparent one-dependence estimators. Knowl-based Syst 203:106085

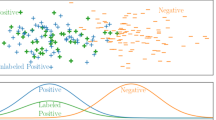

Duan Z, Wang L, Sun M (2020) Efficient heuristics for learning Bayesian network from labeled and unlabeled data. Intell Data Anal 24(2):385–408

Jiang L, Zhang H, Cai Z (2009) A novel Bayes model: hidden naive Bayes. IEEE Trans Knowl Data Eng 21(10):1361–1371

Zaidi NA, Cerquides J, Carman MJ, Webb GI (2013) Alleviating naive Bayes attribute independence assumption by attribute weighting. J Mach Learn Res 14(1):1947–1988

Xiang Z, Yu X, Kang D (2016) Experimental analysis of naive Bayes classifier based on an attribute weighting framework with smooth kernel density estimations. Appl Intell 44(3):611–620

Sun X, Liu Y, Xu M, Chen H, Han J, Wang K (2013) Feature selection using dynamic weights for classification. Knowl-based Syst 37:541–549

Flores MJ, Gámez JA, Martínez AM (2014) Domains of competence of the semi-naive Bayesian network classifiers. Inf Sci 260:120–148

Yang Y, Webb GI, Cerquides J, Korb KB, Boughton J, Ting KM (2007) To select or to weigh: a comparative study of linear combination schemes for superparent-one-dependence estimators. IEEE Trans Knowl Data Eng 19(12):1652–1665

Chen S, Martínez AM, Webb GI, Wang L (2016) Sample-based attribute selective AnDE for large data. IEEE Trans Knowl Data Eng 29(1):172–185

Brain D, Webb GI (1999) On the effect of data set size on bias and variance in classification learning. In: Proceedings of the 4th Australian knowledge acquisition workshop, pp 117–128

Friedman N, Geiger D, Goldszmidt M (1997) Bayesian network classifiers. Mach Learn 29(2-3):131–163

Langley P, Sage S (1994) Induction of selective Bayesian classifiers. In: Proceedings of the 10th international conference on uncertainty in artificial intelligence, pp 399–406

Chen S, Webb GI, Liu L, Ma X (2020) A novel selective naive Bayes algorithm. Knowl-based Syst 192:105361

Jiang L, Zhang L, Yu L, Wang D (2019) Class-specific attribute weighted naive Bayes. Pattern Recognit 88:321–330

Lee C, Gutierrez F, Dou D (2011) Calculating feature weights in naive Bayes with Kullback-Leibler measure. In: Proceedings of IEEE 11th international conference on data mining, pp 1146–1151

Jiang L, Li C, Wang S, Zhang L (2016) Deep feature weighting for naive Bayes and its application to text classification. Eng Appl Artif Intell 52:26–39

Yang Y, Korb K, Ting KM, Webb GI (2005) Ensemble selection for superparent one-dependence estimators. In: Proceedings of 18th Australian joint conference on artificial intelligence, vol 3809, pp 102–112

Zheng F, Webb GI, Suraweera P, Zhu L (2012) Subsumption resolution: an efficient and effective technique for semi-naive Bayesian learning. Mach Learn 87(1):93–125

Jiang L, Zhang H, Cai Z, Wang D (2012) Weighted average of one-dependence estimators. J Exp Theor Artif Intell 24(2):219–230

Yu L, Jiang L, Wang D, Zhang L (2017) Attribute value weighted average of one-dependence estimators. Entropy 19(9):501

Wu J, Cai Z (2011) Learning averaged one-dependence estimators by attribute weighting. J Inf Comput Sci 8(7):1063–1073

Jiang L, Zhang H (2006) Lazy averaged one-dependence estimators. In: Proceedings of the 19th Canadian conference on artificial intelligence, pp 515–525

Wang L, Liu Y, Mammadov M, Sun M, Qi S (2019) Discriminative structure learning of Bayesian network classifiers from training dataset and testing instance. Entropy 21(5):489

Liu Y, Wang L, Mammadov M (2020) Learning semi-lazy Bayesian network classifier under the c.i.i.d assumption. Knowl-based Syst 208:106422

Sahami M (1996) Learning limited dependence Bayesian classifiers. In: Proceedings of the 2nd international conference on knowledge discovery and data mining, pp 335–338

Bache K, Lichman M (2013) UCI Machine Learning Repository, Available online: https://archive.ics.uci.edu/ml/datasets.html

Fayyad U, Irani K (1993) Multi-interval discretization of continuous-valued attributes for classification learning. In: Proceedings of the 13th international joint conference on artificial intelligence, pp 1022–1029

Kohavi R, Wolpert DH (1996) Bias plus variance decomposition for zero-one loss functions. In: Proceedings of the 13th international conference on machine learning, pp 275–283

Hyndman RJ, Koehler AB (2006) Another look at measures of forecast accuracy. Int J Forecast 22(4):679–688

Friedman M (1937) The use of ranks to avoid the assumption of normality implicit in the analysis of variance. J Am Stat Assoc 32(200):675–701

Nemenyi P (1963) Distribution-free multiple comparisons. Ph.D. Thesis, Princeton University, Princeton, NJ, USA

Demšar J (2006) Statistical comparisons of classifiers over multiple data sets. J Mach Learn Res 7:1–30

Acknowledgments

The authors would like to thank the editor and the anonymous reviewers for their insightful comments and suggestions. This work was supported by the Scientific and Technological Developing Scheme of Jilin Province under Grant No. 20200201281JC.

Ethics declarations

Conflict of interests

The authors declare that they have no conflict of interest.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendix

About this article

Cite this article

Kong, H., Shi, X., Wang, L. et al. Averaged tree-augmented one-dependence estimators. Appl Intell 51, 4270–4286 (2021). https://doi.org/10.1007/s10489-020-02064-w

DOI: https://doi.org/10.1007/s10489-020-02064-w