Abstract

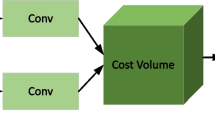

Deep learning-based stereo matching methods have made remarkable progress in recent years, but achieving high accuracy in real time remains challenging. To address this, we propose a Spatial Attention-Guided Upsampling network (SAGU-Net) for accurate, real-time stereo matching. First, a Spatial Attention-Guided Cost Volume Upsampling (SAG-CVU) module upsamples the low-resolution cost volume by computing each upsampled matching cost as the sum of neighboring coarse costs under the guidance of spatial attention. Unlike the recently popular coarse-to-fine (CTF) strategy, which upsamples the coarse disparity map, SAG-CVU upsamples the cost volume itself, so that more raw information propagates to subsequent stages and the loss of high-frequency information is alleviated. To keep the network fast, a medium-resolution disparity map is regressed directly from the upsampled cost volume and then upsampled to full resolution with a Spatial Attention-Guided Disparity Map Upsampling (SAG-DMU) module. Whereas most CTF-based methods build and aggregate narrow cost volumes iteratively until a full-resolution disparity map is obtained, the SAG-DMU module lets the proposed network avoid this iterative procedure. In addition, we propose a simple yet effective gradient loss function that acts as a discontinuity-preserving regularizer and further improves overall accuracy, especially at depth discontinuities. Together, these design choices allow SAGU-Net to obtain accurate results in real time. Extensive experiments demonstrate that SAGU-Net and its variants outperform not only state-of-the-art real-time networks but also many accuracy-oriented models on multiple datasets.
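
The idea of computing each upsampled matching cost from neighboring coarse costs under attention guidance can be illustrated with a minimal pure-Python sketch. This is not the authors' implementation. The function names, the 3×3 neighborhood, the softmax normalization of the attention scores, the restriction to spatial (not disparity) upsampling, and the border clamping are all assumptions made for illustration; in a real network the attention scores would be predicted from image features rather than supplied by hand.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of scores."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def attention_guided_upsample(coarse, logits, scale):
    """Upsample a cost volume spatially by an integer factor (hypothetical sketch).

    coarse: nested list [D][h][w] of low-resolution matching costs.
    logits: nested list [h*scale][w*scale][9] of attention scores, one per
            3x3 coarse neighbor of each fine pixel (assumed layout).
    Returns a nested list [D][h*scale][w*scale].
    """
    D, h, w = len(coarse), len(coarse[0]), len(coarse[0][0])
    H, W = h * scale, w * scale
    offsets = [(dy, dx) for dy in (-1, 0, 1) for dx in (-1, 0, 1)]
    fine = [[[0.0] * W for _ in range(H)] for _ in range(D)]
    for y in range(H):
        for x in range(W):
            # One set of weights per fine pixel, shared across all disparities.
            weights = softmax(logits[y][x])
            cy, cx = y // scale, x // scale  # coarse parent of the fine pixel
            for d in range(D):
                acc = 0.0
                for wgt, (dy, dx) in zip(weights, offsets):
                    # Clamp neighbor indices at the image border (assumption).
                    ny = min(max(cy + dy, 0), h - 1)
                    nx = min(max(cx + dx, 0), w - 1)
                    acc += wgt * coarse[d][ny][nx]
                fine[d][y][x] = acc
    return fine


# Toy usage: one disparity level, 2x2 coarse costs, 2x upsampling.
coarse_costs = [[[1.0, 2.0], [3.0, 4.0]]]
# Scores that strongly favor the center neighbor (index 4).
center_logits = [[[50.0 if k == 4 else -50.0 for k in range(9)]
                  for _ in range(4)] for _ in range(4)]
upsampled = attention_guided_upsample(coarse_costs, center_logits, 2)
```

When the scores strongly favor the center neighbor, as in the toy usage, the result approaches nearest-neighbor upsampling; less peaked scores blend neighboring coarse costs, which is where learned attention could preserve edges that a fixed interpolation kernel would blur.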

Data Availability

Data will be made available on reasonable request.

Acknowledgements

This work was supported in part by the Natural Science Foundation of Shaanxi Province under Grant 2021JQ-487, in part by the Natural Science Fund Project of Hubei Province under Grant 2022CFB538, in part by the Science and Technology Research Project of the Department of Education of Hubei Province under Grant Q20201801, in part by the Scientific and Technological Innovation Programs of Higher Education Institutions in Shanxi under Grant 2022L476, and in part by the Scientific Research Project of Yuncheng University under Grant CY-2019019 and Grant CY-2019022.

Author information

Contributions

Zhong Wu: Conceptualization, Data curation, Investigation, Methodology, Software, Validation, Writing - original draft. Hong Zhu: Funding acquisition, Supervision, Writing - review & editing. Lili He: Investigation, Visualization, Writing - review & editing. Qiang Zhao: Validation. Jing Shi: Funding acquisition, Resources. Wenhuan Wu: Funding acquisition, Investigation.

Ethics declarations

Competing interests

The authors declare that they have no known competing financial interests or personal relationships that could have appeared to influence the work reported in this paper.

About this article

Cite this article

Wu, Z., Zhu, H., He, L. et al. Real-time stereo matching with high accuracy via Spatial Attention-Guided Upsampling. Appl Intell 53, 24253–24274 (2023). https://doi.org/10.1007/s10489-023-04646-w
