Abstract

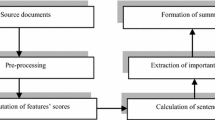

In this paper, we propose adapting the F-measure to evaluate automatic text summaries. The key to our proposal is to show that the automatic summarization task can be modeled as supervised classification. We first compare the automatic summarization task with supervised classification. We then define how to build a confusion matrix, which is the basis of classification evaluation, and compute the F-measure from it. To show that the new measure is valid and trustworthy, we calculate its correlation with ROUGE evaluation. Finally, we analyze and interpret the main results in order to answer the research questions, and we conclude the study with a set of findings based on the collected data.
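As an illustration of the idea, the following minimal sketch treats extractive summarization as binary classification over sentences and derives an F-measure from the resulting confusion matrix. It rests on assumptions not stated in this abstract: that each sentence is labeled "kept" or "discarded", and that a reference extract given as a set of sentence indices is available. The names `confusion_counts` and `f_measure` are hypothetical, and the paper's actual construction of the confusion matrix may differ.

```python
from typing import Dict, Iterable


def confusion_counts(n_sentences: int,
                     selected: Iterable[int],
                     reference: Iterable[int]) -> Dict[str, int]:
    """Count sentence-level agreement between a system extract and a reference.

    Assumption: both extracts are given as indices into the same source text.
    """
    sel, ref = set(selected), set(reference)
    tp = len(sel & ref)               # kept by both system and reference
    fp = len(sel - ref)               # kept by the system only
    fn = len(ref - sel)               # kept by the reference only
    tn = n_sentences - tp - fp - fn   # discarded by both
    return {"tp": tp, "fp": fp, "fn": fn, "tn": tn}


def f_measure(counts: Dict[str, int], beta: float = 1.0) -> float:
    """Standard F-beta score computed from confusion-matrix counts."""
    tp, fp, fn = counts["tp"], counts["fp"], counts["fn"]
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0.0:
        return 0.0
    b2 = beta * beta
    return (1 + b2) * precision * recall / (b2 * precision + recall)


if __name__ == "__main__":
    # Hypothetical 10-sentence document; the indices are made up for illustration.
    counts = confusion_counts(10, selected=[0, 2, 5, 7], reference=[0, 2, 3, 7, 9])
    print(counts, round(f_measure(counts), 4))
```

With these made-up indices, precision is 3/4 and recall is 3/5, so the F1 score is 2/3. Validating such a score against ROUGE, as the abstract describes, would additionally require computing both measures over a set of summaries and correlating them, which this sketch does not cover.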

Acknowledgment

We thank the Directorate General for Scientific Research and Technological Development, Ministry of Higher Education and Scientific Research, for supporting this paper and our laboratory (GeCoDe Lab).

About this article

Cite this article

Boudia, M.A., Hamou, R.M., Amine, A. et al. An adaptation of a F-measure for automatic text summarization by extraction. Cluster Comput 23, 2389–2398 (2020). https://doi.org/10.1007/s10586-019-03019-8

DOI: https://doi.org/10.1007/s10586-019-03019-8