Abstract

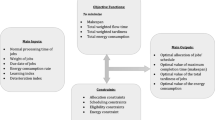

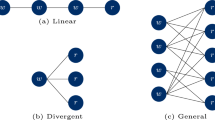

As a promising distributed paradigm, cloud computing provides a cost-effective deployment environment for hosting scientific applications because it provisions elastic, heterogeneous resources under a pay-per-use model. More and more applications modeled as workflows are being moved to the cloud, so both execution time and cost have become important for workflow execution. However, scheduling workflows remains a challenge because of their large scale and complexity, as well as the cloud's dynamic characteristics and differing price quotations. In this work, we propose a Weighted Double Deep Q-Network-based Reinforcement Learning algorithm (WDDQN-RL) for scheduling multiple workflows, which obtains near-optimal solutions in a relatively short time while minimizing both makespan and cost. Specifically, we first introduce a dynamic coefficient-based adaptive balancing method into WDDQN to improve the accuracy of target value estimation by trading off Deep Q-Network (DQN) overestimation against Double Deep Q-Network (DDQN) underestimation. Second, we design pointer network-based agents and a two-level scheduling strategy: at the first level, pointer networks process a variable-size candidate task set, and at the second level, the one selected task is fed to the agents for resource allocation. Third, we present a dynamic sensing mechanism that adjusts the model's attention to each individual objective, increasing the diversity of solutions while guaranteeing their quality. Experimental results show that our algorithm outperforms the benchmark approaches on various indicators.
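The trade-off between the DQN and DDQN target estimates can be illustrated with a small sketch. The abstract does not give the formula for the dynamic coefficient, so the version below takes a weight `beta` as a plain argument; the function name `weighted_target`, its parameters, and the linear blend are assumptions made for illustration and may differ from the rule used in WDDQN-RL.

```python
def weighted_target(reward, gamma, q_online_next, q_target_next, beta, done=False):
    """Blend the DQN and DDQN bootstrap estimates for one transition.

    q_online_next / q_target_next: lists of Q-values for the next state,
    one entry per action, from the online and target networks.
    beta: hypothetical weight in [0, 1]; 1.0 gives the DQN target and
    0.0 gives the DDQN target. How beta is adapted is NOT taken from the paper.
    """
    if not 0.0 <= beta <= 1.0:
        raise ValueError("beta must lie in [0, 1]")
    if done:
        # Terminal transition: nothing to bootstrap from.
        return reward

    # DQN estimate: the target network both selects and evaluates the action,
    # which tends to overestimate.
    dqn_estimate = max(q_target_next)

    # DDQN estimate: the online network selects the action and the target
    # network evaluates it, which tends to underestimate.
    best_action = max(range(len(q_online_next)), key=q_online_next.__getitem__)
    ddqn_estimate = q_target_next[best_action]

    blended = beta * dqn_estimate + (1.0 - beta) * ddqn_estimate
    return reward + gamma * blended


# Toy next-state Q-values for three actions (made-up numbers).
q_online = [1.0, 3.0, 2.0]
q_target = [2.5, 1.5, 2.0]

dqn_only = weighted_target(1.0, 0.9, q_online, q_target, beta=1.0)
ddqn_only = weighted_target(1.0, 0.9, q_online, q_target, beta=0.0)
mixed = weighted_target(1.0, 0.9, q_online, q_target, beta=0.5)
print(ddqn_only, mixed, dqn_only)
```

Because the DDQN estimate evaluates a single action under the target network, it can never exceed the maximum used by DQN, so any `beta` in [0, 1] yields a target between the two.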

Data availability

The data used to support the findings of this study are available from the corresponding author upon request.

Funding

This work is supported in part by the National Key Research and Development Program of China under Grant No. 2018YFB1003700; and in part by the National Natural Science Foundation of China under Grant No. 61836001.

Author information

Contributions

All authors contributed to this work. Conceptualization, methodology, material preparation, data collection, validation, and results analysis were performed by HL, JH, BW, and YF. The original draft of the manuscript was written by HL and JH, and all authors commented on previous versions. All authors read and approved the current version.

Ethics declarations

Conflict of interest

The authors have no conflicts of interest to declare that are relevant to the content of this article.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

About this article

Cite this article

Li, H., Huang, J., Wang, B. et al. Weighted double deep Q-network based reinforcement learning for bi-objective multi-workflow scheduling in the cloud. Cluster Comput 25, 751–768 (2022). https://doi.org/10.1007/s10586-021-03454-6

DOI: https://doi.org/10.1007/s10586-021-03454-6