Abstract

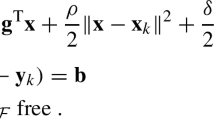

When solving nonlinear least-squares problems, it is often useful to regularize the problem using a quadratic term, a practice which is especially common in applications arising in inverse calculations. A solution method derived from a trust-region Gauss-Newton algorithm is analyzed for such applications. Unlike the standard algorithm, it rewrites the least-squares subproblem solved at each iteration as a quadratic minimization subject to linear equality constraints. This allows the exploitation of duality properties of the associated linearized problems. This paper considers a recent conjugate-gradient-like method which performs the quadratic minimization in the dual space and produces, in exact arithmetic, the same iterates as a standard conjugate-gradient method in the primal space. This dual algorithm is computationally attractive whenever the dimension of the dual space is significantly smaller than that of the primal space, yielding gains in both memory usage and computational cost. The relation between this dual-space solver and PSAS (Physical-space Statistical Analysis System), another well-known dual-space technique used in data assimilation problems, is explained. An effective preconditioning technique is proposed and refined convergence bounds are derived, which results in a practical solution method. Finally, stopping rules adequate for a trust-region solver are proposed in the dual space, providing iterates that are equivalent to those obtained with a Steihaug-Toint truncated conjugate-gradient method in the primal space.
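The NumPy example below illustrates the primal/dual equivalence. It is a minimal sketch built on our own assumptions, not the paper's algorithm:

- The linearized subproblem is taken to be \(\min_x \tfrac12 x^TB^{-1}x + \tfrac12(Hx-d)^TR^{-1}(Hx-d)\), with \(B\) and \(R\) symmetric positive definite.
- The primal solver is conjugate gradients preconditioned by \(B\), started from \(x_0=0\).
- The function names `primal_pcg` and `dual_cg` are hypothetical.

The sketch relies on the fact that every primal iterate lies in the range of \(BH^T\). Each iterate can therefore be written \(x_k = BH^T\hat{x}_k\) with \(\hat{x}_k\in\mathbb{R}^m\), and the recurrences run on \(m\)-vectors using one product with \(HBH^T\) per iteration. The closed form `x_star` is the observation-space expression \(BH^T(R+HBH^T)^{-1}d\) that PSAS-type methods work with.

```python
import numpy as np


def primal_pcg(B, H, Rinv, d, iters):
    """CG on (B^{-1} + H^T R^{-1} H) x = H^T R^{-1} d, preconditioned by B, x0 = 0."""
    A = np.linalg.inv(B) + H.T @ Rinv @ H   # dense primal Hessian, for illustration only
    x = np.zeros(B.shape[0])
    r = H.T @ Rinv @ d                      # residual b - A x0
    z = B @ r                               # preconditioned residual
    p = z.copy()
    rz = r @ z
    iterates = []
    for _ in range(iters):
        Ap = A @ p
        alpha = rz / (p @ Ap)
        x = x + alpha * p
        r = r - alpha * Ap
        z = B @ r
        rz_new = r @ z
        p = z + (rz_new / rz) * p
        rz = rz_new
        iterates.append(x.copy())
    return iterates


def dual_cg(B, H, Rinv, d, iters):
    """Same iterates as primal_pcg, with recurrences on m-vectors.

    Uses x_k = B H^T xh_k, r_k = H^T rh_k and p_k = B H^T ph_k.
    """
    C = H @ B @ H.T            # m x m; in practice applied as an operator
    xh = np.zeros(H.shape[0])
    rh = Rinv @ d              # since r_0 = H^T R^{-1} d
    ph = rh.copy()
    w = C @ rh                 # r^T B r = rh^T C rh
    t = w.copy()               # t = C ph
    rz = rh @ w
    iterates = []
    for _ in range(iters):
        q = ph + Rinv @ t      # A p = H^T q
        alpha = rz / (t @ q)   # p^T A p = t^T q
        xh = xh + alpha * ph
        rh = rh - alpha * q
        w = C @ rh
        rz_new = rh @ w
        beta = rz_new / rz
        ph = rh + beta * ph
        t = w + beta * t
        rz = rz_new
        iterates.append(B @ H.T @ xh)   # mapped back only to compare with the primal run
    return iterates


rng = np.random.default_rng(0)
n, m = 50, 5
M = rng.standard_normal((n, n))
B = M @ M.T / n + np.eye(n)                     # assumed SPD covariance
H = rng.standard_normal((m, n))
Rinv = np.diag(1.0 / rng.uniform(1.0, 2.0, m))  # assumed diagonal R
d = rng.standard_normal(m)

xp = primal_pcg(B, H, Rinv, d, m)
xd = dual_cg(B, H, Rinv, d, m)
x_star = B @ H.T @ np.linalg.solve(np.linalg.inv(Rinv) + H @ B @ H.T, d)
print(max(np.linalg.norm(a - b) for a, b in zip(xp, xd)))
print(np.linalg.norm(xd[-1] - x_star))
```

Here \(m=5\) iterations suffice: the starting vector lies in the \(m\)-dimensional invariant subspace spanned by \(BH^T\). Forming `C` densely and mapping every iterate back through \(BH^T\) are done only to keep the comparison simple.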

Acknowledgements

The third author gratefully acknowledges partial support from CERFACS.

Appendix: Proof of Lemma 2.4

Proof

If the singular value decomposition of H is given by

then a possible theoretical choice for \(\check{H}\) is

Denoting \(\check{R}= ( U_{1}^{T}R^{-1}U_{1} )^{-1}\), direct computations show that

Using the assumption (2.23) and denoting \(\check{G}=U_{1}^{T} G U_{1}\), we also obtain that \(F\check{H}^{T} = B \check{H}^{T} \check{G}\).

The matrix \(\check{H}\) is now an \(r\times n\) matrix of rank r, and Lemma 2.3 can then be applied using r, \(\check{R}\), \(\check{H}\) and \(\check{G}\) instead of m, R, H and G. This yields the desired result, in which \(\nu_1,\ldots,\nu_r\), the eigenvalues of \(\check{G}(I_{r}+\check{R}^{-1}\check{H}B\check{H}^{T})\), replace those of \(G(I_m+R^{-1}HBH^T)\) in (2.48). We next investigate the relations between these two sets of eigenvalues.

Using the relations defining \(\check{H}\), \(\check{R}\) and \(\check{G}\), it can be shown that

where \(\widehat{A}\) is defined in (2.21). This says that the range of \(U_1\) is an invariant subspace of \(U_{1}U_{1}^{T}G\widehat{A}\), so every eigenvalue of \(\check{G}\check{A}\) is an eigenvalue of \(U_{1}U_{1}^{T}G\widehat{A}\). Since \(U_{1}U_{1}^{T}G\widehat{A}\) also has \(m-r\) zero eigenvalues, its nonzero eigenvalues are equal to the eigenvalues of \(\check{G}\check{A}\). We now consider the relations between the eigenvalues of \(U_{1}U_{1}^{T}G\widehat{A}\) and \(G\widehat{A}\), and start by rewriting these matrices blockwise.
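The eigenvalue counting in this paragraph mirrors a standard identity. For an \(m\times r\) matrix \(U_1\) and an \(r\times m\) matrix \(Y\), \(\det(\lambda I_m - U_1Y) = \lambda^{m-r}\det(\lambda I_r - YU_1)\). Hence \(U_1Y\) has the eigenvalues of \(YU_1\) together with \(m-r\) extra zeros. Taking \(Y=U_1^TX\) relates \(U_1U_1^TX\) to the \(r\times r\) matrix \(U_1^TXU_1\). The NumPy check below uses random matrices of arbitrary sizes. The matrix `X` is only a generic stand-in for \(G\widehat{A}\), an assumption made for illustration.

```python
import numpy as np

rng = np.random.default_rng(1)
m, r = 6, 4
U1, _ = np.linalg.qr(rng.standard_normal((m, r)))  # m x r, orthonormal columns
X = rng.standard_normal((m, m))                     # generic stand-in (assumed)

big = U1 @ U1.T @ X     # m x m, rank at most r
small = U1.T @ X @ U1   # r x r compression onto range(U1)

# det(lam I_m - big) = lam^(m-r) * det(lam I_r - small)
p_big = np.poly(big)
p_small_shifted = np.polymul(np.poly(small), np.r_[1.0, np.zeros(m - r)])
print(np.max(np.abs(p_big - p_small_shifted)))
```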

Using the relation (A.1), it can be shown that

Defining

\(HBH^T\) can thus be rewritten in block matrix form as

where \(M_{r} = U_{r} \Sigma_{r}V_{1}^{T}BV_{1}\Sigma_{r}U_{r}^{T} \) has full rank r. Using the equality (2.23), we can write that

Hence, \(HBH^TG\) is symmetric, owing to the symmetry of \(HBH^T\). Using relation (A.5) and defining

where \(G_r\) is an \(r\times r\) matrix and \(G_{m-r}\) is an \((m-r)\times(m-r)\) matrix, we can write \(HBH^TG\) as

From the symmetry of \(HBH^TG\) given by (A.8), we obtain \(M_rG_2=0\). This implies \(G_2=0\), since \(M_r\) is nonsingular. Thus, \(G\) has the form

We next derive a block matrix form of \(\widehat{A}\). Defining \(R^{-1}\) blockwise as

and using (A.5) and the definition of \(\widehat{A}\), we can write \(\widehat{A}\) as

where \(\widehat{A}_{r} = I + R^{-1}_{r}M_{r}\). From (A.4), (A.9) and (A.11), we deduce that

From (A.12), the eigenvalues of \(G\widehat{A}\) are the eigenvalues of \(G_r\widehat{A}_r\) together with those of \(G_{m-r}\). Also, the eigenvalues of \(U_{1}U_{1}^{T}G\widehat{A}\) are the eigenvalues of \(G_r\widehat{A}_r\) together with \(m-r\) zero eigenvalues. Therefore, the nonzero eigenvalues of \(U_{1}U_{1}^{T}G\widehat{A}\) form a subset of the eigenvalues of \(G\widehat{A}\), and these nonzero eigenvalues are equal to the eigenvalues of \(\check{G}\check{A}\). As a result, the eigenvalues of \(\check{G}(I_{r}+\check{R}^{-1}\check{H}B\check{H}^{T})\) can be used in (2.47) instead of those of \(G(I_m+R^{-1}HBH^T)\), which completes the proof. □
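The last step rests on a block-triangular structure: the spectrum of a block-triangular matrix is the union of the spectra of its diagonal blocks. The NumPy check below is a sketch under assumptions inferred from the proof rather than stated in it:

- \(HBH^T=\mathrm{diag}(M_r,0)\) in the coordinates used here.
- \(U_1U_1^T=\mathrm{diag}(I_r,0)\).
- \(\widehat{A}=I_m+R^{-1}HBH^T\).
- \(G\) has a zero upper-right block \(G_2\), and its lower-left block (`G_21` in the comments, our name) is arbitrary.

Sizes and entries are random.

```python
import numpy as np

rng = np.random.default_rng(2)
m, r = 5, 3

# Assumed structures, inferred from the proof (names ours)
Mr = rng.standard_normal((r, r))
Mr = Mr @ Mr.T + r * np.eye(r)             # SPD, hence full rank r
HBHt = np.zeros((m, m))
HBHt[:r, :r] = Mr                          # HBH^T = diag(M_r, 0)
L = rng.standard_normal((m, m))
Rinv = np.linalg.inv(L @ L.T + m * np.eye(m))   # SPD R^{-1}
G = rng.standard_normal((m, m))
G[:r, r:] = 0.0                            # G_2 = 0; G_21 = G[r:, :r] stays random
A_hat = np.eye(m) + Rinv @ HBHt
Ar_hat = np.eye(r) + Rinv[:r, :r] @ Mr     # I + R_r^{-1} M_r
P = np.zeros((m, m))
P[:r, :r] = np.eye(r)                      # U1 U1^T with U1 = [I_r; 0] (assumed)

GA = G @ A_hat                             # block lower-triangular
char_GA = np.poly(GA)
expected = np.polymul(np.poly(G[:r, :r] @ Ar_hat), np.poly(G[r:, r:]))
char_PGA = np.poly(P @ GA)
expected_P = np.polymul(np.poly(G[:r, :r] @ Ar_hat), np.r_[1.0, np.zeros(m - r)])
print(np.max(np.abs(char_GA - expected)), np.max(np.abs(char_PGA - expected_P)))
```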

Cite this article

Gratton, S., Gürol, S. & Toint, P.L. Preconditioning and globalizing conjugate gradients in dual space for quadratically penalized nonlinear-least squares problems. Comput Optim Appl 54, 1–25 (2013). https://doi.org/10.1007/s10589-012-9478-7
