Abstract

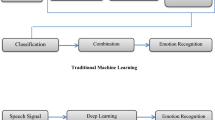

Gender (male/female) classification plays a vital role in developing robust Tamil automatic speech recognition (ASR) applications, because vocal tract characteristics vary widely across speakers. Various features, including formants (F1, F2, F3, F4), zero crossings, and Mel-frequency cepstral coefficients (MFCCs), have appeared in the literature for speech and signal classification and recognition. Dalal and Triggs (2005) proposed the Histogram of Oriented Gradients (HOG), a feature extracted from an image for efficient detection and classification of objects. We extend HOG and apply it to the spectrogram of a speech signal; we call the resulting feature the Spectral Histogram of Oriented Gradients (SHOGs). Results for Tamil-language male/female speaker classification using SHOGs features show a good improvement in classification rate compared with other features. Results from combining various other features with SHOGs are also promising.
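The idea of applying HOG to a spectrogram can be illustrated with a minimal sketch. This is not the authors' implementation: the function names `spectrogram` and `shog`, the frame length, hop size, cell size, and number of orientation bins are all assumptions chosen for illustration, and per-cell normalisation is a simplification of the overlapping-block normalisation used by Dalal and Triggs.

```python
import numpy as np

def spectrogram(signal, frame_len=256, hop=128):
    """Log-magnitude STFT spectrogram with shape (freq_bins, frames).
    frame_len and hop are illustrative values, not taken from the paper."""
    window = np.hanning(frame_len)
    n_frames = 1 + (len(signal) - frame_len) // hop
    frames = np.stack([signal[i * hop:i * hop + frame_len] * window
                       for i in range(n_frames)])
    magnitude = np.abs(np.fft.rfft(frames, axis=1)).T
    return np.log1p(magnitude)

def shog(spec, cell=(8, 8), n_bins=9):
    """HOG-style descriptor computed on a spectrogram treated as an image.
    Hypothetical helper: cell size and bin count are assumptions."""
    # Gradients along the frequency axis (rows) and the time axis (columns).
    d_freq, d_time = np.gradient(spec)
    grad_mag = np.hypot(d_freq, d_time)
    # Unsigned gradient orientation in [0, 180) degrees.
    orientation = np.degrees(np.arctan2(d_freq, d_time)) % 180.0
    bin_idx = np.minimum((orientation / (180.0 / n_bins)).astype(int),
                         n_bins - 1)

    ch, cw = cell
    n_rows, n_cols = spec.shape[0] // ch, spec.shape[1] // cw
    hist = np.zeros((n_rows, n_cols, n_bins))
    for r in range(n_rows):
        for c in range(n_cols):
            block = (slice(r * ch, (r + 1) * ch), slice(c * cw, (c + 1) * cw))
            # Magnitude-weighted orientation histogram for this cell.
            hist[r, c] = np.bincount(bin_idx[block].ravel(),
                                     weights=grad_mag[block].ravel(),
                                     minlength=n_bins)
    # Per-cell L2 normalisation (simplified; no overlapping blocks).
    hist /= np.linalg.norm(hist, axis=2, keepdims=True) + 1e-8
    return hist.ravel()

# Synthetic stand-in for a speech recording: one second of a 120 Hz tone.
fs = 8000
t = np.arange(fs) / fs
tone = np.sin(2 * np.pi * 120 * t)
features = shog(spectrogram(tone))
```

The resulting vector could be passed to any standard classifier, alone or concatenated with features such as MFCCs or formants; the classifier itself is outside the scope of this sketch.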

References

Al-Haddad, S. A. R., Samad, S. A., Hussain, A., & Ishak, K. A. (2008). Isolated Malay digit recognition using pattern recognition fusion of dynamic time warping and hidden Markov models. American Journal of Applied Sciences, 5(6), 714–720.

Anusuya, M. A., & Katti, S. K. (2009). Speech recognition by machine: a review. International Journal of Computer Science and Information Security, 6(3), 181–205.

Boril, H., & Hansen, J. H. L. (2010). Unsupervised equalization of Lombard effect for speech recognition in noisy adverse environments. IEEE Transactions on Audio, Speech, and Language Processing, 18(6), 1379–1393.

Cherif, M., Korba, A., Messadeg, D., Djemili, R., & Bourouba, H. (2008). Robust speech recognition using perceptual wavelet denoising and mel-frequency product spectrum cepstral coefficient features. Informatica, 32, 283–288.

Dalal, N., & Triggs, B. (2005). Histograms of oriented gradients for human detection. In Conference on computer vision and pattern recognition (CVPR).

Dharanipragada, S., Yapanel, U. H., & Rao, B. D. (2007). Robust feature extraction for continuous speech recognition using the MVDR spectrum estimation method. IEEE Transactions on Audio, Speech, and Language Processing, 15(1), 224–234.

Frankel, J., & King, S. (2007). Speech recognition using linear dynamic models. IEEE Transactions on Audio, Speech, and Language Processing, 15(1), 246–256.

Gläser, C., Heckmann, M., Joublin, F., & Goerick, C. (2010). Combining auditory preprocessing and Bayesian estimation for robust formant tracking. IEEE Transactions on Audio, Speech, and Language Processing, 18(2), 224–236.

Jankowski, C. R. Jr., Hoang-Doan, H. V., & Lippmann, R. P. (1995). A comparison of signal processing front ends for automatic word recognition. IEEE Transactions on Speech and Audio Processing, 3(4), 286–293.

Jia, H.-X., & Zhang, Y.-J. (2007). Fast human detection by boosting histograms of oriented gradients. In Proc. IEEE fourth international conference on image and graphics (pp. 683–688).

Kolossa, D., Fernandez Astudillo, R., Hoffmann, E., & Orglmeister, R. (2010). Independent component analysis and time-frequency masking for speech recognition in multitalker conditions. EURASIP Journal on Audio, Speech, and Music Processing, 2010, 651420, pp. 1–13.

Lee, C.-H., Han, C.-C., & Chuang, C.-C. (2008). Automatic classification of bird species from their sounds using two-dimensional cepstral coefficients. IEEE Transactions on Audio, Speech, and Language Processing, 16(8), 1541–1550.

Levy, C., Linares, G., & Bonastre, J.-F. (2009). Compact acoustic models for embedded speech recognition. EURASIP Journal on Audio, Speech, and Music Processing, 2009, 806186, pp. 1–13.

Maier, A., Haderlein, T., Stelzle, F., Noth, E., Nkenke, E., Rosanowski, F., Schutzenberger, A., & Schuster, M. (2010). Automatic speech recognition systems for the evaluation of voice and speech disorders in head and neck cancer. EURASIP Journal on Audio, Speech, and Music Processing, 2010, 926951, pp. 1–7.

Morales, N., Torre Toledano, D., Hansen, J. H. L., & Garrido, J. (2009). Feature compensation techniques for ASR on band-limited speech. IEEE Transactions on Audio, Speech, and Language Processing, 17(4), 758–774.

Morales-Cordovilla, J. A., Peinado, A. M., Sánchez, V., & González, J. A. (2011). Feature extraction based on pitch-synchronous averaging for robust speech recognition. IEEE Transactions on Audio, Speech, and Language Processing, 19(3), 640–651.

Muda, L., Begam, M., & Elamvazuthi, I. (2010). Voice recognition algorithms using mel frequency cepstral coefficient (MFCC) and dynamic time warping (DTW) techniques. Journal of Computing, 2(3), 138–143.

Muthamizh Selvan, A., & Rajesh, R. (2011). Word classification using neural network. In Proc. of international conference on advances in computing and communications (ACC 2011), Part III (pp. 497–502). Berlin: Springer. CCIS 192.

Panagiotakis, C., & Tziritas, G. (2005). A speech/music discriminator based on RMS and zero-crossings. IEEE Transactions on Multimedia, 7(1), 155–166.

Park, H., Takiguchi, T., & Ariki, Y. (2009). Integrated phoneme subspace method for speech feature extraction. EURASIP Journal on Audio, Speech, and Music Processing, 2009, 690451, pp. 1–6.

Pikrakis, A., Giannakopoulos, T., & Theodoridis, S. (2008). A speech/music discriminator of radio recordings based on dynamic programming and Bayesian networks. IEEE Transactions on Multimedia, 10(5), 846–857.

Rajesh, R., Rajeev, K., Gopakumar, V., Suchithra, K., & Lekhesh, V. P. (2011). On experimenting with pedestrian classification using neural network. In Proc. of 3rd international conference on electronics computer technology (ICECT) (pp. 107–111).

Scheirer, E., & Slaney, M. (1997). Construction and evaluation of a robust multifeature speech/music discriminator. International Conference on Acoustics, Speech, and Signal Processing Proceedings (ICASSP), 2, 1331–1334.

Tomasi, C., & Manduchi, R. (1997). Bilateral filtering for gray and color images. In Proc. IEEE int. conference on computer vision.

Wang, N., Ching, P. C., Zheng, N., & Lee, T. (2011). Robust speaker recognition using denoised vocal source and vocal tract features. IEEE Transactions on Audio, Speech, and Language Processing, 19(1), 196–205.

Yin, H., Nadeu, C., & Hohmann, V. (2009). Pitch and formant based order adaptation of the fractional Fourier transform and its application to speech recognition. EURASIP Journal on Audio, Speech, and Music Processing, 2009, 304579, pp. 1–14.

Yu, D., Deng, L., Droppo, J., Wu, J., Gong, Y., & Acero, A. (2008). A minimum-mean-square-error noise reduction algorithm on mel-frequency cepstra for robust speech recognition. In Proc. int. conference on acoustics, speech and signal processing (ICASSP) (pp. 4041–4044).

Zhang, T., & Jay Kuo, C. C. (2001). Audio content analysis for online audiovisual data segmentation and classification. IEEE Transactions on Speech and Audio Processing, 9(4), 441–457.

Acknowledgements

The first author is grateful to the National Testing Service (NTS)-India, Central Institute of Indian Languages (CIIL), Ministry of HRD, Govt. of India, for the valuable fellowship, and thanks the Ph.D. supervisor and Bharathiar University for their valuable support.

About this article

Cite this article

Muthamizh Selvan, A., Rajesh, R. Spectral histogram of oriented gradients (SHOGs) for Tamil language male/female speaker classification. Int J Speech Technol 15, 259–264 (2012). https://doi.org/10.1007/s10772-012-9138-4

DOI: https://doi.org/10.1007/s10772-012-9138-4