Abstract

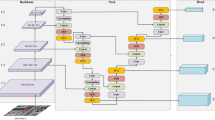

Feature matching establishes point correspondences between two images and plays a vital role in indirect Visual Simultaneous Localization and Mapping (VSLAM), where the system performs data association between image frames through feature matching. Unfiltered matching results contain a large number of incorrect correspondences, and because the accuracy of data association directly determines localization performance, these mismatches must be removed. This paper proposes a convolutional neural network with dense connectivity to eliminate outliers from the matching results. The network repeatedly concatenates low-level and high-level features into a dense feature map, which allows both local and global information to be used for mismatch removal. Furthermore, we integrate an outlier rejection module based on this network into the classic monocular visual odometry (VO) pipeline to enhance the reliability of data association. Experiments on several benchmark datasets show that our method performs favorably against other algorithms, especially in challenging scenes such as low-texture environments. We also verify the performance of our system on a mobile robot in a real-world localization task.
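The abstract describes the dense-connectivity idea only at a high level. The following is a minimal, untrained Python sketch of how dense connectivity can be applied to a set of putative matches, under assumptions the abstract does not state: each match is encoded by its four coordinates (x1, y1, x2, y2); every layer is a shared per-match linear map (equivalent to a 1x1 convolution); global context comes from normalizing each channel across all matches (context normalization, as popularized by Yi et al.'s "Learning to find good correspondences"); and the layer count, growth rate, threshold, and random weights are hypothetical. It illustrates the data flow only, not DenseFilter's actual architecture or trained behavior.

```python
import math
import random


def pointwise_linear(features, weights, bias):
    """Apply the same linear map to every match (equivalent to a 1x1 convolution)."""
    return [[sum(w * v for w, v in zip(row, feat)) + b for row, b in zip(weights, bias)]
            for feat in features]


def context_norm(features, eps=1e-5):
    """Normalize each channel over all N matches so every output carries global context."""
    n, c = len(features), len(features[0])
    means = [sum(f[j] for f in features) / n for j in range(c)]
    stds = [math.sqrt(sum((f[j] - means[j]) ** 2 for f in features) / n + eps) for j in range(c)]
    return [[(f[j] - means[j]) / stds[j] for j in range(c)] for f in features]


def relu(features):
    return [[max(0.0, v) for v in f] for f in features]


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def random_layer(in_ch, out_ch, rng):
    # Hypothetical untrained weights; a real filter would learn these from labeled matches.
    weights = [[rng.gauss(0.0, 1.0 / math.sqrt(in_ch)) for _ in range(in_ch)] for _ in range(out_ch)]
    return weights, [0.0] * out_ch


def concat_maps(feature_maps, n):
    # Concatenate the channel vectors of all feature maps, match by match.
    return [sum((fm[i] for fm in feature_maps), []) for i in range(n)]


def dense_filter_forward(matches, num_layers=3, growth=8, seed=0):
    """Return per-match inlier probabilities and the channel count of the final dense map."""
    rng = random.Random(seed)
    n = len(matches)
    feature_maps = [matches]  # low-level input: (x1, y1, x2, y2) per match
    for _ in range(num_layers):
        # Dense connectivity: each layer sees the input plus every earlier layer's output.
        x = concat_maps(feature_maps, n)
        w, b = random_layer(len(x[0]), growth, rng)
        feature_maps.append(relu(context_norm(pointwise_linear(x, w, b))))
    dense = concat_maps(feature_maps, n)  # low- and high-level features side by side
    w, b = random_layer(len(dense[0]), 1, rng)
    logits = [row[0] for row in pointwise_linear(dense, w, b)]
    return [sigmoid(z) for z in logits], len(dense[0])


def reject_outliers(matches, probs, threshold=0.5):
    """Keep matches whose predicted inlier probability exceeds the (assumed) threshold."""
    return [m for m, p in zip(matches, probs) if p > threshold]


if __name__ == "__main__":
    rng = random.Random(1)
    putative = [[rng.random() for _ in range(4)] for _ in range(50)]
    probs, channels = dense_filter_forward(putative)
    kept = reject_outliers(putative, probs)
    print(f"dense map channels: {channels}, kept {len(kept)} of {len(putative)} matches")
```

With three layers and a growth rate of 8, the final dense map holds 4 + 3 × 8 = 28 channels per match, so the classifier sees the raw coordinates alongside every intermediate representation. In a monocular VO pipeline, the matches retained by `reject_outliers` (a hypothetical helper) would then feed pose estimation, for example essential-matrix estimation with RANSAC, which this sketch does not include.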

Data Availability

Not applicable.

Code Availability

Not applicable.


Funding

This work was supported by the Department of Science and Technology of Guangdong Province (No. 2021B01420003) and the Department of Science and Technology of Foshan Nanhai District (No. 201811020005).

Author information

Contributions

All authors contributed to the methodology conception and design. Data collection and analysis were performed by Jinze Xu and Lingfeng Su. Jinze Xu, Lingfeng Su and Feng Ye wrote the manuscript. Kuo Li and Yizong Lai reviewed and approved the manuscript.

Ethics declarations

Ethical Approval

Not applicable.

Consent to Participate

Not applicable.

Consent to Publish

Not applicable.

Conflict of Interest

The authors declare that they do not have any conflicts of interest.

Additional information

Publisher’s Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Springer Nature or its licensor holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Xu, J., Su, L., Ye, F. et al. DenseFilter: Feature Correspondence Filter Based on Dense Networks for VSLAM. J Intell Robot Syst 106, 18 (2022). https://doi.org/10.1007/s10846-022-01735-9

DOI: https://doi.org/10.1007/s10846-022-01735-9