Abstract

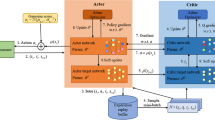

Deep reinforcement learning (DRL) techniques play a vital role in stable motion re-planning for autonomous quadrotors, where reference trajectory tracking must be balanced against flight safety. In this research, we aim to improve traditional DRL strategies with respect to two essential factors: 1) finding the best solution without losing efficiency under unstructured disturbances, e.g., continuously changing wind perturbations or slight collisions; and 2) balancing safety and flight aggressiveness according to the intensity of the wind disturbance and the complexity of the environment. Specifically, to achieve prompt convergence, a reference trajectory with a re-timing publication mechanism was adopted to provide reasonable initial parameters for the proposed DRL model, which improves the optimization performance of the DRL strategies and meets the requirement of smooth flight actions. Furthermore, the problem of motion re-planning in different environments was formulated as a series of partially observable Markov decision processes (POMDPs). Correspondingly, a learned objective function was introduced into the model-agnostic meta-learning (MAML) framework, and a MAML algorithm with a mixed objective function (OMAML) was proposed to solve the resulting POMDPs while also enabling the method to balance flight aggressiveness and safety. Finally, extensive simulation experiments on trajectory tracking and collision avoidance tasks were conducted in comparison with state-of-the-art methods to demonstrate the effectiveness of the proposed method.

Funding

This work was supported by the National Natural Science Foundation of China (61773262, 62006152), and the China Aviation Science Foundation (20142057006).

Author information

Contributions

All authors contributed to the study conception and design. Material preparation, data collection, and analysis were performed by Qiuyu Yu. The first draft of the manuscript was written by Qiuyu Yu and all authors commented on previous versions of the manuscript. The language was checked by Lingkun Luo. All authors read and approved the final manuscript.

Ethics declarations

Ethics Approval

Not applicable.

Consent to Participate

Not applicable

Consent for Publication

Not applicable

Competing interests

The authors have no relevant financial or non-financial interests to disclose.

About this article

Cite this article

Yu, Q., Luo, L., Liu, B. et al. Re-planning of Quadrotors Under Disturbance Based on Meta Reinforcement Learning. J Intell Robot Syst 107, 13 (2023). https://doi.org/10.1007/s10846-022-01788-w

DOI: https://doi.org/10.1007/s10846-022-01788-w