Abstract

Recent years have shown that data pre-processing is an essential task in machine learning modeling, and dimensionality reduction is one of its crucial steps: many datasets used in machine learning are large and contain redundant information, which makes them challenging to manage without reduction and cleaning. In this study, we propose an optimized dimensionality reduction method that combines bivariate copulas with feature selection. We use the copula function as a tool to detect inter-correlation among features and to model their dependency (redundancy). We run our method alongside well-known methods and compare it against them in terms of reduction and classification accuracy using several models.
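To make the idea of copula-based redundancy detection concrete, the following is a minimal sketch and not the GBCFS algorithm itself. It assumes a Gaussian copula for every feature pair, whose parameter is tied to Kendall's tau by ρ = sin(πτ/2), and it assumes a greedy filter with a hypothetical threshold of 0.9. The function names `kendall_tau`, `gaussian_copula_rho`, and `drop_redundant` are illustrative only.

```python
import math
from itertools import combinations


def kendall_tau(x, y):
    """Kendall's tau-a for two equal-length sequences (O(n^2); tied pairs add 0)."""
    n = len(x)
    score = 0
    for i, j in combinations(range(n), 2):
        prod = (x[i] - x[j]) * (y[i] - y[j])
        score += (prod > 0) - (prod < 0)  # +1 concordant, -1 discordant
    return score / (n * (n - 1) / 2)


def gaussian_copula_rho(tau):
    """Gaussian-copula parameter implied by Kendall's tau (assumed copula family)."""
    return math.sin(math.pi * tau / 2)


def drop_redundant(columns, threshold=0.9):
    """Greedy filter: keep a feature only if its |rho| with every
    already-kept feature stays below the (hypothetical) threshold."""
    kept = []
    for name, values in columns.items():
        redundant = any(
            abs(gaussian_copula_rho(kendall_tau(values, columns[k]))) >= threshold
            for k in kept
        )
        if not redundant:
            kept.append(name)
    return kept


if __name__ == "__main__":
    a = [1, 2, 3, 4, 5, 6, 7, 8]
    data = {
        "a": a,
        "b": [v ** 3 for v in a],        # monotone transform of "a": fully dependent
        "c": [3, 8, 1, 6, 2, 7, 4, 5],   # weakly dependent on "a"
    }
    print(drop_redundant(data))
```

Because Kendall's tau depends only on ranks, the nonlinear but monotone feature `b` is flagged as redundant with `a`, which a linear correlation threshold could miss. A faithful implementation would fit and select among several copula families rather than assuming the Gaussian one.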

Data availability

The data that support the findings of this study are openly available in “UCI machine learning repository” (Dua and Graff 2017) at https://archive.ics.uci.edu and LIBSVM repository at https://www.csie.ntu.edu.tw/~cjlin/libsvmtools/datasets/.

References

Abrevaya J (1999) Computation of the maximum rank correlation estimator. Econ Lett 62(3):279–285. https://doi.org/10.1016/S0165-1765(98)00255-9

Agatonovic-Kustrin S, Beresford R (2000) Basic concepts of artificial neural network (ANN) modeling and its application in pharmaceutical research. J Pharm Biomed Anal 22(5):717–727. https://doi.org/10.1016/S0731-7085(99)00272-1

Badakhshan Farahabadi F, Fathi Vajargah K, Farnoosh R (2021) Dimension reduction big data using recognition of data features based on copula function and principal component analysis. Adv Math Phys. https://doi.org/10.1155/2021/9967368

Bao R, Gu B, Huang H (2020) Fast OSCAR and OWL regression via safe screening rules. In: International conference on machine learning. PMLR, pp 653–663

Chatterjee S (2016) fastAdaboost: a fast implementation of adaboost. R package version 1(0)

Dua D, Graff C (2017) UCI machine learning repository. http://archive.ics.uci.edu/ml

Efron B, Hastie T, Johnstone I, Tibshirani R (2004) Least angle regression. Ann Stat 32(2):407–499. https://doi.org/10.1214/009053604000000067

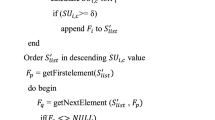

Femmam K, Femmam S (2022) Fast and efficient feature selection method using bivariate copulas. J Adv Inf Technol. 8:9

Filzmoser P, Fritz H, Kalcher K (2021) pcaPP: robust PCA by projection pursuit. R package version 1.9-74

Fonti V, Belitser E (2017) Feature selection using lasso. VU Amsterdam Res Pap Bus Anal 30:1–25

Frank EH (2015) Regression modeling strategies: with applications to linear models, logistic and ordinal regression, and survival analysis. Springer. https://doi.org/10.1007/978-1-4757-3462-1

Fritsch S, Guenther F, Guenther MF (2019) Package ‘neuralnet’. Training of Neural Networks

Genest C, Rémillard B, Beaudoin D (2009) Goodness-of-fit tests for copulas: a review and a power study. Insur Math Econ 44(2):199–213. https://doi.org/10.1016/j.insmatheco.2007.10.005

Hastie T, Efron B (2022) lars: least angle regression, lasso and forward stagewise. https://CRAN.R-project.org/package=lars, R package version 1.3

Hofert M, Kojadinovic I, Maechler M, Yan J (2020) copula: multivariate dependence with copulas. R package version 1.0-1

Houari R, Bounceur A, Kechadi MT, Tari AK, Euler R (2016) Dimensionality reduction in data mining: a Copula approach. Expert Syst Appl 64:247–260. https://doi.org/10.1016/j.eswa.2016.07.041

Jang SW, Lee SH (2020) Feature selection based on Euclid distance and neurofuzzy system. J Adv Inf Technol. https://doi.org/10.12720/jait.11.3.155-160

Kadhum M, Manaseer S, Dalhoum A et al (2021) Evaluation feature selection technique on classification by using evolutionary ELM wrapper method with features priorities. J Adv Inf Technol 12:1

Knight WR (1966) A computer method for calculating Kendall’s tau with ungrouped data. J Am Stat Assoc 61(314):436–439. https://doi.org/10.1080/01621459.1966.10480879

Kuhn M (2022) caret: classification and regression training. R package version 6.0-92

Kuhn M, Johnson K (2019) Feature engineering and selection: a practical approach for predictive models. CRC Press. https://doi.org/10.1201/9781315108230

Lall S, Sinha D, Ghosh A, Sengupta D, Bandyopadhyay S (2021) Stable feature selection using copula based mutual information. Pattern Recogn 112:107697. https://doi.org/10.1016/j.patcog.2020.107697

Liaw A, Wiener M et al (2002) Classification and regression by random forest. R News 2(3):18–22

Lin P, Zhang J, An R (2014) Data dimensionality reduction approach to improve feature selection performance using sparsified SVD. In: 2014 International joint conference on neural networks (IJCNN). IEEE, pp 1393–1400. https://doi.org/10.1109/IJCNN.2014.6889366

Liu C, Yang SX, Deng L (2015) A comparative study for least angle regression on NIR spectra analysis to determine internal qualities of navel oranges. Expert Syst Appl 42(22):8497–8503. https://doi.org/10.1016/j.eswa.2015.07.005

Marill T, Green D (1963) On the effectiveness of receptors in recognition systems. IEEE Trans Inf Theory 9(1):11–17. https://doi.org/10.1109/TIT.1963.1057810

Mesiar R, Sheikhi A (2021) Nonlinear random forest classification: a copula-based approach. Appl Sci 11(15):7140. https://doi.org/10.3390/app11157140

Nelsen RB (2007) An introduction to copulas. Springer

Singh DAAG, Leavline EJ, Priyanka R, Priya PP (2016) Dimensionality reduction using genetic algorithm for improving accuracy in medical diagnosis. Int J Intell Syst Appl 8(1):67. https://doi.org/10.5815/ijisa.2016.01.08

Tiwari R, Singh MP (2010) Correlation-based attribute selection using genetic algorithm. Int J Comput Appl 4(8):28–34

Wickham H, François R, Henry L, Müller K (2022) dplyr: a grammar of data manipulation. R package version 1:9

Funding

The authors declare that no funds, grants, or other support were received during the preparation of this manuscript.

Author information

Contributions

K. Femmam carried out the research under the supervision of B. Brahimi and S. Femmam, and all authors approved the final version.

Ethics declarations

Conflict of interest

The authors have no relevant financial or non-financial interests to disclose.

About this article

Cite this article

Femmam, K., Brahimi, B. & Femmam, S. An optimized feature selection technique based on bivariate copulas “GBCFS”. J Comb Optim 45, 74 (2023). https://doi.org/10.1007/s10878-023-01006-9

DOI: https://doi.org/10.1007/s10878-023-01006-9