Abstract

A genetic algorithm for solving systems of nonlinear equations is presented and analyzed. It uses a self-reproduction operator based on residual approaches, and the elitist model is used to ensure convergence. A convergence analysis is given. To show the advantages of the proposed genetic algorithm, an extensive set of numerical experiments on standard test problems and some specific applications is reported.


References

Floudas, C.A., Pardalos, P.M., Adjiman, C., Esposito, W., Gumus, Z., Harding, S., Klepeis, J., Meyer, C., Schweiger, C.: Handbook of Test Problems in Local and Global Optimization. Kluwer Academic Publishers, Dordrecht (1999). https://doi.org/10.1007/978-1-4757-3040-1

Kelley, C.T.: Iterative Methods for Linear and Nonlinear Equations. SIAM, Philadelphia (1995). https://doi.org/10.1137/1.9781611970944

Ortega, J., Rheinboldt, W.: Iterative solution of nonlinear equations in several variables. SIAM, Philadelphia (2000). https://doi.org/10.1137/1.9780898719468

Strongin, R., Sergeyev, Y.: Global Optimization with Non-Convex Constraints. Sequential and Parallel Algorithms. Nonconvex Optimization and Its Applications. Springer-Science+Business Media, Boston (2000). https://doi.org/10.1007/978-1-4615-4677-1

Yuan, G., Li, T., Hu, W.: A conjugate gradient algorithm for large-scale nonlinear equations and image restoration problems. Applied Numerical Mathematics 147, 129–141 (2020). https://doi.org/10.1016/j.apnum.2019.08.022

Yuan, G., Zhang, M.: A three-terms Polak-Ribière-Polyak conjugate gradient algorithm for large-scale nonlinear equations. Journal of Computational and Applied Mathematics 286, 186–195 (2015). https://doi.org/10.1016/j.cam.2015.03.014

Khamisov, O.: Finding roots of nonlinear equations using the method of concave support functions. Math. Notes 98, 484–491 (2015). https://doi.org/10.1134/S000143461509014X

Gruzdeva, T., Khamisov, O.: In: Olenev, N., Evtushenko, Y., Jaćimović, M., Khachay, M., Malkova V. (eds.) Optimization and Applications, pp. 110–120. Springer International Publishing, Cham (2021). https://doi.org/10.1007/978-3-030-91059-4_8

Goldberg, D.: Genetic Algorithms in Search, Optimization and Machine Learning, 1st edn. Addison-Wesley, Reading, Massachusetts (1989)

Katoch, S., Chauhan, S.S., Kumar, V.: A review on genetic algorithm: past, present, and future. Multimed Tools Appl. 80, 8091–8126 (2021). https://doi.org/10.1007/s11042-020-10139-6

Ss, V., Mishra, D.: Variable search space converging genetic algorithm for solving system of non-linear equations. Journal of Intelligent Systems 30, 142–164 (2021). https://doi.org/10.1515/jisys-2019-0233

Mangla, C., Ahmad, M., Uddin, M.: Solving system of nonlinear equations using genetic algorithm. Journal of Computer and Mathematical Sciences 10(4), 877–886 (2019). https://doi.org/10.29055/jcms/1072

Mangla, C., Bhasin, H., Ahmad, M., U. M.: Novel Solution of Nonlinear Equations Using Genetic Algorithm, pp. 249–257. Springer, Singapore, (2017). Industrial and Applied Mathematics. https://doi.org/10.1007/978-981-10-3758-0_17

Gopesh, J., Bala, K.M.: Solving system of non-linear equations using Genetic Algorithm, pp. 1302–1308. (2014), 2014 International Conference on Advances in Computing, Communications and Informatics (ICACCI). https://doi.org/10.1109/ICACCI.2014.6968423

Ren, H., Wu, L., Bi, W., Argyros, I.: Solving nonlinear equations system via an efficient genetic algorithm with symmetric and harmonious individuals. Applied Mathematics and Computation 219(23), 10967–10973 (2013). https://doi.org/10.1016/j.amc.2013.04.041

Pourrajabian, A., Ebrahimi, R., Mirzaei, M., Shams, M.: Applying genetic algorithms for solving nonlinear algebraic equations. Applied Mathematics and Computation 219, 11483–11494 (2013). https://doi.org/10.1016/j.amc.2013.05.057

Duan-Cai, Y.: Hybrid genetic algorithm for solving systems of nonlinear equations. Jisuan Lixue Xuebao/Chinese Journal of Computational Mechanics 22(1), 109–114 (2005)

La Cruz, W., Raydan, M.: Nonmonotone spectral methods for large-scale nonlinear systems. Optimization Methods & Software 18, 583–599 (2003). https://doi.org/10.1080/10556780310001610493

La Cruz, W., Martínez, J.M., Raydan, M.: Spectral residual method without gradient information for solving large-scale nonlinear systems of equations. Math. Comput. 75, 1429–1448 (2006). https://doi.org/10.1090/S0025-5718-06-01840-0

La Cruz, W.: A spectral algorithm for large-scale systems of nonlinear monotone equations. Numer. Algor. 76, 1109–1130 (2017). https://doi.org/10.1007/s11075-017-0299-8

Kauffman, S.A.: The Origins of Order. Self-Organization and Selection in Evolution. Oxford University Press, New York (1993). https://doi.org/10.1142/9789814415743_0003

Srikant, R.: The Mathematics of Internet Congestion Control. Birkhäuser, Boston (2004). https://doi.org/10.1007/978-0-8176-8216-3

Cortes, J., Martinez, S., Karatas, T., Bullo, F.: Coverage control for mobile sensing networks. IEEE Transactions on Robotics and Automation 20(2), 243–255 (2004). https://doi.org/10.1109/TRA.2004.824698

Zhao, C., Topcu, U., Li, N., Low, S.: Design and stability of loadside primary frequency control in power systems. IEEE Transactions on Automatic Control 59(5), 1177–1189 (2014). https://doi.org/10.1109/TAC.2014.2298140

Wright, A.: Genetic algorithms for real parameter optimization. Foundations of Genetic Algorithms 1, 205–218 (1991). https://doi.org/10.1016/b978-0-08-050684-5.50016-1

Davis, L.: The Handbook of Genetic Algorithms. Van Nostrand Reinhold, New York (1991)

Michalewicz, Z.: Genetic Algorithms + Data Structures = Evolution Programs, 3rd edn. Springer-Verlag, New York (1996). https://doi.org/10.1007/978-3-662-03315-9

De Jong, K.A., Spears, W.M.: A formal analysis of the role of multi-point crossover in genetic algorithms. Ann. Math. Artif. Intell. 5, 1–26 (1992). https://doi.org/10.1007/BF01530777

Spall, J.C.: Introduction to Stochastic Search and Optimization: Estimation, Simulation, and Control. Wiley-Interscience series in discrete mathematics and optimization. Wiley-Interscience, New Jersey (2003). https://doi.org/10.1002/0471722138

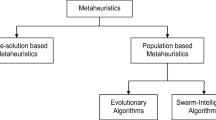

Bozorg-Haddad, O., Solgi, M., Loáiciga, H.A.: Meta-Heuristic and Evolutionary Algorithms for Engineering Optimization. Wiley Series in Operations Research and Management Science. John Wiley & Sons, New Jersey (2017). https://doi.org/10.1007/978-0-8176-8216-3

Barzilai, J., Borwein, J.: Two point step gradient methods. IMA J. Numer. Anal. 8, 141–148 (1988). https://doi.org/10.1093/imanum/8.1.141

Dai, Y.H., Liao, L.Z.: R-linear convergence of the Barzilai and Borwein gradient method. IMA J. Numer. Anal. 22, 1–10 (2002). https://doi.org/10.1093/imanum/22.1.1

Fletcher, R.: On the Barzilai-Borwein method, Springer, Boston (2005), Applied Optimization, vol. 96, chap. 10, pp. 235–256. https://doi.org/10.1007/0-387-24255-4_10

Raydan, M.: On the Barzilai and Borwein choice of the steplength for the gradient method. IMA J. Numer. Anal. 13, 321–326 (1993). https://doi.org/10.1093/imanum/13.3.321

Dekking, F.M., Kraaikamp, C., Lopuhaä, H.P., Meester, L.E.: A Modern Introduction to Probability and Statistics. Understanding Why and How. Springer, London (2005). https://doi.org/10.1007/1-84628-168-7

Blank, J., Deb, K.: Pymoo: Multi-objective optimization in python. IEEE Access 8, 89497–89509 (2020). https://doi.org/10.1109/ACCESS.2020.2990567

Rahnamayan, S., Tizhoosh, H.R., Salama, M.M.A.: A novel population initialization method for accelerating evolutionary algorithms. Computers & Mathematics with Applications 53(10), 1605–1614 (2007). https://doi.org/10.1016/j.camwa.2006.07.013

Dolan, E.D., Moré, J.J.: Benchmarking optimization software with performance profiles. Math. Program. 91, 201–213 (2002). https://doi.org/10.1007/s101070100263

He, J., Lin, G.: Average convergence rate of evolutionary algorithms. IEEE Transactions on Evolutionary Computation 20(3), 316–321 (2016). https://doi.org/10.1109/tevc.2015.2444793

Antoniou, A., Lu, W.S.: Practical Optimization: Algorithms and Engineering Applications. Springer, New York (2007). https://doi.org/10.1007/978-0-387-71107-2

Jakovetić, D., Moura, J., Xavier, J.: in 51st IEEE Conference on Decision and Control, ed. by H. Maui (2012), pp. 5459–5464. https://doi.org/10.1109/CDC.2012.6425938

Xiao, L., Boyd, S.: Optimal scaling of a gradient method for distributed resource allocation. J. Optim Theory Appl. 129(3), 469–488 (2006). https://doi.org/10.1007/s10957-006-9080-1

Grosan, C., Abraham, A.: A new approach for solving nonlinear equations systems. IEEE Transactions on Systems, Man, and Cybernetics - Part A: Systems and Humans 38(3), 698–714 (2008). https://doi.org/10.1109/tsmca.2008.918599

Hong, H., Stahl, V.: Safe starting regions by fixed points and tightening. Computing 53(3–4), 323–335 (1994). https://doi.org/10.1007/bf02307383

Hentenryck, P.V., McAllester, D., Kapur, D.: Solving polynomial systems using a branch and prune approach. SIAM J. Numer. Anal. 34(2), 797–827 (1997). https://doi.org/10.1137/S0036142995281504

Morgan, A.: Solving Polynomial Systems Using Continuation for Engineering and Scientific Problems. SIAM, Philadelphia (2009). https://doi.org/10.1137/1.9780898719031

Moré, J., Garbow, B., Hillstrom, K.: Testing unconstrained optimization software. ACM Transactions on Mathematical Software 7(1), 17–41 (1981). https://doi.org/10.1145/355934.355936

Gasparo, M.: A nonmonotone hybrid method for nonlinear systems. Optimization Meth. & Soft. 13, 79–94 (2000). https://doi.org/10.1080/10556780008805776

Gomez-Ruggiero, M., Martínez, J.M., Moretti, A.: Comparing algorithms for solving sparse nonlinear systems of equations. SIAM J. Sci. Comp. 13(2), 459–483 (1992). https://doi.org/10.1137/0913025

Raydan, M.: The Barzilai and Borwein gradient method for the large scale unconstrained minimization problem. SIAM J. Opt. 7, 26–33 (1997). https://doi.org/10.1137/S1052623494266365

Bing, Y., Lin, G.: An efficient implementation of Merrill’s method for sparse or partially separable systems of nonlinear equations. SIAM Journal on Optimization 2, 206–221 (1991). https://doi.org/10.1137/0801015

Incerti, S., Zirilli, F., Parisi, V.: Algorithm 111: A Fortran subroutine for solving systems of nonlinear simultaneous equations. Computer Journal 24, 87–91 (1981)

Alefeld, G., Gienger, A., Potra, F.: Efficient validation of solutions of nonlinear systems. SIAM Journal on Numerical Analysis 31, 252–260 (1994). https://doi.org/10.1137/0731013

Roberts, S., Shipman, J.: On the closed form solution of Troesch’s problem. Journal of Computational Physics 21(3), 291–304 (1976). https://doi.org/10.1016/0021-9991(76)90026-7

Zhou, W., Li, D.: A globally convergent BFGS method for nonlinear monotone equations without any merit functions. Math. Comput. 77(264), 2231–2240 (2008). https://doi.org/10.1090/S0025-5718-08-02121-2

Acknowledgements

We are grateful to two anonymous referees for their valuable suggestions which greatly improved the quality and presentation of this paper.


Availability of data and materials

Not applicable.


Appendix A Test problems


This appendix provides the test functions \(F({\mathbf {x}})=(f_{1}({\mathbf {x}}),\ldots ,f_{n}({\mathbf {x}}))^{\top }\), and the vectors \({\mathbf {l}}=(l_{1},\ldots ,l_{n})^{\top }\) and \({\mathbf {u}}=(u_{1},\ldots ,u_{n})^{\top }\) used to generate the initial population \(P_{0}\) of the genetic algorithms.
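The appendix states only that \({\mathbf {l}}\) and \({\mathbf {u}}\) are used to generate \(P_{0}\); the sampling rule itself is not given here. A minimal sketch, assuming each coordinate of an individual is drawn independently and uniformly from \([l_{j},u_{j}]\) (the function name `initial_population`, the population size and the seed are illustrative choices, not taken from the paper):

```python
import random


def initial_population(lower, upper, size, seed=0):
    """Draw `size` individuals uniformly at random from the box [lower, upper].

    Assumption: uniform sampling per coordinate; the paper's actual
    initialization rule may differ.
    """
    if len(lower) != len(upper):
        raise ValueError("lower and upper must have the same length")
    rng = random.Random(seed)  # seeded generator for reproducible runs
    return [
        [rng.uniform(lo, hi) for lo, hi in zip(lower, upper)]
        for _ in range(size)
    ]


# Bounds listed for Function 1 in this appendix: l = (-2, -2), u = (6, 6).
population = initial_population([-2.0, -2.0], [6.0, 6.0], size=20)
```

Each individual is a plain list of \(n\) floats, so a test function from the list that follows can be evaluated on it directly.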

1. Function 1 [15]

$$\begin{aligned} f_{1}({\mathbf {x}})&= x_1 - x_2 + 1 + \frac{1}{9}\left| x_1 - 1\right| , \\ f_{2}({\mathbf {x}})&= x_2^2 + x_1 - 7 + \frac{1}{9}\left| x_2\right| ,\\ {\mathbf {l}}&= (-2,-2)^{\top },\quad {\mathbf {u}}=(6,6)^{\top } \end{aligned}$$

2. Function 2 [43]

$$\begin{aligned} f_{1}({\mathbf {x}})&= \cos (2x_1) - \cos (2x_2) - 0.4, \\ f_{2}({\mathbf {x}})&= 2(x_2-x_1) + \sin (2x_2) - \sin (2x_1) - 1.2,\\ {\mathbf {l}}&= (-1,-1)^{\top },\quad {\mathbf {u}}=(1,1)^{\top } \end{aligned}$$

3. Function 3 [43]

$$\begin{aligned} f_{1}({\mathbf {x}})&= e^{x_1} + x_1\,x_2 - 1, \\ f_{2}({\mathbf {x}})&= \sin (x_1\,x_2) + x_1 + x_2 - 1,\\ {\mathbf {l}}&= (-2,-2)^{\top },\quad {\mathbf {u}}=(2,2)^{\top } \end{aligned}$$

4. Function 4 [15]

$$\begin{aligned} f_{1}({\mathbf {x}})&= 3x_1^2 + \sin (x_1\,x_2) - x_3^2 + 2, \\ f_{2}({\mathbf {x}})&= 2x_1^2 - x_2^2 - x_3 + 3,\\ f_{3}({\mathbf {x}})&= \sin (2x_1) + \cos (x_1\,x_2) + x_2 - 1,\\ {\mathbf {l}}&= (-5,-1,-5)^{\top },\quad {\mathbf {u}}=(5,3,5)^{\top } \end{aligned}$$

5. Interval Arithmetic Benchmark [44]

$$\begin{aligned} f_{1}({\mathbf {x}})&= x_1 - 0.25428722 - 0.18324757x_4x_3x_9, \\ f_{2}({\mathbf {x}})&= x_2 - 0.37842197 - 0.16275449x_1x_{10}x_6,\\ f_{3}({\mathbf {x}})&= x_3 - 0.27162577 - 0.16955071x_1x_2x_{10},\\ f_{4}({\mathbf {x}})&= x_4 - 0.19807914 - 0.15585316x_7x_1x_6,\\ f_{5}({\mathbf {x}})&= x_5 - 0.44166728 - 0.19950920x_7x_6x_3,\\ f_{6}({\mathbf {x}})&= x_6 - 0.14654113 - 0.18922793x_8x_5x_{10},\\ f_{7}({\mathbf {x}})&= x_7 - 0.42937161 - 0.21180486x_2x_5x_8,\\ f_{8}({\mathbf {x}})&= x_8 - 0.07056438 - 0.17081208x_1x_7x_6,\\ f_{9}({\mathbf {x}})&= x_9 - 0.34504906 - 0.19612740x_{10}x_6x_8,\\ f_{10}({\mathbf {x}})&= x_{10} - 0.42651102 - 0.21466544x_4x_8x_1,\\ {\mathbf {l}}&=(-5,-5,\ldots ,-5)^{\top },\quad {\mathbf {u}}=(5,5,\ldots ,5)^{\top } \end{aligned}$$

6. Combustion problem [45]

$$\begin{aligned} f_{1}({\mathbf {x}})&= x_2 + 2x_6 + x_9 + 2x_{10} - 10^{-5}, \\ f_{2}({\mathbf {x}})&= x_3 + x_8 - 3\cdot 10^{-5},\\ f_{3}({\mathbf {x}})&= x_1 + x_3 + 2x_5 + 2x_8 + x_9 + x_{10} - 5\cdot 10^{-5},\\ f_{4}({\mathbf {x}})&= x_4 + 2x_7 - 10^{-5},\\ f_{5}({\mathbf {x}})&= 0.5140437\cdot 10^{-7} - x_1^2,\\ f_{6}({\mathbf {x}})&= 0.1006932\cdot 10^{-6}x_6 - 2x_2^2,\\ f_{7}({\mathbf {x}})&= 0.7816278\cdot 10^{-15}x_7 - x_4^2,\\ f_{8}({\mathbf {x}})&= 0.1496236\cdot 10^{-6}x_8 - x_1x_3,\\ f_{9}({\mathbf {x}})&= 0.6194411\cdot 10^{-7}x_9 - x_1x_2,\\ f_{10}({\mathbf {x}})&= 0.2089296\cdot 10^{-14}x_{10} - x_1x_2^2,\\ {\mathbf {l}}&=\left( -\frac{1}{4},-\frac{1}{4},\ldots ,-\frac{1}{4}\right) ^{\top },\quad {\mathbf {u}}=\left( \frac{1}{4},\frac{1}{4},\ldots ,\frac{1}{4}\right) ^{\top } \end{aligned}$$

7. Neurophysiology problem [45]

$$\begin{aligned} f_{1}({\mathbf {x}})&= x_1^2 + x_3^2 - 1, \\ f_{2}({\mathbf {x}})&= x_2^2 + x_4^2 - 1,\\ f_{3}({\mathbf {x}})&= x_5x_3^3 + x_6x_4^3,\\ f_{4}({\mathbf {x}})&= x_5x_1^3 + x_6x_2^3,\\ f_{5}({\mathbf {x}})&= x_5x_1x_3^2 + x_6x_4^2x_2,\\ f_{6}({\mathbf {x}})&= x_5x_1^2x_3 + x_6x_2^2x_4,\\ {\mathbf {l}}&=\left( -1,-1,\ldots ,-1\right) ^{\top },\quad {\mathbf {u}}=\left( 1,1,\ldots ,1\right) ^{\top } \end{aligned}$$

8. Chemical equilibrium problem [45]

$$\begin{aligned} f_{1}({\mathbf {x}})&= x_1x_2 + x_1 - 3x_5, \\ f_{2}({\mathbf {x}})&= 2x_1x_2 + x_1 + x_2x_3^2 + R_8x_2 - Rx_5 + 2R_{10}x_2^2\\&\qquad + R_7x_2x_3 + R_9x_2x_4,\\ f_{3}({\mathbf {x}})&= 2x_2x_3^2 + 2R_5x_3^2 - 8x_5 + R_6x_3 + R_7x_2x_3,\\ f_{4}({\mathbf {x}})&= R_9x_2x_4 + 2x_4^2 - 4Rx_5,\\ f_{5}({\mathbf {x}})&= x_1(x_2 + 1) + R_{10}x_2^2 + x_2x_3^2 + R_8x_2\\&\qquad +R_5x_3^2 + x_4^2 - 1 + R_6x_3 + R_7x_2x_3 + R_9x_2x_4,\\ {\mathbf {l}}&=\left( -1,-1,\ldots ,-1\right) ^{\top },\quad {\mathbf {u}}=\left( 1,1,\ldots ,1\right) ^{\top }, \end{aligned}$$

where

$$\begin{aligned} \begin{array}{llll} R = 10, &{} R_5 = 0.193, &{} R_6 = \frac{0.002597}{\sqrt{40}}, &{} R_7 = \frac{0.003448}{\sqrt{40}}, \\ R_8 = \frac{0.00001799}{40}, &{} R_9 = \frac{0.0002155}{\sqrt{40}}, &{} R_{10} = \frac{0.00003846}{40} \end{array} \end{aligned}$$

9. Kinematic application [45]

$$\begin{aligned} f_{i}({\mathbf {x}})&= x_i^2 + x_{i+1}^2 - 1,\quad \text{ for } i=1,2,3,4,\\ f_{4+i}({\mathbf {x}})&= a_{1i}x_1x_3 + a_{2i}x_1x_4 + a_{3i}x_2x_3 + a_{4i}x_2x_4\\&\qquad + a_{5i}x_2x_7 + a_{6i}x_5x_8 + a_{7i}x_6x_7 \\&\qquad + a_{8i}x_6x_8 + a_{9i}x_1 + a_{10i}x_2 + a_{11i}x_3 + a_{12i}x_4 \\&\qquad + a_{13i}x_5 + a_{14i}x_6 + a_{15i}x_7 \\&\qquad +a_{16i}x_8 + a_{17i},\quad \text{ for } i=1,2,3,4,\\ {\mathbf {l}}&= \left( -1,-1,\ldots ,-1\right) ^{\top },\quad {\mathbf {u}}=\left( 1,1,\ldots ,1\right) ^{\top }, \end{aligned}$$

where the matrix \(A=(a_{ki})\) is given by:

$$\begin{aligned} A = \begin{pmatrix} -0.249150680 &{} 0.125016350 &{} -0.635550077 &{} 1.48947730 \\ 1.609135400 &{} -0.686607360 &{} -0.115719920 &{} 0.23062341 \\ 0.279423430 &{} -0.119228110 &{} -0.666404480 &{} 1.32810730 \\ 1.434801600 &{} -0.719940470 &{} 0.110362110 &{} -0.25864503 \\ 0.000000000 &{} -0.432419270 &{} 0.290702030 &{} 1.16517200 \\ 0.400263840 &{} 0.000000000 &{} 1.258776700 &{} -0.26908494 \\ -0.800527680 &{} 0.000000000 &{} -0.629388360 &{} 0.53816987 \\ 0.000000000 &{} -0.864838550 &{} 0.581404060 &{} 0.58258598 \\ 0.074052388 &{} -0.037157270 &{} 0.195946620 &{} -0.20816985 \\ -0.083050031 &{} 0.035436896 &{} -1.228034200 &{} 2.68683200 \\ -0.386159610 &{} -0.085383482 &{} -0.000000000 &{} -0.69910317 \\ -0.755266030 &{} 0.000000000 &{} -0.079034221 &{} 0.35744413 \\ 0.504201680 &{} -0.039251961 &{} 0.026187877 &{} 1.24991170 \\ -1.091628700 &{} 0.000000000 &{} -0.057131430 &{} 1.46773600 \\ 0.000000000 &{} -0.432419270 &{} -1.162808100 &{} 1.16517200 \\ 0.049207290 &{} 0.000000000 &{} 1.258776100 &{} 1.07633970 \\ 0.049207290 &{} 0.013873010 &{} 2.162575000 &{} -0.69686809 \end{pmatrix} \end{aligned}$$

10. Economics Modeling Application [46]

$$\begin{aligned} f_{i}({\mathbf {x}})&= \left( x_{i} + \sum _{k=1}^{n-i-1}x_{k}x_{k+i}\right) x_{n} - c_{i},\quad \text{ for } i=1,\ldots ,n-1,\\ f_{n}({\mathbf {x}})&= \sum _{k=1}^{n-1}x_{k} + 1,\\ {\mathbf {l}}&= \left( -1,-1,\ldots ,-1\right) ^{\top },\quad {\mathbf {u}}=\left( 1,1,\ldots ,1\right) ^{\top }, \end{aligned}$$

where the constants \(c_i\) can be chosen randomly. In the numerical experiments, \(c_{i}=0\).

11. Discrete boundary value problem [47]

$$\begin{aligned} f_{1}({\mathbf {x}})&= 2x_1 + 0.5h^2(x_1+h)^3 - x_2, \\ f_{i}({\mathbf {x}})&= 2x_i + 0.5h^2(x_i+hi)^3 - x_{i-1} - x_{i+1},\quad \text{ for } i=2,\ldots ,n-1, \\ f_{n}({\mathbf {x}})&= 2x_n + 0.5h^2(x_n+hn)^3 - x_{n-1}, \\ h&= 1/(n+1),\\ {\mathbf {l}}&= (-5,-5,\ldots ,-5)^{\top },\quad {\mathbf {u}}=(5,5,\ldots ,5)^{\top } \end{aligned}$$

12. Brown’s almost linear system

$$\begin{aligned} f_i({\mathbf {x}})&= x_i + \sum _{j=1}^n x_j - (n+1),\quad \text{ for } i=1,2,\dots ,n-1, \\ f_n({\mathbf {x}})&= \prod _{j=1}^n x_j - 1,\\ {\mathbf {l}}&=(-2,-2,\ldots ,-2)^{\top },\quad {\mathbf {u}}=(2,2,\ldots ,2)^{\top } \end{aligned}$$

13. Function 13

$$\begin{aligned} f_{i}({\mathbf {x}})&= \frac{1}{2n}\left( i + \sum _{k=1}^{n} x_{k}^{3}\right) - x_{i}, \quad \text{ for } i = 1,2,\ldots ,n,\\ {\mathbf {l}}&= (-2,-2,\ldots ,-5)^{\top },\quad {\mathbf {u}}=(2,2,\ldots ,5)^{\top } \end{aligned}$$

14. Exponential function 1 [18]

$$\begin{aligned} f_1({\mathbf {x}})&= e^{x_1-1}-1, \\ f_i({\mathbf {x}})&= i\left( e^{x_i-1}-x_i\right) ,\quad \text {for }i=2,3,\dots ,n, \\ {\mathbf {l}}&=(-2,-2,\ldots ,-2)^{\top },\quad {\mathbf {u}}=(2,2,\ldots ,2)^{\top } \end{aligned}$$

15. Exponential function 2 [18]

$$\begin{aligned} f_1({\mathbf {x}})&=e^{x_1}-1, \\ f_i({\mathbf {x}})&=\frac{i}{10}\left( e^{x_i}+x_{i-1}-1\right) ,\quad \text {for }i=2,3,\dots ,n, \\ {\mathbf {l}}&=(-2,-2,\ldots ,-2)^{\top },\quad {\mathbf {u}}=(2,2,\ldots ,2)^{\top } \end{aligned}$$

16. Exponential function 3 [18]

$$\begin{aligned} f_i({\mathbf {x}})&=\frac{i}{10}\left( 1-x_i^2-e^{-x_i^2}\right) ,\quad \text {for }i=1,2,\dots ,n-1, \\ f_n({\mathbf {x}})&=\frac{n}{10}\left( 1-e^{-x_n^2}\right) ,\\ {\mathbf {l}}&=(-2,-2,\ldots ,-2)^{\top },\quad {\mathbf {u}}=(2,2,\ldots ,2)^{\top } \end{aligned}$$

17. Extended Rosenbrock function (n is even) [48, p. 89]

$$\begin{aligned} f_{2i-1}({\mathbf {x}})&= 10\left( x_{2i}-x_{2i-1}^2\right) ,\quad \text {for }i=1,2,\ldots ,n/2, \\ f_{2i}({\mathbf {x}})&= 1-x_{2i-1},\quad \text {for }i=1,2,\ldots ,n/2, \\ {\mathbf {l}}&=(-2,-2,\ldots ,-2)^{\top },\quad {\mathbf {u}}=(2,2,\ldots ,2)^{\top } \end{aligned}$$

18. Chandrasekhar’s H-equation [2, p. 198]

$$\begin{aligned} f_{i}({\mathbf {x}})&= x_i-\left( 1-\frac{c}{2n}\sum _{j=1}^n\frac{\mu _i x_j}{\mu _i+\mu _j}\right) ^{-1}, \quad \text {for }i=1,2,\dots ,n,\\ {\mathbf {l}}&=(-5,-5,\ldots ,-5)^{\top },\quad {\mathbf {u}}=(5,5,\ldots ,5)^{\top }, \end{aligned}$$

where \(c\in [0,1)\) and \(\mu _i=(i-1/2)/n\), for \(1\le i\le n\). In the numerical experiments, \(c=0.9\).

19. Badly scaled augmented Powell’s function (n is a multiple of 3) [48, p. 89]

$$\begin{aligned} f_{3i-2}({\mathbf {x}})&= 10^4x_{3i-2}x_{3i-1}-1,\quad \text {for }i=1,2,\ldots ,n/3, \\ f_{3i-1}({\mathbf {x}})&= \exp \left( -x_{3i-2}\right) +\exp \left( -x_{3i-1}\right) -1.0001, \quad \text {for }i=1,2,\ldots ,n/3,\\ f_{3i}({\mathbf {x}})&= \phi (x_{3i}),\quad \text {for }i=1,2,\ldots ,n/3,\\ {\mathbf {l}}&=(-1,-1,\ldots ,-1)^{\top },\quad {\mathbf {u}}=(1,1,\ldots ,1)^{\top }, \end{aligned}$$

where

$$\begin{aligned} \phi (t)={\left\{ \begin{array}{ll} 0.5t-2, &{} t\le -1,\\ \left( -592t^3+888t^2+4551t-1924\right) /1998, &{} -1<t<2,\\ 0.5t+2, &{} t\ge 2. \end{array}\right. } \end{aligned}$$

20. Trigonometric function

$$\begin{aligned} f_{i}({\mathbf {x}})&= 2\left( n+i(1-\cos x_i)-\sin x_i-\sum _{j=1}^n\cos x_j\right) \left( 2\sin x_i-\cos x_i\right) ,\\&\text{ for } i=1,2,\dots ,n,\\ {\mathbf {l}}&=(-1,-1,\ldots ,-1)^{\top },\quad {\mathbf {u}}=(1,1,\ldots ,1)^{\top } \end{aligned}$$

21. Singular function [18]

$$\begin{aligned} f_1({\mathbf {x}})&= \frac{1}{3} x_1^3+\frac{1}{2} x_2^2, \\ f_{i}({\mathbf {x}})&= -\frac{1}{2} x_i^2+\frac{i}{3}x_i^3+\frac{1}{2} x_{i+1}^2, \quad \text {for }i=2,3,\dots ,n-1,\\ f_n({\mathbf {x}})&= -\frac{1}{2} x_n^2+\frac{n}{3} x_n^3, \\ {\mathbf {l}}&=(-10,-10,\ldots ,-10)^{\top },\quad {\mathbf {u}}=(10,10,\ldots ,10)^{\top } \end{aligned}$$

22. Broyden Tridiagonal function [49, pp. 471-472]

$$\begin{aligned} f_1({\mathbf {x}})&=(3-0.5x_1)x_1-2x_2+1,\\ f_{i}({\mathbf {x}})&= (3-0.5x_i)x_i-x_{i-1}-2x_{i+1}+1, \quad \text {for }i=2,3,\dots ,n-1,\\ f_n({\mathbf {x}})&= (3-0.5x_n)x_n-x_{n-1}+1,\\ {\mathbf {l}}&=(-1,-1,\ldots ,-1)^{\top },\quad {\mathbf {u}}=(1,1,\ldots ,1)^{\top } \end{aligned}$$

23. Trigexp function [49, p. 473]

$$\begin{aligned} f_1({\mathbf {x}})&= x_1^3+2x_2-5+\sin (x_1-x_2)\sin (x_1+x_2),\\ f_{i}({\mathbf {x}})&= -x_{i-1}e^{(x_{i-1}-x_i)}+x_i(4+3x_i^2)+2x_{i+1}+ \sin (x_i-x_{i+1})\sin (x_i+x_{i+1})-8,\\&\text{ for } i=2,3,\dots ,n-1,\\ f_n({\mathbf {x}})&= -x_{n-1}e^{(x_{n-1}-x_n)}+4x_n-3,\\ {\mathbf {l}}&= (-1,-1,\ldots ,-1)^{\top },\quad {\mathbf {u}}=(1,1,\ldots ,1)^{\top } \end{aligned}$$

24. Strictly convex function 1 [50, p. 29]. F is the gradient of \(h({\mathbf {x}})=\sum _{i=1}^n\left( e^{x_i}-x_i\right) \).

$$\begin{aligned} f_{i}({\mathbf {x}})&= e^{x_i}-1,\quad \text {for }i=1,2,\dots ,n,\\ {\mathbf {l}}&= (-2,-2,\ldots ,-2)^{\top },\quad {\mathbf {u}}=(2,2,\ldots ,2)^{\top } \end{aligned}$$

25. Strictly convex function 2 [50, p. 30]. F is the gradient of \(h({\mathbf {x}})=\sum _{i=1}^n\frac{i}{10}\left( e^{x_i}-x_i\right) \).

$$\begin{aligned} f_{i}({\mathbf {x}})&= \frac{i}{10}\left( e^{x_i}-1\right) ,\quad \text {for }i=1,2,\dots ,n,\\ {\mathbf {l}}&= (-2,-2,\ldots ,-2)^{\top },\quad {\mathbf {u}}=(2,2,\ldots ,2)^{\top } \end{aligned}$$

26. Zero Jacobian function [18]

$$\begin{aligned} f_{1}({\mathbf {x}})&= \sum _{j=1}^n x_j^2, \\ f_{i}({\mathbf {x}})&= -2x_1x_i,\quad \text{ for } i=2,\dots ,n,\\ {\mathbf {l}}&= (-1,-1,\ldots ,-1)^{\top },\quad {\mathbf {u}}=(1,1,\ldots ,1)^{\top } \end{aligned}$$

27. Freudenstein and Roth extended function (n is even) [51]

$$\begin{aligned} f_{2i-1}({\mathbf {x}})&= x_{2i-1} + ((5-x_{2i})x_{2i}-2) x_{2i} - 13,\quad \text{ for } i=1,\dots ,n/2,\\ f_{2i}({\mathbf {x}})&= x_{2i-1} + ((x_{2i}+1)x_{2i} - 14) x_{2i} - 29,\quad \text{ for } i=1,\dots ,n/2,\\ {\mathbf {l}}&= (-1,-1,\ldots ,-1)^{\top },\quad {\mathbf {u}}=(1,1,\ldots ,1)^{\top } \end{aligned}$$

28. Cragg and Levy’s extended problem (n is a multiple of 4) [47]

$$\begin{aligned} f_{4i-3}({\mathbf {x}})&= (\exp (x_{4i-3})-x_{4i-2})^2,\quad \text{ for } i=1,\dots ,n/4,\\ f_{4i-2}({\mathbf {x}})&= 10(x_{4i-2}-x_{4i-1})^3,\quad \text{ for } i=1,\dots ,n/4,\\ f_{4i-1}({\mathbf {x}})&= \tan ^2(x_{4i-1}-x_{4i}),\quad \text{ for } i=1,\dots ,n/4,\\ f_{4i}({\mathbf {x}})&= x_{4i} - 1,\quad \text{ for } i=1,\dots ,n/4,\\ {\mathbf {l}}&= (-5,-5,\ldots ,-5)^{\top },\quad {\mathbf {u}}=(5,5,\ldots ,5)^{\top } \end{aligned}$$

29. Wood’s extended problem (n is a multiple of 4) [52]

$$\begin{aligned} f_{4i-3}({\mathbf {x}})&= -200x_{4i-3}\left( x_{4i-2}-x_{4i-3}^2\right) -(1-x_{4i-3}),\quad \text{ for } i=1,\dots ,n/4,\\ f_{4i-2}({\mathbf {x}})&= 200\left( x_{4i-2}-x_{4i-3}^2\right) +20(x_{4i-2}-1)+19.8(x_{4i}-1),\quad \text{ for } i=1,\dots ,n/4,\\ f_{4i-1}({\mathbf {x}})&= -180x_{4i-1}\left( x_{4i}-x_{4i-1}^2\right) -(1-x_{4i-1}),\quad \text{ for } i=1,\dots ,n/4,\\ f_{4i}({\mathbf {x}})&= 180 \left( x_{4i}-x_{4i-1}^2\right) + 20.2(x_{4i}-1) + 19.8(x_{4i-2}-1),\quad \text{ for } i=1,\dots ,n/4,\\ {\mathbf {l}}&= (-2,-2,\ldots ,-2)^{\top },\quad {\mathbf {u}}=(2,2,\ldots ,2)^{\top } \end{aligned}$$

30. Exponential tridiagonal problem [51]

$$\begin{aligned} f_{1}({\mathbf {x}})&= x_1 - \exp (\cos (h(x_1+x_2))), \\ f_{i}({\mathbf {x}})&= x_i - \exp (\cos (h(x_{i-1}+x_i+x_{i+1}))),\quad \text{ for } i=2,\ldots ,n-1, \\ f_{n}({\mathbf {x}})&= x_n - \exp (\cos (h(x_{n-1}+x_n))), \\ h&= 1/(n+1),\\ {\mathbf {l}}&= (-5,-5,\ldots ,-5)^{\top },\quad {\mathbf {u}}=(5,5,\ldots ,5)^{\top } \end{aligned}$$

31. Brent problem [53]

$$\begin{aligned} f_{1}({\mathbf {x}})&= 3x_1 (x_2-2x_1) + x_2^2/4, \\ f_{i}({\mathbf {x}})&= 3x_i(x_{i+1}-2x_i+x_{i-1})+(x_{i+1}-x_{i-1})^2/4,\quad \text{ for } i=2,\ldots ,n-1,\\ f_{n}({\mathbf {x}})&= 3x_n(20-2x_n+x_{n-1}) + (20-x_{n-1})^2/4, \\ h&= 1/(n+1),\\ {\mathbf {l}}&= (-5,-5,\ldots ,-5)^{\top },\quad {\mathbf {u}}=(5,5,\ldots ,5)^{\top } \end{aligned}$$

32. Troesch problem [54]

$$\begin{aligned} f_{1}({\mathbf {x}})&= 2x_1 + \rho h^2\sinh (\rho x_1) - x_2, \\ f_{i}({\mathbf {x}})&= 2x_i + \rho h^2\sinh (\rho x_i) - x_{i-1}-x_{i+1},\quad \text{ for } i=2,\ldots ,n-1,\\ f_{n}({\mathbf {x}})&= 2x_n + \rho h^2\sinh (\rho x_n) - x_{n-1}, \\ \rho&= 10,\;\;h = 1/(n+1),\\ {\mathbf {l}}&= (-1,-1,\ldots ,-1)^{\top },\quad {\mathbf {u}}=(1,1,\ldots ,1)^{\top } \end{aligned}$$

33. Function 33 [55]

$$\begin{aligned} f_{i}({\mathbf {x}})&= 2x_i - \sin \left| x_i\right| ,\quad \text{ for } i=1,2,\ldots ,n,\\ {\mathbf {l}}&= (-1,-1,\ldots ,-1)^{\top },\quad {\mathbf {u}}=(1,1,\ldots ,1)^{\top } \end{aligned}$$

34. Function 34

$$\begin{aligned} f_{i}({\mathbf {x}})&= \min \left\{ \left( x_{i}-\frac{i}{n}\right) ^2,\, \left| e^{x_{i}-\frac{i}{n}}-1\right| \right\} , \quad \text{ for } i = 1,2,\ldots ,n,\\ {\mathbf {l}}&= (-5,-5,\ldots ,-5)^{\top },\quad {\mathbf {u}}=(5,5,\ldots ,5)^{\top } \end{aligned}$$
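As a sanity check when transcribing these test functions, two of them can be coded directly and evaluated at a point where every component vanishes. The sketch below implements Brown's almost linear system (problem 12) and the extended Rosenbrock function (problem 17) in plain Python. The function names and the Euclidean-norm helper `residual_norm` are illustrative and not taken from the paper. Both systems have the root \({\mathbf {x}}=(1,1,\ldots ,1)^{\top }\), which lies inside the box \([{\mathbf {l}},{\mathbf {u}}]\) listed for each problem.

```python
import math


def brown_almost_linear(x):
    """Problem 12: f_i = x_i + sum_j x_j - (n + 1) for i < n, f_n = prod_j x_j - 1."""
    n = len(x)
    total = sum(x)
    f = [x[i] + total - (n + 1) for i in range(n - 1)]
    f.append(math.prod(x) - 1.0)
    return f


def extended_rosenbrock(x):
    """Problem 17 (n even): f_{2i-1} = 10 (x_{2i} - x_{2i-1}^2), f_{2i} = 1 - x_{2i-1}."""
    if len(x) % 2 != 0:
        raise ValueError("extended Rosenbrock requires an even dimension")
    f = []
    for k in range(0, len(x), 2):  # x[k] is x_{2i-1}, x[k + 1] is x_{2i}
        f.append(10.0 * (x[k + 1] - x[k] ** 2))
        f.append(1.0 - x[k])
    return f


def residual_norm(f_values):
    """Euclidean norm of the residual vector F(x)."""
    return math.sqrt(sum(v * v for v in f_values))


ones = [1.0] * 4
brown_at_root = residual_norm(brown_almost_linear(ones))
rosenbrock_at_root = residual_norm(extended_rosenbrock(ones))
```

Evaluating the residual norm at a known root is a cheap way to catch index or sign slips before a problem is handed to any solver.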


Cite this article

La Cruz, W. A genetic algorithm with a self-reproduction operator to solve systems of nonlinear equations. J Glob Optim 84, 1005–1032 (2022). https://doi.org/10.1007/s10898-022-01189-1


DOI: https://doi.org/10.1007/s10898-022-01189-1