Abstract

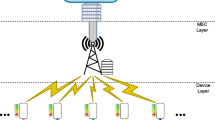

With the surge of intelligent devices, Internet of Things (IoT) applications are growing at a rapid pace. As a result, a massive amount of raw data is generated that must be processed and stored. IoT devices alone cannot handle such large volumes of data. To improve performance, users therefore began offloading jobs to distant cloud data centers, which introduces further complications such as high bandwidth usage, service latency, and energy consumption. Fog computing has emerged as a key enabling technology that brings cloud services closer to the end user. However, owing to the unpredictability of tasks and of users' Quality of Service (QoS) requirements, efficient task scheduling and resource allocation mechanisms are needed to balance demand. To address this problem, we formulate task offloading as a Markov Decision Process (MDP) that considers several user QoS factors, including end-to-end latency, energy consumption, task deadline, and priority. Three model-free, off-policy Deep Reinforcement Learning (DRL) solutions are outlined to maximize the reward in terms of resource utilization. Finally, extensive experiments are conducted to validate and compare the efficiency and effectiveness of the proposed mechanisms. Results show that with the proposed method, on average 96.23% of tasks meet their deadline, an 8.25% increase.
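To make the MDP framing above more concrete, the Python sketch below shows what a per-step reward combining end-to-end latency, energy, deadline, and priority could look like, together with a deadline-satisfaction percentage of the kind reported in the results. This is not the paper's formulation: the `Task` and `Node` fields, the linear latency and energy model, the weights `w_latency` and `w_energy`, the `miss_penalty`, and the greedy `best_action` helper are all hypothetical assumptions chosen for illustration. In the paper's setting a DRL agent would learn which node to select; the greedy one-step choice here only exposes the reward signal such an agent would optimize.

```python
from dataclasses import dataclass


@dataclass
class Task:
    size_bits: float   # input data that must be uploaded (bits)
    cycles: float      # CPU cycles needed to execute the task
    deadline_s: float  # relative deadline (seconds)
    priority: int      # larger value = more important (assumed convention)


@dataclass
class Node:
    cpu_hz: float           # processing speed of the execution node (cycles/s)
    rate_bps: float         # link rate from device to node; inf means local execution
    tx_power_w: float       # device transmit power while uploading (W)
    compute_power_w: float  # power drawn while executing the task (W)
    busy_s: float = 0.0     # queueing delay already pending on the node (s)


def latency_and_energy(task: Task, node: Node) -> tuple[float, float]:
    """Hypothetical model: latency = upload + queueing + execution,
    energy = upload energy + execution energy."""
    tx_time = task.size_bits / node.rate_bps
    exec_time = task.cycles / node.cpu_hz
    latency = tx_time + node.busy_s + exec_time
    energy = node.tx_power_w * tx_time + node.compute_power_w * exec_time
    return latency, energy


def reward(task: Task, latency: float, energy: float,
           w_latency: float = 1.0, w_energy: float = 0.5,
           miss_penalty: float = 10.0) -> float:
    """Hypothetical QoS reward: a priority-scaled penalty on a deadline miss,
    otherwise priority minus weighted latency and energy costs."""
    if latency > task.deadline_s:
        return -miss_penalty * task.priority
    return task.priority - w_latency * latency - w_energy * energy


def best_action(task: Task, nodes: list[Node]) -> int:
    """Greedy one-step node choice; a DRL agent would learn this mapping instead."""
    return max(range(len(nodes)),
               key=lambda i: reward(task, *latency_and_energy(task, nodes[i])))


def deadline_satisfaction(latencies: list[float], deadlines: list[float]) -> float:
    """Percentage of tasks whose latency does not exceed their deadline."""
    met = sum(1 for lat, dl in zip(latencies, deadlines) if lat <= dl)
    return 100.0 * met / len(latencies)


# Illustrative values only; none of these numbers come from the paper.
task = Task(size_bits=1e6, cycles=5e8, deadline_s=0.5, priority=2)
nodes = [
    Node(cpu_hz=1e9, rate_bps=float("inf"), tx_power_w=0.5, compute_power_w=1.0),  # local
    Node(cpu_hz=5e9, rate_bps=1e7, tx_power_w=0.5, compute_power_w=2.0),           # fog
    Node(cpu_hz=2e10, rate_bps=2e6, tx_power_w=0.5, compute_power_w=5.0),          # cloud
]
print(best_action(task, nodes))  # 1 (the fog node)
```

With these made-up numbers the cloud node misses the 0.5 s deadline because of its slow uplink, so it receives the large negative reward, while the fog node beats local execution on both latency and energy. Scaling the miss penalty by priority is one simple way to make deadline violations on important tasks dominate the learning signal.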

Data Availability

The datasets generated and/or analyzed during the current study are available from the corresponding author upon reasonable request.

Ethics declarations

Conflict of interest

On behalf of all the authors, the corresponding author declares that there is no conflict of interest involved in conducting this research.

Rights and permissions

Springer Nature or its licensor holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Jain, V., Kumar, B. QoS-Aware Task Offloading in Fog Environment Using Multi-agent Deep Reinforcement Learning. J Netw Syst Manage 31, 7 (2023). https://doi.org/10.1007/s10922-022-09696-y

DOI: https://doi.org/10.1007/s10922-022-09696-y