Abstract

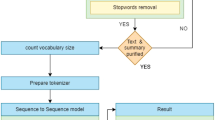

Abstractive Text Summarization (ATS) is the task of constructing summary sentences by merging facts from different source sentences and condensing them into a shorter representation while preserving information content and overall meaning. Manually summarizing large documents is difficult and time-consuming for humans. In this paper, we propose an LSTM-CNN based ATS framework (ATSDL) that constructs new sentences by exploring fragments more fine-grained than sentences, namely semantic phrases. Unlike existing abstraction-based approaches, ATSDL consists of two main stages: the first extracts phrases from source sentences, and the second generates text summaries using deep learning. Experimental results on the CNN and DailyMail datasets show that ATSDL outperforms state-of-the-art models in terms of both semantics and syntactic structure, and achieves competitive results on manual linguistic quality evaluation.
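The two-stage structure described above can be illustrated with a minimal sketch. This is not the ATSDL implementation. The boundary-based splitter, the function names `extract_phrases`, `summarize`, and `first_k`, and the placeholder second stage are all assumptions made for illustration. The sketch only shows how a phrase-extraction stage can feed a separate generation stage that works on phrases rather than whole sentences.

```python
import re
from typing import Callable, List

# Hypothetical splitter: clause punctuation and a few conjunctions stand in
# for a real semantic-phrase extractor (not the method used in the paper).
_BOUNDARY = re.compile(r"[,;:]|\b(?:and|but|which|that|because)\b", re.IGNORECASE)


def extract_phrases(sentence: str) -> List[str]:
    """Stage 1 (toy): split one sentence into sub-sentence fragments."""
    parts = _BOUNDARY.split(sentence.rstrip(". "))
    return [p.strip() for p in parts if p.strip()]


def summarize(sentences: List[str],
              generate: Callable[[List[str]], List[str]]) -> str:
    """Run stage 1 over every sentence, then pass the phrase sequence to stage 2."""
    phrases = [p for s in sentences for p in extract_phrases(s)]
    return " ".join(generate(phrases))


def first_k(k: int) -> Callable[[List[str]], List[str]]:
    """Placeholder for stage 2: keeps the first k phrases.
    A trained sequence model would take this place in a real system."""
    return lambda phrases: phrases[:k]
```

Because the second stage is passed in as a callable, the placeholder can be swapped for a learned generator without changing the phrase-extraction step.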

Cite this article

Song, S., Huang, H. & Ruan, T. Abstractive text summarization using LSTM-CNN based deep learning. Multimed Tools Appl 78, 857–875 (2019). https://doi.org/10.1007/s11042-018-5749-3

DOI: https://doi.org/10.1007/s11042-018-5749-3