Abstract

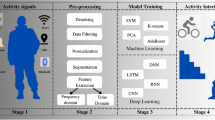

Human pose estimation methods have difficulty predicting the correct pose of a person because of scale variation. Existing work in this domain focuses mainly on single-person pose estimation. To address this challenge, we have developed a system that can efficiently estimate the poses of both one and two individuals. We term the method R-WAA, for remarkable-joint-based Waveform, Angle, and Alpha characteristics. R-WAA is a novel up-bottom human pose estimation method developed using two-dimensional body skeletal joint points. It captures all required spatial information using waveform characteristics, angle characteristics, and alpha characteristics. All pose estimator characteristics are developed using a remarkable joint, which serves as the origin of all poses. The proposed algorithm is evaluated on one-person and two-person datasets: the Kinect Activity Recognition Dataset (KARD) and the SBU Kinect Interaction Dataset. The experimental results validate that R-WAA outperforms state-of-the-art approaches.

Acknowledgements

This work is supported by the Fundamental Research Funds for the Central Universities (Grant no. WK2350000002).

About this article

Cite this article

Islam, M., Bakhat, K., Khan, R. et al. Single and two-person(s) pose estimation based on R-WAA. Multimed Tools Appl 81, 681–694 (2022). https://doi.org/10.1007/s11042-021-11374-1

DOI: https://doi.org/10.1007/s11042-021-11374-1