Abstract

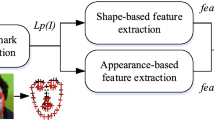

This paper presents a model for controlling a computer system through facial landmarks and gestures of the eyes, nose and head. The system detects and recognizes eye, nose and head gestures and maps them to mouse-cursor movement so that the computer can be operated in real time. In the proposed facial-landmark-based human-computer interaction model, histogram of oriented gradients (HOG) descriptors are extracted as global facial features. A pre-trained linear SVM classifier takes these features, together with an image pyramid and a sliding-window search, to decide whether a region contains a human face. A pre-trained ensemble of regression trees is then applied to locate facial landmarks such as the eyes, eyebrows, nose, mouth and jawline. The main purpose is to let the user perform actions mapped to explicit eye blinks and to nose and head motions using a PC webcam. Eye blinks are detected from the estimated eye aspect ratio (EAR) and from a newly proposed β parameter. Classification reports were generated for both estimates; the β parameter gave the best results, with an accuracy of 98.33%, precision of 100%, recall of 98.33% and F1 score of 99.16% under good lighting conditions.
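To make the EAR-based blink test concrete, the following minimal sketch computes the eye aspect ratio as defined by Soukupova and Cech (2016), EAR = (‖p2 − p6‖ + ‖p3 − p5‖) / (2‖p1 − p4‖), from six eye landmarks and counts blinks over a sequence of per-frame values. It is an illustration only, not the authors' implementation: the function names, the threshold of 0.2 and the minimum run of 2 frames are assumptions chosen for the example, and the β parameter is not sketched because the abstract does not define it.

```python
import math


def eye_aspect_ratio(eye):
    """Return the EAR for six (x, y) eye landmarks ordered p1..p6.

    p1 and p4 are the horizontal eye corners; p2, p3 lie on the upper lid
    and p6, p5 are the corresponding points on the lower lid.
    """
    p1, p2, p3, p4, p5, p6 = eye
    vertical = math.dist(p2, p6) + math.dist(p3, p5)
    horizontal = math.dist(p1, p4)
    if horizontal == 0:
        raise ValueError("eye corners p1 and p4 coincide")
    return vertical / (2.0 * horizontal)


def count_blinks(ear_values, threshold=0.2, min_frames=2):
    """Count blinks in a sequence of per-frame EAR values.

    A blink is a run of at least `min_frames` consecutive frames whose EAR
    is below `threshold`. Both defaults are hypothetical example values.
    """
    blinks = 0
    run = 0  # length of the current run of below-threshold frames
    for ear in ear_values:
        if ear < threshold:
            run += 1
        else:
            if run >= min_frames:
                blinks += 1
            run = 0
    if run >= min_frames:  # the sequence ended while the eye was closed
        blinks += 1
    return blinks


# Synthetic landmarks for an open and a nearly closed eye.
open_eye = [(0, 0), (1, 1), (2, 1), (3, 0), (2, -1), (1, -1)]
closed_eye = [(0, 0), (1, 0.1), (2, 0.1), (3, 0), (2, -0.1), (1, -0.1)]
ear_open = eye_aspect_ratio(open_eye)
ear_closed = eye_aspect_ratio(closed_eye)
```

In practice the six points per eye would come from a landmark detector, and the threshold would need tuning per camera and user, since the EAR falls towards zero as the eyelids close but its open-eye value varies between people.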

Data availability

All the data and the supplementary material can be made available from the corresponding author, upon reasonable request.

Code availability

Codes used in this study can be made available from the corresponding author, upon reasonable request.

Funding

The authors declare that this research was not funded by any agency.

Author information

Contributions

• Dhananjay Bisen and Rishabh Shukla: Methodology; Writing original draft.

• Narendra Rajpoot and Praphull Maurya: Literature Review; Editing; Reviewing the Manuscript.

• Atul Kr. Uttam and Siddhartha Kr. Arjaria: Reviewing the Manuscript; Final Drafting.

Ethics declarations

The authors permit the publisher to publish this research article in this journal. The corresponding author confirms that individuals have consented to publication of the data associated with this research article.

Ethical approval

All authors agree to the guidelines provided by the journal. This research has considered all ethical issues associated with the methodology employed.

Conflict of interest

The authors declare that they have no conflict of interest.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

About this article

Cite this article

Bisen, D., Shukla, R., Rajpoot, N. et al. Responsive human-computer interaction model based on recognition of facial landmarks using machine learning algorithms. Multimed Tools Appl 81, 18011–18031 (2022). https://doi.org/10.1007/s11042-022-12775-6

DOI: https://doi.org/10.1007/s11042-022-12775-6