Abstract

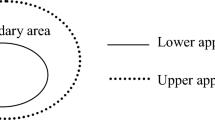

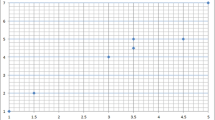

The rough k-means algorithm is one of the most widely used soft clustering methods. However, it has known weaknesses: it is highly sensitive to the random choice of initial cluster centroids, and the constant value of the zeta parameter, which decides which elements are treated as fuzzy, is fixed subjectively. In addition, there are no appropriate performance measures for the rough k-means algorithm. This study proposes a new initialization algorithm that addresses the random allocation of data elements and thereby improves clustering performance. A new method for determining the best zeta values for the rough k-means algorithm is also developed. New performance criteria are introduced: the distance between the centroid and the farthest element in the cluster, the number of elements in the intersection area, and the distance between cluster centroids. Seven experiments were carried out on several benchmark datasets, including the Microarray, Synthetic, and Forest cover datasets. The performance criteria were compared across the proposed algorithms and the Peters (k-means++), Peters PI, Ioannis, Vijay, Mano, and Peters (random) initialization methods. The root mean square standard deviation (RMSSTD) and the S/T index were used to validate the performance of the rough k-means clustering algorithm. A frequency table was also constructed to gauge the degree of fuzziness in each algorithm. The program completion time of each initialization algorithm was used to measure the variability among the proposed and existing rough k-means clustering algorithms. The proposed initialization algorithms excelled in all criteria and performed better than the existing rough k-means clustering algorithms.
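To make the role of the zeta parameter and the three performance criteria more concrete, the following is a minimal Python sketch, not the implementation used in the study. It assumes the relative-threshold rule commonly used in the rough k-means literature: a point falls in the boundary (intersection) area when a second centroid lies within zeta times the distance to its nearest centroid. The function names (`rough_assign`, `farthest_member_distance`, `centroid_separation`) and the toy data are hypothetical and chosen only for illustration.

```python
import math


def dist(a, b):
    """Euclidean distance between two equal-length points."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def rough_assign(points, centroids, zeta):
    """Split points into lower approximations and boundary members.

    Assumed rule (not taken from the paper): with d_min the distance to the
    nearest centroid, a point is a boundary element if some other centroid
    is within zeta * d_min; it then joins the upper approximation of every
    such cluster and the lower approximation of none.
    """
    lower = [[] for _ in centroids]  # indices that surely belong to one cluster
    upper = [[] for _ in centroids]  # lower members plus boundary members
    boundary_count = 0               # number of elements in the intersection area
    for i, p in enumerate(points):
        d = [dist(p, c) for c in centroids]
        nearest = min(range(len(d)), key=d.__getitem__)
        close = [k for k in range(len(d))
                 if k != nearest and d[k] <= zeta * d[nearest]]
        upper[nearest].append(i)
        if close:
            boundary_count += 1
            for k in close:
                upper[k].append(i)
        else:
            lower[nearest].append(i)
    return lower, upper, boundary_count


def farthest_member_distance(points, centroid, members):
    """Distance from a centroid to the farthest element of its cluster."""
    return max((dist(points[i], centroid) for i in members), default=0.0)


def centroid_separation(centroids):
    """Pairwise distances between cluster centroids."""
    return [dist(a, b)
            for idx, a in enumerate(centroids)
            for b in centroids[idx + 1:]]


# Toy data: two tight groups and one point roughly midway between them.
points = [(0, 0), (1, 0), (10, 0), (11, 0), (5, 0)]
centroids = [(0.5, 0), (10.5, 0)]
lower, upper, boundary_count = rough_assign(points, centroids, zeta=1.3)
spread = farthest_member_distance(points, centroids[0], upper[0])
separation = centroid_separation(centroids)
```

In this toy setup, raising zeta moves more points into the intersection area and lowering it moves them back into a lower approximation, which is why a fixed, subjectively chosen zeta can change the reported degree of fuzziness.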

Data availability

The datasets generated and/or analysed during the current study are available in the UCI repository, https://archive.ics.uci.edu/.

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

About this article

Cite this article

Murugesan, V.P. Impact of new seed and performance criteria in proposed rough k-means clustering. Multimed Tools Appl 82, 43671–43700 (2023). https://doi.org/10.1007/s11042-023-14414-0

DOI: https://doi.org/10.1007/s11042-023-14414-0