Abstract

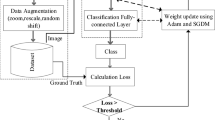

Sign Language Recognition (SLR) helps to bridge the communication gap between hearing and hearing-impaired people. However, SLR systems face various difficulties and challenges during real-time implementation. The main difficulty is the inability to provide a consistent recognition process, which leads to lower recognition accuracy. To address this issue, this research concentrates on adopting the best classification approach to provide a feasible end-to-end system using deep learning. The system converts sign language into speech so that hearing people can understand the signs. The input is taken from the ROBITA Indian Sign Language Gesture Database, and essential pre-processing steps are applied to remove unnecessary artefacts. The proposed model incorporates an encoder with Multi-Layer Convolutional Neural Networks (ML-CNN) to evaluate the scalability and accuracy of end-to-end SLR. The encoder analyses linear and non-linear features (higher-level and lower-level) to improve recognition quality. The simulation is carried out in MATLAB, where the ML-CNN model outperforms existing approaches and establishes the trade-off. Performance metrics including accuracy, precision, recall, F-measure, Matthews Correlation Coefficient (MCC), and Mean Absolute Error (MAE) are evaluated to show the significance of the model. The prediction accuracy of the proposed ML-CNN with encoder is 87.5% on the ROBITA sign gesture dataset, an increase of 1% and 3.5% over BLSTM and HMM, respectively.
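
The abstract names the evaluation metrics but not their exact definitions or the averaging scheme used for a multi-class gesture set. The following is a minimal sketch using the standard confusion-matrix formulas, under two assumptions that the paper does not confirm: each gesture class is scored one-vs-rest, and multi-class precision, recall and F-measure are macro-averaged (unweighted mean over classes). The function names `binary_metrics` and `macro_scores` are hypothetical and are not taken from the authors' MATLAB code. For hard label predictions, MAE on the 0/1 class indicator reduces to the error rate (1 minus accuracy).

```python
import math
from typing import Dict, Hashable, Sequence


def binary_metrics(y_true: Sequence[Hashable], y_pred: Sequence[Hashable],
                   positive: Hashable) -> Dict[str, float]:
    """One-vs-rest metrics for class `positive` (an assumed treatment)."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("y_true and y_pred must be non-empty and equal length")
    tp = fp = fn = tn = 0
    for t, p in zip(y_true, y_pred):
        if p == positive and t == positive:
            tp += 1
        elif p == positive:
            fp += 1  # predicted `positive`, actually another class
        elif t == positive:
            fn += 1  # a `positive` sample that was missed
        else:
            tn += 1
    total = tp + fp + fn + tn
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f_measure = (2 * precision * recall / (precision + recall)
                 if precision + recall else 0.0)
    # Matthews Correlation Coefficient; defined as 0 when any margin is empty.
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    # MAE on the 0/1 indicator, mean |[t == positive] - [p == positive]|,
    # equals (fp + fn) / total for hard predictions.
    mae = (fp + fn) / total
    return {"accuracy": (tp + tn) / total, "precision": precision,
            "recall": recall, "f_measure": f_measure, "mcc": mcc, "mae": mae}


def macro_scores(y_true: Sequence[Hashable],
                 y_pred: Sequence[Hashable]) -> Dict[str, float]:
    """Unweighted mean of per-class precision, recall and F-measure."""
    classes = set(y_true) | set(y_pred)
    per_class = [binary_metrics(y_true, y_pred, positive=c) for c in classes]
    n = len(per_class)
    return {k: sum(m[k] for m in per_class) / n
            for k in ("precision", "recall", "f_measure")}


if __name__ == "__main__":
    # Toy labels only; these are not results from the ROBITA dataset.
    print(binary_metrics([1, 1, 1, 1, 0, 0, 0, 0],
                         [1, 1, 1, 0, 0, 0, 1, 0], positive=1))
    # accuracy=0.75, precision=0.75, recall=0.75, mcc=0.5, mae=0.25
    print(macro_scores([0, 1, 2, 2], [0, 2, 2, 2]))  # f_measure ≈ 0.6
```

Macro averaging weights every gesture class equally, so a rarely seen sign that is always misclassified lowers the score as much as a common one. A frequency-weighted average would instead track overall accuracy more closely.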

Ethics declarations

The authors declare that they have no known competing financial interests or personal relationships that could have appeared to influence the work reported in this paper.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Arun Prasath, G., Annapurani, K. Prediction of sign language recognition based on multi layered CNN. Multimed Tools Appl 82, 29649–29669 (2023). https://doi.org/10.1007/s11042-023-14548-1

DOI: https://doi.org/10.1007/s11042-023-14548-1