Abstract

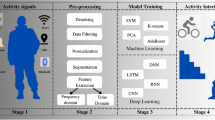

The growing use of electronic devices and the high rate at which data streams such as video are produced underline the importance of analyzing the content of such data. Content analysis of video data for human activity recognition (HAR) has significant applications in machine vision. Many studies have addressed HAR and, despite the many challenges of video content analysis, researchers have proposed many effective HAR methods. However, the literature lacks a proper context for identifying, analyzing, and evaluating HAR methods and challenges in a coherent and uniform form that yields a macro view of the subject. A comprehensive, comparative analytical review of HAR on video data, organized around methods and challenges, is therefore needed. The novelty of this research is a comparative analytical framework called HAR-CO, which provides a macro view, a coherent structure, and a deeper understanding of HAR. HAR-CO consists of three main parts. First, it categorizes HAR methods in a coherent and structured way based on data collection hardware. Second, it categorizes HAR challenges systematically based on sensor attachment. Third, it provides a comparative analytical evaluation of each class of HAR approaches with respect to the challenges researchers face. We believe that the HAR-CO framework can serve as a road map and guide for researchers in selecting more appropriate HAR methods and can suggest new research directions.

Data availability

The dataset is publicly available through the UCI repository.


Qin Z, Zhao P, Zhuang T, Deng F, Ding Y, Chen D (2023) A survey of identity recognition via data fusion and feature learning. Information Fusion 91:694–712

Li X, He Y, Fioranelli F, Jing X (2021) Semisupervised human activity recognition with radar micro-Doppler signatures. IEEE Trans Geosci Remote Sens 60:1–12

Abedin A, Ehsanpour M, Shi Q, Rezatofighi H, Ranasinghe DC (2021) Attend and discriminate: beyond the state-of-the-art for human activity recognition using wearable sensors. Proc ACM Interact Mob Wearable Ubiquitous Technol 5(1):1–22

Guo Y, Chu Y, Jiao B, Cheng J, Yu Z, Cui N, Ma L (2021) Evolutionary dual-ensemble class imbalance learning for human activity recognition. IEEE Trans Emerg Top Comput Intell 6(4):728–739

Meng L, Zhang A, Chen C, Wang X, Jiang X, Tao L, Fan J, Wu X, Dai C, Zhang Y (2021) Exploration of human activity recognition using a single sensor for stroke survivors and able-bodied people. Sensors 21(3):799

Anagnostis A, Benos L, Tsaopoulos D, Tagarakis A, Tsolakis N, Bochtis D (2021) Human activity recognition through recurrent neural networks for human–robot interaction in agriculture. Appl Sci 11(5):2188

Bouchabou D, Nguyen SM, Lohr C, LeDuc B, Kanellos I (2021) A survey of human activity recognition in smart homes based on IoT sensors algorithms: Taxonomies, challenges, and opportunities with deep learning. Sensors 21(18):6037

Buoncompagni L, Kareem SY, Mastrogiovanni F (2021) Human activity recognition models in ontology networks. IEEE Trans Cybern 52(6):5587–5606

Alemdar H, Ertan H, Incel OD, Ersoy C (2013) ARAS human activity datasets in multiple homes with multiple residents. In: 7th International conference on pervasive computing technologies for healthcare and workshops. IEEE, pp 232–235

Nguyen B, Coelho Y, Bastos T, Krishnan S (2021) Trends in human activity recognition with focus on machine learning and power requirements. Mach Learn Appl 5:100072

Madokoro H, Nix S, Woo H, Sato K (2021) A mini-survey and feasibility study of deep-learning-based human activity recognition from slight feature signals obtained using privacy-aware environmental sensors. Appl Sci 11(24):11807

Raeis H, Kazemi M, Shirmohammadi S (2021) Human activity recognition with device-free sensors for well-being assessment in smart homes. IEEE Instrum Meas Mag 24(6):46–57

Sharma AK, Tomar S, Gupta K (2021) Various approaches of human activity recognition: a review. In: 5th International conference on computing methodologies and communication (ICCMC). IEEE, pp 1668–1676

Gu T, Chen S, Tao X, Lu J (2010) An unsupervised approach to activity recognition and segmentation based on object-use fingerprints. Data Knowl Eng 69(6):533–544

Funding

The authors declare that this research received no support from any individual or organization.

Ethics declarations

Competing interests

The authors declare that they have no known competing financial interests or personal relationships that could have appeared to influence the work reported in this article.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Keyvanpour, M.R., Mehrmolaei, S., Shojaeddini, S.V. et al. HAR-CO: A comparative analytical review for recognizing conventional human activity in stream data relying on challenges and approaches. Multimed Tools Appl 83, 40811–40856 (2024). https://doi.org/10.1007/s11042-023-16795-8

DOI: https://doi.org/10.1007/s11042-023-16795-8