Abstract

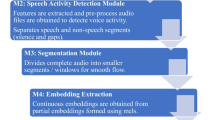

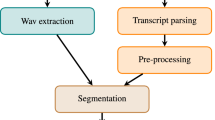

In AI pandemic applications, online automatic AI recording apparatus for official councils such as court trials, business conferences and commercial meetings will become imperative, because it makes the identified opinions and consensus of participants available synchronously and thereby implicitly diminishes social costs such as follow-up disputes and controversies. Hence, in this study, a complete automatic online multi-dialogue recording system is constructed, in which unbounded interleaved-state recurrent neural networks (UIS-RNN) with the proposed crux improvements are exploited to achieve confident speaker diarization. To keep the system robust, a denoising spectral-LSTM, modified from the dual-signal transformation LSTM network (DTLN), strengthens the subsequent crux-improved UIS-RNN and automatic speech recognition (ASR). Finally, the MacBERT model rectifies possibly wrong words in the sentences of the conversation according to the learned context. To make the system a practical software apparatus for unmarked multi-person councils, we have also completed convenient interfaces for operating ASR and speaker diarization, which exhibit the online denoising efficacy and speaker-diarization results and let common users make manual corrections in real time. In extensive experiments, the proposed recording system achieves high accuracy rates for online speaker diarization and speech-separated ASR. The proposed system was examined by cooperating law-court staff, who provided noisy speech recorded in practical court settings to test it. Since the heavy recording burden in their legal-action councils was noticeably alleviated, the court staff endorsed the proposed system as a friendly, labor-saving AI apparatus for online automatic multi-dialogue recording.
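The stage order described in the abstract (denoising, then speaker diarization and ASR, then text correction) can be illustrated with a minimal Python sketch. It is not the authors' implementation: `RecordingPipeline`, `Utterance`, and the `toy_*` functions are hypothetical names, and the toy stages are trivial stand-ins for the denoising spectral-LSTM, the crux-improved UIS-RNN, the ASR engine, and MacBERT.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence, Tuple

Audio = Sequence[float]          # mono samples (placeholder representation)
Segment = Tuple[int, int, str]   # (start_sample, end_sample, speaker_label)


@dataclass
class Utterance:
    """One line of the meeting record: who spoke, when, and what."""
    speaker: str
    start: int
    end: int
    text: str


@dataclass
class RecordingPipeline:
    """Hypothetical wiring of the four stages named in the abstract."""
    denoise: Callable[[Audio], Audio]           # stand-in for the spectral-LSTM
    diarize: Callable[[Audio], List[Segment]]   # stand-in for UIS-RNN
    transcribe: Callable[[Audio], str]          # stand-in for ASR
    correct: Callable[[str], str]               # stand-in for MacBERT

    def run(self, audio: Audio) -> List[Utterance]:
        clean = self.denoise(audio)  # denoising feeds both later stages
        record = []
        for start, end, speaker in self.diarize(clean):
            raw_text = self.transcribe(clean[start:end])
            record.append(Utterance(speaker, start, end, self.correct(raw_text)))
        return record


# Toy stand-ins so the sketch runs end to end (assumptions, not real models).
def toy_denoise(audio: Audio) -> Audio:
    return [0.0 if abs(x) < 0.05 else x for x in audio]


def toy_diarize(audio: Audio) -> List[Segment]:
    half = len(audio) // 2
    return [(0, half, "speaker_1"), (half, len(audio), "speaker_2")]


def toy_transcribe(chunk: Audio) -> str:
    return f"{len(chunk)} samples"


def toy_correct(text: str) -> str:
    return text.strip()


pipeline = RecordingPipeline(toy_denoise, toy_diarize, toy_transcribe, toy_correct)
record = pipeline.run([0.01, 0.4, -0.3, 0.02, 0.5, -0.6])
for utt in record:
    print(f"[{utt.start}:{utt.end}] {utt.speaker}: {utt.text}")
```

Passing the stages in as callables keeps the sketch independent of any particular model library; a real system would swap each toy function for the corresponding trained model.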

Data availability

All datasets and materials for training, validation and testing are available from the resources listed in [31, 32] and [33]. The datasets collected by ourselves are temporarily and partially open at https://drive.google.com/drive/folders/17tGk99_iywuSDT0H_dMBqc_xUcG4Zkui?usp=drive_link.

References

Dehak N et al. (2011) Front-end factor analysis for speaker verification. IEEE Transactions on Audio, Speech, and Language Processing. https://ieeexplore.ieee.org/document/5545402

Zhu W, Pelecanos J (2016) Online speaker diarization using adapted i-vector transforms. In: Proc. of IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). https://doi.org/10.1109/ICASSP.2016.7472638

Variani E et al. (2014) Deep neural networks for small footprint text-dependent speaker verification. In: Proc. of IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). https://ieeexplore.ieee.org/document/6854363

Snyder D et al. (2018) X-vectors: Robust DNN embeddings for speaker recognition. In: Proc. of IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). https://ieeexplore.ieee.org/document/8461375

Zajíc Z et al. (2017) Speaker diarization using convolutional neural network for statistics accumulation refinement. In: INTERSPEECH. https://www.kky.zcu.cz/cs/publications/1/ZajicZbynek_2017_SpeakerDiarization.pdf

Wang Q et al. (2018) Speaker diarization with LSTM. In: Proc. of IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). https://ieeexplore.ieee.org/document/8462628

Garcia-Romero D et al. (2017) Speaker diarization using deep neural network embeddings. In: Proc. of International Conference on Acoustics, Speech and Signal Processing (ICASSP). https://ieeexplore.ieee.org/document/7953094

Westhausen N-L et al. (2020) Dual-signal transformation LSTM network for real-time noise suppression. In: Proc. of INTERSPEECH. https://arxiv.org/abs/2005.07551

Zhang A et al. (2019) Fully supervised speaker diarization. In: Proc. of IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). https://ieeexplore.ieee.org/document/8683892

Higuchi Y et al. (2020) Speaker embeddings incorporating acoustic conditions for diarization. In: Proc. of IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). https://doi.org/10.1109/ICASSP40776.2020.9054273

Li Z et al. (2021) Compositional embedding models for speaker identification and diarization with simultaneous speech from 2+ speakers. In: Proc. of IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). https://ieeexplore.ieee.org/document/9413752

Gan Z et al. (2022) End-to-end speaker diarization of Tibetan based on BLSTM. In: Global Conference on Robotics, Artificial Intelligence and Information Technology (GCRAIT). https://ieeexplore.ieee.org/document/9898377

Kanda N et al. (2022) Transcribe-to-diarize: Neural speaker diarization for unlimited number of speakers using end-to-end speaker-attributed ASR. In: Proc. of IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). https://ieeexplore.ieee.org/document/9746225

Ayasi A et al. (2022) Speaker diarization using BiLSTM and BiGRU with self-attention. In: Proc. of Second International Conference on Next Generation Intelligent Systems (ICNGIS). https://doi.org/10.1109/ICNGIS54955.2022.10079831

Cheng S-W et al. (2022) An attention-based neural network on multiple speaker diarization. In: Proc. of IEEE 4th International Conference on Artificial Intelligence Circuits and Systems (AICAS). https://doi.org/10.1109/AICAS54282.2022.9870007

Pang B et al. (2022) TSUP speaker diarization system for conversational short-phrase speaker diarization challenge. In: Proc. of 13th International Symposium on Chinese Spoken Language Processing (ISCSLP). https://doi.org/10.1109/ISCSLP57327.2022.10037846

Ravanelli M et al. (2018) Light gated recurrent units for speech recognition. IEEE Trans Emerg Top Comput Intell 2(2):92–102. https://doi.org/10.1109/TETCI.2017.2762739

Li J et al. (2019) Improving RNN transducer modeling for end-to-end speech recognition. In: Proc. of IEEE Automatic Speech Recognition and Understanding Workshop (ASRU). https://doi.org/10.1109/ASRU46091.2019.9003906

Hou J, Zhao S (2021) A real-time speech enhancement algorithm based on convolutional recurrent network and Wiener filter. In: Proc. of IEEE 6th International Conference on Computer and Communication Systems (ICCCS). https://doi.org/10.1109/ICCCS52626.2021.9449307

Hu Y et al. (2020) Deep complex convolution recurrent network for phase-aware speech enhancement. In: INTERSPEECH. https://arxiv.org/abs/2008.00264

Hung J-W et al. (2021) Exploiting the non-uniform frequency-resolution spectrograms to improve the deep denoising auto-encoder for speech enhancement. In: Proc. of 7th International Conference on Applied System Innovation (ICASI). https://doi.org/10.1109/ICASI52993.2021.9568478

Jannu C, Vanambathina S-D (2023) An attention based densely connected U-NET with convolutional GRU for speech enhancement. In: Proc. of 3rd International Conference on Artificial Intelligence and Signal Processing (AISP). https://doi.org/10.1109/AISP57993.2023.10134933

Rethage D et al. (2018) A wavenet for speech denoising. In: Proc. of IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). https://ieeexplore.ieee.org/document/8462417

Hao X et al. (2021) Fullsubnet: A fullband and sub-band fusion model for real-time single-channel speech enhancement. In: Proc. of IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). https://arxiv.org/abs/2010.15508

Zhang C et al. (2021) Denoispeech: denoising text to speech with frame-level noise modeling. In: Proc. of IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). https://doi.org/10.1109/ICASSP39728.2021.9413934

Yang G et al. (2022) Speech signal denoising algorithm and simulation based on wavelet threshold. In: Proc. of 4th International Conference on Natural Language Processing (ICNLP). https://doi.org/10.1109/ICNLP55136.2022.00055

Kong Z et al. (2022) Speech denoising in the waveform domain with self-attention. In: Proc. of IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). https://doi.org/10.1109/ICASSP43922.2022.9746169

Zhao S et al. (2021) Monaural speech enhancement with complex convolutional block attention module and joint time frequency losses. In: Proc. of IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). https://doi.org/10.1109/ICASSP39728.2021.9414569

Cui Y et al. (2020) Revisiting pre-trained models for Chinese natural language processing. In: Findings of the Association for Computational Linguistics: EMNLP. arXiv preprint arXiv:2004.13922

Rix A-W et al. (2001) Perceptual evaluation of speech quality (PESQ)-a new method for speech quality assessment of telephone networks and codecs. In: Proc. of IEEE International Conference on Acoustics, Speech, and Signal Processing. https://ieeexplore.ieee.org/document/941023

Dataset of Human Voice Speech Denoising Competition hosted by Industrial Technology Research Institute (ITRI), Taiwan, https://aidea-web.tw/topic/8d381596-ee9d-45d5-b779-188909ccb0c8

Formosa Language Understanding public dataset provided by National Center University, Taiwan, https://scidm.nchc.org.tw/dataset/grandchallenge

Audio-visual dataset VoxCeleb, https://www.robots.ox.ac.uk/~vgg/data/voxceleb/

Rombach R et al. (2022) High-resolution image synthesis with latent diffusion models. In: Proc. of IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR). https://arxiv.org/abs/2112.10752

Acknowledgements

This work was supported by the Ministry of Science and Technology of Taiwan under Grant MOST 111-2221-E006-177-MY2. We also thank the AIGO competition for inspiring us to develop this system and for its high approval. In particular, we are grateful to the Tainan District Court, the main cooperating institution, for examining the proposed system and continually feeding back key advice from its practical use.

Ethics declarations

Conflict of interests

The authors have no conflicts of interest to declare that are relevant to the content of this article.

About this article

Cite this article

Chan, D.Y., Wang, JF. & Chin, HT. A new speaker-diarization technology with denoising spectral-LSTM for online automatic multi-dialogue recording. Multimed Tools Appl 83, 45407–45422 (2024). https://doi.org/10.1007/s11042-023-17283-9

DOI: https://doi.org/10.1007/s11042-023-17283-9