Abstract

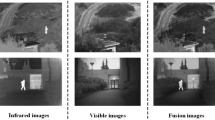

Because of their sampling and pooling operations, deep learning-based infrared and visible-light image fusion methods often suffer from detail loss, especially under low illumination. We therefore propose a novel cross-attention fusion network (CAFNET) that fuses infrared and low-illumination visible-light images using only the first layer of the pre-trained VGG16 network. First, features of the same size as the source images are extracted from each source image by the first layer of the pre-trained VGG16. Then, based on the extracted features, cross attention is calculated to capture the differences between the infrared and visible-light images, and spatial attention is computed to reflect the characteristics of each image. Next, weight maps are obtained by modulating the cross attention and the spatial attention, and the source images are pre-fused according to these weight maps. Finally, Gaussian blur-based detail injection is performed to further enhance the details of the pre-fused image. Experimental results show that, compared with traditional multi-scale and state-of-the-art deep learning-based fusion methods, our approach achieves better performance in both subjective and objective evaluations.
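The pipeline described in the abstract can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the abstract gives no formulas, so every concrete choice here is an assumption made for illustration. In particular, `toy_features` is a hypothetical three-channel stand-in for the first VGG16 layer, spatial attention is assumed to be the channel-wise mean absolute activation, cross attention is assumed to be a sigmoid of the activity difference between the two sources, and the `gain` and `sigma` parameters are invented.

```python
import numpy as np


def gaussian_blur(img, sigma=2.0):
    """Separable Gaussian blur with reflect padding; output keeps the input shape."""
    radius = max(1, int(3 * sigma))
    x = np.arange(-radius, radius + 1, dtype=np.float64)
    kernel = np.exp(-(x ** 2) / (2.0 * sigma ** 2))
    kernel /= kernel.sum()
    padded = np.pad(img, radius, mode="reflect")
    # Filter every row, then every column; "valid" trims the padding again.
    rows = np.apply_along_axis(lambda r: np.convolve(r, kernel, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda c: np.convolve(c, kernel, mode="valid"), 0, rows)


def toy_features(img):
    """Hypothetical stand-in for first-layer VGG16 features: (H, W) -> (3, H, W)."""
    gy, gx = np.gradient(img)
    return np.stack([img, gx, gy])


def spatial_attention(feat):
    """Assumed form: mean absolute activation over channels, (C, H, W) -> (H, W)."""
    return np.abs(feat).mean(axis=0)


def weight_maps(feat_ir, feat_vis, eps=1e-8):
    """Modulate spatial attention by an assumed cross attention; weights sum to 1."""
    sa_ir, sa_vis = spatial_attention(feat_ir), spatial_attention(feat_vis)
    # Assumed cross attention: sigmoid of the per-pixel activity difference.
    ca_ir = 1.0 / (1.0 + np.exp(-(sa_ir - sa_vis)))
    ca_vis = 1.0 - ca_ir
    m_ir, m_vis = sa_ir * ca_ir, sa_vis * ca_vis
    w_ir = (m_ir + eps) / (m_ir + m_vis + 2.0 * eps)
    return w_ir, 1.0 - w_ir


def fuse(ir, vis, sigma=2.0, gain=0.5):
    """Pre-fuse with the weight maps, then inject Gaussian-blur-based details."""
    w_ir, w_vis = weight_maps(toy_features(ir), toy_features(vis))
    pre_fused = w_ir * ir + w_vis * vis
    # Detail layer = image minus its blurred version; keep the stronger detail per pixel.
    d_ir = ir - gaussian_blur(ir, sigma)
    d_vis = vis - gaussian_blur(vis, sigma)
    detail = np.where(np.abs(d_ir) >= np.abs(d_vis), d_ir, d_vis)
    return np.clip(pre_fused + gain * detail, 0.0, 1.0)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    ir_img, vis_img = rng.random((32, 32)), rng.random((32, 32))
    print(fuse(ir_img, vis_img).shape)
```

The sketch expects two registered grayscale images of equal size with values in [0, 1]. Replacing `toy_features` with real first-layer VGG16 activations would leave the remaining steps unchanged, since they only require feature maps with the same spatial size as the source images.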

Acknowledgements

This work was supported by the National Natural Science Foundation of China under Grant Nos. 61876049 and 62172118, the Nature Science key Foundation of Guangxi (2021GXNSFDA196002), the Guangxi Key Laboratory of Image and Graphic Intelligent Processing under Grants GIIP2006, GIIP2007, and GIIP2008, and the Innovation Project of Guangxi Graduate Education under Grants YCB2021070, YCBZ2018052, and 2021YCXS071.

About this article

Cite this article

Zhou, X., Jiang, Z. & Okuwobi, I.P. CAFNET: Cross-Attention Fusion Network for Infrared and Low Illumination Visible-Light Image. Neural Process Lett 55, 6027–6041 (2023). https://doi.org/10.1007/s11063-022-11125-9

DOI: https://doi.org/10.1007/s11063-022-11125-9