Abstract

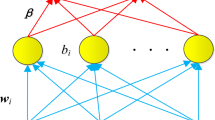

Kernel methods have become a standard solution for a wide range of data analysis problems and are used extensively in signal processing, including the analysis of speech, images, time series, and DNA sequences. The main difficulty in applying a kernel method lies in designing an appropriate kernel for the specific data, and multiple kernel learning (MKL) is one of the principled approaches to this kernel design problem. In this paper, a novel multiple kernel learning method is proposed, based on the notion of Gaussianity as evaluated by the entropy power inequality. The method has two notable characteristics: it uses the entropy power inequality for kernel learning, and it yields an MKL algorithm that optimizes only the kernel combination coefficients, whereas conventional methods must optimize both the combination coefficients and the classifier parameters. The proposed kernel learning approach is shown to achieve good classification accuracy on a set of standard datasets for binary classification.
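
As background, the entropy power inequality named above can be stated in its standard textbook form. The symbols $X$, $Y$, $d$, $h$, and $N$ are notation chosen for this note and are an assumption; the abstract does not give the paper's own formulation or say how the inequality enters the learning criterion.

```latex
% Entropy power of a random vector X in R^d with differential entropy h(X)
N(X) = \frac{1}{2\pi e} \exp\!\left( \frac{2}{d}\, h(X) \right)

% Entropy power inequality, for independent X and Y with densities
N(X + Y) \;\ge\; N(X) + N(Y)

% Equality holds if and only if X and Y are Gaussian
% with proportional covariance matrices.
```

Because equality is attained only in the Gaussian case, the gap between the two sides is a natural candidate for quantifying departure from Gaussianity, which is consistent with the "notion of Gaussianity" the abstract refers to.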

Additional information

Part of this work was supported by JSPS KAKENHI No. 25870811.

About this article

Cite this article

Hino, H. Entropy Power Inequality for Learning Optimal Combination of Kernel Functions. J Sign Process Syst 79, 201–210 (2015). https://doi.org/10.1007/s11265-014-0899-7

DOI: https://doi.org/10.1007/s11265-014-0899-7