Abstract

Recent research on semi-supervised learning (SSL) is mainly based on consistency regularization, which relies on domain-specific data augmentation. Pseudo-labeling is a more general method that has no such restriction, but its performance is limited by noisy training labels. We combine both approaches and focus on generating pseudo-labels using domain-independent weak augmentation. In this article, we propose ReFixMatch-LS and apply it to medical image classification. First, we reduce the impact of noisy artificial labels through label smoothing and consistency regularization. Then, we record the high-confidence pseudo-labels generated in each epoch during training and reuse them to train the model in subsequent epochs. ReFixMatch-LS effectively increases the number of pseudo-labels and improves model performance. We validate the effectiveness of ReFixMatch-LS on skin lesion diagnosis in the ISIC 2018 and ISIC 2019 challenge datasets, obtaining AUCs of 91.54%, 93.68%, 94.55%, and 95.47% on the four proportions of labeled data from ISIC 2018.

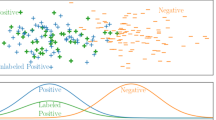

Graphical Abstract
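
The pseudo-label reuse step described in the abstract can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names `update_collection` and `select_targets`, the dictionary-based collection, the default threshold of 0.95, and the rule "fall back to a recorded label when the current prediction is not confident" are all hypothetical choices made for the example.

```python
from typing import Dict, List, Tuple


def update_collection(
    collection: Dict[int, int],
    sample_ids: List[int],
    probs: List[List[float]],
    threshold: float = 0.95,  # assumed value, not taken from the article
) -> Dict[int, int]:
    """Record the predicted class of every sample whose confidence reaches the threshold."""
    for sample_id, p in zip(sample_ids, probs):
        confidence = max(p)
        if confidence >= threshold:
            collection[sample_id] = p.index(confidence)
    return collection


def select_targets(
    collection: Dict[int, int],
    sample_ids: List[int],
    probs: List[List[float]],
    threshold: float = 0.95,  # assumed value, not taken from the article
) -> List[Tuple[int, int]]:
    """Pick (sample_id, pseudo_label) pairs that can contribute to the unlabeled loss.

    A confident current prediction is used directly; otherwise a label recorded
    in an earlier epoch is reused (assumed fallback rule). Samples with neither
    are skipped.
    """
    targets = []
    for sample_id, p in zip(sample_ids, probs):
        confidence = max(p)
        if confidence >= threshold:
            targets.append((sample_id, p.index(confidence)))
        elif sample_id in collection:
            targets.append((sample_id, collection[sample_id]))
    return targets


# Epoch 1: only sample 0 is predicted confidently, so only it is recorded.
collection = update_collection({}, [0, 1], [[0.97, 0.03], [0.60, 0.40]])
# Epoch 2: neither prediction is confident, but sample 0 has a recorded label.
epoch2_targets = select_targets(collection, [0, 1], [[0.50, 0.50], [0.10, 0.90]])
```

In this toy run, sample 0 still contributes a pseudo-label in the second epoch even though its current prediction falls below the threshold, which is how reuse can increase the number of pseudo-labels available for training.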

Abbreviations

- SSL: Semi-supervised learning
- KL: Kullback–Leibler divergence
- ISIC: The International Skin Imaging Collaboration
- LS: Label smoothing
- SRC: Sample relation consistency paradigm
- PLC: Pseudo-label collection
- MT: Mean teacher
- EMA: Exponential moving average
- ACPL: Anti-curriculum pseudo-labeling
- PL avg acc: The average accuracy of pseudo-labeling
- AUC: Area under curve
- K-class: Number of categories
- \(\alpha\): Label smoothing factor
- \(y_{i}\), \(y_{b}\): True label (one-hot coded), or hard label
- \(y_{i}^{LS}\): Soft label obtained by smoothing the hard label
- \(X\): A batch of labeled examples and their labels
- \(U\): A batch of unlabeled examples
- \(x_{b}\): A labeled example
- \(x_{u_{b}}\): An unlabeled example
- \(p_{\mathrm{model}}(y \mid x)\): The model's predicted distribution for sample \(x\)
- \(q_{u_{b}}\): A guessed label distribution for an unlabeled example
- \(\lambda\): A hyperparameter weighting the contribution of the unlabeled examples to the training loss
- \(\lambda(t)\): The warm-up function used for \(\lambda\), \(\lambda(t) = e^{-5(1 - t/\theta)^{2}}\)
- \(\theta\): The total number of warm-up (rising) epochs
- \(\beta\): A parameter to balance categories in the consistency loss, determined by dividing the total number of labeled samples by the number of samples in each category
- \(\gamma\): A hyperparameter to balance the consistency loss and the SRC loss
- \(T\): A hyperparameter setting the confidence threshold used to filter unlabeled samples
- \(\widehat{q}_{u_{b}}\): An artificial label (pseudo-label) assigned to an unlabeled sample
- \(\widehat{q}_{u_{b}}^{\prime}\): The pseudo-label from PLC
- \(p_{\mathrm{model}}\): Student network
- \(p_{\mathrm{model}}^{\prime}\): Teacher network, obtained from the student model by EMA
- \(x_{i}^{\prime}\) and \(x_{i}\): Different weakly augmented versions of the same sample
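
The warm-up function \(\lambda(t)\) defined in the list above can be evaluated with a few lines of Python. This is a sketch under assumptions: the function name `warmup_weight`, its parameter names, and the clamping of \(t\) to the range \([0, \theta]\) (so that the weight stays at its maximum once the warm-up period ends) are illustrative choices, not details taken from the article.

```python
import math


def warmup_weight(epoch: float, rampup_epochs: float, max_weight: float = 1.0) -> float:
    """Evaluate lambda(t) = max_weight * exp(-5 * (1 - t / theta)^2).

    `epoch` plays the role of t and `rampup_epochs` the role of theta.
    Clamping t to [0, theta] is an assumption made for this sketch.
    """
    if rampup_epochs <= 0:
        return max_weight
    t = min(max(epoch, 0.0), rampup_epochs)
    return max_weight * math.exp(-5.0 * (1.0 - t / rampup_epochs) ** 2)


# Weight of the unlabeled loss at a few epochs, with a hypothetical theta of 30.
schedule = [warmup_weight(e, 30) for e in (0, 10, 20, 30, 40)]
```

With this schedule the unlabeled loss contributes almost nothing at the start of training (\(e^{-5} \approx 0.0067\) at \(t = 0\)) and reaches its full weight at \(t = \theta\), which limits the influence of early, unreliable pseudo-labels.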

References

Liu Q, Yu L, Luo L et al (2020) Semi-supervised medical image classification with relation-driven self-ensembling model. IEEE Trans Med Imaging PP.99:1–1

Sohn K, Berthelot D, Li CL et al (2020) FixMatch: simplifying semi-supervised learning with consistency and confidence. https://doi.org/10.48550/arXiv.2001.07685

Müller R, Kornblith S, Hinton G (2019) When does label smoothing help? arXiv preprint arXiv:1906.02629

Lee DH (2013) Pseudo-label: the simple and efficient semi-supervised learning method for deep neural networks. Workshop on challenges in representation learning, ICML 3(2):896

Lukasik M et al (2020) Does label smoothing mitigate label noise? International Conference on Machine Learning. PMLR 2020:6448–6458

Brown T et al (2020) Language models are few-shot learners. Adv Neural Inf Proces Syst 33:1877–1901

Hu R, Singh A (2021) Transformer is all you need: multimodal multitask learning with a unified transformer. https://doi.org/10.48550/arXiv.2102.10772

Hady MFA, Schwenker F (2006) Semi-supervised learning. J R Stat Soc 172.2:530–530

Bachman P, Alsharif O, Precup D (2014) Learning with pseudo-ensembles. In Advances in neural information processing systems, pp 3365–3373. https://doi.org/10.48550/arXiv.1412.4864

Laine S, Aila T (2017) Temporal ensembling for semi-supervised learning. Int Conf Learn Representations. https://doi.org/10.48550/arXiv.1610.02242

Perez F et al (2018) Data augmentation for skin lesion analysis. OR 2.0 Context-Aware Operating Theaters, Computer Assisted Robotic Endoscopy, Clinical Image-Based Procedures, and Skin Image Analysis. Springer, Cham, pp 303–311

Tarvainen A, Valpola H (2017) Mean teachers are better role models: Weight-averaged consistency targets improve semi-supervised deep learning results. Adv Neural Inf Proces Syst 30. https://doi.org/10.48550/arXiv.1703.01780

Miyato T et al (2018) Virtual adversarial training: a regularization method for supervised and semi-supervised learning. IEEE Trans Pattern Anal Mach Intell 41.8:1979–1993

Xie Q et al (2020) Unsupervised data augmentation for consistency training. Adv Neural Inf Proces Syst 33:6256–6268

Xie Q et al (2020) Self-training with noisy student improves imagenet classification. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. https://doi.org/10.48550/arXiv.1911.04252

McClosky D, Charniak E, Johnson M (2006) Effective self-training for parsing. Proceedings of the Human Language Technology Conference of the NAACL, Main Conference

Rosenberg C, Hebert M, Schneiderman H (2005) Semi-supervised self-training of object detection models. WACV/MOTION, vol 1, pp 29–36

Berthelot D, Carlini N, Goodfellow I et al (2019) Mixmatch: A holistic approach to semi-supervised learning. In Advances in Neural Information Processing Systems, pp 5050–5060

Berthelot D, Carlini N, Cubuk ED et al (2019) Remixmatch: semi-supervised learning with distribution alignment and augmentation anchoring. arXiv preprint arXiv:1911.09785

Oliver A et al (2018) Realistic evaluation of semi-supervised learning algorithms. International conference on learning representations, pp 1–15

Szegedy C et al (2016) Rethinking the inception architecture for computer vision. Proceedings of the IEEE conference on computer vision and pattern recognition, pp 2818–2826

Vaswani A et al (2017) Attention is all you need. Adv Neural Inf Proces Syst 30. https://doi.org/10.48550/arXiv.1706.03762

Chorowski J, Jaitly N (2017) Towards better decoding and language model integration in sequence to sequence models. Proc Interspeech 2017:523–527

Zhang H, Cisse M, Dauphin YN et al (2017) mixup: beyond empirical risk minimization. In International Conference on Learning Representations. arXiv preprint arXiv:1710.09412

Wei C et al (2021) Crest: A class-rebalancing self-training framework for imbalanced semi-supervised learning. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, pp 10857–10866

Cheplygina V et al (2019) Not-so-supervised: a survey of semi-supervised, multi-instance, and transfer learning in medical image analysis. Med Image Anal 54:280–296

Kitada S, Iyatomi H (2018) Skin lesion classification with ensemble of squeeze-and-excitation networks and semi-supervised learning. arXiv preprint arXiv:1809.02568

Li X et al (2018) Semi-supervised skin lesion segmentation via transformation consistent self-ensembling model. arXiv preprint arXiv:1808.03887

Zhang J et al (2019) Attention residual learning for skin lesion classification. IEEE Trans Med Imaging 38.9:2092–2103

Shi X et al (2019) An active learning approach for reducing annotation cost in skin lesion analysis. International workshop on machine learning in medical imaging. Springer, Cham, pp 628–636

Liu F et al (2021) Self-supervised mean teacher for semi-supervised chest x-ray classification. International workshop on machine learning in medical imaging. Springer, Cham, pp 426–436

Zoph B et al (2018) Learning transferable architectures for scalable image recognition. Proceedings of the IEEE conference on computer vision and pattern recognition, pp 8697–8710

Tschandl P, Rosendahl C, Kittler H (2018) The HAM10000 dataset, a large collection of multi-source dermatoscopic images of common pigmented skin lesions. Scientific Data 5.1:1–9

Shi W et al (2018) Transductive semi-supervised deep learning using min-max features. Proceedings of the European Conference on Computer Vision (ECCV), pp 299–315

Maher M, Kull M (2021) Instance-based label smoothing for better calibrated classification networks. 20th IEEE International conference on machine learning and applications (ICMLA). IEEE, pp 746–753

Qin Z et al (2020) A GAN-based image synthesis method for skin lesion classification. Comput Methods Prog Biomed 195:105568

Diaz-Pinto A et al (2019) Retinal image synthesis and semi-supervised learning for glaucoma assessment. IEEE Trans Med Imaging 38.9:2211–2218

Bai W et al (2017) Semi-supervised learning for network-based cardiac MR image segmentation. In International conference on medical image computing and computer-assisted intervention. Springer, Cham, pp 253–260

Zhang B et al (2021) Flexmatch: Boosting semi-supervised learning with curriculum pseudo labeling. Adv Neural Inf Proces Syst 34:18408–18419

Wang C et al (2022) Pseudo-labeled auto-curriculum learning for semi-supervised keypoint localization. arXiv preprint arXiv:2201.08613

Combalia M et al (2019) Bcn20000: Dermoscopic lesions in the wild. arXiv preprint arXiv:1908.02288

Li J, Xiong C et al (2021) Comatch: Semi-supervised learning with contrastive graph regularization. Proceedings of the IEEE/CVF international conference on computer vision, pp 9475–9484

Liu F et al (2022) ACPL: anti-curriculum pseudo-labelling for semi-supervised medical image classification. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, pp 20697–20706

Funding

This research is supported by Xinjiang Autonomous Region key research and development project (2021B03001-4).

Ethics declarations

Conflict of interest

The authors declare no competing interests.

Additional information

Publisher's note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Visualization of classification results

Figure 4 shows example classification results of ReFixMatch-LS on the ISIC 2018 [33] (top) and ISIC 2019 [41] (bottom) datasets. Because the model is trained with label smoothing (smoothing factor 0.1), its highest confidence does not exceed 0.99, unlike methods trained with cross-entropy on hard labels, so the confidence values in the figure are reasonable. In addition, Melanoma and Benign keratosis receive highly similar confidence levels in both datasets; in the last example, the two differ by only 0.01%. This suggests that Melanoma and Benign keratosis have similar characteristics, and that the classification performance of ReFixMatch-LS still leaves room for improvement.
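
The effect of label smoothing on the attainable confidence can be illustrated with a short calculation. The sketch below uses the common uniform formulation \(y^{LS} = (1-\alpha)\,y + \alpha/K\); whether ReFixMatch-LS uses exactly this variant is an assumption here, as are the function name `smooth_labels` and the choice of \(K = 7\) classes for the example.

```python
from typing import List


def smooth_labels(one_hot: List[float], alpha: float = 0.1) -> List[float]:
    """Uniform label smoothing: y_ls = (1 - alpha) * y + alpha / K.

    K is taken from the length of the one-hot vector. This is the standard
    formulation and is assumed, not confirmed, to match the article's variant.
    """
    k = len(one_hot)
    return [(1.0 - alpha) * y + alpha / k for y in one_hot]


# Hypothetical 7-class example with the true class at index 2.
hard_label = [0.0, 0.0, 1.0, 0.0, 0.0, 0.0, 0.0]
soft_label = smooth_labels(hard_label, alpha=0.1)
target_confidence = max(soft_label)  # equals 1 - alpha + alpha / K
```

Under these assumptions the smoothed target for the true class is \(1 - 0.1 + 0.1/7 \approx 0.914\), so a model fitted to such targets is pushed toward confidences near that value rather than toward 1, which is consistent with the observation that the reported confidences stay below 0.99.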

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Zhou, S., Tian, S., Yu, L. et al. ReFixMatch-LS: reusing pseudo-labels for semi-supervised skin lesion classification. Med Biol Eng Comput 61, 1033–1045 (2023). https://doi.org/10.1007/s11517-022-02743-5

DOI: https://doi.org/10.1007/s11517-022-02743-5