Abstract

Purpose

Oxygen extraction fraction (OEF) is a biomarker for the viability of brain tissue in ischemic stroke. However, acquisition of the OEF map using positron emission tomography (PET) with oxygen-15 gas is uncomfortable for patients because of the long fixation time, invasive arterial sampling, and radiation exposure. We aimed to predict the OEF map from magnetic resonance (MR) and PET images using a deep convolutional neural network (CNN) and to demonstrate which PET and MR images are optimal as inputs for the prediction of OEF maps.

Methods

Cerebral blood flow at rest (CBF) and during stress (sCBF) and cerebral blood volume (CBV) maps acquired with oxygen-15 PET, together with routine MR images (T1-, T2-, and T2*-weighted images), from 113 patients with steno-occlusive disease were used to train a U-Net. MR and PET images acquired from the other 25 patients were used as test data. We compared the predicted OEF maps, and their intraclass correlation (ICC) with the real OEF values, among models trained with different combinations of MRI, CBF, CBV, and sCBF.

Results

Among the combinations of input images, OEF maps predicted by the model learned with MRI, CBF, CBV, and sCBF maps were the most similar to the real OEF maps (ICC: 0.597 ± 0.082). However, the contrast of predicted OEF maps was lower than that of real OEF maps.

Conclusion

These results suggest that the deep CNN learned useful features from CBF, sCBF, CBV, and MR images and predicts qualitatively realistic OEF maps. These findings suggest that the deep CNN model can shorten the fixation time for 15O PET by skipping 15O2 scans. Further training with a larger data set is required to predict quantitatively accurate OEF maps.

Introduction

Oxygen extraction fraction (OEF) is a biomarker of the viability of brain tissue in ischemic stroke [1,2,3,4]. Positron emission tomography (PET) with oxygen-15 gases (15O PET) is the gold standard method for quantifying OEF maps [5, 6]. Calculating the OEF map requires PET scans for cerebral blood flow (CBF) with C15O2 or H215O and cerebral blood volume (CBV) with C15O, as well as a 15O2 scan. Arterial blood sampling is also required to quantify the OEF, CBF, and CBV in 15O PET. The long fixation time of the 15O PET scans, which comprises preparation for arterial blood sampling and three PET scans (1–2 h), places a burden on patients. These issues prevent the widespread use of 15O PET in clinical settings. Kudomi and colleagues proposed a method of shortening the total fixation time by continuous inhalation of C15O2 and 15O2 gases [7]. However, the continuous inhalation protocol has not been widely used. Various methods to acquire OEF maps with magnetic resonance (MR) imaging data alone [8,9,10,11,12] have also been proposed. These methods have likewise not been widely used in clinical practice because they require special sequences and calculations.

Deep learning, a type of machine learning based on neural networks with many layers [13, 14], has recently come into wide use in computer vision. Deep-learning techniques, such as the convolutional neural network (CNN) and the generative adversarial network, have been applied to image synthesis and transformation between different image types, including denoising/super-resolution [15, 16], synthesis of computed tomography (CT) images from MR images [17,18,19], motion correction [20], missing data recovery [21], and image reconstruction [22,23,24]. For mapping ischemic stroke, prediction of CBF [25] and cerebrovascular reserve [26] maps using CNNs trained with arterial spin labeling (ASL) maps and structural MR images has been proposed.

We hypothesized that a deep CNN could predict OEF maps from the other PET and MR images without the 15O2 scan. To verify this hypothesis, we trained a U-shaped CNN with skip connections (U-Net) [27] using structural MR images, CBV maps, and CBF maps at rest and under acetazolamide stress as inputs and OEF maps as the target. To determine which of the MR, CBF, and CBV images are optimal for predicting the OEF maps, we compared models trained with various combinations of MR images, CBF, and CBV maps as inputs. Finally, we tested the model trained with the best combination.

Materials and methods

Data

We retrospectively analyzed data from patients with unilateral cerebrovascular steno-occlusive disease who underwent both MR and 15O PET scans as part of their routine preoperative examination between 2011 and 2018 (n = 138; age: 65.9 ± 10.3 [range: 27–85] years; female/male = 29/109). This study was performed in accordance with the Ethical Guideline for Clinical Research, issued by the Ministry of Health, Labor and Welfare of the Japanese government (2008), and was approved by the Ethics Committee of Akita Cerebrospinal and Cardiovascular Center (no. 20-01).

We regarded the most recent data for 25 subjects (age: 63.8 ± 10.5 [37–80] years; female/male = 4/21) from the data set as test data, and the remaining data for 113 subjects (age: 66.3 ± 10.3 [27–85] years; female/male = 25/88) as training/validation data.

Scan procedures and image processing

PET data were acquired using an SET-3000GCT/M scanner (Shimadzu Corp., Kyoto, Japan) dedicated to the three-dimensional (3D) acquisition mode [28]. The details of 15O PET scans have been described elsewhere [29]. Motion correction for the 15O PET scans was performed using a previously described software-based method [30]. The OEF maps were estimated using the autoradiographic method [6] based on images acquired through inhaled 15O2 and CBF estimated from H215O PET images [31]. Blood volume was subtracted from the OEF maps by CBV estimated from C15O PET images. The stressed CBF maps were estimated using data acquired with H215O and acetazolamide, as described in previous reports [30, 32].

MR scans to acquire T1-, T2-, and T2*-weighted images were performed with a 3T MR scanner (Verio Dot, Siemens Healthcare, Germany). T1-weighted images were acquired using a turbo spin echo (TSE) sequence with dark fluid technology. The parameters of the T1-weighted image sequences were as follows: repetition time (TR): 2000 ms; echo time (TE): 9.5 ms; inversion time (TI): 858 ms; flip angle: 120°; slice thickness: 5 mm; gap: 1 mm; matrix size: 320 × 320. T2-weighted images were acquired using a TSE sequence with the following parameters: TR: 4000 ms; TE: 93 ms; flip angle: 145°; slice thickness: 5 mm; gap: 1 mm; matrix size: 512 × 512. T2*-weighted images were acquired using a gradient echo sequence with the following parameters: TR: 680 ms; TE: 16 ms; flip angle: 20°; slice thickness: 5 mm; gap: 1 mm; matrix size: 384 × 384.

The T2- and T2*-weighted images and the CBF map at rest were registered to the T1-weighted images. The resulting transformation matrix was then applied to realign the OEF and CBV maps to the T1-weighted images, so that all images were in the space of the T1-weighted images. These registration processes were performed using the FreeSurfer software package (https://surfer.nmr.mgh.harvard.edu/). Each slice of the realigned images was down-sampled to 256 × 256 to serve as input and target data for the deep CNN model. Each image was standardized by its own average.
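The per-image standardization mentioned above can be sketched as follows. This is a minimal illustration, assuming that "standardized by the average" means dividing every pixel by the mean intensity of that image; the function name and the nested-list image representation are hypothetical and are not taken from the authors' code.

```python
from statistics import fmean


def standardize_by_mean(image):
    """Divide every pixel of a 2D image (list of rows) by the image mean.

    Assumption: "standardized by the average" is read as division by the
    mean intensity of the individual image.
    """
    pixels = [value for row in image for value in row]
    mean = fmean(pixels)
    if mean == 0:
        raise ValueError("image mean is zero; cannot standardize")
    return [[value / mean for value in row] for row in image]


# Toy 2 x 2 "slice" with hypothetical intensities.
toy_slice = [[10.0, 20.0], [30.0, 40.0]]
standardized = standardize_by_mean(toy_slice)
```

After this step the mean of each image is 1, which puts maps with different physical units (for example CBF and CBV) on a comparable scale before they are stacked as input channels.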

To extract the brain mask and to perform the region-of-interest analysis described below, spatial normalization of the T1-weighted images was performed using the unified segmentation algorithm [33]. The deformation estimated for the T1-weighted images was applied to the other realigned MR images and to the CBF, CBV, and OEF maps. These spatial normalization processes were performed using the SPM12 software package (https://www.fil.ion.ucl.ac.uk/spm/).

A flowchart of the image pre-processing is shown in Fig. S1 in the Supplementary Materials.

Training

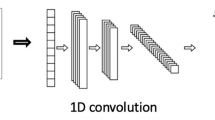

Figure 1 illustrates the U-Net used in this study. The U-Net was trained with the training data set for the 113 subjects. Briefly, the U-Net contains an encoder part, which compresses the data to extract robust image features, and a decoder part, which restores the desired image from the extracted features. The decoder part mirrors the structure of the encoder part. Each level of the encoder and decoder parts contains two convolutional layer blocks. Each block contains a convolutional layer, a batch normalization layer to reduce internal covariate shift [34], and an activation layer with a rectified linear unit [35]. Up-sampling in the decoder part was implemented with a transposed convolutional layer with a stride of 2. Down-sampling in the encoder was implemented with a convolutional layer with a stride of 2, instead of a pooling layer, to improve the expressive ability of the network [36]. The number of down- and up-sampling levels was set to 3 empirically. To avoid loss of spatial information, skip connections were added at each level. Finally, the output images were recovered from the final image features using a convolutional layer with a 1 × 1 kernel.
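To make the three-level down- and up-sampling concrete, the sketch below traces the spatial size of a 256 × 256 slice through the encoder and decoder using the standard output-size formulas for strided and transposed convolutions. The kernel sizes and padding (3 × 3 with padding 1 for the strided convolution, 2 × 2 with no padding for the transposed convolution) are assumptions chosen for illustration; the text only specifies the stride of 2 and the number of levels.

```python
def conv_out(size, kernel=3, stride=2, padding=1):
    """Output length of a strided convolution along one spatial axis."""
    return (size + 2 * padding - kernel) // stride + 1


def tconv_out(size, kernel=2, stride=2, padding=0):
    """Output length of a transposed convolution along one spatial axis."""
    return (size - 1) * stride - 2 * padding + kernel


def trace_unet(size=256, levels=3):
    """Return the spatial sizes along the encoder and decoder paths."""
    encoder = [size]
    for _ in range(levels):
        encoder.append(conv_out(encoder[-1]))
    decoder = [encoder[-1]]
    for _ in range(levels):
        decoder.append(tconv_out(decoder[-1]))
    return encoder, decoder


encoder_sizes, decoder_sizes = trace_unet()
# encoder_sizes == [256, 128, 64, 32]
# decoder_sizes == [32, 64, 128, 256]
```

Under these assumed settings the decoder sizes mirror the encoder sizes exactly, which is what allows the skip connections to concatenate encoder and decoder features at each level without cropping.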

Weights of the network were optimized by minimizing the mean squared error between the real and predicted OEF maps using the Adam algorithm [37]. We used the default hyperparameter values for Adam, except for β1, which was set to 0.5 empirically. The weights were updated in batches of eight image data sets over 100 epochs. The initial learning rate was set to 0.001 and was linearly decayed from the 50th epoch to the end of training. We augmented the training data with rotation and horizontal flips. Training was implemented using the PyTorch library (https://pytorch.org) [38].
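The learning-rate schedule described above can be written as a small function. This is a sketch under stated assumptions: epochs are indexed from 0, and the rate is assumed to decay linearly toward zero at the end of the 100th epoch (the text gives the start of the decay but not its end value). The function name and parameters are hypothetical.

```python
def learning_rate(epoch, base_lr=1e-3, total_epochs=100, decay_start=50):
    """Learning rate for a 0-based epoch index.

    Constant at base_lr before decay_start, then linear decay.
    Assumption: the rate would reach zero right after the final epoch.
    """
    if epoch < decay_start:
        return base_lr
    remaining = total_epochs - epoch
    return base_lr * remaining / (total_epochs - decay_start)


# One value per epoch for the 100-epoch training run.
schedule = [learning_rate(epoch) for epoch in range(100)]
```

In PyTorch, a function of this shape (returning the multiplier `learning_rate(epoch) / base_lr`) could be passed to `torch.optim.lr_scheduler.LambdaLR`, with the optimizer created as `torch.optim.Adam(..., lr=1e-3, betas=(0.5, 0.999))` to reflect the non-default β1.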

Validation for combination of input images

To determine which combination of structural MR images, rest CBF, stressed CBF, and CBV maps was optimal for predicting the OEF maps, we performed the training using various combinations of input images, as presented in Table 1. To simplify the validation, we regarded the structural MR image data set, comprising T1-, T2-, and T2*-weighted images, as one image data type with three channels, which was termed “MRI.” Stressed CBF is denoted as “sCBF” hereafter. The models were validated with five-fold cross-validation. Briefly, we split the data for the 113 training/validation subjects into five subsets, used four subsets as training data and the remaining one as validation data, and repeated the training and evaluation five times so that every subset was used for validation once.
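The five-fold procedure can be sketched in a few lines. This is an illustration only: it assumes that the split is made at the subject level after a random shuffle, and the seed and function name are hypothetical. How the authors assigned subjects to subsets is not stated.

```python
import random


def five_fold_splits(n_subjects=113, n_folds=5, seed=0):
    """Yield (train_ids, val_ids) pairs; each subject is validated once."""
    ids = list(range(n_subjects))
    random.Random(seed).shuffle(ids)
    # Deal subjects into folds round-robin so fold sizes differ by at most 1.
    folds = [ids[i::n_folds] for i in range(n_folds)]
    for k in range(n_folds):
        val_ids = folds[k]
        train_ids = [s for j, fold in enumerate(folds) if j != k for s in fold]
        yield train_ids, val_ids


splits = list(five_fold_splits())
fold_sizes = [len(val_ids) for _, val_ids in splits]
# fold_sizes == [23, 23, 23, 22, 22] for 113 subjects
```

Splitting by subject rather than by slice keeps all slices from one patient in the same subset, so validation slices never come from a patient seen during training.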

We calculated the intraclass correlation for agreement between the predicted and real OEF values on brain voxels (ICC (2, 1)) as an index of the performance in predicting the OEF map. The real and predicted OEF maps were down-sampled by a factor of 4 for rapid calculation of the ICC. Individual brain masks were calculated using spatial normalization to the Montreal Neurological Institute (MNI) template and the inverse transformation to the individual brain. We compared the ICC among the models trained with the different combinations of input images. We performed Dunnett’s test to test the differences from the model with the best ICC. We also calculated the effect sizes of the differences in ICC.
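For readers unfamiliar with ICC (2, 1), the sketch below computes it from scratch for two "raters" (real and predicted values on the same voxels) using the standard two-way random-effects, absolute-agreement, single-measurement formula of Shrout and Fleiss. It is a plain-Python illustration with hypothetical names and toy numbers; the study itself used the Pingouin library.

```python
def icc_2_1(real, predicted):
    """ICC(2,1) for paired measurements: two-way random effects,
    absolute agreement, single measurement, with k = 2 raters."""
    n, k = len(real), 2
    if n != len(predicted) or n < 2:
        raise ValueError("need two equal-length sequences with n >= 2")
    rows = list(zip(real, predicted))
    grand = (sum(real) + sum(predicted)) / (n * k)
    row_means = [(r + p) / k for r, p in rows]
    col_means = [sum(real) / n, sum(predicted) / n]
    # Sums of squares of the two-way ANOVA without replication.
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((v - grand) ** 2 for pair in rows for v in pair)
    ss_error = ss_total - ss_rows - ss_cols
    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )


# Toy example: a prediction that is perfectly correlated with the real
# values but shifted by a constant is penalized by the agreement ICC.
icc_identical = icc_2_1([1.0, 2.0, 3.0, 4.0], [1.0, 2.0, 3.0, 4.0])
icc_shifted = icc_2_1([1.0, 2.0, 3.0, 4.0], [2.0, 3.0, 4.0, 5.0])
```

In the toy example the identical pair gives an ICC of 1, while the shifted pair gives 10/13 (about 0.77) even though its Pearson correlation is 1. This is why an agreement-type ICC is sensitive to the systematic under- or overestimation of OEF values, not only to differences in spatial pattern.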

A four-way repeated-measures analysis of variance (ANOVA) was performed to determine which images contributed to the prediction performance. Binary variables indicating whether each image (MRI, CBF, CBV, and sCBF) was used as an input were regarded as the independent variables, and the ICC values were regarded as the dependent variable. For each independent variable, we calculated the partial eta-squared (ηp2) as the effect size of its contribution to the ICC values. The ICC was calculated with the Pingouin library (https://pingouin-stats.org/index.html) [39]. The ANOVA was performed with the R programming language (https://www.r-project.org/).
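For reference, partial eta-squared is conventionally defined from the ANOVA sums of squares as shown below. The symbols SS_effect (sum of squares attributed to one binary input variable) and SS_error (the error sum of squares associated with that effect) are standard notation introduced here for illustration, not notation taken from the original analysis.

```latex
\eta_p^2 = \frac{SS_{\text{effect}}}{SS_{\text{effect}} + SS_{\text{error}}}
```

A value near 1 means that the variable accounts for most of the variance in ICC that is not explained by the other variables, and a value near 0 means that it accounts for very little.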

To extract cortical OEF values, a volume-of-interest (VOI) template, based on labeled data provided by Neuromorphometrics, Inc. (http://Neuromorphometrics.com) under academic subscription, was applied to the spatially normalized OEF maps. The template VOIs were masked with a gray matter mask, obtained by thresholding the tissue probability map of the MNI template at 0.5. The OEF value for the cerebral cortex of each hemisphere was calculated as the average of the values extracted from nine cortical regions: the frontal, parietal, occipital, temporal, central operculum, anterior cingulate, middle cingulate, posterior cingulate, and insular cortices. The ratio of OEF between the ipsi- and contra-lateral cortical VOIs (rOEF) was then calculated.
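The ipsilateral-to-contralateral OEF ratio can be sketched as below. This is a minimal illustration with assumptions labeled: the hemispheric value is taken as the unweighted mean of the nine regional values, the ratio is taken as ipsilateral divided by contralateral, and the dictionary layout, function names, and numbers are hypothetical.

```python
from statistics import fmean

CORTICAL_REGIONS = (
    "frontal", "parietal", "occipital", "temporal", "central operculum",
    "anterior cingulate", "middle cingulate", "posterior cingulate", "insula",
)


def cortical_oef(regional_oef):
    """Unweighted mean OEF over the nine cortical regions of one hemisphere."""
    missing = [r for r in CORTICAL_REGIONS if r not in regional_oef]
    if missing:
        raise KeyError(f"missing regions: {missing}")
    return fmean(regional_oef[r] for r in CORTICAL_REGIONS)


def oef_ratio(ipsilateral, contralateral):
    """Ratio of ipsilateral to contralateral cortical OEF (assumed direction)."""
    return cortical_oef(ipsilateral) / cortical_oef(contralateral)


# Hypothetical regional values: elevated OEF on the affected side.
ipsi = {region: 0.50 for region in CORTICAL_REGIONS}
contra = {region: 0.40 for region in CORTICAL_REGIONS}
ratio = oef_ratio(ipsi, contra)
```

With these toy values the ratio is about 1.25. A ratio above 1 indicates elevated OEF on the affected side, and a ratio below 1 indicates reduced OEF on the affected side.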

Test

To assess the generalization performance of the trained model, the model with the best prediction performance in the validation was tested using the test data for the most recent 25 subjects. The model trained with all training data for 113 subjects was tested. The ICC for the predicted OEF values on the brain voxels was calculated in a similar manner to the validation.

Results

Validation for the combination of input images

As presented in Fig. 2 and Table 2, the highest ICC with the real OEF values (0.597 ± 0.082) was observed with the model trained with MRI, CBF, CBV, and sCBF images (full model). The OEF maps predicted by the full model were similar to the real OEF maps, as illustrated in Fig. 3. The ICC value for the full model (0.597) indicates moderate agreement between the real and predicted OEF maps. No significant difference in ICC was observed among the models with the top-six mean ICC. In the case illustrated in Fig. 3, we observed no appreciable differences among the OEF maps predicted by the models with the top-three ICC. The ICCs for the models outside the top six were significantly lower than the ICC for the full model. Large effect sizes (> 1.6) for the differences in ICC from the full model were observed for the models trained without resting CBF. The model trained only with MRI resulted in the worst mean ICC and in flat OEF maps, as illustrated in Fig. 3.

Fig. 3 Real and predicted OEF maps for a case (77 years old, male, right internal carotid artery occlusion), with the highest ICC by the full model (MRI + CBF + CBV + sCBF) in the validation data set. The map on the top indicates the real OEF map. The maps in the three rows in the center indicate the OEF maps predicted by the model with the top-three mean ICC among the validation data set (full model; MRI + CBF + CBV; CBF + CBV + sCBF). The bottom map indicates the OEF maps predicted by the model with the worst mean ICC (MRI). ICC values for each model are also shown on the right.

In the case illustrated in Fig. 4, we observed a lower contrast on the predicted OEF maps, even with the full model, as compared with the real OEF maps. This case resulted in the lowest ICC with the full model among the validation data set and had high laterality of OEF. Similar trends were observed in other cases with high laterality of OEF, as shown in Fig. S2 in the Supplementary Materials. In cases with higher real rOEF, the predicted rOEF values were lower than the real ones, as shown in Figs. 5 and S3. In cases with low real rOEF due to cerebral infarction, as illustrated in Fig. S4, the predicted rOEF values were higher than the real rOEF values.

Fig. 4 Real and predicted OEF maps for a case (71 years old, male, right internal carotid artery stenosis) with the lowest ICC by the full model (MRI + CBF + CBV + sCBF) among the validation data set. The legends are the same as those in Fig. 3.

Table 3 indicates that all binary variables for the input images had significant effects on ICC. We observed a much stronger effect of CBF on ICC (ηp2 = 0.576) than that of the other variables. The effects of CBV (ηp2 = 0.096) and MRI (ηp2 = 0.065) on ICC were moderate. The effect of stressed CBF (ηp2 = 0.021) was the weakest among the binary variables.

For the test below, we applied the full model because it had the best ICC and because all input images had significant effects on ICC.

Test

The mean ICC value for the test data sets was 0.591 ± 0.081. We observed no significant differences in ICC between validation and test data sets (Welch’s t test: t = 0.342; p = 0.734; effect size = 0.075). As illustrated in Fig. 6, we observed similar textures to the real OEF map in the predicted OEF map for a case with the highest ICC (0.703). However, the predicted OEF values in this case were apparently lower over the whole brain than the real OEF values. Underestimation of OEF values was observed in 12 cases in the test data sets, as illustrated in Fig. 6. In the case illustrated in Fig. 7, lower contrast on the predicted OEF maps than on the real OEF maps was observed; this case had high laterality of OEF, similar to that observed in the validation data set illustrated in Fig. 4. As in the validation, the predicted cortical rOEF values in the test data set were underestimated in cases with high real rOEF, as illustrated in Fig. 8.
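As a rough plausibility check of the comparison above, Welch's t statistic and a standardized mean difference can be recomputed from the reported summary statistics. This is a sketch under assumptions: the validation group is taken to contain one ICC value per subject (n = 113), the effect size is assumed to be Cohen's d with a pooled standard deviation, and the inputs are the rounded means and standard deviations quoted in the text, so exact agreement with the reported values is not expected.

```python
from math import sqrt


def welch_t(mean_a, sd_a, n_a, mean_b, sd_b, n_b):
    """Welch's t statistic from summary statistics (unequal variances)."""
    standard_error = sqrt(sd_a ** 2 / n_a + sd_b ** 2 / n_b)
    return (mean_a - mean_b) / standard_error


def cohens_d(mean_a, sd_a, n_a, mean_b, sd_b, n_b):
    """Cohen's d using the pooled standard deviation (assumed definition)."""
    pooled_var = ((n_a - 1) * sd_a ** 2 + (n_b - 1) * sd_b ** 2) / (n_a + n_b - 2)
    return (mean_a - mean_b) / sqrt(pooled_var)


# Validation (assumed n = 113) versus test (n = 25), rounded inputs.
t_value = welch_t(0.597, 0.082, 113, 0.591, 0.081, 25)
d_value = cohens_d(0.597, 0.082, 113, 0.591, 0.081, 25)
```

With these rounded inputs the sketch gives t of roughly 0.33 and d of roughly 0.07, close to the reported t = 0.342 and effect size of 0.075; the small gaps are consistent with rounding of the published means and standard deviations.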

Fig. 8 Scatter plot of rOEF values on cerebral cortex for the test data between real and predicted with the full model. The legends are the same as those in Fig. 5.

Discussion

We attempted to predict OEF maps without using the 15O2 scan through machine learning of the other PET and MR images with deep CNN. The predicted OEF maps were similar to the real maps, and the moderate ICC values obtained by the model learned with MRI, CBF, CBV, and stressed CBF maps indicate that deep CNN trained with the PET and MR images can qualitatively predict OEF maps without the 15O2 scan. This finding suggests that by skipping the 15O2 scan, the trained deep CNN can shorten the fixation time for the 15O PET scans.

The best ICC for the full model and the significant effects of all binary variables for the input images suggest that the MRI, CBF, CBV, and stressed CBF maps all contribute to the prediction of the OEF map. The very strong effect of the resting CBF on the prediction was as expected, because the OEF maps used as the target were calculated with the resting CBF maps using the autoradiographic method. The contribution of the CBV maps indicates that the deep CNN model learned an association between the dilation of blood vessels and oxygen supply to the brain tissue in stroke. The contribution of stressed CBF indicates that the deep CNN model can learn the relation between cerebrovascular reactivity and OEF. The cerebrovascular reactivity, measured by the difference between rest and stressed CBF, decreases in advance of the elevation of OEF in stroke. The contribution of the MRI indicates that the CNN model learned two pieces of information from the MRI: one is the anatomical information for the individual brain, and the other is the change in susceptibility that accompanies deoxygenation of hemoglobin. The elevation in OEF results in changes in intensities in vessels in T2*-weighted images [40]. These findings suggest that the MRI, CBF, CBV, and stressed CBF maps are all useful as input images for the prediction of OEF maps using the deep CNN model. Therefore, for this study, we selected the full model trained with MRI, CBF, CBV, and sCBF maps for the test.

The moderate ICC in the test data set was comparable to that in the validation data set; this suggests that the trained CNN model generalized successfully, except for cases with high laterality of OEF. The trained model failed to predict the OEF maps with high laterality, which resulted in the underestimation of rOEF values in the validation and test data sets. Overestimation of rOEF was observed in cases in which the real rOEF was lower than 1.0 due to cerebral infarction. These results reflect the lack of training data, as only a few cases with high laterality and low rOEF were included in the training data set in this study. To quantitatively predict accurate OEF maps, further training with a larger number of cases with high laterality of OEF and low rOEF is required.

Another limitation of this study is that the features learned by the deep CNN model are too complicated for humans to interpret, so we cannot understand what the model has learned. However, the similarity of the predicted OEF maps to the real maps and the moderate ICC suggest that the deep CNN model has learned useful features for the prediction of OEF maps from MRI, CBF, CBV, and sCBF maps. Further studies are required to interpret the model using techniques such as attention networks [41, 42].

The findings in this study suggest that the trained deep CNN can shorten the fixation time for 15O PET scans by approximately 15 min by skipping 15O2 scans. The prediction of OEF maps by the deep CNN trained with MR and CBF images (“MRI + CBF” model), which was similar in quality to that of the full model, implies that we can shorten 15O PET scans by 30 min by skipping the C15O scan as well as the 15O2 scan. However, scans with 15O-water or C15O2 gas, arterial blood sampling, and an in-house cyclotron are still required even if the C15O and 15O2 scans can be skipped. The contribution of CBF maps to the prediction of OEF maps also implies that CBF maps acquired by MR perfusion imaging, such as the ASL method, or by single photon emission computed tomography could be used as alternative training data for the prediction of OEF maps. Further studies are required to demonstrate the validity of the maps acquired by methods other than 15O PET.

In conclusion, the results in this study suggest that the trained deep CNN model can qualitatively predict OEF maps. To predict OEF maps, the deep CNN model can learn useful features from MRI, rest CBF, CBV, and stressed CBF. These findings suggest that by skipping the 15O2 scan, the trained deep CNN model can shorten the fixation time for 15O PET. However, training with a larger data set is required for the prediction of quantitative OEF maps.

Availability of data and materials

Please contact the corresponding author for data requests.

Code availability

Please contact the corresponding author for requests for the custom code used in this study.

References

Derdeyn CP, Videen TO, Yundt KD, Fritsch SM, Carpenter DA, Grubb RL, Powers WJ (2002) Variability of cerebral blood volume and oxygen extraction: stages of cerebral haemodynamic impairment revisited. Brain J Neurol 125:595–607. https://doi.org/10.1093/brain/awf047

Powers WJ, Grubb RL, Raichle ME (1984) Physiological responses to focal cerebral ischemia in humans. Ann Neurol 16:546–552. https://doi.org/10.1002/ana.410160504

Powers WJ (1991) Cerebral hemodynamics in ischemic cerebrovascular disease. Ann Neurol 29:231–240. https://doi.org/10.1002/ana.410290302

Gupta A, Baradaran H, Schweitzer AD, Kamel H, Pandya A, Delgado D, Wright D, Hurtado-Rua S, Wang Y, Sanelli PC (2014) Oxygen extraction fraction and stroke risk in patients with carotid stenosis or occlusion: a systematic review and meta-analysis. Am J Neuroradiol 35:250–255. https://doi.org/10.3174/ajnr.A3668

Okazawa H, Kudo T (2009) Clinical impact of hemodynamic parameter measurement for cerebrovascular disease using positron emission tomography and 15O-labeled tracers. Ann Nucl Med 23:217–227. https://doi.org/10.1007/s12149-009-0235-7

Mintun MA, Raichle ME, Martin WRW, Herscovitch P (1984) Brain oxygen utilization measured with O-15 radiotracers and positron emission tomography. J Nucl Med 25:177–187

Kudomi N, Hirano Y, Koshino K, Hayashi T, Watabe H, Fukushima K, Moriwaki H, Teramoto N, Iihara K, Iida H (2013) Rapid quantitative CBF and CMRO(2) measurements from a single PET scan with sequential administration of dual (15)O-labeled tracers. J Cereb Blood Flow Metab Off J Int Soc Cereb Blood Flow Metab 33:440–448. https://doi.org/10.1038/jcbfm.2012.188

He X, Yablonskiy DA (2007) Quantitative BOLD: mapping of human cerebral deoxygenated blood volume and oxygen extraction fraction: default state. Magn Reson Med 57:115–126. https://doi.org/10.1002/mrm.21108

Bolar DS, Rosen BR, Sorensen AG, Adalsteinsson E (2011) Quantitative imaging of extraction of oxygen and tissue consumption (QUIXOTIC) using venular-targeted velocity-selective spin labeling. Magn Reson Med Off J Soc Magn Reson Med Soc Magn Reson Med 66:1550–1562. https://doi.org/10.1002/mrm.22946

Zhang J, Liu T, Gupta A, Spincemaille P, Nguyen TD, Wang Y (2015) Quantitative mapping of cerebral metabolic rate of oxygen (CMRO2) using quantitative susceptibility mapping (QSM). Magn Reson Med 74:945–952. https://doi.org/10.1002/mrm.25463

Zhang S, Cho J, Nguyen TD, Spincemaille P, Gupta A, Zhu W, Wang Y (2020) Initial experience of challenge-free mri-based oxygen extraction fraction mapping of ischemic stroke at various stages: comparison with perfusion and diffusion mapping. Front Neurosci 14:535441. https://doi.org/10.3389/fnins.2020.535441

Cho J, Kee Y, Spincemaille P, Nguyen TD, Zhang J, Gupta A, Zhang S, Wang Y (2018) Cerebral metabolic rate of oxygen (CMRO2) mapping by combining quantitative susceptibility mapping (QSM) and quantitative blood oxygenation level-dependent imaging (qBOLD). Magn Reson Med 80:1595–1604. https://doi.org/10.1002/mrm.27135

Bengio Y, Lamblin P, Popovici D, Larochelle H (2006) Greedy layer-wise training of deep networks. In: Proceedings of the 19th international conference on neural information processing systems. MIT Press, Cambridge, pp 153–160

Hinton GE, Osindero S, Teh Y-W (2006) A fast learning algorithm for deep belief nets. Neural Comput 18:1527–1554. https://doi.org/10.1162/neco.2006.18.7.1527

Zhao K, Zhou L, Gao S, Wang X, Wang Y, Zhao X, Wang H, Liu K, Zhu Y, Ye H (2020) Study of low-dose PET image recovery using supervised learning with CycleGAN. PLoS ONE 15:e0238455. https://doi.org/10.1371/journal.pone.0238455

Liu J, Yang Y, Wernick MN, Pretorius PH, King MA (2020) Deep learning with noise-to-noise training for denoising in SPECT myocardial perfusion imaging. Med Phys. https://doi.org/10.1002/mp.14577

Su P, Guo S, Roys S, Maier F, Bhat H, Melhem ER, Gandhi D, Gullapalli RP, Zhuo J (2020) Transcranial MR imaging-guided focused ultrasound interventions using deep learning synthesized CT. AJNR Am J Neuroradiol 41:1841–1848. https://doi.org/10.3174/ajnr.A6758

Cusumano D, Lenkowicz J, Votta C, Boldrini L, Placidi L, Catucci F, Dinapoli N, Antonelli MV, Romano A, De Luca V, Chiloiro G, Indovina L, Valentini V (2020) A deep learning approach to generate synthetic CT in low field MR-guided adaptive radiotherapy for abdominal and pelvic cases. Radiother Oncol J Eur Soc Ther Radiol Oncol. https://doi.org/10.1016/j.radonc.2020.10.018

Massa H, Johnson J, McMillan A (2020) Comparison of deep learning synthesis of synthetic CTs using clinical MRI inputs. Phys Med Biol. https://doi.org/10.1088/1361-6560/abc5cb

Ko Y, Moon S, Baek J, Shim H (2020) Rigid and non-rigid motion artifact reduction in X-ray CT using attention module. Med Image Anal 67:101883. https://doi.org/10.1016/j.media.2020.101883

Xia Y, Zhang L, Ravikumar N, Attar R, Piechnik SK, Neubauer S, Petersen SE, Frangi AF (2020) Recovering from missing data in population imaging—cardiac MR image imputation via conditional generative adversarial nets. Med Image Anal 67:101812. https://doi.org/10.1016/j.media.2020.101812

Cha E, Chung H, Kim EY, Ye JC (2020) Unpaired training of deep learning tMRA for flexible spatio-temporal resolution. IEEE Trans Med Imaging PP. https://doi.org/10.1109/TMI.2020.3023620

Arshad M, Qureshi M, Inam O, Omer H (2020) Transfer learning in deep neural network based under-sampled MR image reconstruction. Magn Reson Imaging. https://doi.org/10.1016/j.mri.2020.09.018

Wang B, Liu H (2020) FBP-Net for direct reconstruction of dynamic PET images. Phys Med Biol. https://doi.org/10.1088/1361-6560/abc09d

Guo J, Gong E, Fan AP, Goubran M, Khalighi MM, Zaharchuk G (2019) Predicting 15O-Water PET cerebral blood flow maps from multi-contrast MRI using a deep convolutional neural network with evaluation of training cohort bias. J Cereb Blood Flow Metab. https://doi.org/10.1177/0271678X19888123

Chen DYT, Ishii Y, Fan AP, Guo J, Zhao MY, Steinberg GK, Zaharchuk G (2020) Predicting PET cerebrovascular reserve with deep learning by using baseline MRI: a pilot investigation of a drug-free brain stress test. Radiology 296:627–637. https://doi.org/10.1148/radiol.2020192793

Ronneberger O, Fischer P, Brox T (2015) U-net: convolutional networks for biomedical image segmentation. arXiv:1505.04597

Matsumoto K, Kitamura K, Mizuta T, Tanaka K, Yamamoto S, Sakamoto S, Nakamoto Y, Amano M, Murase K, Senda M (2006) Performance characteristics of a new 3-dimensional continuous-emission and spiral-transmission high-sensitivity and high-resolution PET camera evaluated with the NEMA NU 2–2001 Standard. J Nucl Med 47:83–90

Ibaraki M, Miura S, Shimosegawa E, Sugawara S, Mizuta T, Ishikawa A, Amano M (2008) Quantification of cerebral blood flow and oxygen metabolism with 3-dimensional PET and 15O: validation by comparison with 2-dimensional PET. J Nucl Med 49:50–59. https://doi.org/10.2967/jnumed.107.044008

Matsubara K, Ibaraki M, Nakamura K, Yamaguchi H, Umetsu A, Kinoshita F, Kinoshita T (2013) Impact of subject head motion on quantitative brain 15O PET and its correction by image-based registration algorithm. Ann Nucl Med 27:335–345. https://doi.org/10.1007/s12149-013-0690-z

Raichle ME, Martin WRW, Herscovitch P, Mintun MA, Markham J (1983) Brain blood flow measured with intravenous H215O. II. Implementation and validation. J Nucl Med 24:790–798

Yata K, Suzuki A, Hatazawa J, Shimosegawa E, Nagata K, Sato M, Moroi J (2006) Relationship between cerebral circulatory reserve and oxygen extraction fraction in patients with major cerebral artery occlusive disease: a positron emission tomography study. Stroke 37:534–536. https://doi.org/10.1161/01.STR.0000199085.40000.cf

Ashburner J, Friston KJ (2005) Unified segmentation. Neuroimage 26:839–851. https://doi.org/10.1016/j.neuroimage.2005.02.018

Ioffe S, Szegedy C (2015) Batch normalization: accelerating deep network training by reducing internal covariate shift. ArXiv:1502.03167 Cs

Nair V, Hinton GE (2010) Rectified linear units improve restricted Boltzmann machines. In: Proceedings of the 27th international conference on international conference on machine learning. Omnipress, USA, pp 807–814

Springenberg JT, Dosovitskiy A, Brox T, Riedmiller M (2015) Striving for simplicity: the all convolutional net. In: ICLR (workshop track)

Kingma D, Ba J (2014) Adam: a method for stochastic optimization. ArXiv:1412.6980 Cs

Paszke A, Gross S, Massa F, Lerer A, Bradbury J, Chanan G, Killeen T, Lin Z, Gimelshein N, Antiga L, Desmaison A, Kopf A, Yang E, DeVito Z, Raison M, Tejani A, Chilamkurthy S, Steiner B, Fang L, Bai J, Chintala S (2019) PyTorch: an imperative style, high-performance deep learning library. In: Wallach H, Larochelle H, Beygelzimer A, d'Alché-Buc F, Fox E, Garnett R (eds) Advances in neural information processing systems, vol 32. Curran Associates, Inc., pp 8024–8035

Vallat R (2018) Pingouin: statistics in Python. J Open Source Softw 3:1026. https://doi.org/10.21105/joss.01026

Reichenbach JR, Barth M, Haacke EM, Klarhöfer M, Kaiser WA, Moser E (2000) High-resolution MR venography at 3.0 Tesla. J Comput Assist Tomogr 24:949–957

Bahdanau D, Cho K, Bengio Y (2016) Neural machine translation by jointly learning to align and translate. ArXiv:1409.0473 Cs Stat

Guo Y, Stein J, Wu G, Krishnamurthy A (2019) SAU-net: a universal deep network for cell counting. In: Proceedings of the 10th ACM international conference on bioinformatics, computational biology and health informatics. Association for Computing Machinery, Niagara Falls, pp 299–306

Acknowledgments

We thank the staff of the Akita Cerebrospinal and Cardiovascular Center for the acquisition of data and clinical advice.

Funding

This study was supported by JSPS Grants-in-Aid (KAKENHI) for Scientific Research (C) (Grant No. JP19K08239).

Author information

Contributions

All authors contributed to drafting of the article and gave final approval of the manuscript. Keisuke Matsubara and Masanobu Ibaraki contributed to the conception of this study. All authors contributed to data acquisition. Keisuke Matsubara performed all data analyses and model development. The results were interpreted by Keisuke Matsubara, Masanobu Ibaraki, Yuki Shinohara, and Toshibumi Kinoshita.

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Ethics approval

This study was approved by the ethical committee of Akita Cerebrospinal and Cardiovascular Center (No. 20-01). It was conducted in accordance with the Ethical Guideline for Clinical Research, issued by the Ministry of Health, Labor and Welfare, Japanese Government (2008).

Informed consent

Informed consent was waived for this study by the ethical committee of Akita Cerebrospinal and Cardiovascular Center.

Consent for publication

Consent for publication was waived for this study by the ethical committee of Akita Cerebrospinal and Cardiovascular Center.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Matsubara, K., Ibaraki, M., Shinohara, Y. et al. Prediction of an oxygen extraction fraction map by convolutional neural network: validation of input data among MR and PET images. Int J CARS 16, 1865–1874 (2021). https://doi.org/10.1007/s11548-021-02356-7

DOI: https://doi.org/10.1007/s11548-021-02356-7