Abstract

Purpose

Computer assistance for endoscopic surgery depends on knowledge of the contents of the endoscopic scene. An important step in analysing the video content is real-time surgical tool detection. Most tool detection methods, however, depend on multi-step algorithms that build on prior knowledge such as anchor boxes or non-maximum suppression, which ultimately decreases performance. A real-world difficulty for learning-based methods is limited datasets: training a neural network on data matching a specific distribution (e.g. from a single hospital or showing a specific type of surgery) can result in a lack of generalization.

Methods

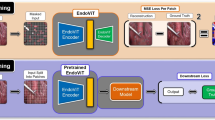

In this paper, we propose the application of a transformer-based architecture for end-to-end tool detection. This architecture promises state-of-the-art accuracy while reducing complexity, which results in improved run-time performance. To address the lack of cross-domain generalization caused by limited datasets, we enhance the architecture with a latent feature space, obtained via variational encoding, that captures common intra-domain information. This feature space models the linear dependencies between domains by constraining their rank.
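The two ingredients named above, variational encoding and a rank constraint across domains, can be illustrated with a minimal NumPy sketch. It is not the implementation used in this work. The function names, the use of per-domain mean latents, and the nuclear norm as a convex surrogate for rank are all assumptions made for illustration.

```python
import numpy as np


def reparameterize(mu, log_var, rng):
    """Draw z = mu + sigma * eps with eps ~ N(0, I) (reparameterization trick)."""
    eps = rng.standard_normal(mu.shape)
    return mu + np.exp(0.5 * log_var) * eps


def kl_to_standard_normal(mu, log_var):
    """KL(N(mu, sigma^2) || N(0, I)), summed over latent dims, averaged over the batch."""
    kl = 0.5 * np.sum(np.exp(log_var) + mu**2 - 1.0 - log_var, axis=1)
    return float(np.mean(kl))


def nuclear_norm_penalty(domain_latents):
    """Assumed rank surrogate: nuclear norm of the stacked per-domain mean latents.

    domain_latents is a list with one (batch, latent_dim) array per domain.
    A small nuclear norm encourages the domain means to be linearly dependent.
    """
    means = np.stack([z.mean(axis=0) for z in domain_latents])
    return float(np.linalg.svd(means, compute_uv=False).sum())


def regularizer(mus, log_vars, rng, beta=1.0, gamma=0.1):
    """Hypothetical combined regularizer; beta and gamma are illustrative weights."""
    latents = [reparameterize(m, lv, rng) for m, lv in zip(mus, log_vars)]
    kl = sum(kl_to_standard_normal(m, lv) for m, lv in zip(mus, log_vars))
    return beta * kl + gamma * nuclear_norm_penalty(latents)
```

In a training setup such a term would be added to the detection loss, with one latent batch per source domain (for example, per hospital or per surgery type). How the rank constraint is actually formulated in the paper may differ from this surrogate.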

Results

The trained neural networks show a distinct improvement on out-of-domain data, indicating better generalization to unseen domains. Inference with the end-to-end architecture runs at up to 138 frames per second (FPS), a speedup over older approaches.

Conclusions

Experimental results on three representative datasets demonstrate the performance of the method. We also show that our approach leads to better domain generalization.

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Ethical approval

For this type of study, formal consent is not required.

Informed consent

This article contains patient data from publicly available datasets. Informed consent was obtained from patients for non-public recordings.

About this article

Cite this article

Reiter, W. Domain generalization improves end-to-end object detection for real-time surgical tool detection. Int J CARS 18, 939–944 (2023). https://doi.org/10.1007/s11548-022-02823-9

DOI: https://doi.org/10.1007/s11548-022-02823-9