Abstract

Purpose

Patients with aortic emergencies, such as aortic dissection and rupture, are at risk of rapid deterioration, necessitating prompt diagnosis. This study introduces a novel automated screening model for computed tomography angiography (CTA) of patients with aortic emergencies, utilizing deep convolutional neural network (DCNN) algorithms.

Methods

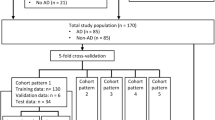

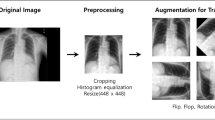

Our model (Model A) first predicted the position of the aorta in each original axial CTA image and cropped the region containing the aorta. It then predicted whether each cropped image showed an aortic lesion. For comparison, we also developed Model B, which predicted the presence or absence of aortic lesions directly from the original, uncropped images. Both models then classified each patient as having or not having an aortic emergency based on the number of consecutive images predicted to show a lesion.
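The patient-level step described above can be illustrated with a minimal Python sketch. It assumes each axial image already has a lesion probability from the image-level classifier. The function names, the 0.5 image-level threshold, and the minimum run length of 3 are hypothetical choices for illustration and are not values reported in this abstract.

```python
from typing import Sequence


def longest_positive_run(slice_probs: Sequence[float],
                         slice_threshold: float = 0.5) -> int:
    """Return the length of the longest run of consecutive images whose
    predicted lesion probability is at or above slice_threshold.

    slice_probs must be ordered by axial position. The default
    threshold is an assumed value, not one taken from the study.
    """
    longest = 0
    current = 0
    for prob in slice_probs:
        if prob >= slice_threshold:
            current += 1
            longest = max(longest, current)
        else:
            current = 0  # the run is broken by a negative image
    return longest


def classify_patient(slice_probs: Sequence[float],
                     slice_threshold: float = 0.5,
                     min_consecutive: int = 3) -> bool:
    """Flag a scan as a suspected aortic emergency when enough
    consecutive images are predicted positive.

    min_consecutive is a hypothetical cut-off for illustration.
    """
    return longest_positive_run(slice_probs, slice_threshold) >= min_consecutive


if __name__ == "__main__":
    # Made-up probabilities for eight consecutive axial images.
    probs = [0.1, 0.9, 0.8, 0.2, 0.7, 0.9, 0.95, 0.1]
    print(longest_positive_run(probs))
    print(classify_patient(probs))
```

Requiring a run of consecutive positive images, rather than any single positive image, is one way to keep isolated false positives from flagging the whole scan. The run length itself can also serve as a continuous patient-level score.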

Results

The models were trained with 216 CTA scans and tested with 220 CTA scans. Model A demonstrated a higher area under the curve (AUC) for patient-level classification of aortic emergencies than Model B (0.995 [95% confidence interval (CI), 0.990–1.000] vs. 0.972 [95% CI, 0.950–0.994]; p = 0.013). Among patients with aortic emergencies, the AUC of Model A for patient-level classification of aortic emergencies involving the ascending aorta was 0.971 (95% CI, 0.931–1.000).
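For readers unfamiliar with the AUC, the following sketch computes it from patient-level labels and scores using its pairwise-ranking definition: the probability that a randomly chosen positive patient receives a higher score than a randomly chosen negative patient. The function name and the example data are hypothetical, and the sketch gives only a point estimate. It does not reproduce the confidence intervals or the between-model comparison reported above.

```python
from typing import Sequence


def auc_from_scores(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Compute the area under the ROC curve by comparing every
    positive/negative pair; ties count as half a win.

    labels: 1 for patients with an aortic emergency, 0 otherwise.
    scores: patient-level scores, higher meaning more suspicious.
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative case")

    wins = 0.0
    for pos_score in positives:
        for neg_score in negatives:
            if pos_score > neg_score:
                wins += 1.0
            elif pos_score == neg_score:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


if __name__ == "__main__":
    # Made-up labels and scores for four patients.
    example_labels = [1, 1, 0, 0]
    example_scores = [0.9, 0.4, 0.5, 0.1]
    print(auc_from_scores(example_labels, example_scores))
```

The pairwise form is quadratic in the number of patients, which is acceptable for a test set of a few hundred scans. A rank-based formulation would be preferable for much larger data sets.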

Conclusion

The model using DCNNs and cropped CTA images of the aorta effectively screened CTA scans of patients with aortic emergencies. This study may help in developing a computer-aided triage system for CT scans that prioritizes reading for patients requiring urgent care and ultimately promotes rapid responses to patients with aortic emergencies.

Funding

This work was supported by JSPS KAKENHI [Grant Number 19K17253]. The funding source had no role in study design; in the collection, analysis and interpretation of data; in the writing of the report; and in the decision to submit the article for publication.

Author information

Contributions

TW: conceptualization, methodology, software, formal analysis, investigation, data curation, writing – original draft, visualization, supervision, project administration, funding acquisition. MT: methodology, writing – review and editing, visualization. HM: resources, writing – review and editing. GK: resources, data curation, writing – review and editing. RK: validation, writing – review and editing. MM: validation, writing – review and editing. NF: validation, writing – review and editing. YM: methodology, resources, writing – review and editing, supervision.

Ethics declarations

Ethical statement

This single-center retrospective study was conducted in accordance with the 1964 Declaration of Helsinki and its later amendments and was approved by the Institutional Review Board of Tokyo Metropolitan Bokutoh Hospital (Approval No.: 30–088).

Informed consent

The requirement for written informed consent was waived due to the retrospective nature of the study.

About this article

Cite this article

Wada, T., Takahashi, M., Matsunaga, H. et al. An automated screening model for aortic emergencies using convolutional neural networks and cropped computed tomography angiography images of the aorta. Int J CARS 18, 2253–2260 (2023). https://doi.org/10.1007/s11548-023-02979-y

DOI: https://doi.org/10.1007/s11548-023-02979-y