Abstract

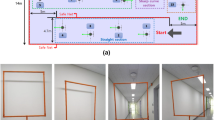

In autonomous drone racing, a drone must fly through a track of gates at high speed. Some solutions rely on camera localisation to control the drone. However, effective localisation is computationally demanding, and the processing time it requires may limit the flight speed. To address this, we propose a deep learning-based camera localisation method that processes a short sequence of images of the scene at a high operating frequency. Our solution is a compact convolutional neural network, based on the Inception network, that takes a sequence of grey-scale images rather than colour images as input; we call this network ‘GreySeqNet’. Our approach aims to improve localisation by feeding a small stack of consecutive images to the network. To save computational effort, we use grey-scale images instead of colour images, which allows the network to use fewer convolutional layers. We conducted experiments in a simulated environment to measure the performance of GreySeqNet on different race tracks with variations in the gates’ heights and positions. Over several test runs, our method estimates the camera pose at an average frequency of 83 Hz on a GPU and 26 Hz on a CPU, with an average camera pose error of 31 cm.
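The input idea described in the abstract (grey-scale frames, fed to the network as a short stack of consecutive images) can be illustrated with a small pure-Python sketch. It is not GreySeqNet itself: the BT.601 luma weights, stacking the frames along the channel axis, the stack length `k`, and the helper names `to_grey`, `GreyStack` and `first_conv_cost` are all assumptions made for illustration. The last helper shows one reason grey input is cheaper: the weight count of a convolution grows linearly with its number of input channels, so a stack of k grey frames has one third of the channels of a stack of k colour frames.

```python
from collections import deque
from typing import Deque, List

Frame = List[List[List[float]]]  # H x W x 3, RGB values in [0, 1]
Grey = List[List[float]]         # H x W, one luminance value per pixel

# Assumption: ITU-R BT.601 luma weights, a common RGB-to-grey convention.
LUMA = (0.299, 0.587, 0.114)


def to_grey(frame: Frame) -> Grey:
    """Collapse each RGB pixel into a single weighted luminance value."""
    return [[sum(w * c for w, c in zip(LUMA, px)) for px in row] for row in frame]


class GreyStack:
    """Ring buffer of the k most recent grey frames (k is hypothetical)."""

    def __init__(self, k: int) -> None:
        # A deque with maxlen drops the oldest frame when a new one arrives.
        self.frames: Deque[Grey] = deque(maxlen=k)

    def push(self, frame: Frame) -> None:
        self.frames.append(to_grey(frame))

    def ready(self) -> bool:
        return len(self.frames) == self.frames.maxlen

    def tensor(self) -> List[List[List[float]]]:
        """Return an H x W x k input, oldest frame in channel 0 (assumed layout)."""
        if not self.ready():
            raise ValueError("not enough frames buffered yet")
        h, w = len(self.frames[0]), len(self.frames[0][0])
        return [[[f[i][j] for f in self.frames] for j in range(w)] for i in range(h)]


def first_conv_cost(kernel: int, in_channels: int, filters: int) -> int:
    """Weights of a kernel x kernel convolution (bias ignored); this also equals
    the multiply-accumulates needed per output pixel."""
    return kernel * kernel * in_channels * filters


if __name__ == "__main__":
    k = 3  # hypothetical stack length
    stack = GreyStack(k)
    for t in range(4):  # push 4 uniform 2 x 4 frames; only the last 3 are kept
        level = t / 3
        stack.push([[[level] * 3 for _ in range(4)] for _ in range(2)])
    x = stack.tensor()
    print(len(x), len(x[0]), len(x[0][0]))  # 2 4 3
    print(first_conv_cost(3, k, 32), first_conv_cost(3, 3 * k, 32))  # 864 2592
```

With k = 3 frames and a hypothetical first layer of 32 filters of size 3 × 3, the grey stack needs 864 weights against 2,592 for colour input. This only illustrates the cost of a single layer; the abstract's claim that grey input saves convolutional layers concerns the network as a whole.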

Availability of data and material

Not applicable.

Code availability

Not applicable.

Funding

This research was supported by Consejo Nacional de Ciencia y Tecnología (CONACYT) with Scholarship no. 719218.

Author information

Contributions

All the authors contributed to the study conception, design, literature search, data collection and analysis. The first draft of the manuscript was written by J. Arturo Cocoma-Ortega and all the authors commented on previous versions of the manuscript. All the authors read and approved the final manuscript.

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Ethical approval

Not applicable.

Informed consent

Not applicable.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

About this article

Cite this article

Cocoma-Ortega, J.A., Martinez-Carranza, J. A compact CNN approach for drone localisation in autonomous drone racing. J Real-Time Image Proc 19, 73–86 (2022). https://doi.org/10.1007/s11554-021-01162-3

DOI: https://doi.org/10.1007/s11554-021-01162-3