Abstract

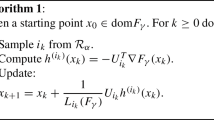

For composite nonsmooth optimization problems that are “regular enough”, proximal gradient descent achieves model identification after a finite number of iterations. For instance, for the Lasso, this implies that the iterates of proximal gradient descent identify the nonzero coefficients after a finite number of steps. The identification property has been shown for various optimization algorithms, such as accelerated gradient descent, Douglas–Rachford, or variance-reduced algorithms; however, results concerning coordinate descent are scarcer. Identification properties often rely on the framework of “partial smoothness”, which is a powerful but technical tool. In this work, we show that partly smooth functions have a simple characterization when the nonsmooth penalty is separable. In this simplified framework, we prove that cyclic coordinate descent achieves model identification in finite time, which leads to explicit local linear convergence rates for coordinate descent. Extensive experiments on various estimators and on real datasets demonstrate that these rates match empirical results well.
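As an illustration of the identification property described above, the following is a minimal sketch of cyclic proximal coordinate descent on the Lasso, written in pure Python. It is not the authors' implementation: the function names (`soft_threshold`, `cyclic_cd_lasso`, `identification_epoch`), the objective scaling \(\frac{1}{2}\Vert y - Xw\Vert ^2 + \lambda \Vert w\Vert _1\), and the toy data are all assumptions made for the example.

```python
def soft_threshold(z, tau):
    """Proximal operator of tau * |.| (soft-thresholding)."""
    if z > tau:
        return z - tau
    if z < -tau:
        return z + tau
    return 0.0


def cyclic_cd_lasso(X, y, lam, n_epochs=100):
    """Cyclic proximal coordinate descent for the (assumed) objective
    0.5 * ||y - X w||^2 + lam * ||w||_1.

    X is a list of rows, y a list of targets. Returns the last iterate and
    the support (set of nonzero coordinates) recorded after each epoch.
    """
    n, p = len(X), len(X[0])
    w = [0.0] * p
    residual = list(y)  # equals y - X w for w = 0
    # Coordinate-wise Lipschitz constants: squared column norms of X.
    lipschitz = [sum(X[i][j] ** 2 for i in range(n)) for j in range(p)]
    supports = []
    for _ in range(n_epochs):
        for j in range(p):  # cyclic order: 0, 1, ..., p - 1
            if lipschitz[j] == 0.0:
                continue  # an all-zero column never leaves zero
            grad_j = -sum(X[i][j] * residual[i] for i in range(n))
            w_new = soft_threshold(w[j] - grad_j / lipschitz[j],
                                   lam / lipschitz[j])
            delta = w_new - w[j]
            if delta != 0.0:
                # Keep the residual in sync with the updated coordinate.
                for i in range(n):
                    residual[i] -= delta * X[i][j]
                w[j] = w_new
        supports.append(frozenset(j for j in range(p) if w[j] != 0.0))
    return w, supports


def identification_epoch(supports):
    """Smallest epoch index k such that supports[k:] all equal the last one."""
    final = supports[-1]
    k = len(supports)
    while k > 0 and supports[k - 1] == final:
        k -= 1
    return k


# Toy orthogonal design (hypothetical data, chosen so the answer is easy to check).
X_toy = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
y_toy = [3.0, 0.5, -2.0]
w_toy, supports_toy = cyclic_cd_lasso(X_toy, y_toy, lam=1.0, n_epochs=5)
print(w_toy, sorted(supports_toy[-1]), identification_epoch(supports_toy))
```

On this orthogonal toy design the support is fixed from the first epoch onward. On correlated designs the support typically changes during the early epochs and then freezes, and `identification_epoch` reports when that happens within the epochs that were run.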

Data availability

The data used in this article are openly available and can be downloaded from the LIBSVM website: https://www.csie.ntu.edu.tw/~cjlin/libsvmtools/datasets/.

Notes

Note that some local rates are shown in [56], but under strong hypotheses.

References

Bach, F.: Consistency of the group Lasso and multiple Kernel learning. J. Mach. Learn. Res. 9, 1179–1225 (2008)

Beck, A., Tetruashvili, L.: On the convergence of block coordinate type methods. SIAM J. Imaging Sci. 23(4), 651–694 (2013)

Bertrand, Q., Klopfenstein, Q., Blondel, M., Vaiter, S., Gramfort, A., Salmon, J.: Implicit differentiation of lasso-type models for hyperparameter optimization. In: International Conference on Machine Learning (2020)

Bertrand, Q., Klopfenstein, Q., Massias, M., Blondel, M., Vaiter, S., Gramfort, A., Salmon, J.: Implicit differentiation for fast hyperparameter selection in non-smooth convex learning. arXiv preprint arXiv:2105.01637 (2021)

Bertsekas, D.P.: On the Goldstein-Levitin-Polyak gradient projection method. IEEE Trans. Autom. Control 21(2), 174–184 (1976)

Bertsekas, D.P.: Convex Optimization Theory, Chapter 1 Exercises and Solutions: Extended Version. Massachusetts Institute of Technology (2009). http://www.athenasc.com/convexdualitysol1.pdf

Boser, B.E., Guyon, I.M., Vapnik, V.N.: A training algorithm for optimal margin classifiers. In: Proceedings of the Fifth Annual Workshop on Computational Learning Theory, pp. 144–152. ACM (1992)

Burke, J.V., Moré, J.J.: On the identification of active constraints. SIAM J. Numer. Anal. 25(5), 1197–1211 (1988)

Chang, C.-C., Lin, C.-J.: LIBSVM: a library for support vector machines. ACM Trans. Intell. Syst. Technol. 2(3), 27 (2011)

Chen, S.S., Donoho, D.L., Saunders, M.A.: Atomic decomposition by basis pursuit. SIAM J. Sci. Comput. 20(1), 33–61 (1998)

Combettes, P.L., Wajs, V.R.: Signal recovery by proximal forward-backward splitting. Multiscale Model. Simul. 4(4), 1168–1200 (2005)

Fadili, J., Garrigos, G., Malick, J., Peyré, G.: Model consistency for learning with mirror-stratifiable regularizers. In: AISTATS, pp. 1236–1244. PMLR (2019)

Fadili, J., Malick, J., Peyré, G.: Sensitivity analysis for mirror-stratifiable convex functions. SIAM J. Optim. 28(4), 2975–3000 (2018)

Fercoq, O., Richtárik, P.: Accelerated, parallel and proximal coordinate descent. SIAM J. Optim. 25(3), 1997–2013 (2015)

Friedman, J., Hastie, T.J., Höfling, H., Tibshirani, R.: Pathwise coordinate optimization. Ann. Appl. Stat. 1(2), 302–332 (2007)

Friedman, J., Hastie, T.J., Tibshirani, R.: Regularization paths for generalized linear models via coordinate descent. J. Stat. Softw. 33(1), 1–22 (2010)

Hare, W.L.: Identifying active manifolds in regularization problems. In: Fixed-Point Algorithms for Inverse Problems in Science and Engineering, pp. 261–271. Springer, London (2011)

Hare, W.L., Lewis, A.S.: Identifying active constraints via partial smoothness and prox-regularity. J. Convex Anal. 11(2), 251–266 (2004)

Hare, W.L., Lewis, A.S.: Identifying active manifolds. Algorithmic Oper. Res. 2(2), 75–75 (2007)

Hong, M., Wang, X., Razaviyayn, M., Luo, Z.-Q.: Iteration complexity analysis of block coordinate descent methods. Math. Program. 163(1–2), 85–114 (2017)

Iutzeler, F., Malick, J.: Nonsmoothness in machine learning: Specific structure, proximal identification, and applications. Set-Valued Var. Anal. 28(4), 661–678 (2020)

Leventhal, D., Lewis, A.S.: Randomized methods for linear constraints: Convergence rates and conditioning. Math. Oper. Res. 35(3), 641–654 (2010)

Lewis, A.S.: Active sets, nonsmoothness, and sensitivity. SIAM J. Optim. 13(3), 702–725 (2002)

Li, X., Zhao, T., Arora, R., Liu, H., Hong, M.: On faster convergence of cyclic block coordinate descent-type methods for strongly convex minimization. J. Mach. Learn. Res. 18(1), 6741–6764 (2017)

Liang, J., Fadili, J., Peyré, G.: Local linear convergence of forward-backward under partial smoothness. Adv. Neural Inf. Process. Syst. 27, 1970–1978 (2014)

Liang, J., Fadili, J., Peyré, G.: Activity identification and local linear convergence of forward-backward-type methods. SIAM J. Optim. 27(1), 408–437 (2017)

Lions, P.-L., Mercier, B.: Splitting algorithms for the sum of two nonlinear operators. SIAM J. Numer. Anal. 16(6), 964–979 (1979)

Luo, Z.-Q., Tseng, P.: On the convergence of the coordinate descent method for convex differentiable minimization. J. Optim. Theory Appl. 72(1), 7–35 (1992)

Massias, M., Gramfort, A., Salmon, J.: Celer: a fast solver for the lasso with dual extrapolation. In: ICML 80, pp. 3315–3324 (2018)

Massias, M., Vaiter, S., Gramfort, A., Salmon, J.: Dual extrapolation for sparse generalized linear models. J. Mach. Learn. Res. 21, 1–33 (2020)

Necoara, I., Nesterov, Y., Glineur, F.: Linear convergence of first order methods for non-strongly convex optimization. Math. Program. 175(1–2), 69–107 (2019)

Necoara, I., Patrascu, A.: A random coordinate descent algorithm for optimization problems with composite objective function and linear coupled constraints. Comput. Optim. Appl. 57(2), 307–337 (2014)

Nesterov, Y.: Efficiency of coordinate descent methods on huge-scale optimization problems. SIAM J. Optim. 22(2), 341–362 (2012)

Nutini, J.: Greed is Good: Greedy Optimization Methods for Large-Scale Structured Problems. PhD thesis, University of British Columbia (2018)

Nutini, J., Laradji, I., Schmidt, M.: Let’s Make Block Coordinate Descent Go Fast: Faster Greedy Rules, Message-Passing, Active-Set Complexity, and Superlinear Convergence. arXiv preprint arXiv:1712.08859 (2017)

Nutini, J., Schmidt, M., Hare, W.: Active-set complexity of proximal gradient: How long does it take to find the sparsity pattern? Optim. Lett. 13(4), 645–655 (2019)

Nutini, J., Schmidt, M.W., Laradji, I.H., Friedlander, M.P., Koepke, H.A.: Coordinate descent converges faster with the Gauss-Southwell rule than random selection. In: ICML, pp. 1632–1641 (2015)

Pedregosa, F., Varoquaux, G., Gramfort, A., Michel, V., Thirion, B., Grisel, O., Blondel, M., Prettenhofer, P., Weiss, R., Dubourg, V., Vanderplas, J., Passos, A., Cournapeau, D., Brucher, M., Perrot, M., Duchesnay, E.: Scikit-learn: Machine learning in Python. J. Mach. Learn. Res. 12, 2825–2830 (2011)

Poliquin, R., Rockafellar, R.: Prox-regular functions in variational analysis. Trans. Am. Math. Soc. 348(5), 1805–1838 (1996)

Polyak, B.T.: Introduction to Optimization. Optimization Software, Inc., Publications Division, New York (1987)

Poon, C., Liang, J.: Trajectory of alternating direction method of multipliers and adaptive acceleration. Adv. Neural Inf. Process. Syst. 32, 7357–7365 (2019)

Poon, C., Liang, J., Schönlieb, C.-B.: Local convergence properties of SAGA/Prox-SVRG and acceleration. In: International Conference on Machine Learning 90, pp. 4121–4129 (2018)

Qu, Z., Richtárik, P.: Coordinate descent with arbitrary sampling I: Algorithms and complexity. Optim. Methods Softw. 31(5), 829–857 (2016)

Qu, Z., Richtárik, P.: Coordinate descent with arbitrary sampling II: Expected separable overapproximation. Optim. Methods Softw. 31(5), 858–884 (2016)

Razaviyayn, M., Hong, M., Luo, Z.-Q.: A unified convergence analysis of block successive minimization methods for nonsmooth optimization. SIAM J. Optim. 23(2), 1126–1153 (2013)

Richtárik, P., Takáč, M.: Iteration complexity of randomized block-coordinate descent methods for minimizing a composite function. Math. Program. 144(1–2), 1–38 (2014)

Saha, A., Tewari, A.: On the nonasymptotic convergence of cyclic coordinate descent methods. SIAM J. Optim. 23(1), 576–601 (2013)

Shalev-Shwartz, S., Tewari, A.: Stochastic methods for l1-regularized loss minimization. J. Mach. Learn. Res. 12, 1865–1892 (2011)

Shalev-Shwartz, S., Zhang, T.: Stochastic dual coordinate ascent methods for regularized loss minimization. J. Mach. Learn. Res. 14, 567–599 (2013)

She, J., Schmidt, M.: Linear convergence and support vector identification of sequential minimal optimization. In: 10th NIPS Workshop on Optimization for Machine Learning, vol. 5 (2017)

Shi, H.-J.M., Tu, S., Xu, Y., Yin, W.: A primer on coordinate descent algorithms. ArXiv e-prints (2016)

Sun, R., Hong, M.: Improved iteration complexity bounds of cyclic block coordinate descent for convex problems. Adv. Neural Inf. Process. Syst. 28, 1306–1314 (2015)

Tao, S., Boley, D., Zhang, S.: Local linear convergence of ISTA and FISTA on the LASSO problem. SIAM J. Optim. 26(1), 313–336 (2016)

Tibshirani, R.: Regression shrinkage and selection via the lasso. J. R. Stat. Soc. Ser. B Stat. Methodol. 58(1), 267–288 (1996)

Tseng, P.: Convergence of a block coordinate descent method for nondifferentiable minimization. J. Optim. Theory Appl. 109(3), 475–494 (2001)

Tseng, P., Yun, S.: Block-coordinate gradient descent method for linearly constrained nonsmooth separable optimization. J. Optim. Theory Appl. 140(3), 513 (2009)

Vaiter, S., Golbabaee, M., Fadili, J., Peyré, G.: Model selection with low complexity priors. Inf. Inference: A J. IMA 4(3), 230–287 (2015)

Vaiter, S., Peyré, G., Fadili, J.: Model consistency of partly smooth regularizers. IEEE Trans. Inf. Theory 64(3), 1725–1737 (2018)

Wang, H., Zeng, H., Wang, J.: Convergence rate analysis of proximal iteratively reweighted \(\ell _1\) methods for \(\ell _p\) regularization problems. Optim. Lett., pp. 1–23 (2022)

Wang, H., Zeng, H., Wang, J., Wu, Q.: Relating \(\ell _p\) regularization and reweighted \(\ell _1\) regularization. Optim. Lett. 15(8), 2639–2660 (2021)

Wright, S.J.: Identifiable surfaces in constrained optimization. SIAM J. Control. Optim. 31(4), 1063–1079 (1993)

Wright, S.J.: Accelerated block-coordinate relaxation for regularized optimization. SIAM J. Optim. 22(1), 159–186 (2012)

Xu, Y., Yin, W.: A globally convergent algorithm for nonconvex optimization based on block coordinate update. J. Sci. Comput. 72(2), 700–734 (2017)

Zhang, T.: Solving large scale linear prediction problems using stochastic gradient descent algorithms. In: Proceedings of the Twenty-First International Conference on Machine Learning, p. 116 (2004)

Zhao, P., Yu, B.: On model selection consistency of lasso. J. Mach. Learn. Res. 7, 2541–2563 (2006)

Zou, H., Hastie, T.J.: Regularization and variable selection via the elastic net. J. R. Stat. Soc. Ser. B Stat. Methodol. 67, 301–320 (2005)

About this article

Cite this article

Klopfenstein, Q., Bertrand, Q., Gramfort, A. et al. Local linear convergence of proximal coordinate descent algorithm. Optim Lett 18, 135–154 (2024). https://doi.org/10.1007/s11590-023-01976-z

DOI: https://doi.org/10.1007/s11590-023-01976-z