Abstract

Recently, a growing number of scientific applications have been migrated to the cloud. To address the problems that clouds bring, more and more researchers have begun to consider multiple optimization goals in workflow scheduling. However, previous works overlook some details that are challenging but essential. Most existing multi-objective workflow scheduling algorithms ignore weight selection, which may degrade the quality of their solutions. Moreover, we find that the well-known partial critical path (PCP) strategy, which has been widely used to meet deadline constraints, cannot accurately reflect the situation at each time step. Since workflow scheduling is an NP-hard problem, self-optimizing algorithms are well suited to solving it.
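PCP-style heuristics derive per-task sub-deadlines from quantities such as each task's latest finish time (LFT), which is propagated backwards from the workflow deadline. The sketch below shows only that backward pass, assuming fixed estimated execution times and data-transfer times. It is a simplification: real PCP also identifies partial critical paths and selects VM types, and this sketch does not model the per-step updates that MPCP adds. The function name, task names, and numbers are hypothetical.

```python
from typing import Dict, List, Tuple


def latest_finish_times(
    children: Dict[str, List[str]],
    exec_time: Dict[str, float],
    transfer: Dict[Tuple[str, str], float],
    deadline: float,
) -> Dict[str, float]:
    """Backward pass over a workflow DAG (simplified, hypothetical helper).

    LFT(exit task) = deadline
    LFT(t) = min over children c of LFT(c) - exec_time[c] - transfer[(t, c)]
    Missing transfer entries are treated as zero (e.g. both tasks on one VM).
    """
    lft: Dict[str, float] = {}

    def visit(task: str) -> float:
        # Memoized recursion: each task is evaluated once (fine for small DAGs).
        if task not in lft:
            kids = children.get(task, [])
            if not kids:
                lft[task] = deadline  # exit tasks may finish as late as the deadline
            else:
                lft[task] = min(
                    visit(c) - exec_time[c] - transfer.get((task, c), 0.0) for c in kids
                )
        return lft[task]

    for task in exec_time:
        visit(task)
    return lft


# Hypothetical diamond-shaped workflow: t1 -> {t2, t3} -> t4
children = {"t1": ["t2", "t3"], "t2": ["t4"], "t3": ["t4"]}
exec_time = {"t1": 2.0, "t2": 3.0, "t3": 5.0, "t4": 1.0}
transfer = {("t1", "t2"): 1.0, ("t1", "t3"): 1.0, ("t2", "t4"): 1.0, ("t3", "t4"): 1.0}
print(latest_finish_times(children, exec_time, transfer, deadline=20.0))
# {'t4': 20.0, 't2': 18.0, 't3': 18.0, 't1': 12.0}
```

Here t1 must finish by 12.0 because the longer branch after it needs 1 + 5 + 1 + 1 = 8 time units (transfer, t3, transfer, t4) before the deadline of 20.0.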

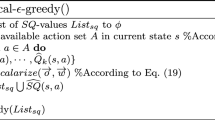

This paper addresses a workflow scheduling problem with a deadline constraint. We design a deadline-constrained scientific workflow scheduling algorithm based on multi-objective reinforcement learning (RL), called DCMORL. DCMORL scalarizes its Q-values with the Chebyshev scalarization function, which is well suited to selecting weights for the objectives. We also propose an improved version of the PCP strategy, called MPCP, whose sub-deadlines are updated regularly during the scheduling phase so that they accurately reflect the situation at each time step. The optimization objectives are to minimize execution cost and energy consumption within a given deadline. Finally, we use four scientific workflows to compare DCMORL with several representative scheduling algorithms. The results indicate that DCMORL outperforms these algorithms. To the best of our knowledge, this is the first work to apply RL to a deadline-constrained workflow scheduling problem.
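Chebyshev scalarization, in the form used in scalarized multi-objective RL (e.g. Van Moffaert, Drugan and Nowé, 2013), scores an action by its largest weighted distance to a utopian point z*, SQ(s, a) = max_o w_o |Q_o(s, a) - z*_o|, and greedy selection picks the action with the smallest score. The sketch below assumes one Q-vector per action for a single state, with rewards expressed as negated cost and energy so that larger is better. The action names, Q-values, weights, and utopian point are all hypothetical; this is not the authors' state, action, or reward design.

```python
from typing import Mapping, Sequence


def chebyshev_sq(q: Sequence[float], weights: Sequence[float], utopia: Sequence[float]) -> float:
    """Chebyshev-scalarized Q-value: max over objectives o of w_o * |Q_o(s, a) - z*_o|."""
    if not (len(q) == len(weights) == len(utopia)):
        raise ValueError("q, weights and utopia must have one entry per objective")
    return max(w * abs(qo - zo) for qo, w, zo in zip(q, weights, utopia))


def greedy_action(
    q_table: Mapping[str, Sequence[float]],
    weights: Sequence[float],
    utopia: Sequence[float],
) -> str:
    """Greedy choice: the action whose Q-vector is closest (weighted L-infinity) to z*."""
    return min(q_table, key=lambda a: chebyshev_sq(q_table[a], weights, utopia))


# Hypothetical Q-vectors for one state; rewards are negated (cost, energy),
# so larger is better and the utopian point z* lies above every entry.
q_table = {
    "vm_small": [-4.0, -6.0],
    "vm_large": [-7.0, -3.0],
    "vm_medium": [-5.0, -5.0],
}
weights = [0.5, 0.5]
utopia = [-2.0, -2.0]
print(greedy_action(q_table, weights, utopia))  # vm_medium
```

With these numbers, an equal-weight linear sum gives every action the same score (-5.0) and cannot separate them, whereas the Chebyshev scores are 2.0, 2.5, and 1.5, so the balanced vm_medium is preferred.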

Acknowledgements

We would like to thank the anonymous referees for their helpful suggestions for improving this paper.

This work was supported in part by the National Natural Science Foundation of China (Grant No. 61672323), in part by the Fundamental Research Funds of Shandong University (2017JC043), in part by the Key Research and Development Program of Shandong Province (2017GGX10122, 2017GGX10142, and 2019JZZY010134), and in part by the Natural Science Foundation of Shandong Province (ZR2019MF072).

Author information

Yao Qin received his BS degree from Shandong University, China in 2014. He is currently pursuing a PhD degree in Computer Science and Technology at Shandong University, China. His research interests include software-defined networking, cloud computing, and network optimization.

Hua Wang received his PhD degree from Nanjing University of Science and Technology, China in 2003. From 2005 to 2008, he was a Post-Doctoral Fellow with the School of Computer Science and Technology, Shandong University, China. He is currently a professor at the School of Software, Shandong University, China, where he leads the Network Optimization Research Group. His research interests include network optimization, network algorithms, network measurement, network architecture and protocols, and network simulation. His research has been supported by the China Next Generation Internet Project and the NSF of China.

Shanwen Yi received his master’s degree from Shandong University, China in 2010. He is currently pursuing a PhD degree at Shandong University, China. His main research interests include network optimization, network algorithms, network intelligence, and network architecture and protocols.

Xiaole Li received his PhD degree from Shandong University, China in 2019. He is currently a teacher at Linyi University, China. His main research interests include network optimization, network algorithms, network intelligence, and network architecture and protocols.

Linbo Zhai received his BS and MS degrees from the School of Information Science and Engineering at Shandong University, China in 2004 and 2007, respectively. He received his PhD degree from the School of Electronic Engineering at Beijing University of Posts and Telecommunications, China in 2010. Since then, he has worked as a teacher at Shandong Normal University, China. His current research interests include cognitive radio, crowdsourcing, and distributed network optimization.

About this article

Cite this article

Qin, Y., Wang, H., Yi, S. et al. A multi-objective reinforcement learning algorithm for deadline constrained scientific workflow scheduling in clouds. Front. Comput. Sci. 15, 155105 (2021). https://doi.org/10.1007/s11704-020-9273-z

DOI: https://doi.org/10.1007/s11704-020-9273-z