Abstract

In this paper, we propose Meta-BN Net, a lightweight network with an adaptive batch normalization module, for few-shot classification. Unlike existing few-shot learning methods, which rely on complex models or algorithms, our approach extends batch normalization, an essential component of current deep neural network training whose potential has not been fully explored. In particular, a meta-module is introduced that learns to adaptively generate more powerful affine transformation parameters, known as γ and β, for the batch normalization layer, so that the representation ability of batch normalization can be fully exploited. Experimental results on miniImageNet demonstrate that Meta-BN Net not only outperforms the baseline methods by a large margin but is also competitive with recent state-of-the-art few-shot learning methods. We also conduct experiments on the Fewshot-CIFAR100 and CUB datasets, and the results show that our approach is effective in boosting the performance of weak baseline networks. We believe our findings can motivate further exploration of the undiscovered capacity of basic components in neural networks, as well as of more efficient few-shot learning methods.
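The idea of generating the affine parameters can be illustrated with a minimal sketch. This is not the authors' implementation: the abstract does not specify the meta-module's architecture or its inputs, so the per-channel linear map on batch statistics used here, along with the names `batch_stats`, `meta_module`, `meta_batch_norm`, `w_gamma`, and `w_beta`, are assumptions made purely for illustration. The sketch only shows the general pattern: normalize with batch statistics, then scale and shift with γ and β that are produced by a small function instead of being fixed learned constants.

```python
import math


def batch_stats(x):
    """Per-channel mean and biased variance of a batch given as N rows of C floats."""
    n = len(x)
    channels = list(zip(*x))
    mean = [sum(col) / n for col in channels]
    var = [sum((v - m) ** 2 for v in col) / n for col, m in zip(channels, mean)]
    return mean, var


def meta_module(mean, var, w_gamma, w_beta):
    """Hypothetical meta-module mapping batch statistics to per-channel (gamma, beta).

    Assumption: a per-channel linear map on (mean, var). With all-zero weights
    it returns gamma = 1 and beta = 0, which recovers plain normalization.
    """
    gamma = [1.0 + wg[0] * m + wg[1] * v for wg, m, v in zip(w_gamma, mean, var)]
    beta = [wb[0] * m + wb[1] * v for wb, m, v in zip(w_beta, mean, var)]
    return gamma, beta


def meta_batch_norm(x, w_gamma, w_beta, eps=1e-5):
    """Normalize each channel, then apply the generated affine transformation."""
    mean, var = batch_stats(x)
    gamma, beta = meta_module(mean, var, w_gamma, w_beta)
    return [
        [g * (v - m) / math.sqrt(s + eps) + b
         for v, m, s, g, b in zip(row, mean, var, gamma, beta)]
        for row in x
    ]


if __name__ == "__main__":
    features = [[1.0, 10.0], [3.0, 30.0]]      # 2 samples, 2 channels
    w_gamma = [(0.0, 1.0), (0.0, 0.0)]          # illustrative weights only
    w_beta = [(0.5, 0.0), (0.0, 0.0)]
    print(meta_batch_norm(features, w_gamma, w_beta))
```

In this sketch the meta-module's weights would be the trainable quantities, and setting them to zero reduces the layer to standard batch normalization without a learned affine transformation, which makes the adaptive part easy to isolate.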

References

Krizhevsky A, Sutskever I, Hinton G E. Imagenet classification with deep convolutional neural networks. In: Proceedings of the 26th Conference on Neural Information Processing Systems. 2012, 1106–1114

Simonyan K, Zisserman A. Very deep convolutional networks for large-scale image recognition. In: Proceedings of the 3rd International Conference on Learning Representations. 2015

Szegedy C, Liu W, Jia Y, Sermanet P, Reed S, Anguelov D, Erhan D, Vanhoucke V, Rabinovich A. Going deeper with convolutions. In: Proceedings of IEEE Conference on Computer Vision and Pattern Recognition. 2015, 1–9

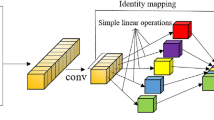

He K, Zhang X, Ren S, Sun J. Deep residual learning for image recognition. In: Proceedings of IEEE Conference on Computer Vision and Pattern Recognition. 2016, 770–778

Lake B M, Salakhutdinov R, Tenenbaum J B. Human-level concept learning through probabilistic program induction. Science, 2015, 350(6266): 1332–1338

Fei-Fei L, Fergus R, Perona P. One-shot learning of object categories. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2006, 28(4): 594–611

Vinyals O, Blundell C, Lillicrap T, Kavukcuoglu K, Wierstra D. Matching networks for one shot learning. In: Proceedings of the 30th International Conference on Neural Information Processing Systems. 2016, 3637–3645

Tian Y, Wang Y, Krishnan D, Tenenbaum J B, Isola P. Rethinking few-shot image classification: a good embedding is all you need? In: Proceedings of the 16th European Conference on Computer Vision. 2020, 266–282

Goldblum M, Reich S, Fowl L, Ni R, Cherepanova V, Goldstein T. Unraveling meta-learning: understanding feature representations for few-shot tasks. In: Proceedings of the 37th International Conference on Machine Learning. 2020, 3607–3616

Bendre N, Marín H T, Najafirad P. Learning from few samples: a survey. 2020, arXiv preprint arXiv: 2007.15484

Bengio Y, Bengio S, Cloutier J. Learning a synaptic learning rule. In: Proceedings of IJCNN-91-Seattle International Joint Conference on Neural Networks. 1991, 969

Schmidhuber J. Evolutionary principles in self-referential learning. On learning now to learn: the meta-meta-meta…-hook. Technische Universitat Munchen, Dissertation, 1987

Chen W Y, Liu Y C, Kira Z, Wang Y C F, Huang J B. A closer look at few-shot classification. In: Proceedings of the 7th International Conference on Learning Representations. 2019

Bronskill J, Gordon J, Requeima J, Nowozin S, Turner R E. TASKNORM: rethinking batch normalization for meta-learning. In: Proceedings of the 37th International Conference on Machine Learning. 2020, 1153–1164

Ba J L, Kiros J R, Hinton G E. Layer normalization. 2016, arXiv preprint arXiv: 1607.06450

Ulyanov D, Vedaldi A, Lempitsky V. Instance normalization: the missing ingredient for fast stylization. 2016, arXiv preprint arXiv: 1607.08022

Ioffe S, Szegedy C. Batch normalization: accelerating deep network training by reducing internal covariate shift. In: Proceedings of the 32nd International Conference on Machine Learning. 2015, 448–456

Santoro A, Bartunov S, Botvinick M, Wierstra D, Lillicrap T. One-shot learning with memory-augmented neural networks. 2016, arXiv preprint arXiv: 1605.06065

Graves A, Wayne G, Danihelka I. Neural turing machines. 2014, arXiv preprint arXiv: 1410.5401

Mishra N, Rohaninejad M, Chen X, Abbeel P. A simple neural attentive meta-learner. In: Proceedings of the 6th International Conference on Learning Representations. 2018

Finn C, Abbeel P, Levine S. Model-agnostic meta-learning for fast adaptation of deep networks. In: Proceedings of the 34th International Conference on Machine Learning. 2017, 1126–1135

Li Z G, Zhou F W, Chen F, Li H. Meta-SGD: learning to learn quickly for few-shot learning. 2017, arXiv preprint arXiv: 1707.09835

Nichol A, Achiam J, Schulman J. On first-order meta-learning algorithms. 2018, arXiv preprint arXiv: 1803.02999

Jamal M A, Qi G J. Task agnostic meta-learning for few-shot learning. In: Proceedings of IEEE/CVF Conference on Computer Vision and Pattern Recognition. 2019, 11711–11719

Snell J, Swersky K, Zemel R. Prototypical networks for few-shot learning. In: Proceedings of the 31st International Conference on Neural Information Processing Systems. 2017, 4080–4090

Gidaris S, Komodakis N. Dynamic few-shot visual learning without forgetting. In: Proceedings of IEEE/CVF Conference on Computer Vision and Pattern Recognition. 2018, 4367–4375

Dhillon G S, Chaudhari P, Ravichandran A, Soatto S. A baseline for few-shot image classification. In: Proceedings of the 8th International Conference on Learning Representations. 2020

Wang Y, Chao W L, Weinberger K Q, van der Maaten L. SimpleShot: revisiting nearest-neighbor classification for few-shot learning. 2019, arXiv preprint arXiv: 1911.04623

de Vries H, Strub F, Mary J, Larochelle H, Pietquin O, Courville A. Modulating early visual processing by language. In: Proceedings of the 31st International Conference on Neural Information Processing Systems. 2017, 6597–6607

Dumoulin V, Shlens J, Kudlur M. A learned representation for artistic style. In: Proceedings of the 5th International Conference on Learning Representations. 2017

Huang X, Belongie S. Arbitrary style transfer in real-time with adaptive instance normalization. In: Proceedings of IEEE International Conference on Computer Vision. 2017, 1510–1519

Park T, Liu M Y, Wang T C, Zhu J Y. Semantic image synthesis with spatially-adaptive normalization. In: Proceedings of IEEE/CVF Conference on Computer Vision and Pattern Recognition. 2019, 2332–2341

Oreshkin B N, Rodriguez P, Lacoste A. TADAM: task dependent adaptive metric for improved few-shot learning. In: Proceedings of the 32nd International Conference on Neural Information Processing Systems. 2018, 719–729

Perez E, Strub F, de Vries H, Dumoulin V, Courville A. FiLM: visual reasoning with a general conditioning layer. In: Proceedings of the 32nd AAAI Conference on Artificial Intelligence. 2018, 3942–3951

Tseng H Y, Lee H Y, Huang J B, Yang M H. Cross-domain few-shot classification via learned feature-wise transformation. In: Proceedings of International Conference on Learning Representations. 2020

Guo Y, Codella N C, Karlinsky L, Codella J V, Smith J R, Saenko K, Rosing T, Feris R. A broader study of cross-domain few-shot learning. In: Proceedings of the 16th European Conference on Computer Vision. 2020, 124–141

Requeima J, Gordon J, Bronskill J, Nowozin S, Turner R E. Fast and flexible multi-task classification using conditional neural adaptive processes. In: Proceedings of the 33rd International Conference on Neural Information Processing Systems. 2019, 7957–7968

Howard A G, Zhu M L, Chen B, Kalenichenko D, Wang W J, Weyand T, Andreetto M, Adam H. MobileNets: efficient convolutional neural networks for mobile vision applications. 2016, arXiv preprint arXiv: 1704.04861

Wah C, Branson S, Welinder P, Perona P, Belongie S. The Caltech-UCSD birds-200–2011 dataset. Technical Report CNS-TR-2011–001. Pasadena, CA, USA: California Institute of Technology, 2011

Russakovsky O, Deng J, Su H, Krause J, Satheesh S, Ma S, Huang Z, Karpathy A, Khosla A, Bernstein M, Berg A C, Fei-Fei L. ImageNet large scale visual recognition challenge. International Journal of Computer Vision, 2015, 115(3): 211–252

Ravi S, Larochelle H. Optimization as a model for few-shot learning. In: Proceedings of the 5th International Conference on Learning Representations. 2017

Krizhevsky A, Hinton G. Learning multiple layers of features from tiny images. University of Toronto, Dissertation, 2009

Hilliard N, Phillips L, Howland S, Yankov A, Corley C D, Hodas N O. Few-shot learning with metric-agnostic conditional embeddings. 2018, arXiv preprint arXiv: 1802.04376

Satorras V G, Estrach J B. Few-shot learning with graph neural networks. In: Proceedings of the 6th International Conference on Learning Representations. 2018

Sung F, Yang Y, Zhang L, Xiang T, Torr P H S, Hospedales T M. Learning to compare: relation network for few-shot learning. In: Proceedings of IEEE/CVF Conference on Computer Vision and Pattern Recognition. 2018, 1199–1208

Qiao S, Liu C, Shen W, Yuille A. Few-shot image recognition by predicting parameters from activations. In: Proceedings of IEEE/CVF Conference on Computer Vision and Pattern Recognition. 2018, 7229–7238

Ye H J, Hu H, Zhan D C, Sha F. Few-shot learning via embedding adaptation with set-to-set functions. 2018, arXiv preprint arXiv: 1812.03664

Jiang X, Havaei M, Varno F, Chartrand G, Chapados N, Matwin S. Learning to learn with conditional class dependencies. In: Proceedings of the 7th International Conference on Learning Representations. 2019

Xing C, Rostamzadeh N, Oreshkin B N, Pinheiro P O. Adaptive cross-modal few-shot learning. In: Proceedings of International Conference on Neural Information Processing Systems. 2019, 4848–4858

Zhang C, Cai Y, Lin G, Shen C. DeepEMD: few-shot image classification with differentiable earth mover’s distance and structured classifiers. In: Proceedings of IEEE/CVF Conference on Computer Vision and Pattern Recognition. 2020, 12200–12210

Rusu A A, Rao D, Sygnowski J, Vinyals O, Pascanu R, Osindero S, Hadsell R. Meta-learning with latent embedding optimization. In: Proceedings of the 7th International Conference on Learning Representations. 2019

Rodríguez P, Laradji I, Drouin A, Lacoste A. Embedding propagation: smoother manifold for few-shot classification. In: Proceedings of the 16th European Conference on Computer Vision. 2020, 121–138

Hu S X, Moreno P G, Xiao Y, Shen X, Obozinski G, Lawrence N D, Damianou A C. Empirical Bayes transductive meta-learning with synthetic gradients. In: Proceedings of the 8th International Conference on Learning Representations. 2020

Davies D L, Bouldin D W. A cluster separation measure. IEEE Transactions on Pattern Analysis and Machine Intelligence, 1979, 1(2): 224–227

Acknowledgements

This work was supported by the National Natural Science Foundation of China (Grant Nos. 61673396, U19A2073, 61976245).

Author information

Wei Gao received his bachelor’s degree from the College of Computer Science and Technology, China University of Petroleum, China in 2019 and is currently an MS student at China University of Petroleum, China, under the supervision of Prof. Shao. His current research interests include few-shot learning and meta-learning.

Mingwen Shao received his MS degree in mathematics from Guangxi University, China in 2002, and his PhD degree in applied mathematics from Xi’an Jiaotong University, China in 2005. He completed postdoctoral research in control science and engineering at Tsinghua University, China in 2008. He is now a professor and doctoral supervisor at China University of Petroleum, China. His research interests include data mining, machine learning, and generative adversarial learning.

Jun Shu is currently a PhD candidate at Xi’an Jiaotong University, China, under the supervision of Prof. Deyu Meng and Prof. Zongben Xu. His current research interests include machine learning and computer vision, especially small-sample learning, learning to learn, and meta-learning.

Xinkai Zhuang is currently a master’s student at China University of Petroleum, China, under the supervision of Prof. Mingwen Shao. His current research interests include machine learning and computer vision, especially few-shot learning, transfer learning, and meta-learning.

Cite this article

Gao, W., Shao, M., Shu, J. et al. Meta-BN Net for few-shot learning. Front. Comput. Sci. 17, 171302 (2023). https://doi.org/10.1007/s11704-021-1237-4

DOI: https://doi.org/10.1007/s11704-021-1237-4